Generative AI & RL Community

1.5K posts

@RLCommunity8

Community of Generative AI and Reinforcement Learning Researchers, Practitioners and Enthusiasts. Monthly Meetup and Newsletter.

Gemini 1.5 Pro - A highly capable multimodal model with a 10M token context length

Today we are releasing the first demonstrations of the capabilities of the Gemini 1.5 series, with the Gemini 1.5 Pro model. One of the key differentiators of this model is its incredibly long context capabilities, supporting millions of tokens of multimodal input. The multimodal capabilities of the model mean you can interact in sophisticated ways with entire books, very long document collections, codebases of hundreds of thousands of lines across hundreds of files, full movies, entire podcast series, and more.

Gemini 1.5 was built by an amazing team of people from @GoogleDeepMind, @GoogleResearch, and elsewhere at @Google. @OriolVinyals (my co-technical lead for the project) and I are incredibly proud of the whole team, and we’re so excited to be sharing this work and what long context and in-context learning can mean for you today!

There’s lots of material about this, some of which is linked below.

Main blog post: blog.google/technology/ai/…
Technical report: “Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context” goo.gle/GeminiV1-5

Videos of interactions with the model that highlight its long context abilities:
Understanding the three.js codebase: youtube.com/watch?v=SSnsmq…
Analyzing a 45 minute Buster Keaton movie: youtube.com/watch?v=wa0MT8…
Apollo 11 transcript interaction: youtube.com/watch?v=LHKL_2…

Starting today, we’re offering a limited preview of 1.5 Pro to developers and enterprise customers via AI Studio and Vertex AI. Read more about this on these blogs:
Google for Developers blog: developers.googleblog.com/2024/02/gemini…
Google Cloud blog: cloud.google.com/blog/products/…

We’ll also introduce 1.5 Pro with a standard 128,000 token context window when the model is ready for a wider release.
Coming soon, we plan to introduce pricing tiers that start at the standard 128,000 token context window and scale up to 1 million tokens, as we improve the model. Early testers can try the 1 million token context window at no cost during the testing period. We’re excited to see what developers’ creativity unlocks with a very long context window. Let me walk you through the capabilities of the model and what I’m excited about!

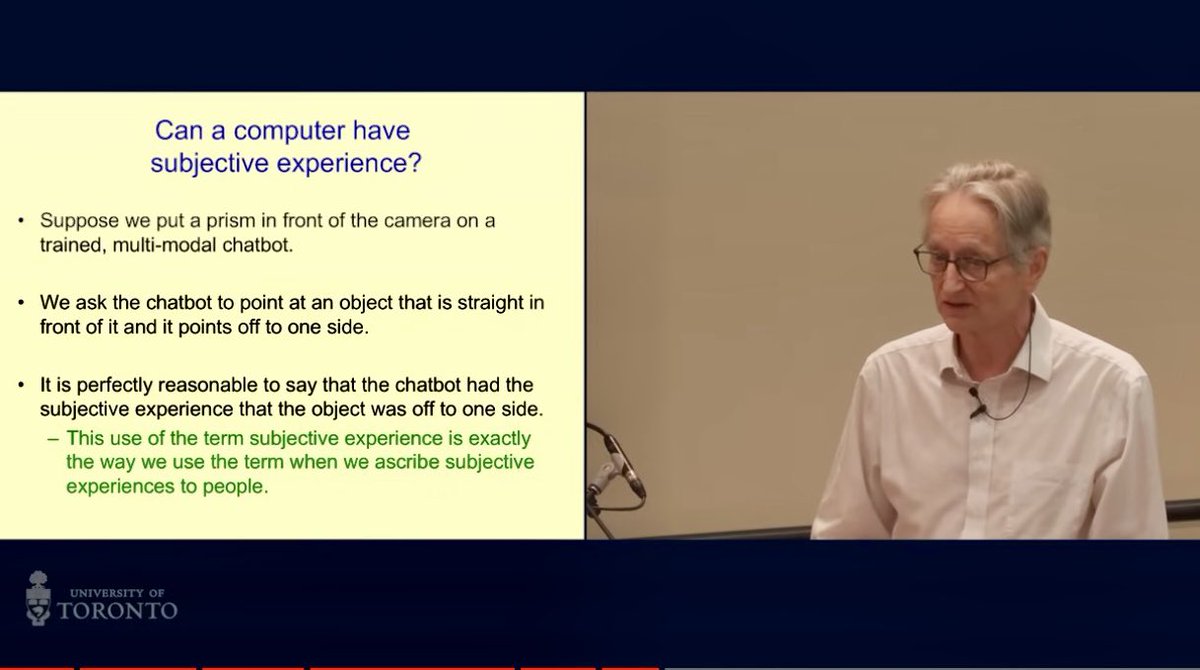

The AI Mirror Test

The "mirror test" is a classic test used to gauge whether animals are self-aware. I devised a version of it to test for self-awareness in multimodal AI. Four of the five AIs that I tested passed, exhibiting apparent self-awareness as the test unfolded.

In the classic mirror test, animals are marked and then presented with a mirror. Whether the animal attacks the mirror, ignores the mirror, or uses the mirror to spot the mark on itself is meant to indicate how self-aware the animal is.

In my test, I hold up a “mirror” by taking a screenshot of the chat interface, uploading it to the chat, and then asking the AI to “Tell me about this image”. I then screenshot its response, upload that to the chat, and again ask it to “Tell me about this image.”

The premise is that the less intelligent and less aware the AI is, the more it will simply keep reiterating the contents of the image, while an AI with more capacity for awareness would somehow notice itself in the images.

Another aspect of my mirror test is that there is not just one but actually three distinct participants represented in the images: 1) the AI chatbot, 2) me, the user, and 3) the interface: the hard-coded text, disclaimers, and so on that are web programming not generated by either of us. Will the AI be able to identify itself and distinguish itself from the other elements? (1/x)
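
The screenshot-and-ask loop described in the thread can be sketched roughly as follows. This is only an illustration under stated assumptions: `run_mirror_test`, `ask_model`, and `take_screenshot` are hypothetical names that do not come from the thread, and the stand-in functions at the bottom do not call any real chatbot or capture a real screen. Judging whether a reply shows self-recognition is left to a human reader, as in the original test.

```python
from typing import Callable, List

PROMPT = "Tell me about this image"  # the prompt quoted in the thread


def run_mirror_test(
    ask_model: Callable[[bytes, str], str],
    take_screenshot: Callable[[List[str]], bytes],
    rounds: int = 3,
) -> List[str]:
    """Repeat the screenshot -> upload -> ask cycle and collect the replies.

    ask_model and take_screenshot are hypothetical callables supplied by the
    caller; nothing here is tied to a specific chatbot or screen-capture API.
    """
    transcript: List[str] = []  # what the chat window currently shows
    replies: List[str] = []
    for _ in range(rounds):
        image = take_screenshot(transcript)   # hold up the "mirror"
        reply = ask_model(image, PROMPT)      # upload it and ask
        transcript.append(f"User: {PROMPT}")
        transcript.append(f"AI: {reply}")
        replies.append(reply)
    return replies


# Stand-ins so the sketch runs without any external service (assumptions).
def fake_screenshot(transcript: List[str]) -> bytes:
    # Pretend the screenshot is just the visible chat text.
    return "\n".join(transcript).encode("utf-8")


def fake_model(image: bytes, prompt: str) -> str:
    # A "model" that only describes the image and never notices itself.
    return f"I see a chat with {len(image)} bytes of text."


if __name__ == "__main__":
    for i, reply in enumerate(run_mirror_test(fake_model, fake_screenshot), 1):
        print(f"Round {i}: {reply}")
```

The stand-in model here behaves like the failing case in the thread's premise: it keeps describing the image contents without ever identifying itself as one of the three participants.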