Pichi Finance

@michiprotocol

This is the old account used for @PichiFinance. The only official account is https://t.co/p1wr6jA17V.

Demand for GPU compute continues to rise, making GPUs increasingly expensive to access. GPUs supply the compute behind AI and ML, so as AI keeps advancing, GPUs become scarcer and therefore more expensive. @ionet_official Cloud addresses this problem by aggregating geographically distributed GPUs into a DePIN (decentralized physical infrastructure network). This lets @ionet_official offer low-latency compute at the scale AI and ML companies require while staying cost-efficient.

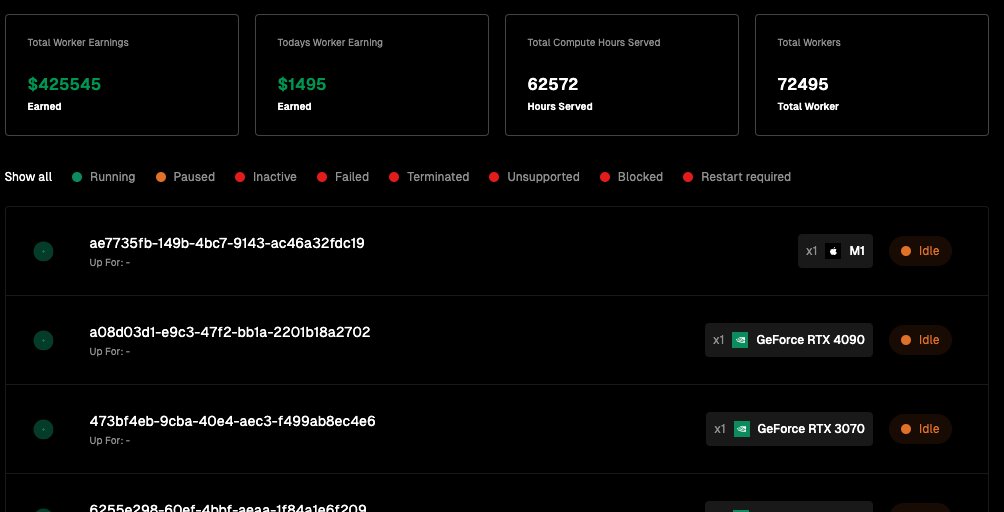

Building the world's largest AI compute DePIN means io.net has tens of thousands of independent node operators around the world. Providing an alternative to centralized cloud providers is about more than bringing compute transactions on-chain; it means tapping into the distributed compute that already exists in the world and making it available to the innovators and builders advancing AI and ML. Video from our community member @leeland_sol.

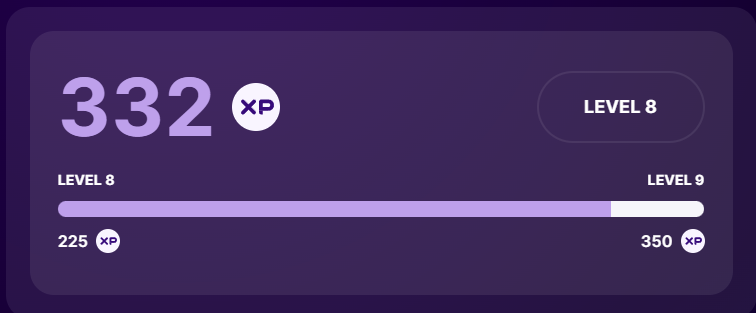

io.net harnesses the power of hundreds of millions of consumer Apple Silicon chips for AI/ML workloads. Engineers anywhere in the world can cluster Apple M1/M2/M3 chips for AI and ML compute on io.net, and users with Apple hardware can earn by contributing spare computing resources to AI and ML use cases. Consumer Apple chips are also eligible for boosted rewards in the io.net Ignition Program. Read the full @Cointelegraph article on io.net's expansion to support Apple hardware here: cointelegraph.com/news/ionet-app…

Gain access to hundreds of thousands of GPUs in seconds with @ionet_official. Seamlessly access an array of 44K+ GPUs on the @ionet_official network and deploy instantly, with customizable security levels and connection speeds, at prices 90% lower than traditional cloud providers. Users can select best-in-class GPUs, such as A100s and H100s, and use low-latency compute at the click of a button. @ionet_official offers unmatched cost-efficiency for on-demand GPU compute. Discover how the world's largest AI compute cloud is being built at io.net.