Video & Image Sense Lab (VIS Lab)

54 posts

@VISLab_UvA

Computer Vision research group at @UvA_Amsterdam directed by Cees Snoek (@cgmsnoek)

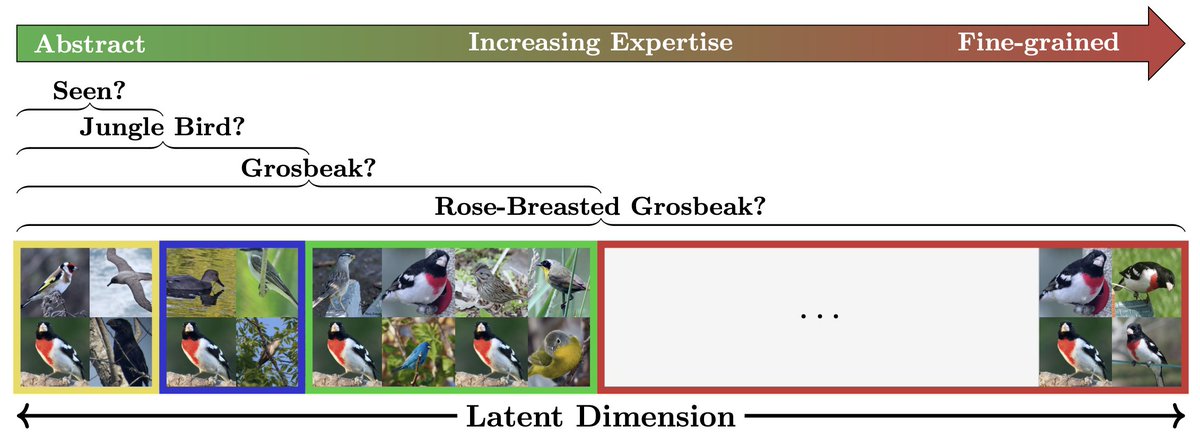

(1/6)🥳 Excited to share my latest research done as part of my MSc AI thesis! We introduced Hyperbolic Compositional CLIP (HyCoCLIP)—a novel framework that leverages the hierarchical nature of hyperbolic space for learning vision-language representations using scene compositions.

Today, we're introducing TVBench! 📹💬 Video-language evaluation is crucial, but are we doing it right? We find that current benchmarks fall short in testing temporal understanding. 🧵👇

Hello world! fundamentalailab.github.io

🌀 Equivariant Neural Fields (ENFs) for continuous PDE solving! We use ENFs as a representation for solving PDEs in continuous space/time on different geometries while respecting their symmetries (such as this internally heated ball of fluid)! More details 👇

Interested in learning about the future of self-supervised learning? Don’t miss our workshop this Sunday at @eccvconf with an incredible lineup of speakers! 🔥 @imisra_ @oriane_simeoni @endernewton @olivierhenaff @y_m_asano @YutongBAI1002 More details at sslwin.org

📢SIGMA: Sinkhorn-Guided Masked Video Modeling got accepted to @eccvconf #ECCV2024 TL;DR: Instead of using pixel targets in Video Masked Modeling, we reconstruct jointly trained features using Sinkhorn guidance, achieving SOTA. 📝Project page: quva-lab.github.io/SIGMA/ 🌐Paper: arxiv.org/abs/2407.15447 Joint work with @MrzSalehi @fmthoker @egavves @cgmsnoek @y_m_asano

I’m hiring a postdoc to work with me on exciting projects in generative modelling (AI) and/or uncertainty quantification. You'll be part of a great team, embedded in @AmlabUva and the UvA-Bosch Delta Lab. Apply here: vacatures.uva.nl/UvA/job/Postdo… RT appreciated! #ML #GenAI

Incredibly excited to announce the 1st edition of our tutorial at @eccvconf w/ the amazing @y_m_asano and @MrzSalehi! "Time is precious: Self-Supervised Learning Beyond Images" on 30th Sept. from 09:00 to 13:00 at Amber 7 + 8. Catch the details here ⬇️ shashankvkt.github.io/eccv2024-SSLBI…
