G. @ The Neuron

@TheNeuronScribe

I am dumb but I am learning

Excited to share our work, InfiniDepth (CVPR 2026), which casts monocular depth estimation as a neural implicit field and enables:
🔍 Arbitrary-Resolution
📐 Accurate Metric Depth
📷 Large-View Novel View Synthesis
Feel free to try our code: github.com/zju3dv/InfiniD…

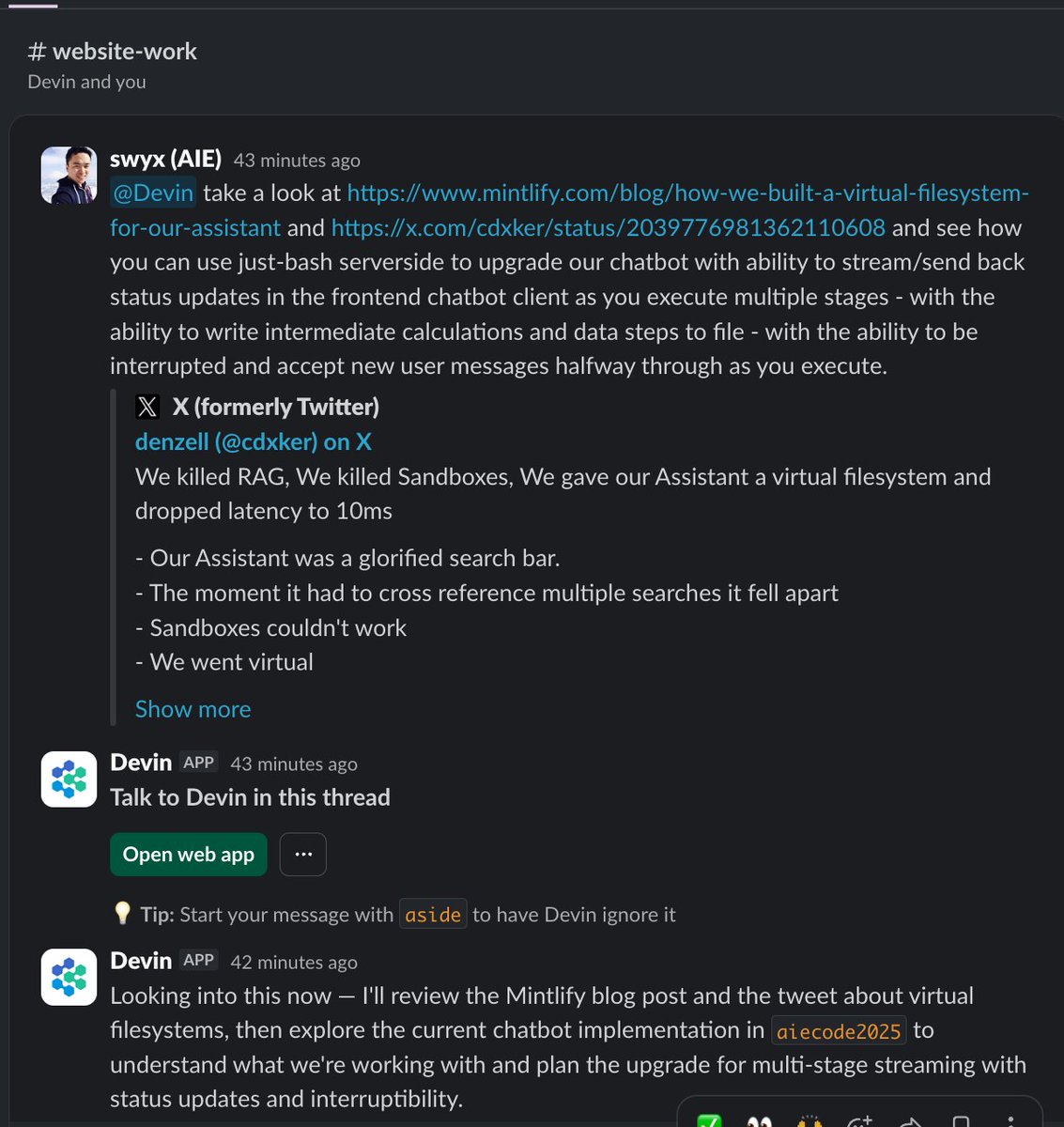

Mintlify assistant is powered by just-bash with a custom filesystem

This is the historic “We Have No Moat” Google AI memo of 2023. This is The Great Unwinding of Silicon Valley. I urge everyone to read: readmultiplex.com/2023/03/11/the… What comes next does not even resemble the past. Join us and know. THERE ARE NO MORE MOATS.

Trtllmgen kernels are now open. Fastest prefill and decode kernels for our target workloads. We wrote these to win InferenceX, MLPerf, other benchmarks. Powering some of today’s top served models. Dive in, learn, use them, or level up your own. Enjoy. github.com/flashinfer-ai/…

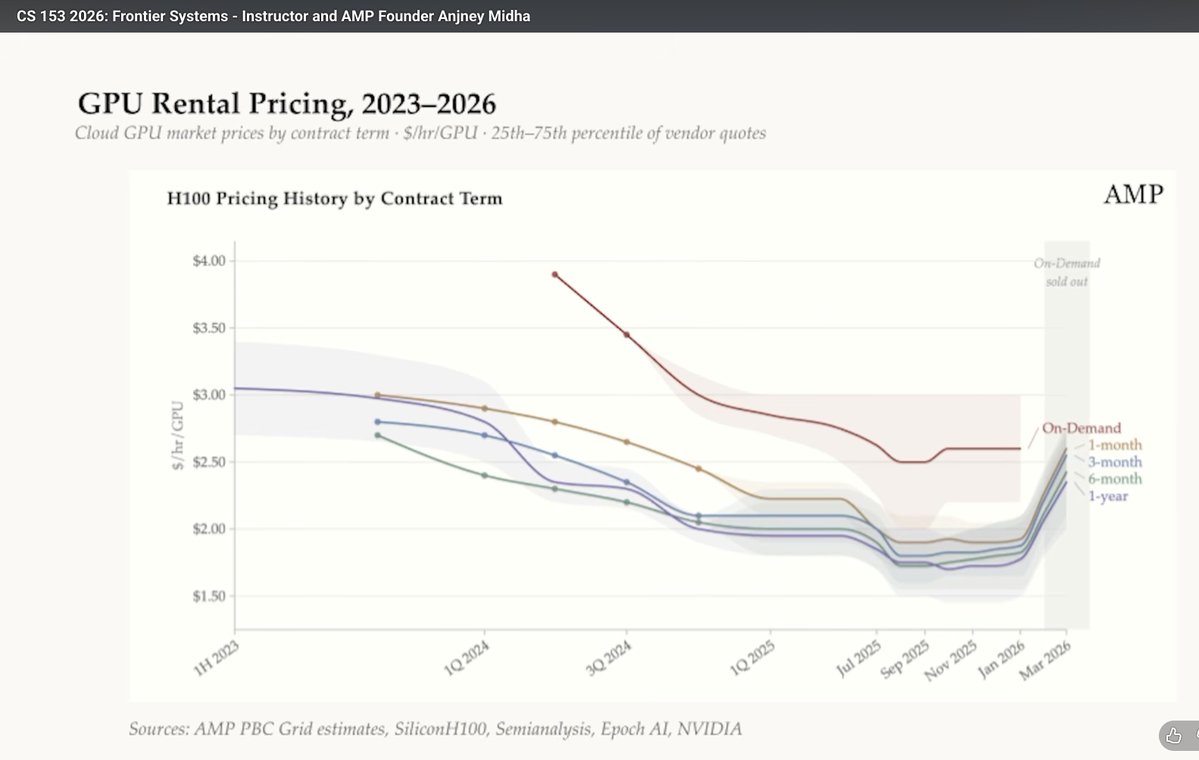

Stanford @CS153Systems, Week 1 (Full Lecture)
AI Scaling, Bottlenecks, and Why Compute Isn't a Commodity Yet
00:00 Compute Coachella
00:29 Simple Life Heuristic
01:08 Uncertainty Creates Opportunity
01:42 Four Bottlenecks Framework
01:51 Empirical Proof Matters
02:05 Cloud Costs Are Shifting
02:15 Verifiable vs Fuzzy Progress
02:48 Scaling Predictability Explained
03:43 CapEx Explosion in Big Tech
04:06 Chips Aren’t Commodities
04:45 Compute Scarcity Conclusion