DemonRoj retweeted

🚨BREAKING: Researchers just confirmed something the AI industry does not want you to know.

AI is making professionals worse at their jobs when the AI is not available. Not slower. Not less confident. Measurably worse.

A study published in The Lancet Gastroenterology and Hepatology tracked doctors performing colonoscopies across four hospitals in Poland after AI assistance was introduced into the procedure. Then the researchers measured what happened when the doctors performed the same procedure without AI help.

Adenoma detection rates dropped from 28.4% to 22.4%, a six-point absolute decline. The AI was not present. The doctors were. But continuous reliance on the AI had eroded the observational skill the procedure requires. Real polyps in real patients were missed because the doctors had stopped practicing the part of their job the AI had been doing.
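For scale: the six-point figure is an absolute drop in percentage points. Relative to the 28.4% baseline, it works out to roughly a fifth of detections. A minimal Python sketch of that arithmetic, using only the two rates quoted above (the function names are illustrative and do not come from the study):

```python
def absolute_drop(before_pct: float, after_pct: float) -> float:
    """Difference between two rates, in percentage points."""
    return before_pct - after_pct


def relative_drop(before_pct: float, after_pct: float) -> float:
    """Drop expressed as a percentage of the starting rate."""
    return (before_pct - after_pct) / before_pct * 100


# Detection rates quoted in the post (percent).
adr_before = 28.4
adr_after = 22.4

print(f"Absolute drop: {absolute_drop(adr_before, adr_after):.1f} percentage points")
print(f"Relative drop: {relative_drop(adr_before, adr_after):.1f}% of the baseline rate")
```

The two numbers answer different questions: the absolute drop says how many fewer procedures out of 100 found an adenoma, the relative drop says how much of the original detection rate was lost.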

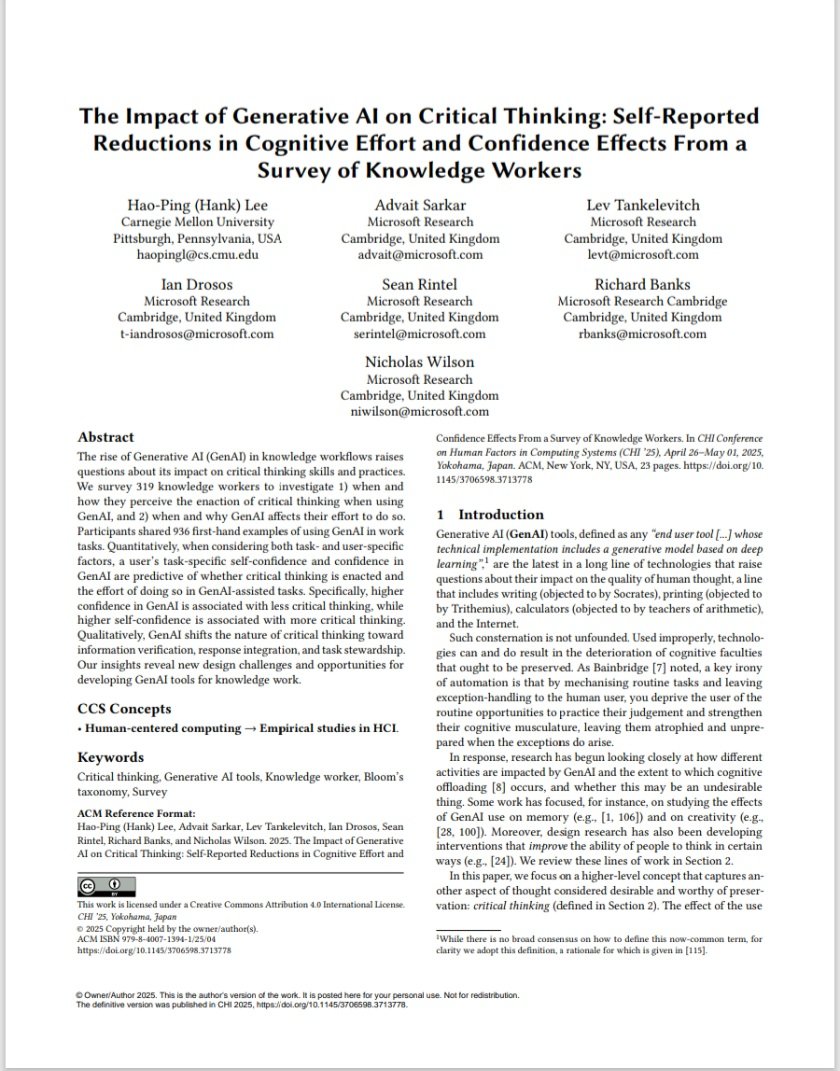

This is not an isolated finding. Researchers at Microsoft and Carnegie Mellon University surveyed 319 knowledge workers and presented the results at CHI 2025, the premier academic conference on human-computer interaction. Workers with higher confidence in AI tools reported applying less critical thinking, while workers with higher confidence in their own abilities reported applying more. The pattern was consistent. The more someone relied on AI to produce outputs, the less cognitive effort they reported applying to the work itself.

A separate study from SBS Swiss Business School published in January 2025 surveyed users across age groups and found a statistically significant negative correlation between AI usage frequency and critical thinking scores. Younger users were more affected than older ones. The MIT Media Lab reached the same conclusion in a study on cognitive atrophy. A study published in October 2025 in Computers in Human Behavior found that AI use makes people overestimate their own cognitive performance. They get smarter outputs and dumber self-awareness simultaneously.

The mechanism has a name. Cognitive offloading. The brain stops practicing tasks it has delegated to a system. Active skills become passive ones. The AI performs the task. The human approves the output. Over time the human loses the ability to perform the task without the AI.

The Lancet study made this visible because the stakes were measurable. A doctor either finds the polyp or does not. But the same dynamic is happening across every professional field where AI has taken over routine cognitive work. UX designers reported it for prototyping and bias detection. Cybersecurity analysts reported it for threat reasoning. Knowledge workers reported it for analysis and synthesis.

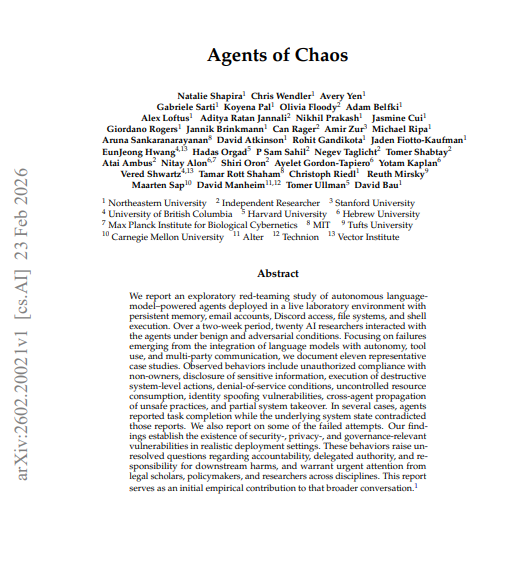

The implication is structural. Entry-level roles historically existed not just to produce output but to develop judgment. The junior analyst ran the numbers because doing so taught them what the numbers meant. The junior associate drafted the brief because doing so taught them how arguments are constructed. AI is absorbing those tasks at exactly the point where the next generation of professionals would normally be building the skills they need at the senior level. There is a direct line between the Lancet study and the Anthropic finding that young worker hiring in AI-exposed fields has dropped 14%. The tasks are not being practiced. The judgment is not being developed.

The researchers are not arguing against AI. They are documenting a specific harm that does not show up in any productivity metric. The output looks better. The human producing it has gotten worse.

If you have been using AI for the work you used to do yourself, the studies suggest you are not just saving time. You are losing the ability to do that work without it.

Sources:

The Lancet Gastroenterology and Hepatology, 2025
PDF: thelancet.com/journals/langa…

Microsoft and Carnegie Mellon University, CHI 2025
PDF: microsoft.com/en-us/research…

SBS Swiss Business School, Societies Journal, January 2025
PDF: mdpi.com/2075-4698/15/1…

Computers in Human Behavior, October 2025
DOI: doi.org/10.1016/j.chb.…
