One of the most shared agentic workflows this year is the Night Shift pattern. Jamon Holmgren wrote it up in March and it's been circulating ever since. The idea is: you spend the day writing specs and thinking through architecture, then you kick off your coding agent before you close the laptop. It works overnight. You review commits over morning coffee.

Mitchell Hashimoto shared something similar. In the last 30 minutes of his day, he spins up agents for research, issue triage, and parallel experiments. They generate reports that he reads the next morning. He calls it a "warm start" instead of a cold one.

Both workflows are genuinely good. If you haven't tried some version of this, you probably should. The individual productivity gains are real.

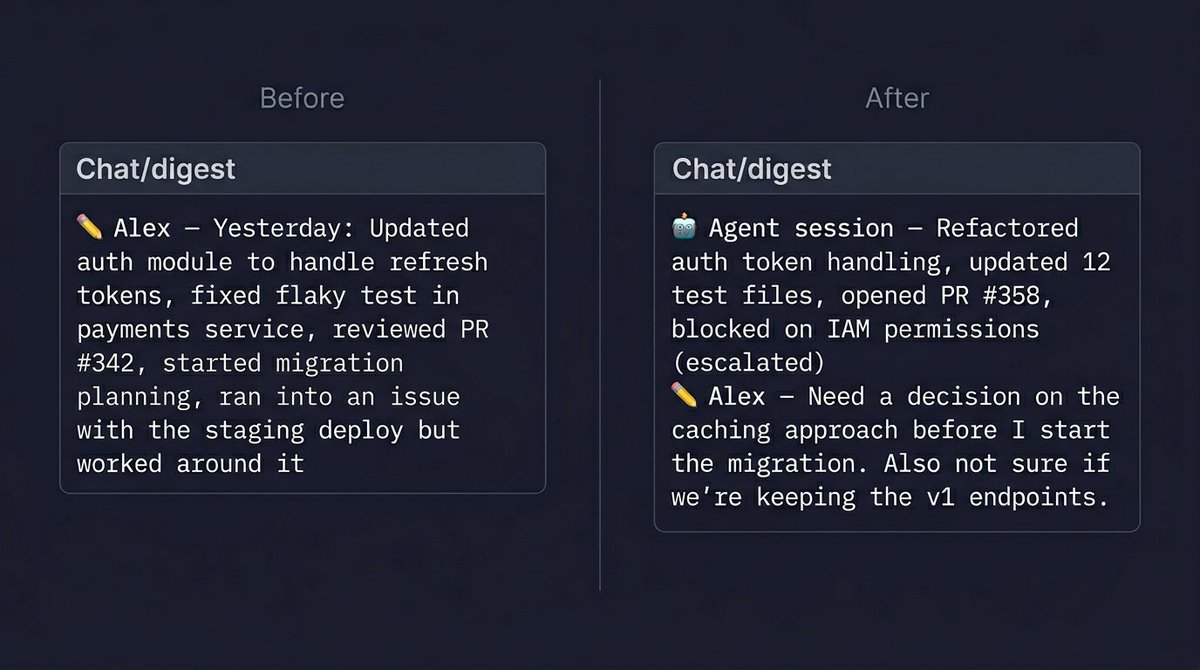

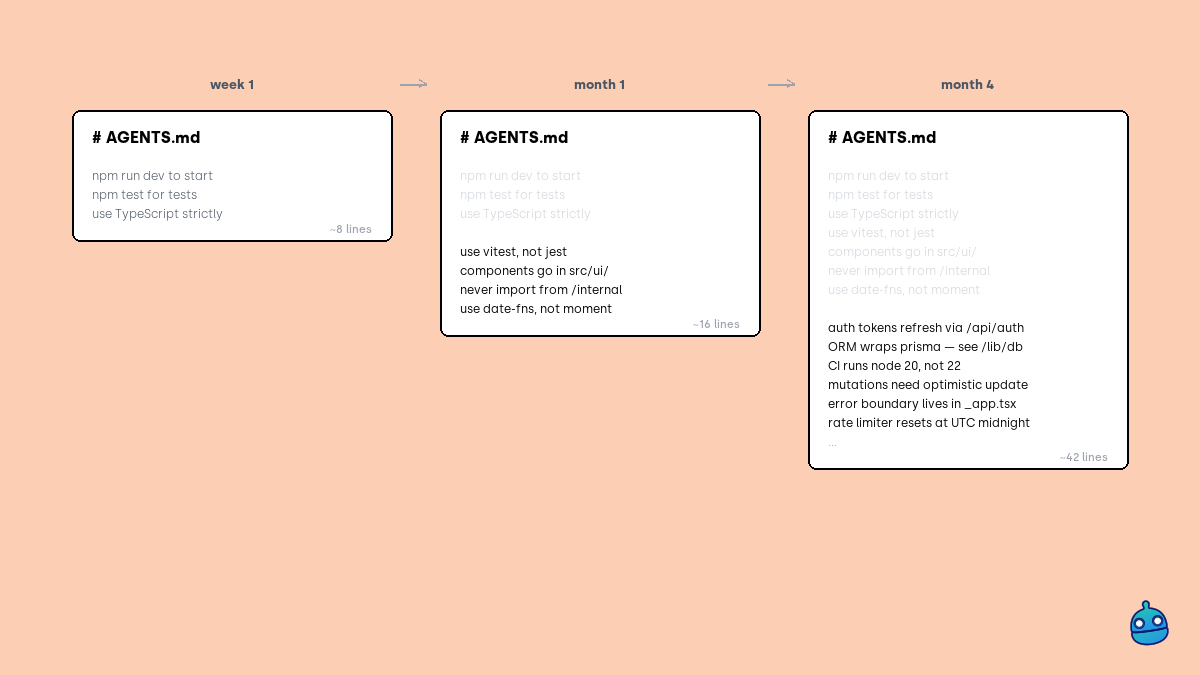

But here's the part that keeps bugging me. These are all single-player workflows. When three developers on the same team each kick off a night shift, who knows what happened by morning? The lead reviews their own diffs. The other two review theirs. Nobody has a combined picture of what all three agents, and the three humans directing them, actually shipped overnight.

We solved the "write code faster" part pretty convincingly this year. The "tell the team what happened" part is mostly unsolved, and it's the part that matters for everyone who isn't the person who kicked off the agent.
