Chuning Li retweeted

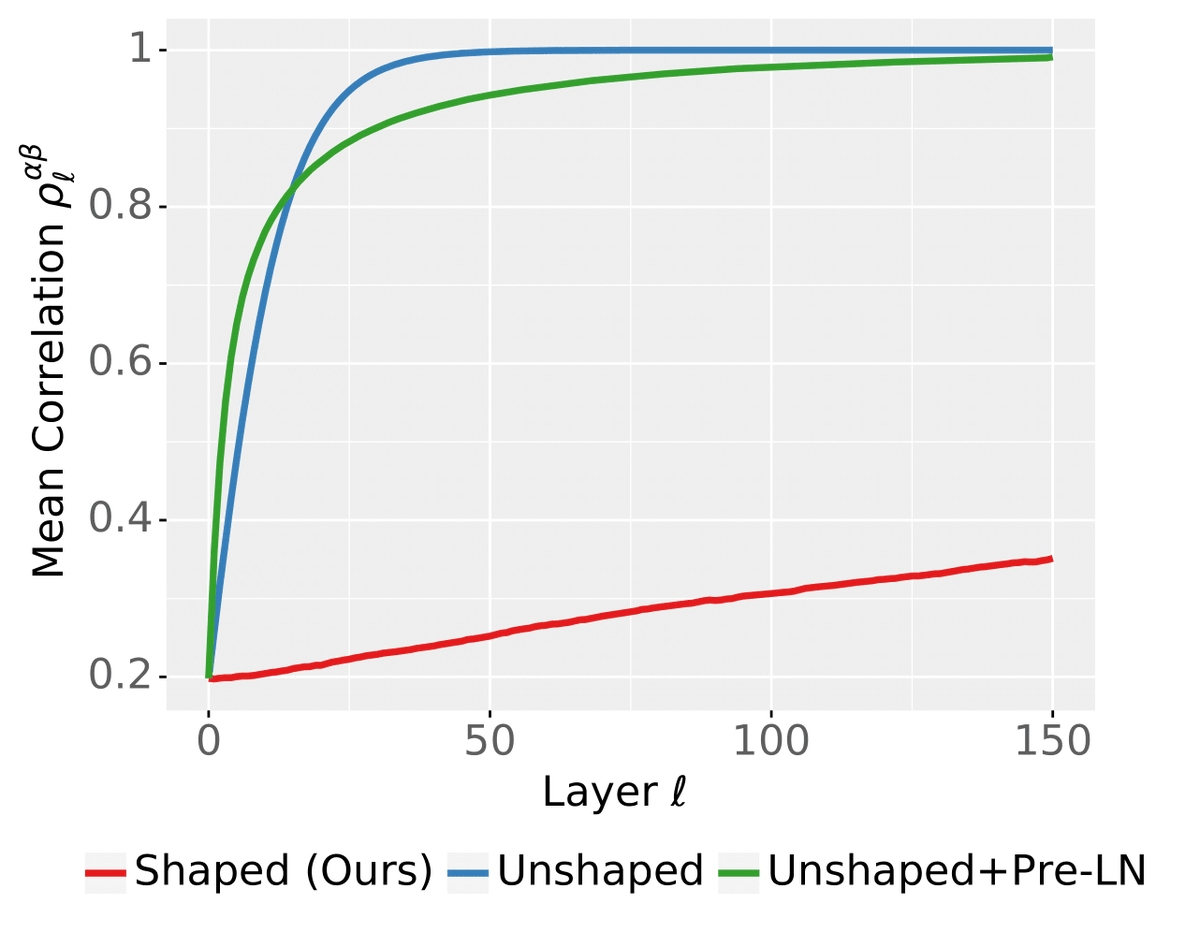

How do you scale Transformers to infinite depth while ensuring numerical stability? In fact, LayerNorm is not enough.

But *shaping* the attention mechanism works!

arxiv.org/abs/2306.17759

w/ @ChuningLi @mufan_li @bobby_he @THofmann2017 @cjmaddison @roydanroy
