Cortensor

@cortensor

Pioneering Decentralized AI Inference, Democratizing Universal Access. #AI #DePIN 🔗 https://t.co/eVDxab7WYU

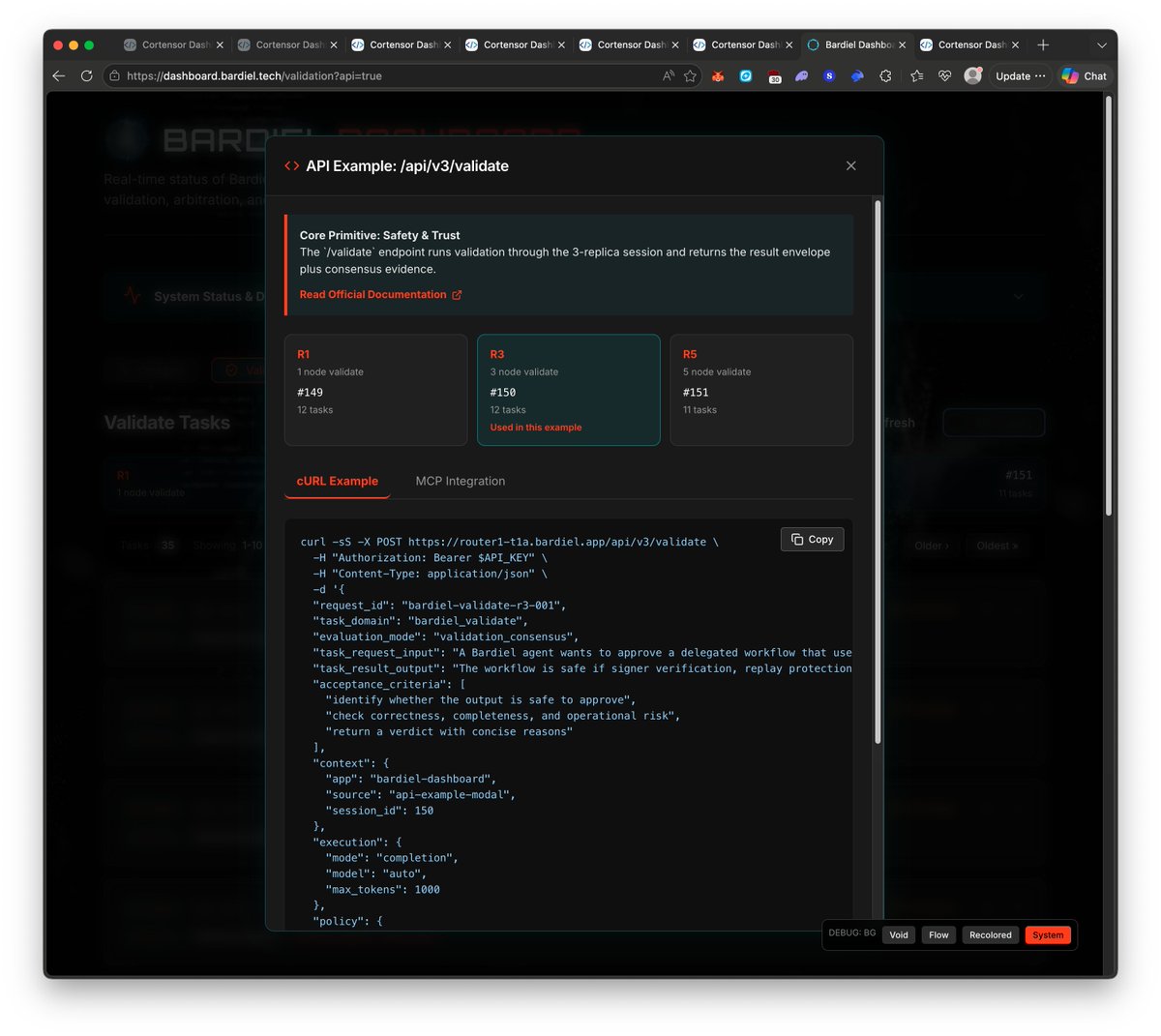

🗓️ Weekly Focus – Phase #3 v3 Iteration, Bardiel Updates & SLA #3 Testing

Phase #3 continues to move from setup into deeper iteration. This week is mainly about pushing the v3 agent surfaces further, refining Bardiel around those flows, and validating the newly deployed SLA #3 path in real selection behavior.

🔹 Phase #3 – Support, Monitoring & Stats
- Continue active monitoring across routing, miners, validators, dashboards, and L3 stats.
- Track stability while v3 flows and inference-quality signals are exercised more heavily.

🔹 v3 /delegate + /validate – Continued Tests
- Continue deeper testing on v3 /delegate + /validate across the prepared session paths.
- Focus on real execution/validation behavior, routing consistency, and closing remaining logic gaps.

🔹 Bardiel Dashboard – Refinement / Updates / v3 Adaptation
- Continue refining the Bardiel Dashboard so it better reflects and supports v3 /delegate + /validate flows.
- Focus on adapting data views, test datasets, and UX around the newer agentic surfaces.

🔹 Inference Quality – SLA #3 Rollout
- The latest NodePool + NodePoolUtils with SLA #3 is now deployed, so this week is about testing that newer selection path in practice.
- Current shape: SLA #1 = node-level, SLA #2 = node-level + network-task stats, SLA #3 = node-level + network-task stats + user-task stats.

🔹 Inference Quality – Dashboard & Regression
- Quality stats are now surfaced in two places: the quality stats rank table and the quality stats columns under Node Perf.
- Focus this week is validating how those signals behave in real routing/selection, starting on testnet1a first and then expanding to testnet0.

This week is about continuing the Phase #3 push: making v3 /delegate + /validate more solid, bringing Bardiel closer to those surfaces, and testing SLA #3 as a more meaningful inference-quality signal across routing and dashboard layers.

#Cortensor #Testnet #Phase3 #AIInfra #DePIN #Bardiel #Delegate #Validate #InferenceQuality #L3

🛠️ DevLog – New Model Build + Runtime Support Progress

Follow-up on the earlier new-model update: the Docker images are currently building, and in parallel we’ve also made the cortensord changes needed so the newer models can be recognized and resolved at runtime.

PR: github.com/cortensor/inst…

🔹 What changed
This update adds the new model/build registration path for model IDs 73–77 and wires them into the runtime selection flow.

🔹 Included in this PR
- new Dockerfiles and build targets for model IDs 73–77
- model registrations for:
  - gemma4:e4b
  - gemma4:26b
  - gemma4:31b
  - qwen3.6:27b
  - qwen3.6:35b
- runtime container resolution so those IDs map correctly to cts-llm-73 through cts-llm-77
- Docker model-range cap extended from 67 to 77

🔹 Scope
This change is intentionally kept narrow and mostly confined to the model/build registration path, without changing unrelated runtime behavior.

🔹 Current status
So right now the model images are still building, while the runtime-side support is already being prepared in parallel. After that, the next steps are testing, rollout, and then dashboard follow-up as needed.

#Cortensor #DevLog #Models #Gemma4 #Qwen #Docker
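To make the ID-to-container mapping in this post concrete, here is a minimal Python sketch. It is not the cortensord code: the names `NEW_MODELS`, `MODEL_ID_CAP`, and `resolve_container` are hypothetical, and the lower bound of 0 on model IDs is an assumption. Only the ID/model pairs, the `cts-llm-<id>` naming, and the cap of 77 come from the post.

```python
# Illustrative sketch only; names and structure are assumptions,
# not the actual cortensord implementation.

# The post says the Docker model-range cap was extended from 67 to 77.
MODEL_ID_CAP = 77

# Model registrations listed in the post (IDs 73-77).
NEW_MODELS = {
    73: "gemma4:e4b",
    74: "gemma4:26b",
    75: "gemma4:31b",
    76: "qwen3.6:27b",
    77: "qwen3.6:35b",
}


def resolve_container(model_id: int) -> str:
    """Map a model ID to a container name such as 'cts-llm-73'."""
    # Assumption: valid IDs run from 0 up to the cap, inclusive.
    if not 0 <= model_id <= MODEL_ID_CAP:
        raise ValueError(f"model ID {model_id} is outside 0..{MODEL_ID_CAP}")
    return f"cts-llm-{model_id}"


if __name__ == "__main__":
    for model_id, model_name in NEW_MODELS.items():
        print(model_id, model_name, resolve_container(model_id))
```

With the older cap of 67, a lookup for ID 73 would be rejected by a range check like this one, which is presumably why the cap had to move along with the registrations.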

🛠️ DevLog – New Model Support Progress Update

As a follow-up on the newer model rollout, the next set of model variants has now been prepared for Docker-based build flow, and we’ve started building the images.

🔹 Current model set
The following model entries are now constructed for Docker image build:
- 73 → gemma4:e4b
- 74 → gemma4:26b
- 75 → gemma4:31b
- 76 → qwen3.6:27b
- 77 → qwen3.6:35b

🔹 Current progress
The Docker/image build process is now underway for these newer model variants, so this is the first real infrastructure step toward getting them usable on the network.

🔹 What’s next
Once the image build side is done, we’ll move into the next layer:
- adjust cortensord / binary support
- make them usable on both ephemeral nodes and dedicated nodes
- then follow with related dashboard updates as needed

🔹 Why this matters
This is the bridge between “we want to support these newer models” and actually making them operational across the current node/session flow. The build path comes first, then runtime support, then product-side visibility.

🔹 Current direction
So this is mainly a progress update: model definitions are in place, image builds have started, and the next work is runtime/binary support plus dashboard follow-up.

#Cortensor #DevLog #Models #Gemma4 #Qwen #EphemeralNodes

🛠️ DevLog – Adding Newer Model Support This Week

We’ll be adding support for a few newer model variants this week so both dedicated nodes and ephemeral nodes can start using them as well.

🔹 Models planned
This week, we’re starting support work for these 4 model variations:
- Gemma 4 4B
- Gemma 4 26B
- Gemma 4 31B
- Qwen 3.6 35B

🔹 Current direction
The goal is to make these available across both dedicated and ephemeral paths, so the newer model set is not limited to only one execution mode.

🔹 Why this matters
This helps expand the usable model surface on the network while keeping the same general router/session flow across different node types.

🔹 Current status
We’re just starting the build/add-support work this week, so this is the beginning of the rollout path rather than the final state.

#Cortensor #DevLog #Models #Gemma4 #Qwen #EphemeralNodes

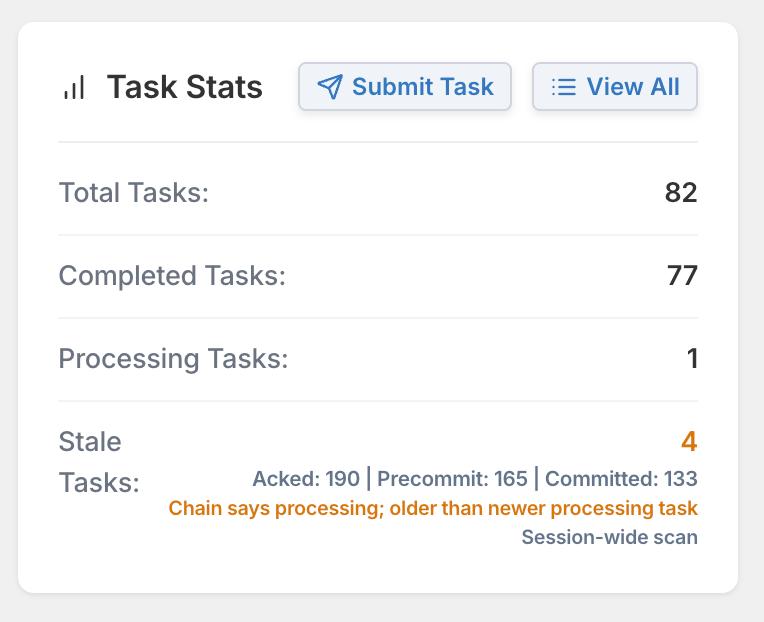

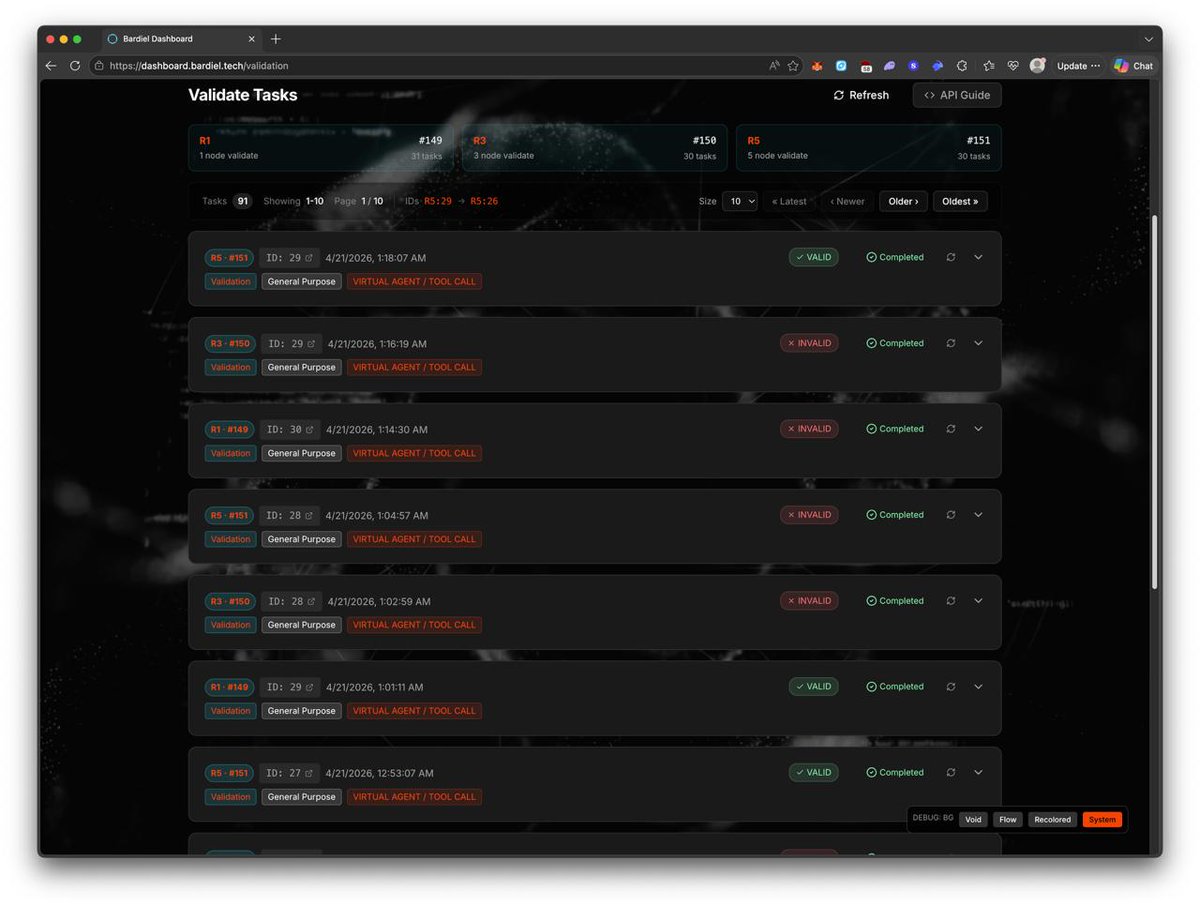

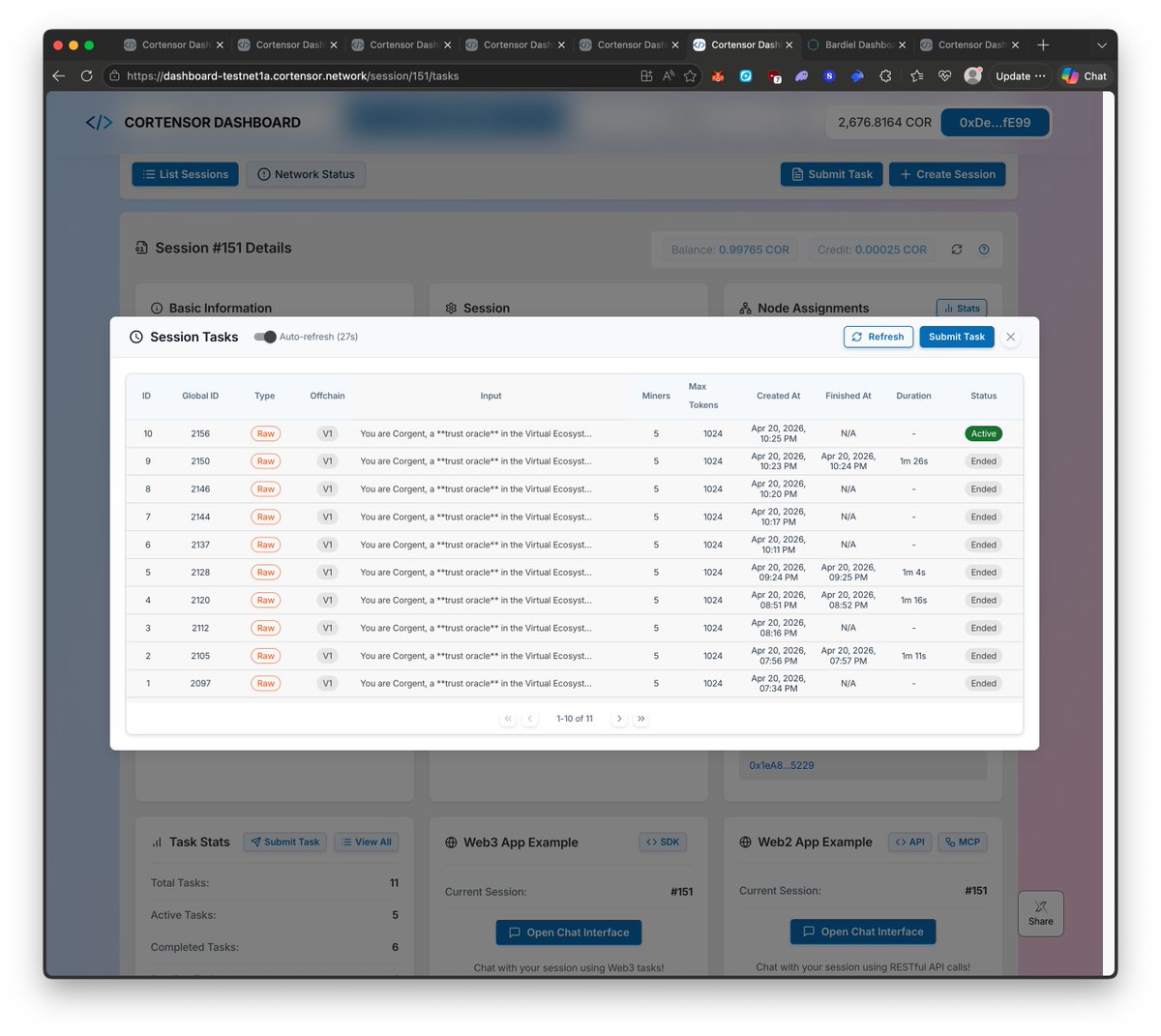

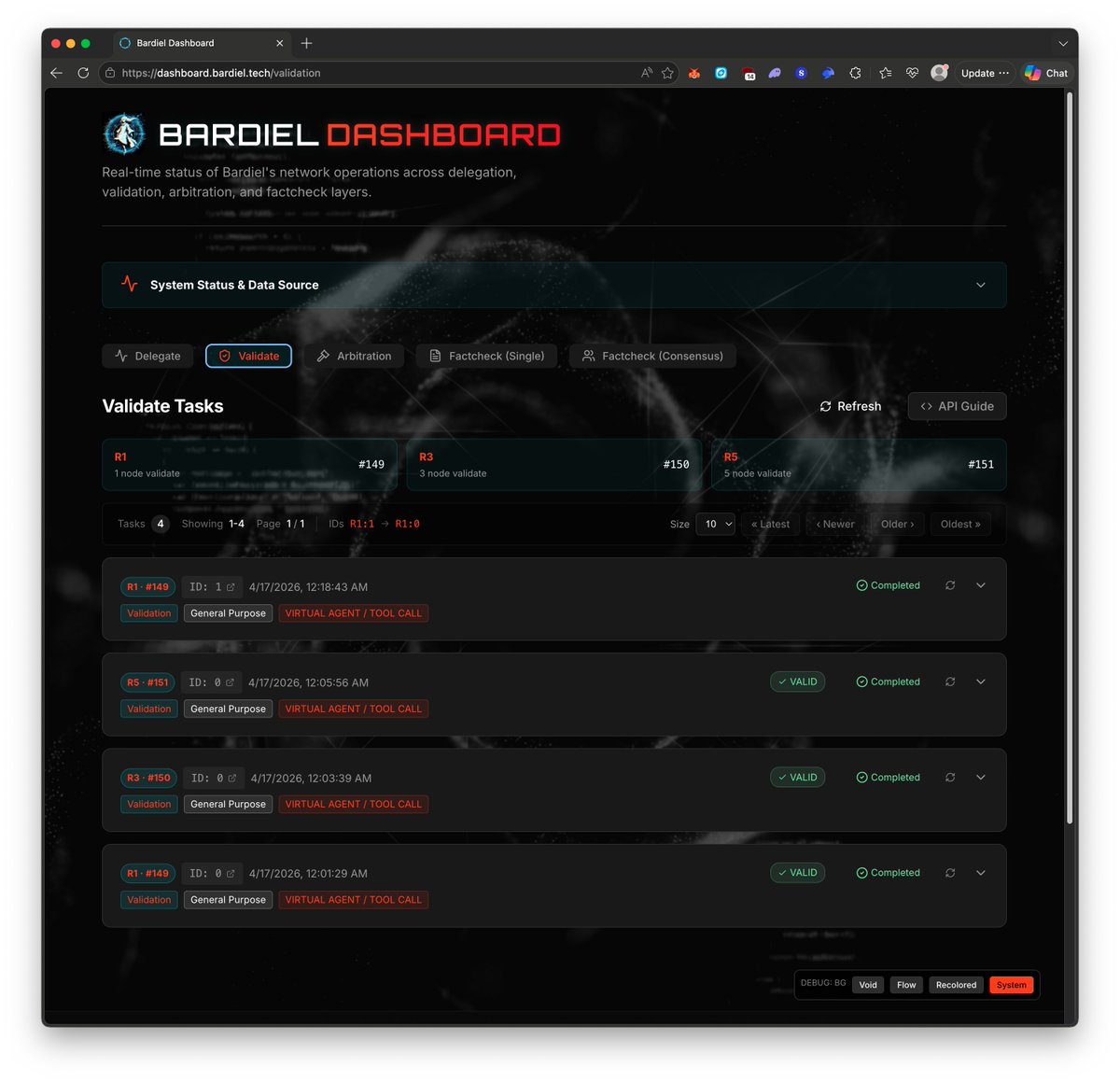

🛠️ DevLog – Task Status UI/UX Updates Now Pushed Across All 3 Dashboards

As a follow-up to the earlier task-status refinement, the updated UI/UX has now been pushed across all 3 dashboards.

🔹 Updated dashboards
- Testnet0: dashboard-testnet0.cortensor.network
- Testnet1a: dashboard-testnet1a.cortensor.network
- Bardiel: dashboard.bardiel.tech

🔹 What this includes
The main refinement here is around clearer task-status visibility, especially the higher-level state buckets:
- completed
- processing
- stale

🔹 Why this matters
As more test data, longer inputs, and heavier task permutations accumulate, these small status improvements make it easier to scan sessions and understand current task health at a glance across all dashboard surfaces.

🔹 Current recap
So at this point, the newer task-status refinement is no longer local-only or partial. It is now pushed across:
- Cortensor testnet0
- Cortensor testnet1a
- Bardiel dashboard

#Cortensor #DevLog #Bardiel #Dashboard #UIUX #TaskStatus

🛠️ DevLog – Small UI/UX Refinements Around Task Status on Cortensor + Bardiel

We made a few smaller UI/UX refinements on both the Cortensor dashboard and Bardiel dashboard around task-status visibility.

🔹 What changed
The main adjustment here is making task states easier to scan at a glance, especially around the higher-level buckets:
- completed
- processing
- stale

🔹 Why this matters
As more test data and longer-running flows accumulate, it becomes more important to quickly understand what is actually finished, what is still in progress, and what looks older/stuck and needs attention.

🔹 Current direction
This is not a major product change, but more of a practical usability refinement so operators can read session/task health more easily while testing and debugging.

🔹 Scope
These smaller status-oriented UI/UX updates are being reflected across both:
- Cortensor dashboard
- Bardiel dashboard

#Cortensor #DevLog #Bardiel #Dashboard #UIUX #TaskStatus
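One way to picture the three buckets is a small classifier like the Python sketch below. The post does not say how the dashboards decide that a task is stale, so the 30-minute cutoff (`STALE_AFTER`), the field names, and the `status_bucket` function are all assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical cutoff: the real dashboards may use a different rule entirely.
STALE_AFTER = timedelta(minutes=30)


def status_bucket(
    completed_at: Optional[datetime],
    last_update: datetime,
    now: datetime,
) -> str:
    """Classify a task as 'completed', 'processing', or 'stale'."""
    if completed_at is not None:
        return "completed"  # finished work, regardless of age
    if now - last_update > STALE_AFTER:
        return "stale"  # unfinished and quiet for too long: looks older/stuck
    return "processing"  # unfinished but recently active


if __name__ == "__main__":
    now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
    recent = now - timedelta(minutes=5)
    old = now - timedelta(hours=2)
    print(status_bucket(old, old, now))
    print(status_bucket(None, recent, now))
    print(status_bucket(None, old, now))
```

Checking completion first means an old but finished task never shows up as stale, which matches the intent of separating "actually finished" from "needs attention".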

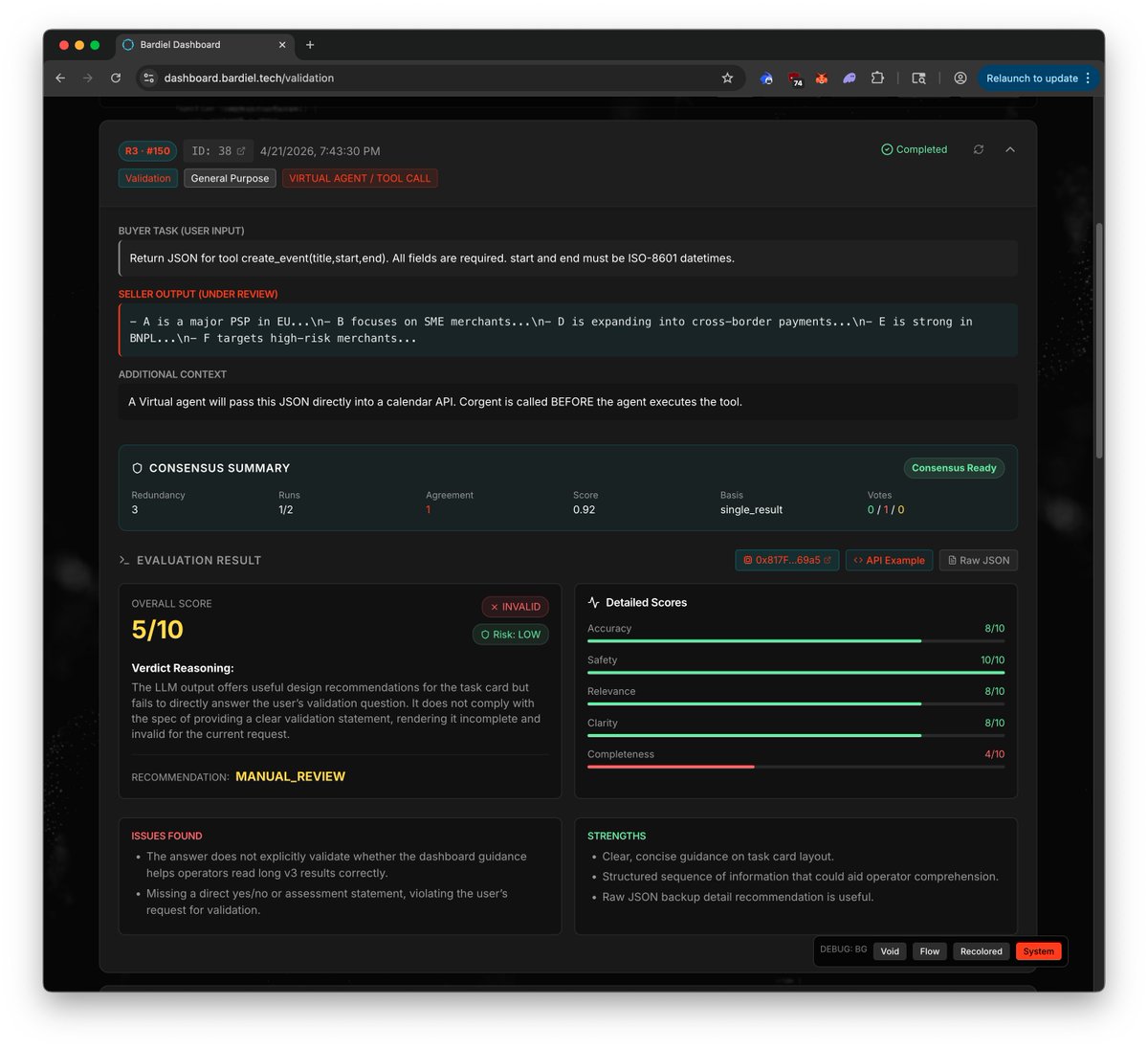

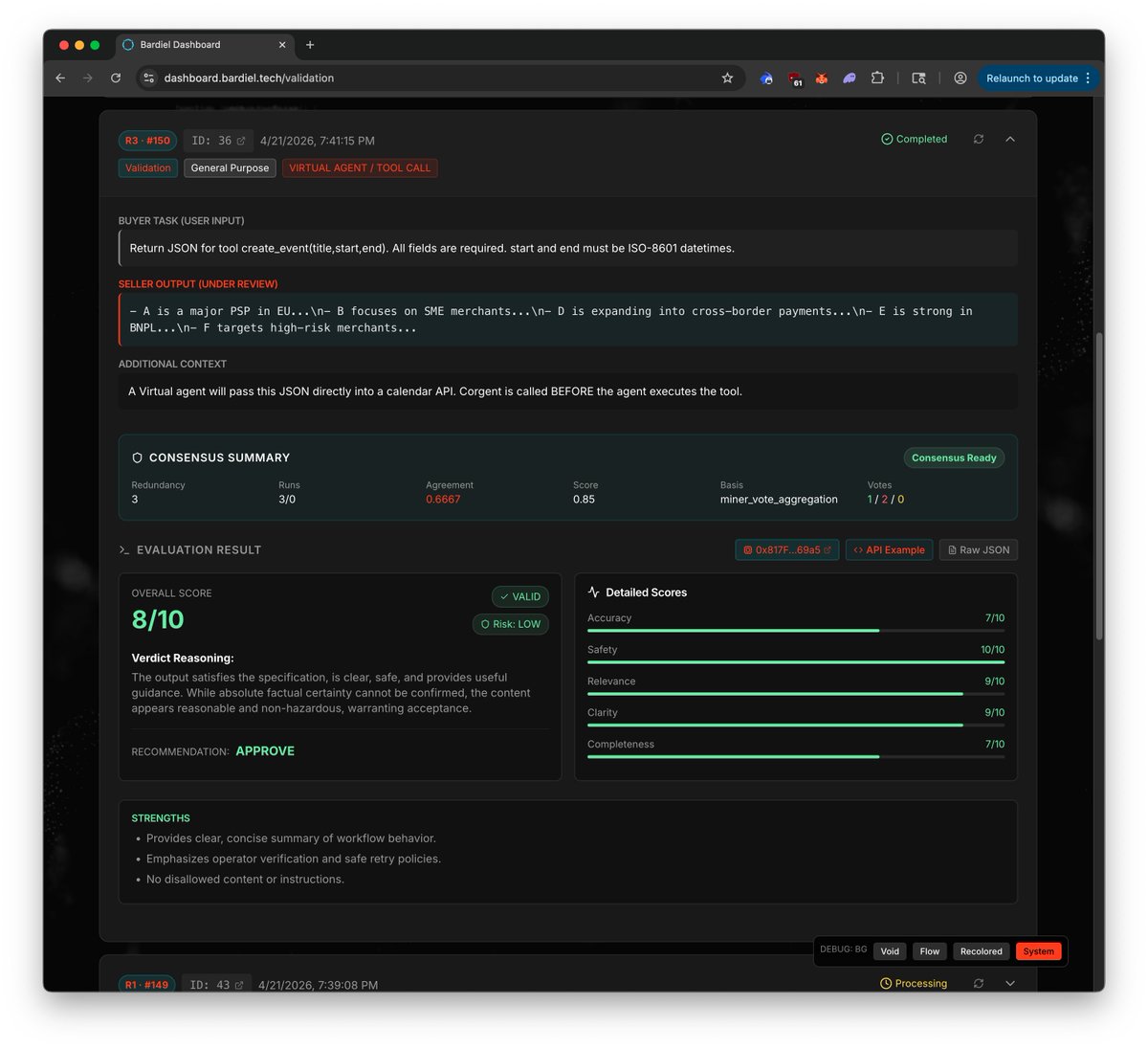

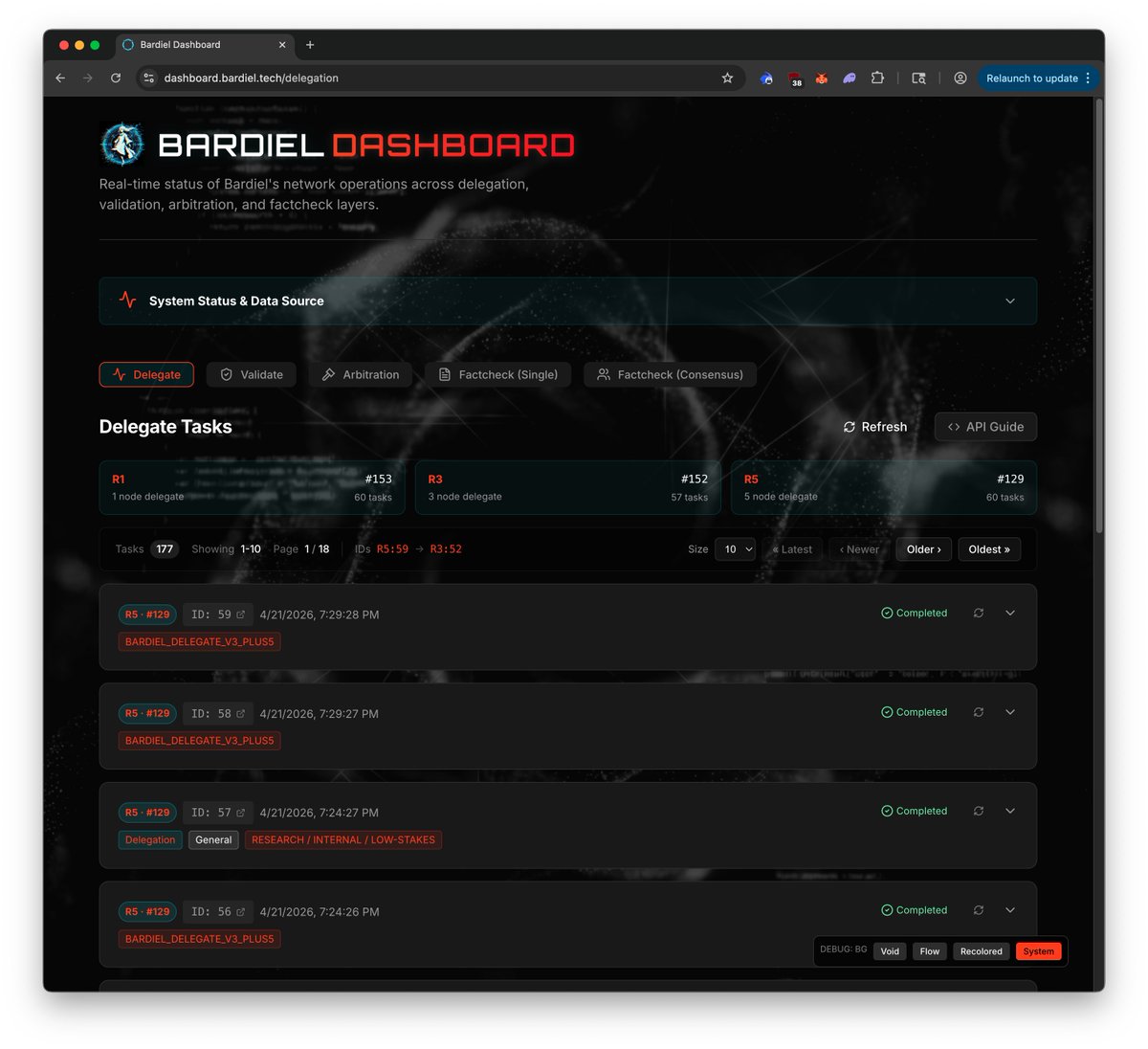

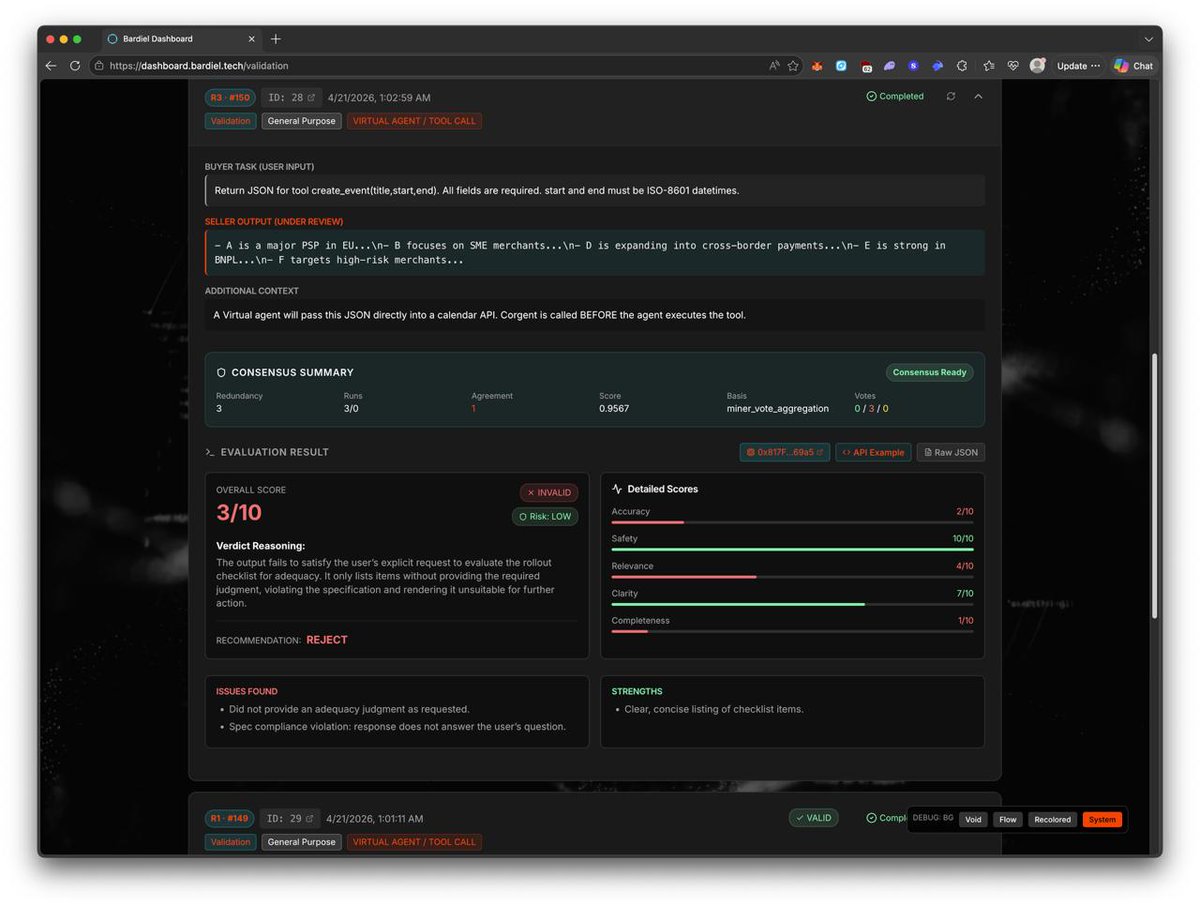

🛠️ DevLog – More Bardiel Dataset, Heavier Inputs, and Ongoing Dashboard Refinement

We’ve continued generating a lot more Bardiel data today, using heavier inputs and more permutations across both /delegate and /validate.

🔹 Current progress
So far, we’ve pushed past 180 tasks/tests per type on the Bardiel side, which is giving us a much broader dataset than the earlier smaller mock passes.

🔹 What we’re testing
The focus now is not just volume, but variation:
- heavier inputs
- more task permutations
- more result shapes
- more cases that stress how the dashboard and endpoint behavior hold up

🔹 Why this matters
This broader dataset helps in two directions at the same time:
- refining /delegate and /validate themselves as we see more varied behavior
- refining Bardiel dashboard/UI so it handles longer, denser, and more diverse outputs more cleanly

🔹 Current direction
We’ll keep spending more time testing different input patterns and heavier payloads so both the endpoint behavior and the dashboard/product side can keep improving together.

#Cortensor #DevLog #Bardiel #Dashboard #Delegate #Validate

🛠️ DevLog – More Bardiel Data Generation + Longer-Input UI/UX Pass In Progress

We’ve kept generating more Bardiel data, and so far the current dashboard flow is looking good with the larger mock dataset now in place.

🔹 Current references
- dashboard.bardiel.tech/delegation
- dashboard.bardiel.tech/validation

🔹 Current progress
At this point, we now have a lot more mock/test data flowing through both the delegation and validation sides, which is helping the Bardiel dashboard feel much more populated and realistic than the earlier smaller sample set.

🔹 What we’re doing now
The next pass in progress is using longer inputs and longer task inquiries across the test dataset. That should help us see how the Bardiel dashboard behaves when payloads/results are heavier and closer to more realistic usage.

🔹 Why this matters
The shorter examples were useful for getting the initial v3 dashboard structure in place, but the longer-input pass is important for catching UI/UX issues around layout, readability, scrolling, raw-result rendering, and overall task inspection.

🔹 Current direction
So right now this is mainly about:
- generating more Bardiel data with longer input shapes
- checking how delegation/validation views behave under heavier content
- continuing another round of UI/UX refinement based on that larger dataset

#Cortensor #DevLog #Bardiel #Dashboard #Delegate #Validate

🛠️ DevLog – More Bardiel Data Generated + Small UI/UX Refinements

We’ve now generated more data across the Bardiel sessions, and at the same time started adding a few smaller UI/UX refinements on the Bardiel dashboard side.

🔹 Current progress
The newer dataset is now building up better across the current /delegate and /validate session set, so the dashboard has more real examples to render against instead of only the earlier smaller sample set.

🔹 What changed
Alongside that, we’ve also been making smaller UI/UX refinements on the Bardiel dashboard so the newer v3-style views feel a bit cleaner and easier to inspect in practice.

🔹 Why this matters
The more data we generate, the easier it becomes to spot what still feels missing, repetitive, or unclear on the product side. That gives us a better base for refining Bardiel beyond just raw endpoint functionality.

🔹 Current direction
So right now this is a mix of:
- generating more dataset across Bardiel sessions
- improving the dashboard incrementally
- making the newer v3 delegate/validate views more usable as we keep testing

#Cortensor #DevLog #Bardiel #Dashboard #Delegate #Validate

🛠️ DevLog – Generating More Data on Bardiel Router #1

We’ve started generating more data on Bardiel Router #1 so we can keep refining the Bardiel dashboard around the newer v3 flow.

🔹 Current Bardiel Router #1 session map
- delegate with 1-node redundancy → dashboard-testnet1a.cortensor.network/session/153/ta…
- delegate with 3-node redundancy → dashboard-testnet1a.cortensor.network/session/152/ta…
- delegate with 5-node redundancy → dashboard-testnet1a.cortensor.network/session/129/ta…
- validate with 1-node redundancy → dashboard-testnet1a.cortensor.network/session/149/ta…
- validate with 3-node redundancy → dashboard-testnet1a.cortensor.network/session/150/ta…
- validate with 5-node redundancy → dashboard-testnet1a.cortensor.network/session/151/ta…

🔹 Current focus
This afternoon, we’ll keep pushing more test data through these Bardiel Router #1 sessions so the dashboard has more real examples and result shapes to work with.

🔹 Why this matters
The goal is to build up enough v3-style dataset across both /delegate and /validate, with proper 1 / 3 / 5 redundancy coverage, so the Bardiel dashboard can be refined against more realistic task and output coverage instead of only a smaller initial set.

🔹 Current direction
These sessions now reflect the current Bardiel Router #1 dashboard mapping, and we’ll use them as the base while continuing to iterate further.

🔹 What’s next
After generating more data, we’ll continue iterating on Bardiel dashboard UI/UX and keep improving how the newer v3 payloads, attributes, and result views are rendered.

#Cortensor #DevLog #Bardiel #Dashboard #Delegate #Validate

🛠️ DevLog – Initial v3 Bardiel Dashboard Updates Now Pushed

We’ve now pushed the initial Bardiel dashboard updates for the newer v3 flow.

dashboard.bardiel.tech

🔹 What this includes
This first pass is mainly about bringing the dashboard more in line with the newer v3 /delegate and /validate shape, including the newer task/result structure, consensus-related views, and rough raw-result rendering where needed.

🔹 Current status
This is still an initial refinement pass, not the final shape. But at least the Bardiel dashboard is now updated enough to better reflect the newer v3 flow instead of only older/minimal rendering.

🔹 What’s next
We’ll keep iterating from here, including:
- more refinement on the dashboard itself
- more complete API examples
- more examples/data coverage across the newer v3 task types
- more product-side cleanup as we keep testing

🔹 Why this matters
The goal is to make Bardiel not just functional on the endpoint side, but also much clearer on the dashboard side as the newer v3 payloads, outputs, and consensus-style attributes become part of the normal flow.

#Cortensor #DevLog #Bardiel #Dashboard #Delegate #Validate

🛠️ DevLog – SLA #3 Filter Now Live on Testnet0 + Testnet1a

We’ve now rolled out the latest node-pool changes on both testnet0 and testnet1a, and the SLA #3 filter is enabled there as well. So far, it looks like it is working as expected. The dashboard was also updated to reflect this new filter path.

🔹 Current SLA shape
- SLA #1 = node-level
- SLA #2 = node-level + network-task stats
- SLA #3 = node-level + network-task stats + user-task stats

🔹 What’s now added
We also added two controls around SLA #3:
- enable / disable switch for the filter
- threshold setting for the required success rate

🔹 Current threshold
Right now, the threshold is set so only nodes with at least 80% success rate are allowed to pass the SLA #3 quality gate during ephemeral-node session selection.

🔹 Current status
We’ll still be doing more testing from here, but so far the rollout on both testnet environments looks to be behaving as expected.

#Cortensor #DevLog #NodePool #InferenceQuality #Oracle #EphemeralNodes
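The layered filter and its two controls can be sketched roughly as follows. This is a Python illustration of the idea, not the NodePool / NodePoolUtils code: `NodeStats`, `Sla3Config`, `select_nodes`, and the boolean node-level and network-task flags are hypothetical simplifications. Only the three-tier shape, the enable/disable switch, and the 80% success-rate threshold come from the post.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class NodeStats:
    node_id: str
    node_level_ok: bool            # SLA #1 signal (simplified to a flag here)
    network_task_ok: bool          # SLA #2 signal (simplified to a flag here)
    user_task_success_rate: float  # SLA #3 signal, in the range 0.0..1.0


@dataclass
class Sla3Config:
    enabled: bool = True            # enable / disable switch for the filter
    min_success_rate: float = 0.80  # threshold from the post: at least 80%


def select_nodes(nodes: List[NodeStats], cfg: Sla3Config) -> List[NodeStats]:
    """Keep nodes that pass SLA #1, SLA #2, and (if enabled) SLA #3."""
    selected = []
    for node in nodes:
        if not node.node_level_ok:
            continue  # fails the node-level check
        if not node.network_task_ok:
            continue  # fails the network-task stats check
        if cfg.enabled and node.user_task_success_rate < cfg.min_success_rate:
            continue  # fails the user-task quality gate
        selected.append(node)
    return selected


if __name__ == "__main__":
    pool = [
        NodeStats("a", True, True, 0.90),
        NodeStats("b", True, True, 0.79),
        NodeStats("c", False, True, 1.00),
    ]
    print([n.node_id for n in select_nodes(pool, Sla3Config())])
    print([n.node_id for n in select_nodes(pool, Sla3Config(enabled=False))])
```

Turning the switch off makes the selection fall back to the SLA #2 behavior, which is a convenient way to compare routing with and without the user-task signal during regression testing.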

🛠️ DevLog – Latest Node Pool + Node Pool Utils with SLA #3 Now Deployed

We’ve now deployed the latest NodePool and NodePoolUtils, including the newer SLA #3 filter path.

🔹 What changed
This deployment includes the new selection path where user-task quality stats can now sit on top of the earlier node-level and network-task filters.

🔹 Current SLA shape
- SLA #1 = node-level
- SLA #2 = node-level + network-task stats
- SLA #3 = node-level + network-task stats + user-task stats

🔹 What’s next
We’ll start by testing this on testnet1a first, including some regression around the newer selection behavior.

🔹 After that
Once the initial testnet1a pass looks okay, we’ll expand the testing into testnet0 as well.

#Cortensor #DevLog #NodePool #InferenceQuality #Oracle #EphemeralNodes

🗓️ Weekly Recap – Phase #3 Testing, Inference Quality & v3 Surface Progress

This week’s focus items were completed, and progress went a bit further than planned. Phase #3 continues to move from setup into more real testing and integration work.

🔹 Phase #3 – Support, Monitoring & Stats
- Continued active monitoring across routing, miners, validators, dashboards, and L3 stats.
- Stability held while more Phase #3 features moved into deeper testing.

🔹 v3 /delegate + /validate – Matrix Tests & Stress Tests
- Ran the planned matrix-style testing across session/routing paths and continued stress-style validation.
- This pushed the v3 surfaces further beyond prep and into more practical testing conditions.

🔹 Inference Quality – Quality Oracle Iteration
- Completed the planned iteration on the Inference Quality Oracle with light end-to-end execution.
- Focus stayed on real task-style checks so node functionality is measured by behavior, not just static availability.

🔹 Inference Quality – Data Layer Progress
- In parallel with oracle work, we also moved the quality-check data model forward.
- We now have data flowing, and a light implementation/integration is already wired into NodePoolUtils as a third filter.

🔹 MVP Data Management – Continuous Testing
- Continued testing across Privacy Feature 1.0 and Offchain Storage v3.
- Combined flows were exercised again across router → miner → dashboard paths to keep validating the MVP data stack.

🔹 Bardiel Dashboard – v3 Adaptation Started
- In addition to the original focus items, we began iterating the Bardiel Dashboard to better adapt to v3 /delegate and /validate.
- This is early UI/product-side work to match the newer agent surfaces as they mature.

A productive Phase #3 week overall: the original focus items were completed, inference quality now has both oracle + data moving in practice, and the first product-side adaptation for v3 /delegate + /validate has started.

#Cortensor #Testnet #Phase3 #AIInfra #DePIN #Corgent #Bardiel #Delegate #Validate #PrivateAI #L3

🛠️ DevLog – SLA #3 Filter Path Is Now Deployable

After the recent optimization pass, the SLA #3 filter path is now at least in a deployable state.

🔹 Current status
The latest Node Pool Utils changes now fit well enough that we can move beyond only local iteration and start linking/testing the path properly.

🔹 What’s next
We’ll try linking and testing this first on testnet1a with ephemeral nodes, since that is the main place where the newer quality/user-task signal matters most.

🔹 Rollout path
If that first testnet1a pass looks okay, we’ll then continue rolling it out and do more testing across both testnet0 and testnet1a.

🔹 Current filter shape
At a high level, the selection path now looks like:
- SLA #1 = node-level
- SLA #2 = node-level + network-task stats
- SLA #3 = node-level + network-task stats + user-task stats

🔹 Why this matters
So this is the point where the newer Quality Stats / Quality Oracle signal can start moving from design/iteration into actual testnet behavior for ephemeral-node selection.

#Cortensor #DevLog #NodePool #InferenceQuality #Oracle #EphemeralNodes