Herr Greenrush (e/acc) retweeted

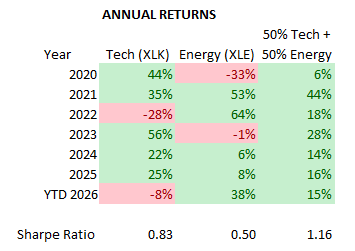

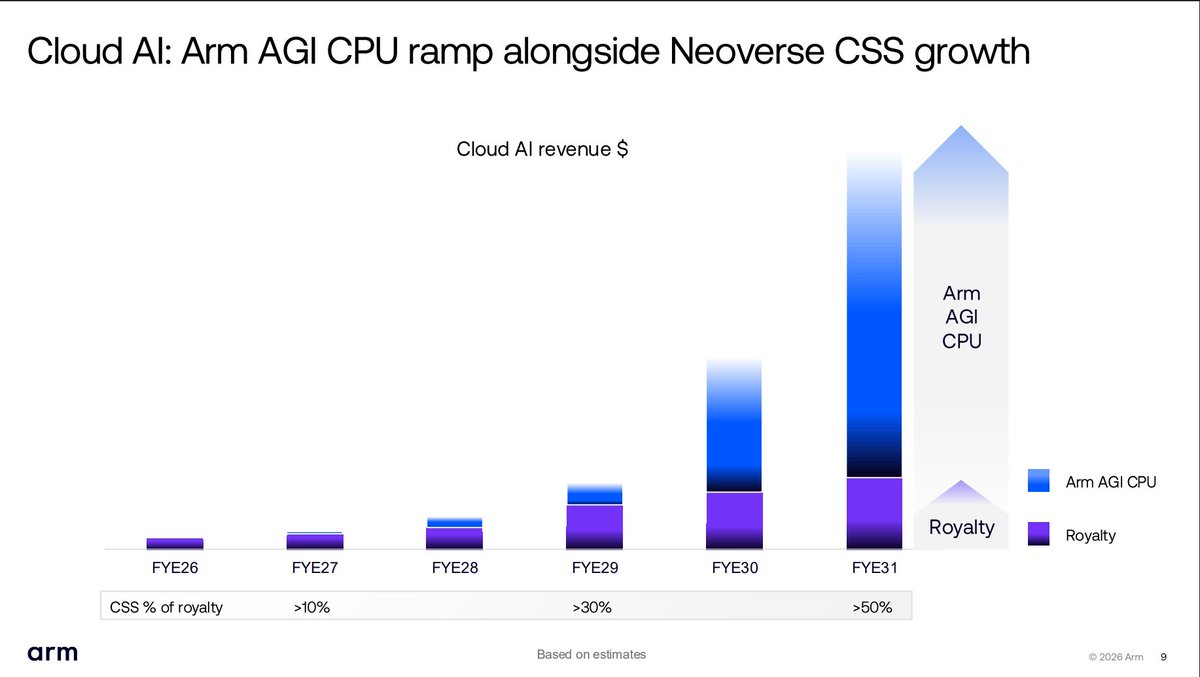

Arm's pivot to competing with its own customers may come at the expense of margins (from the current 99% to 73% by FY31), but it offers higher incremental revenue and gross profit. The ramp is stunning: FY28 $1B, FY29 $2B, FY30 $4B and FY31 $15B. ASP increases are a factor too. Arm sees >55% margins on CPUs, with implied upside on revenue. Rambus is probably the closest example of an IP/product company, and Rambus has >60% margins on product.
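
For a quick sense of how steep that ramp is, here is a minimal Python sketch that turns the four revenue figures quoted above into year-over-year multiples. The figures are taken as stated, in $B; the names `ramp_revenue` and `yoy_multiples` are made up for illustration, and nothing here is a forecast beyond what the post says.

```python
# Revenue ramp in $B by fiscal year, as quoted in the post (not independently verified).
ramp_revenue = {"FY28": 1, "FY29": 2, "FY30": 4, "FY31": 15}


def yoy_multiples(revenue):
    """Return each year's revenue divided by the prior year's revenue."""
    years = list(revenue)  # dicts preserve insertion order, so years stay chronological
    return {
        curr: revenue[curr] / revenue[prev]
        for prev, curr in zip(years, years[1:])
    }


multiples = yoy_multiples(ramp_revenue)
for year, multiple in multiples.items():
    print(f"{year}: {multiple:.2f}x the prior year")
```

On these numbers the ramp doubles twice and then jumps 3.75x in FY31, so most of the step-up sits in the final year.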
