Michael Sutton

@michaelsuttonil

Computer science, graph theory, parallelism, consensus; taking Kaspa to the next level

You are absolutely right: tools alone are not enough. What you also need is a unifying mission - a stag worth hunting together - and it cannot just be a product, a memecoin or a financial instrument. It has to point beyond finance and beyond economic settlement.
That is why I do not think the real opportunity here is simply to build better tools for coordination. It is to build mission-driven institutions that can coordinate people around questions and goals that existing structures struggle to hold.
I think I have already identified the stag I personally want to hunt: properly exploring the possibility that cognition is a scale-free process in our cosmos. A growing number of scientists and researchers are beginning to take this idea seriously. But the work lives in the cracks between established fields - AI, philosophy, physics, cosmology, biology, economics, and perhaps even some form of spirituality - which makes collaboration unusually hard and costly. The people best positioned to push it forward are usually too busy doing the actual work to also build the social infrastructure needed to coordinate the field in a persistent and goal-directed way.
There are of course people like Dr. Michael Levin trying to connect the dots by organizing conferences and conversations across disciplines. But efforts like these are still relatively isolated, often revolve around key individuals, and lack a persistent address that others can turn to if they want to follow the work, contribute, or collaborate. And if this movement keeps growing, the coordination burden on those individuals will only increase.
That is exactly why I think a distributed institution dedicated to this mission could matter - something like a Cognitive Cosmos Institute: a persistent coordination layer for people working on these questions, and potentially even a new channel for funding outside the established structures of academia.
Maybe it fails. Maybe people think the whole idea is nonsense. But I believe we are at a unique moment in the development of our species. We now have tools that are still radically underutilized when it comes to building mission-driven institutions. I think people generally underestimate how much agency they actually have. Sometimes you can just do things. And it is up to us to build the tools that empower people to do exactly that.

> “The state is in redeem script, so only when the output UTXO is spent, the original script will be visible.” That’s incorrect. By definition of a covenant, the tx creating the output must verify the state written to it. This means that if you run the script engine over that tx you inevitably compute that output state along the way. I actually have a proposal directly related to this (see link below), but it’s only for convenience. The statement holds regardless github.com/michaelsutton/…
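The argument above can be illustrated with a toy model. This is only a sketch under loud assumptions: `commit`, `transition`, and `verify_creating_tx` are hypothetical names, the SHA-256 hash stands in for whatever commitment the real output script uses, and the counter rule is invented. None of it is Kaspa's script engine or the linked proposal. It only shows why checking the creating tx forces the verifier to compute the new state.

```python
import hashlib


def commit(state: int) -> str:
    """Hypothetical commitment: a hash of the state, standing in for a script hash."""
    return hashlib.sha256(str(state).encode()).hexdigest()


def transition(prev_state: int, tx_input: int) -> int:
    """Invented covenant rule for illustration: the state is a running counter."""
    return prev_state + tx_input


def verify_creating_tx(prev_state: int, tx_input: int, output_commitment: str) -> int:
    """Toy 'script engine' run over the tx that creates the output.

    To check the output's commitment, the verifier has to derive the next
    state itself, so the state is known before the output is ever spent.
    """
    next_state = transition(prev_state, tx_input)
    if commit(next_state) != output_commitment:
        raise ValueError("output does not commit to the state the covenant requires")
    return next_state
```

In this model, `verify_creating_tx(5, 3, commit(8))` returns the new state `8` to whoever validates the creating tx, even though the output only carries a hash.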

I wrote a PoC token contract in Silverscript, currently called DOG20 (better name ideas are welcome). It supports token ownership by 3 kinds of entities:
1. Public keys: like any regular Kaspa address.
2. P2SH addresses: ownership by a stateless contract, e.g. multisig.
3. Covenant IDs: ownership by a stateful contract.
The third option is the interesting one, and it demonstrates a broader concept (which might be familiar to whoever watched the webinar by @IzioDev and @michaelsuttonil) called inter-covenant-communication (ICC). In this context, it means you can put arbitrary stateful rules around token control. For example:
- “after the first 10 spends, wait a year before spending again”
- zk-rollups can manage their L1 tokens using a stateful bridge.
DOG20 also supports minters that are allowed to mint indefinitely, but that does not mean the supply must be unbounded. Let's say you want to publish a token and allow only 100 new tokens to be issued each month. DOG20 doesn't support this natively, but you can achieve it by making the only minting entity a covenant. That covenant stores `nextIssuance` in its state, allows spends of 100 tokens only if `time > nextIssuance`, and sets `nextIssuance = nextIssuance + 30 days` each time it's used.
I hope to explain it a bit more in the future, but in the meantime, feel free to look at the examples linked in the next comment.
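The monthly issuance rule can be sketched in plain Python. This is not Silverscript and not the DOG20 code: `MinterState` and `try_mint` are hypothetical names, time is assumed to be Unix seconds, and the sketch assumes each mint issues exactly 100 tokens. Only `nextIssuance`, the `time > nextIssuance` check, and the 30-day step come from the post.

```python
from dataclasses import dataclass

THIRTY_DAYS = 30 * 24 * 60 * 60  # seconds; fixed-length stand-in for "a month"
ISSUANCE_AMOUNT = 100            # tokens per issuance, as in the post's example


@dataclass(frozen=True)
class MinterState:
    """Hypothetical state carried by the minting covenant."""
    next_issuance: int  # earliest time (Unix seconds) after which minting is allowed


def try_mint(state: MinterState, now: int, amount: int) -> MinterState:
    """Validate one mint and return the successor state, or raise if a rule is broken."""
    if amount != ISSUANCE_AMOUNT:
        raise ValueError("each issuance must mint exactly 100 tokens")
    if now <= state.next_issuance:
        raise ValueError("too early: time must be greater than nextIssuance")
    # Advance from the previous deadline, not from `now`, as the post describes.
    return MinterState(next_issuance=state.next_issuance + THIRTY_DAYS)
```

Because the deadline advances from its previous value and not from the current time, a minter that skips a few months can catch up with several back-to-back issuances, while the long-run rate still stays at 100 tokens per 30 days.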

On hashd.ag/staghunt/, you can read @hashdag's theory of why decentralized blockchains are still needed, now more than ever. Link to Y speech at @OxfordUnion: hashd.ag/oxford-union-a…. We are very proud to have orchestrated and funded it. Full-length 40-min video to be released soon.

Today there is a bigger update because there are 3 new PRs that are ready for review.
The first one (github.com/kaspanet/vprog…) fixes some bugs and introduces the SchedulerState, which unifies the way we expose shared state in our framework.
The second one (github.com/kaspanet/vprog…) introduces the node-framework, which builds on top of the first PR and provides a generic platform for building L2 nodes that ingest data from the Kaspa L1 to produce state changes (and eventually proofs) of the L2 execution.
The third one (github.com/kaspanet/vprog…) introduces the node-vprogs-cli - an actual binary that can be executed and that follows the L1 to execute transactions in a concrete VM. The binary is designed to support compile-time modularity, which means that by updating a single line in backend.rs we can swap out both the storage and the VM.
A VM is defined by three functions:
1. A pre_process_block function which extracts the relevant L2 transactions from the L1 chainblock.
2. A process_transaction function which executes a single transaction (within its scope) and produces execution-results.
3. A post_process_block function which takes the execution results of the individual transactions and stitches them together into an aggregated proof structure which can be settled on the L1.
All steps are parallelized as much as the underlying causal structure permits.
I still have a few chores on my todo-list and there are still a few missing parts (like syncing from state that we didn't actively witness), but this is pretty much as far as we get without re-integrating with the covenants on L1. So the next steps will be to actually design the concrete framework for settling state transitions on the L1 (and consequently syncing from it). I suspect that this will require a little bit of back and forth between the two development efforts, but we are getting to the point where things start to get interesting.
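The three-function VM shape can be sketched in Python. This is an illustration only, not the vprogs API (which is Rust): the `VM` container, `run_block`, and the toy VM are hypothetical, and only the three function names come from the post. The sketch also assumes all transactions in a block are independent, so it runs them all in parallel. The real framework parallelizes only as far as the causal structure permits.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Any, Callable, List


@dataclass(frozen=True)
class VM:
    """Hypothetical container for the three functions that define a VM."""
    pre_process_block: Callable[[Any], List[Any]]   # L1 block -> relevant L2 transactions
    process_transaction: Callable[[Any], Any]       # one transaction -> execution result
    post_process_block: Callable[[List[Any]], Any]  # results -> aggregated proof structure


def run_block(vm: VM, block: Any) -> Any:
    """Run one L1 block through the three stages."""
    txs = vm.pre_process_block(block)
    # Simplifying assumption: no dependencies between transactions.
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(vm.process_transaction, txs))  # map preserves input order
    return vm.post_process_block(results)


# Toy VM: a block is a list of strings, L2 transactions carry an "l2:" prefix,
# the "result" is the transaction length and the "proof" is their sum.
toy_vm = VM(
    pre_process_block=lambda block: [tx for tx in block if tx.startswith("l2:")],
    process_transaction=lambda tx: len(tx),
    post_process_block=lambda results: sum(results),
)

print(run_block(toy_vm, ["l2:a", "l1:zz", "l2:bcd"]))  # prints 10
```

Swapping the VM here means passing a different `VM` value to `run_block`. The post describes a compile-time equivalent, where a single line in backend.rs selects the storage and the VM.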

I beg to differ @oneforonehaha. Cov+ZK is conceptually the same as what the OP_CAT camp in BTC is pushing for. In principle, we could've introduced programmability into kaspa by merely adding OP_CAT; that would've been easier to communicate, though not to build with; you'd still need a silverscript-like compiler cc ON, easy authentication of covenant lineage cc MS, etc. BTC's OP_CAT doesn't magically make program development accessible. Ecosystem differences aside, any practical, developer-friendly utilization of OP_CAT would be in the ballpark of what kas core designed here. (One arguable exception: BTC could've used the simpler block header sequencing commitment and require apps to zk-prove the entire onchain activity and not just their app's activity; infeasible for high throughput). With all the great ideas baked into the design, I still think we should view the upcoming HF as a natural next step, one that has no particular research angle or conceptual novelty. Think of it as OP_CAT++

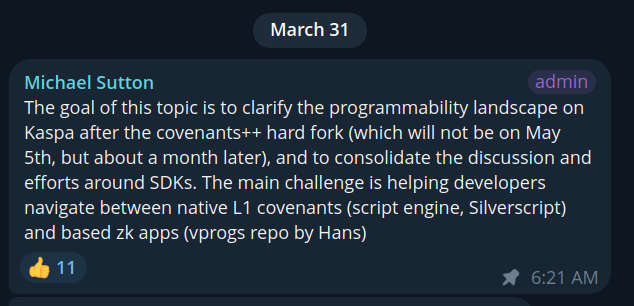

wrote an outlook for the upcoming “Toccata” hard fork -- native L1 covenants, based zk apps, why the activation window moved, and what the road from feature freeze to mainnet looks like: medium.com/@michaelsuttonil/kaspa-covenants-toccata-hard-fork-outlook-a4d81a40900c
