ollama

7.7K posts

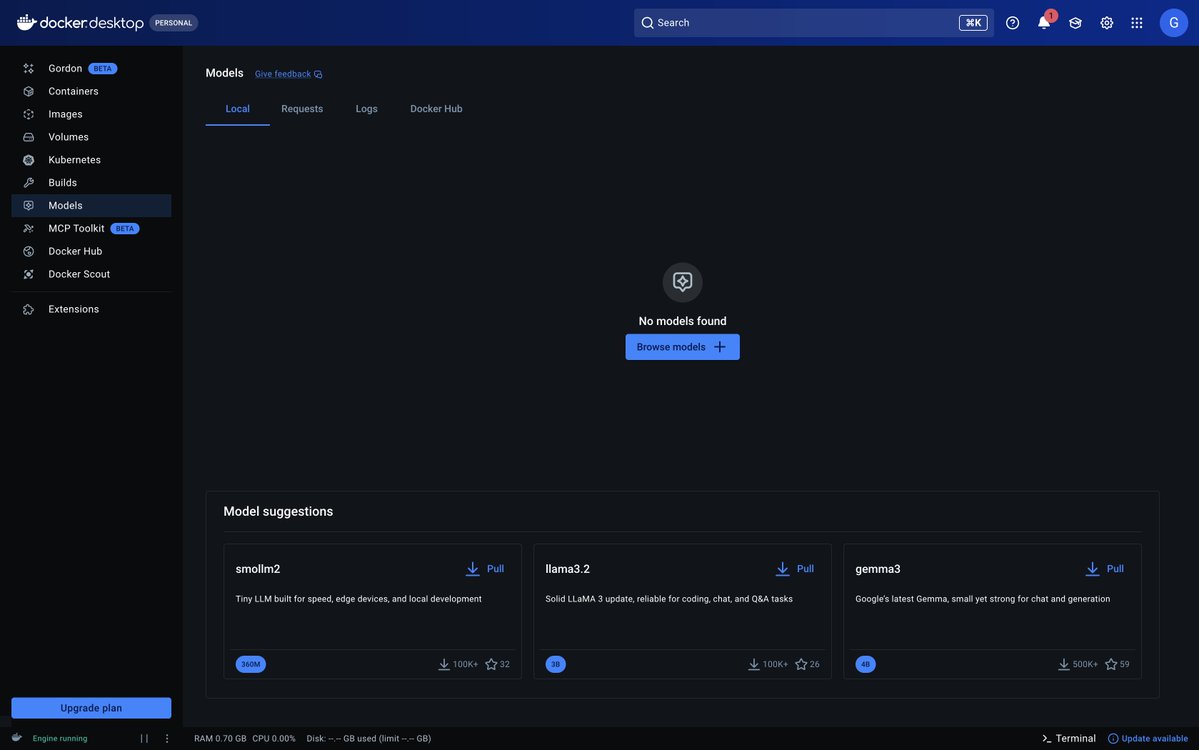

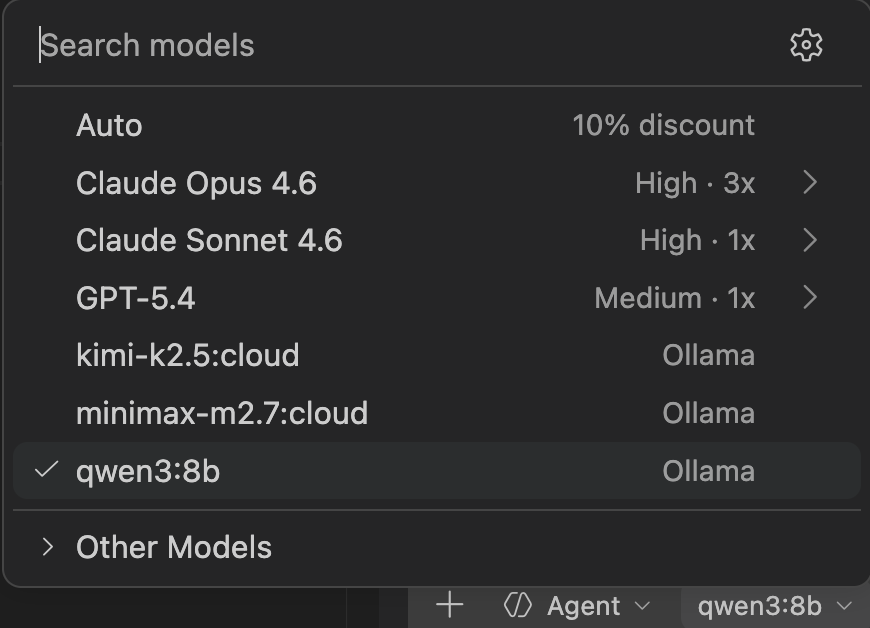

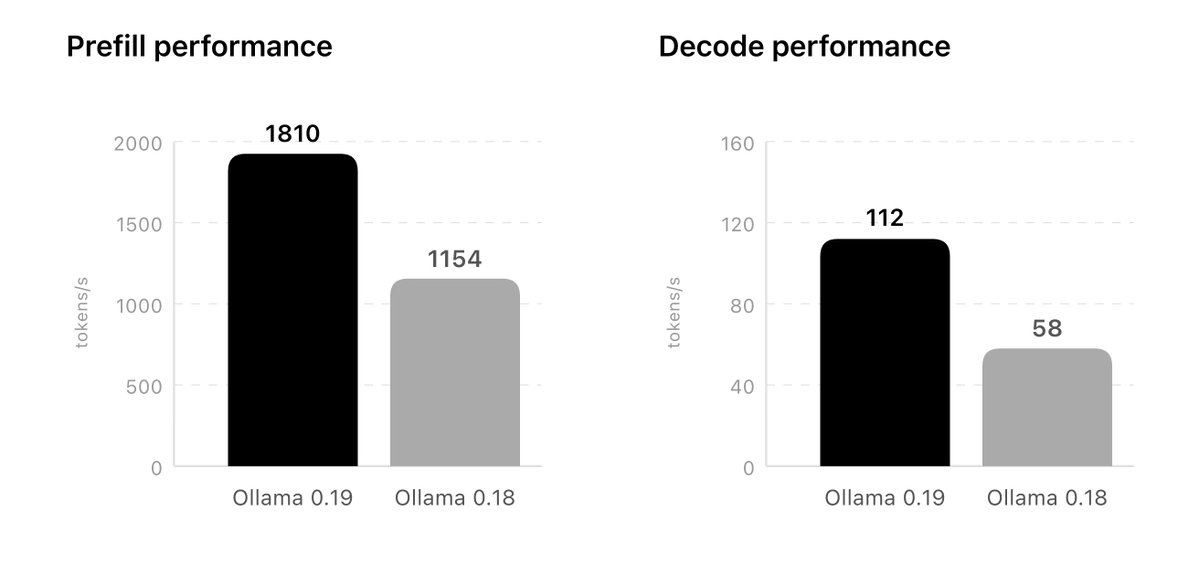

Ollama has been updated to run at its fastest on Apple silicon, powered by MLX, Apple's machine learning framework. This change unlocks much faster performance for demanding work on macOS:

- Personal assistants like OpenClaw
- Coding agents like Claude Code, OpenCode, or Codex

Jensen Huang is coming to Interrupt. May 13-14 in SF. Join Jensen and Harrison for a fireside chat to learn where enterprise agents are headed. We'll dive into the LangChain x @nvidia partnership and how Deep Agents, NVIDIA Nemotron models, and the NVIDIA Agent Toolkit enable production-grade claws for the enterprise. Get tickets: interrupt.langchain.com