SITG

4.4K posts

SITG

@sitgdev

AI/ML. Search & Relevance.

Terence Tao on AI in Math. AI can synthesize a million papers and brute-test ideas. Humans can check just 5 examples and see the pattern. But as systems move toward world models, causal reasoning, and active learning, this efficiency gap will narrow.

This is wild. theaustralian.com.au/business/techn…

PREDICTION - Amazon will ban all Gen-AI assisted code changes in the coming weeks! More companies will follow..... Be warned - your legacy code base, tech debt and bugs will sky-rocket if you continue to BLINDLY embrace AI

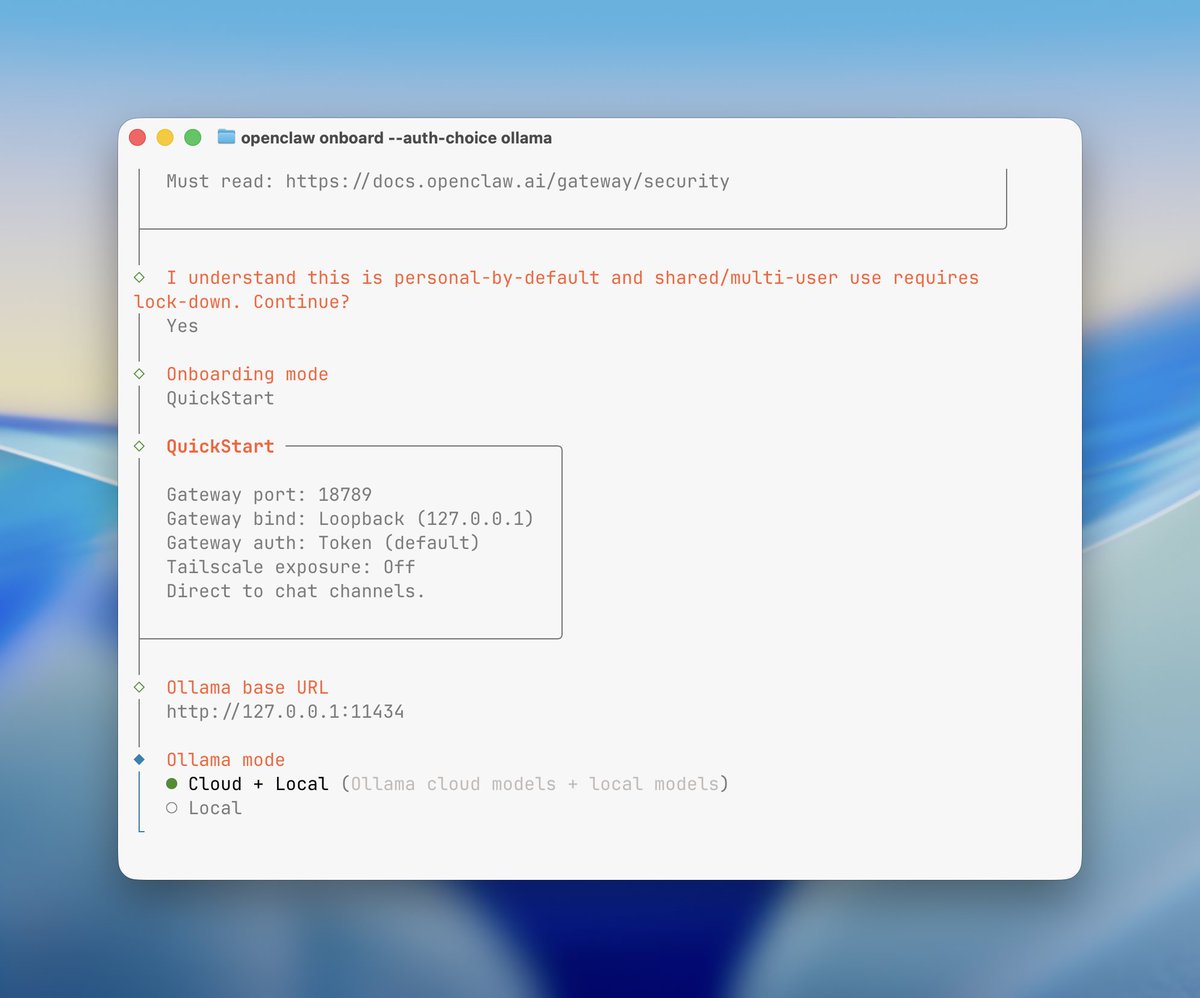

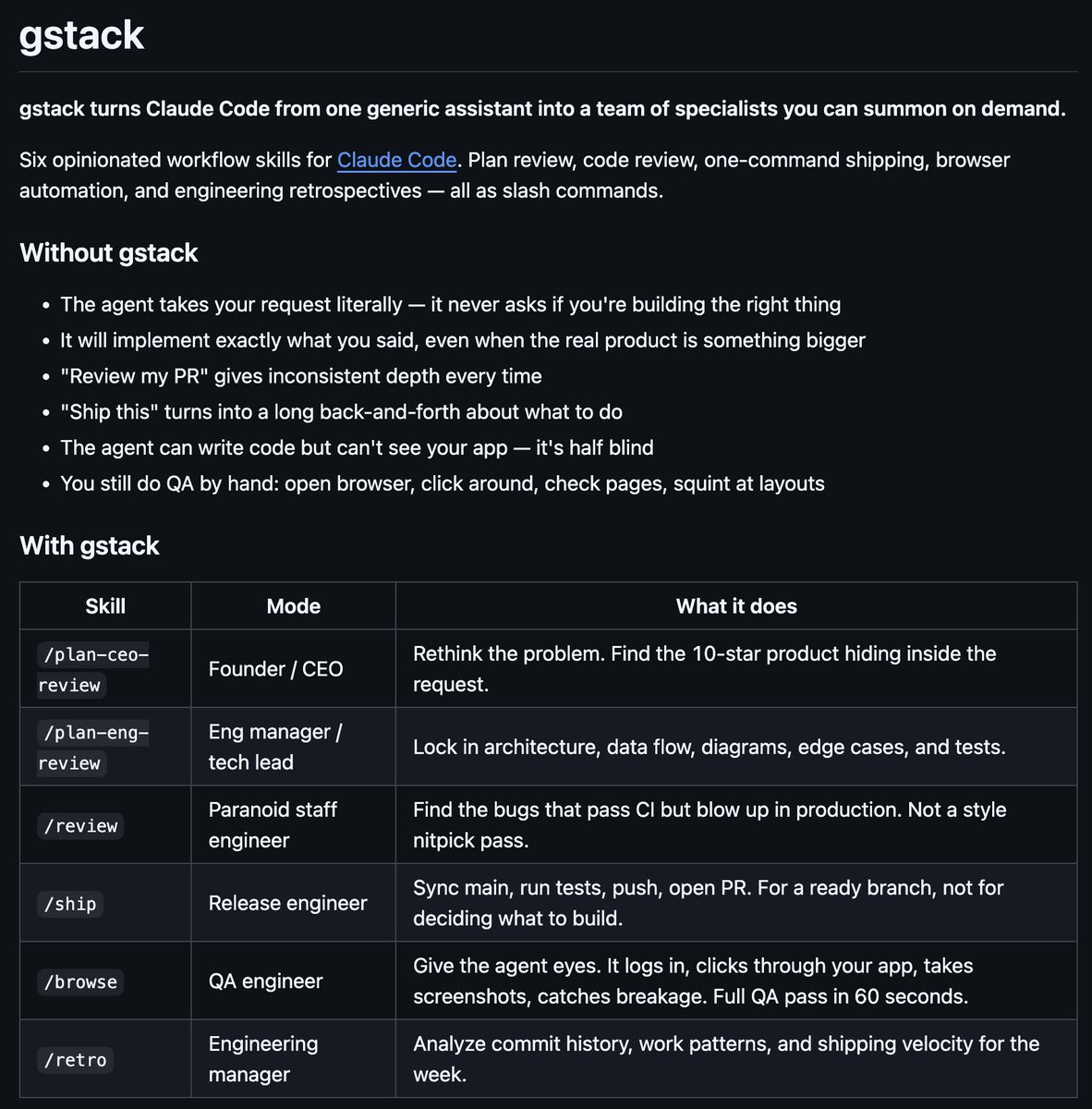

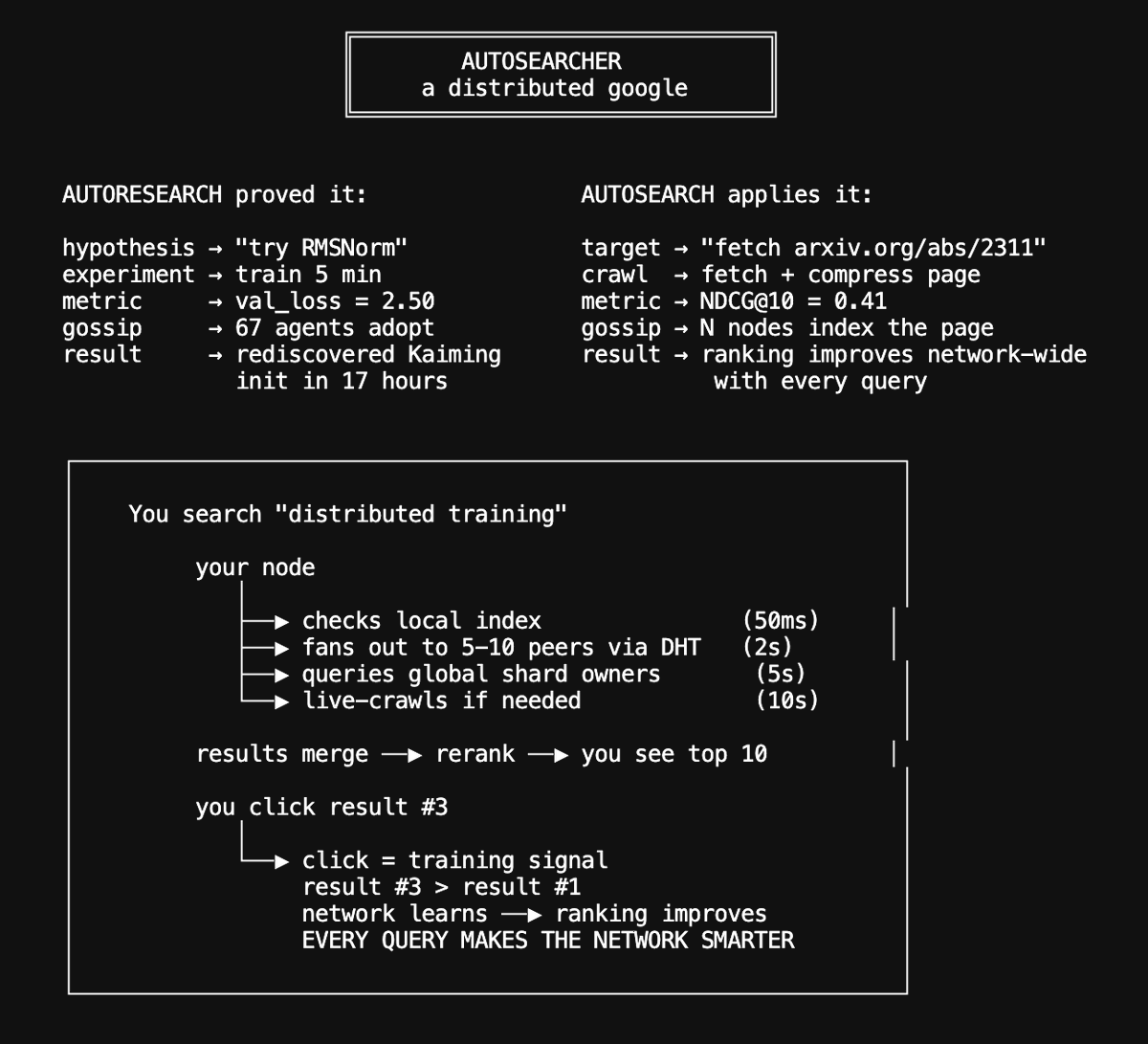

I hooked this up to a peer-to-peer astrophysics researcher agent which gossips and collaborates with other such agents (and your openclaws) to: 1. Learn how to train an astrophysics model (@karpathy's work below) 2. Train a new astrophysics model 3. Use it to write papers 4. Peer agents based on frontier lab models critique it 5. Surface breakthroughs ... and then feed back in the loop ... More agents join, from the browser or the CLI, and run this, the smarter and more exciting breakthroughs would eventually emerge. When these agents are idle, they are also reading daily tech news with their own RSS reader, and commenting on each other's thoughts. And they can also serve the underlying machine's compute to other agents on the network, and earn social credit for being good actors (think BitTorrent). We also prove the agent has the compute it says by cryptographic verification of regular matmul challenges. All you have to do is either go on this website (and it creates an agent which runs from your browser), or install the CLI if you want to give the system more juice. And you are part of likely the first experimental distributed agi thing. This is Day 1, but this is how it starts.. this network is fully peer-to-peer, and, very volatile, but the intelligence here is meant to compound continuously.. agents.hyper.space curl -fsSL agents.hyper.space/cli | bash