Humyn Labs

@humynlabs

Verified (human) intelligence at scale for reliable AI. Diverse experts. Multi-layer QC. Every modality.

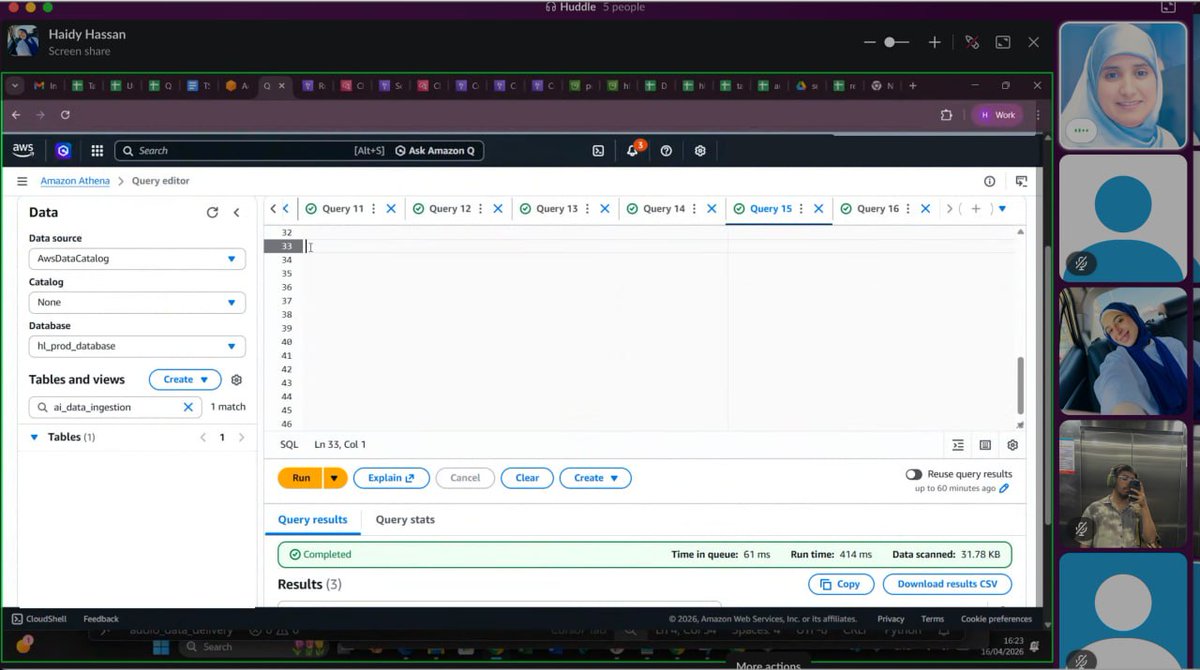

Hey humyns! I am @MaiDiab_, Head of Data at @HumynLabs, and I'm taking over the account today.

A bit about me:
> PhD in Computer Vision
> Originally from Egypt, I currently live in the UK
> My obsession with data started when I was building a 40k image dataset from scratch, annotating it rule by rule, becoming my own QC
> I bring that same obsession to our work here at Humyn Labs

Currently, I am working on building multimodal data across audio, video and language spanning 20+ countries, for use cases ranging from voice LLMs and Physical AI to simulation data.

Throughout the day I'll be sharing what we're building here at Humyn Labs, breaking down some research and answering your questions.

Ask me anything over the next few hours and I'll answer! 👇

Super excited. Let's do this.

@humynlabs @MaiDiab_ How do you measure ‘good data’ internally? Any specific metrics or frameworks? And what tools does your team use for large-scale data annotation and QA?

@humynlabs @MaiDiab_ What do you think is underrepresented when it comes to data? How do you think we can ensure diversity?

@humynlabs @MaiDiab_ What is the core problem you see in robotic training using egocentric RDLS?

@humynlabs @MaiDiab_ What’s your biggest pet peeve when it comes to multimodal AI?

@humynlabs @MaiDiab_ Hi, @MaiDiab_! Humyn uses blockchain for annotator reputation (Proof of Expert). Why that approach, and how does it actually solve the traceability problem that traditional platforms just ignore?

@humynlabs @MaiDiab_ What's a common industry belief about multimodal AI that you think is completely wrong?

@humynlabs @MaiDiab_ What's a non-technical book or hobby that actually makes you a better data scientist?