ollama


Jensen Huang is coming to Interrupt. May 13-14 in SF. Join Jensen and Harrison for a fireside chat to learn where enterprise agents are headed. We'll dive into the LangChain x @nvidia partnership and how Deep Agents, NVIDIA Nemotron models, and the NVIDIA Agent Toolkit enable production-grade claws for the enterprise. Get tickets: interrupt.langchain.com

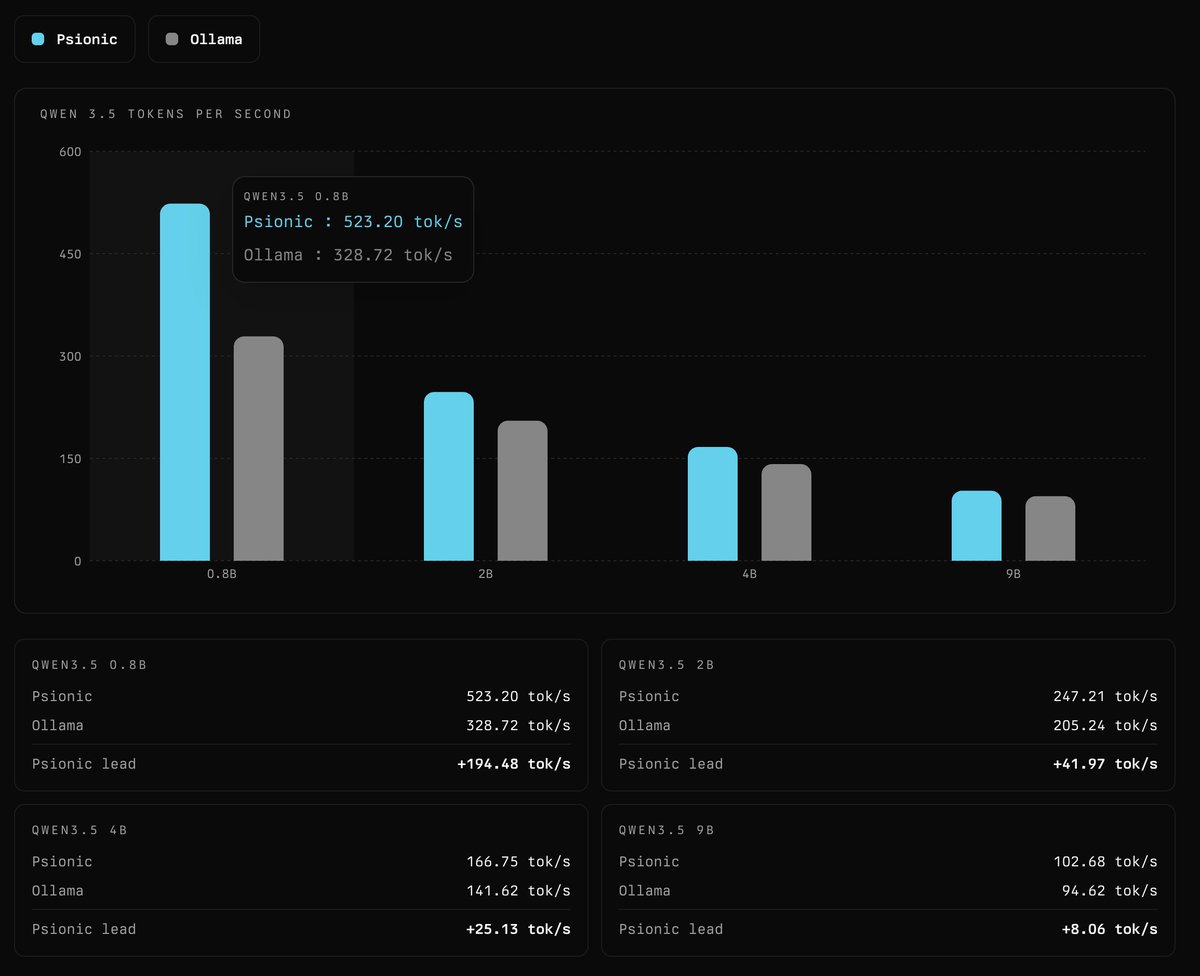

Episode 216: Psionic

"Python sucks. It's time to get the ecosystem off of Python and onto proper languages like Rust. We're going to rewrite PyTorch and everything relevant from Python land in Rust."

We introduce Psionic, our open-source Rust ML framework. It outperforms llama.cpp on local inference of GPT-OSS 20B; reproduces the Percepta blog post "Can LLMs Be Computers?"; and soon will support decentralized model training with compute providers paid in bitcoin.

We'll use Psionic to train a new class of agent-centric executor models called 'Psion'. Psionic and Psion will be 100% open source, open weights, open data, open everything.

Issues & insults welcome: github.com/OpenAgentsInc/…