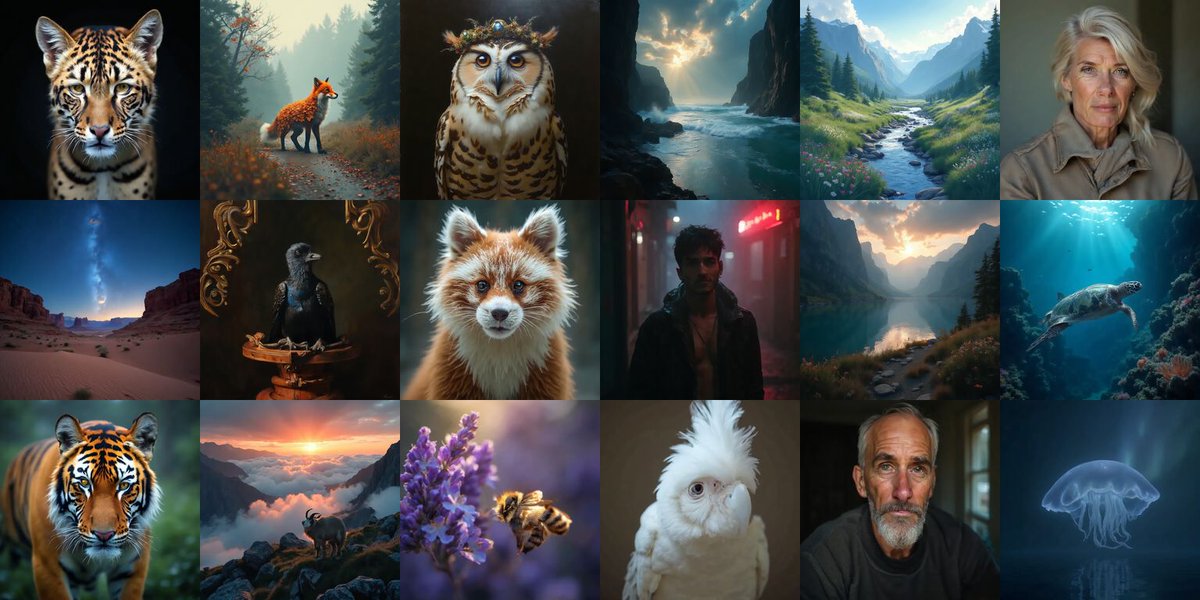

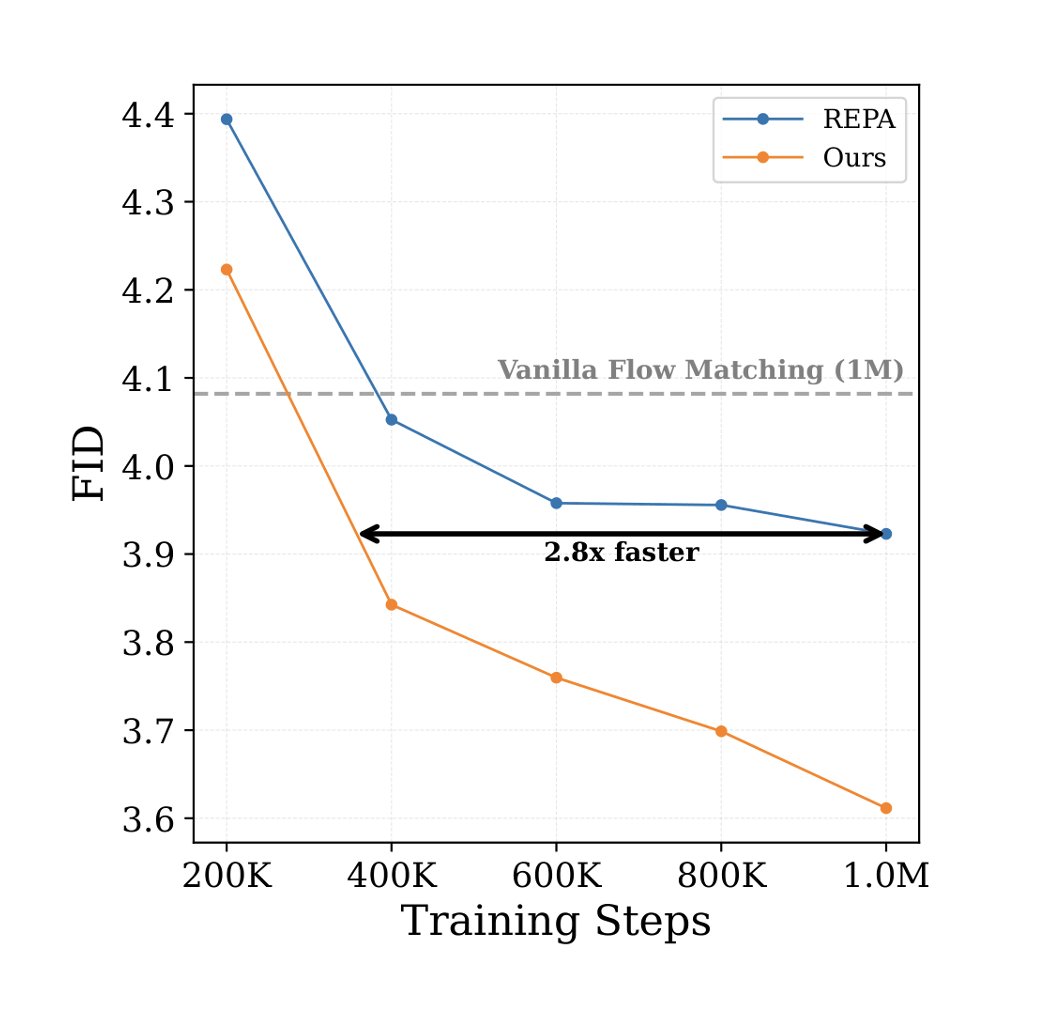

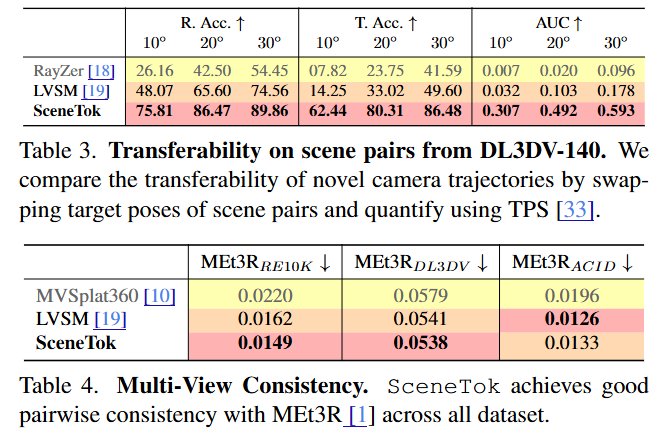

🚀 Excited to share V-Co, a diffusion model that jointly denoises pixels and pretrained semantic features (e.g., DINO).

We find a simple but effective recipe:
1️⃣ Architecture matters a lot → fully dual-stream JiT
2️⃣ CFG needs a better unconditional branch → semantic-to-pixel masking for CFG
3️⃣ The best semantic supervision is hybrid → perceptual-drifting hybrid loss
4️⃣ Calibration is essential → RMS-based feature rescaling

We conducted a systematic study of V-Co. It is highly competitive at comparable scale and outperforms JiT-G/16 (~2B, FID 1.82) with fewer training epochs. 🧵 👇