Big Data Analytics reposted

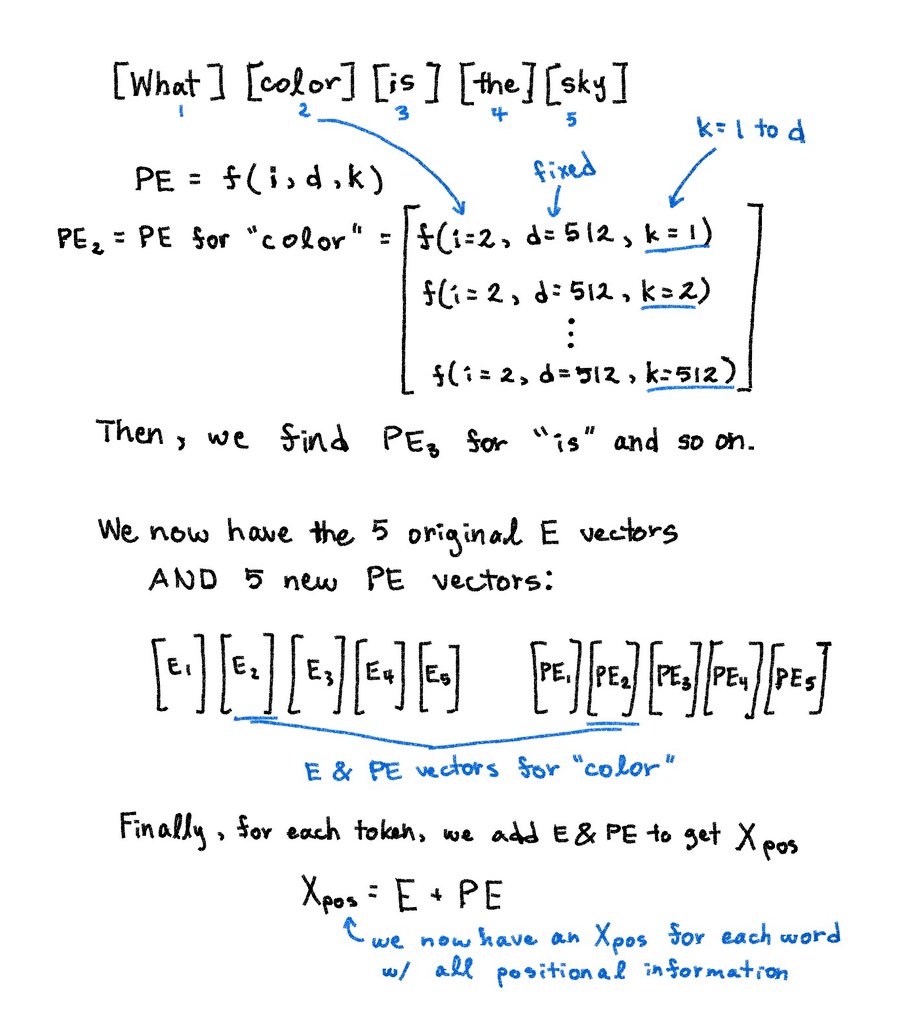

Some madman with a PhD built an open-source, interactive visual encyclopedia for understanding how AI works. Seriously, it's wild, go check it out.

Website: encyclopediaworld.github.io/howaiworks/

Repo in the first comment.
