Major_MB ⭐ retweeted

Major_MB ⭐

3.3K posts


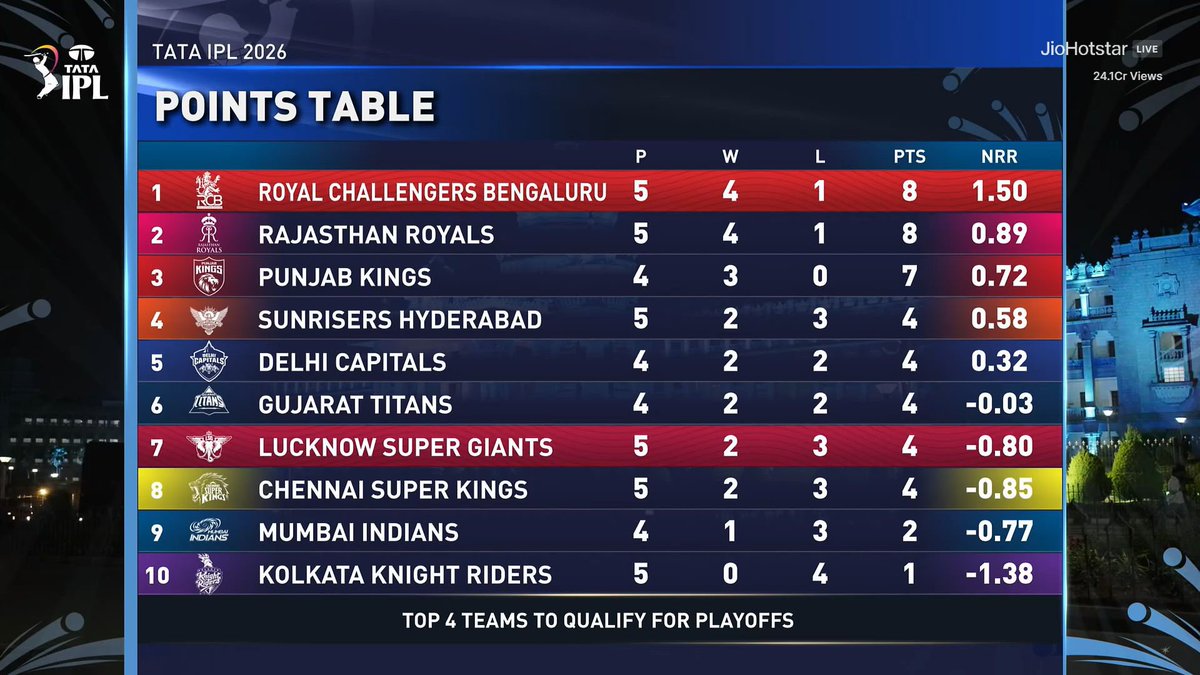

@mufaddal_vohra That was direct... oooch!! Pant!!

First Virat, then Rohit and now Pant!!

injury not good!!


Mental Edit 🔥

From the moment the hero's "1, 2" dips kicked in, they went on an absolute rampage 💥💥💥💥💥💥 @SunRisers


Agentic system design concepts I'd master if I wanted to build AI that doesn't blow up in prod.

Bookmark this.

1. Agent Circuit Breaker

2. Blast Radius Limiter

3. Orchestrator vs Choreography

4. Tool Invocation Timeout

5. Confidence Threshold Gate

6. Context Window Checkpointing

7. Idempotent Tool Calls

8. Dead Letter Queue for Agents

9. LLM Gateway Pattern

10. Semantic Caching

11. Human Escalation Protocol

12. Multi-Agent State Sync

13. Replanning Loop

14. Canary Agent Deployment

15. Agentic Observability Tracing
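
As an illustration of the first item, here is a minimal sketch of an agent circuit breaker. The class name `AgentCircuitBreaker`, its parameters, and the injectable `clock` are all hypothetical, chosen for this example rather than taken from any library. The idea: after a run of consecutive tool failures, stop calling the tool for a cool-down period instead of letting the agent hammer it.

```python
import time


class AgentCircuitBreaker:
    """Hypothetical breaker: opens after max_failures consecutive errors."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock  # injectable so the breaker can be tested without sleeping
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, tool, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise RuntimeError("circuit open: tool call skipped")
            # Cool-down elapsed: allow one trial call (half-open state).
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = tool(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success closes the circuit fully
        return result
```

Wrapping each tool in its own breaker, rather than sharing one, keeps a single flaky tool from disabling the rest.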


MASTERING PLAN FOR LLMs

Large Language Models (LLMs) are the foundation of modern AI systems. They power chatbots, copilots, search engines, automation tools, and intelligent agents. This mastering plan takes you from understanding how LLMs work to building scalable, production-grade AI applications.

STEP 1: UNDERSTAND LLM FUNDAMENTALS

→ What LLMs are and how they work

→ Tokens and tokenization

→ Transformer architecture basics

→ Training vs inference

→ Pretraining and fine-tuning

→ Context windows and limitations

Build a strong conceptual foundation before building applications.

STEP 2: LEARN HOW TO USE LLM APIs

→ Working with OpenAI APIs

→ Prompting via SDKs (JavaScript, Python)

→ Chat vs completion models

→ Temperature, max tokens, top-p

→ Streaming responses

→ Handling API errors and retries

APIs are the entry point to real-world LLM applications.
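
For the "handling API errors and retries" item, a minimal sketch of retrying with exponential backoff and jitter. `TransientAPIError` and `call_with_retries` are hypothetical names for this example; a real SDK raises its own exception types, which you would catch instead.

```python
import random
import time


class TransientAPIError(Exception):
    """Hypothetical stand-in for a rate-limit or timeout error from an SDK."""


def call_with_retries(request, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call request(); on a transient error, wait and retry with backoff."""
    for attempt in range(max_attempts):
        try:
            return request()
        except TransientAPIError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # Delay doubles each attempt; jitter spreads out simultaneous clients.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
```

Only errors that are safe to retry should be caught here; an invalid request will fail the same way every time.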

STEP 3: MASTER PROMPT ENGINEERING

→ Zero-shot prompting

→ Few-shot prompting

→ Chain-of-Thought prompting

→ Role-based prompting

→ Output formatting (JSON, structured data)

→ Prompt optimization techniques

Good prompts = better outputs.
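
To make few-shot and role-based prompting concrete, here is a small sketch that assembles a chat-style message list. The `role`/`content` dictionary shape is an assumption based on the common chat message format, and `build_few_shot_messages` is a hypothetical helper, not part of any SDK.

```python
def build_few_shot_messages(instruction, examples, query):
    """Build a chat message list: system role, worked examples, then the query."""
    messages = [{"role": "system", "content": instruction}]
    for user_text, assistant_text in examples:
        # Each example is shown as a past exchange the model can imitate.
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    messages.append({"role": "user", "content": query})
    return messages


messages = build_few_shot_messages(
    "You label the sentiment of a sentence as positive or negative.",
    [("I loved this match.", "positive"), ("That injury looks bad.", "negative")],
    "What a finish!",
)
```

With an empty `examples` list the same helper produces a zero-shot prompt.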

STEP 4: STRUCTURED OUTPUTS AND TOOL USAGE

→ Function calling

→ Tool integration

→ JSON schema outputs

→ Calling external APIs

→ Building tool-augmented agents

This is how LLMs interact with real systems.
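
A minimal sketch of the application side of function calling: the model emits a JSON tool call, and your code validates it before running anything. The `{"name": ..., "arguments": ...}` shape, the `TOOLS` registry, and the `get_weather` tool are all assumptions made up for this example.

```python
import json


def get_weather(city: str) -> str:
    # Hypothetical tool; a real one would call an external API here.
    return f"Weather lookup requested for {city}"


# Registry mapping tool names to a function and its required argument types.
TOOLS = {"get_weather": {"fn": get_weather, "required": {"city": str}}}


def dispatch_tool_call(raw_call: str) -> str:
    """Parse a JSON tool call, validate its arguments, and run the tool."""
    call = json.loads(raw_call)
    spec = TOOLS.get(call.get("name"))
    if spec is None:
        raise ValueError(f"unknown tool: {call.get('name')!r}")
    args = call.get("arguments", {})
    extra = set(args) - set(spec["required"])
    if extra:
        raise ValueError(f"unexpected arguments: {sorted(extra)}")
    for key, expected_type in spec["required"].items():
        if not isinstance(args.get(key), expected_type):
            raise ValueError(f"argument {key!r} must be {expected_type.__name__}")
    return spec["fn"](**args)
```

Treating the model's output as untrusted input, and rejecting anything outside the registry, is what keeps a malformed or injected call from reaching a real system.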

STEP 5: RETRIEVAL AUGMENTED GENERATION (RAG)

→ What RAG is and why it matters

→ Embeddings and vector databases

→ Semantic search

→ Chunking and indexing data

→ Query pipelines

→ Improving answer accuracy

RAG helps LLMs use your own data.
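
The chunk, embed, and search steps above can be sketched end to end with a toy embedding. Note the loud assumption: `embed` here is just a bag-of-words counter standing in for a real embedding model, and the in-memory list stands in for a vector database. All function names are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text, max_words=40):
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]
```

The retrieved chunks would then be pasted into the prompt as context; swapping `embed` for a real model changes the quality of the ranking but not the shape of the pipeline.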

STEP 6: MEMORY AND CONTEXT MANAGEMENT

→ Short-term vs long-term memory

→ Conversation history management

→ Summarization techniques

→ Vector memory systems

→ Context window optimization

Memory enables more intelligent and personalized systems.
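
One simple form of conversation history management is to keep the system message plus as many of the most recent turns as fit a token budget. The sketch below assumes a crude one-token-per-word estimate (`estimate_tokens` is a hypothetical placeholder; a real tokenizer will give different counts).

```python
def estimate_tokens(text):
    # Crude assumption: roughly one token per whitespace-separated word.
    return len(text.split())


def trim_history(messages, budget):
    """Keep system messages, then the newest turns that fit within budget."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    used = sum(estimate_tokens(m["content"]) for m in system)
    kept = []
    for message in reversed(rest):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    return system + kept[::-1]  # restore chronological order
```

Turns that fall off the end could be summarized into a single message instead of dropped, which is where the summarization item above comes in.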

STEP 7: BUILDING AI AGENTS

→ What AI agents are

→ ReAct pattern

→ Plan-and-execute systems

→ Tool-using agents

→ Autonomous workflows

→ Multi-agent systems

Agents turn LLMs into decision-making systems.

STEP 8: LLM FRAMEWORKS AND TOOLS

→ LangChain

→ LlamaIndex

→ AutoGen

→ Semantic Kernel

→ Prompt orchestration tools

Frameworks help you scale development faster.

STEP 9: EVALUATION AND TESTING

→ Evaluating LLM outputs

→ Prompt testing

→ Benchmarking

→ Human-in-the-loop evaluation

→ Automated evaluation pipelines

You cannot improve what you don’t measure.
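
A minimal sketch of an automated evaluation pipeline: run a fixed set of prompts through the model and score exact matches against expected answers. Here `model` is any callable from prompt to text, and both function names are hypothetical. Exact match is only one possible metric and suits short, closed-form answers.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(text.lower().split())


def exact_match_accuracy(model, test_cases):
    """Fraction of (prompt, expected) pairs the model answers exactly."""
    if not test_cases:
        return 0.0
    hits = sum(
        normalize(model(prompt)) == normalize(expected)
        for prompt, expected in test_cases
    )
    return hits / len(test_cases)
```

Running this on every prompt change gives a regression signal, so a prompt tweak that fixes one case and breaks three gets caught before it ships.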

STEP 10: SAFETY AND GUARDRAILS

→ Handling hallucinations

→ Content filtering

→ Prompt injection protection

→ Rate limiting

→ Moderation systems

→ Responsible AI practices

Safety is critical in production AI systems.
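
For the rate limiting item, one common approach is a token bucket: each request spends a token, and tokens refill at a fixed rate up to a cap. The sketch below is a single-process illustration with hypothetical names; it is not thread-safe, and a production limiter would usually live in a shared store.

```python
import time


class TokenBucket:
    """Allow bursts up to capacity, refilling at rate tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock  # injectable so tests do not need to sleep
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self, cost=1.0):
        now = self.clock()
        # Refill for the time elapsed since the last check, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

Passing the request's estimated token count as `cost` lets the same bucket limit LLM token usage rather than request count.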

STEP 11: PERFORMANCE AND OPTIMIZATION

→ Latency optimization

→ Caching strategies

→ Token cost optimization

→ Model selection strategies

→ Batch processing

→ Streaming and responsiveness

Efficient systems reduce cost and improve UX.
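
One of the simplest caching strategies is an exact-match response cache keyed on the model name and prompt. In the sketch below, `ResponseCache` and the `generate(model, prompt)` callable are hypothetical; this is not semantic caching, which would match on embedding similarity instead of a hash.

```python
import hashlib


class ResponseCache:
    """Exact-match cache: identical (model, prompt) pairs reuse one response."""

    def __init__(self, generate):
        self.generate = generate  # callable(model, prompt) -> response text
        self.store = {}
        self.hits = 0

    def _key(self, model, prompt):
        # Collapse whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get(self, model, prompt):
        key = self._key(model, prompt)
        if key in self.store:
            self.hits += 1
            return self.store[key]
        response = self.generate(model, prompt)
        self.store[key] = response
        return response
```

This only makes sense when repeated prompts should get the same answer, for example at temperature 0; cached entries would also need an expiry policy in practice.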

STEP 12: DEPLOYMENT AND SCALING

→ API deployment strategies

→ Serverless vs microservices

→ Load balancing

→ Scaling LLM applications

→ Monitoring usage and costs

→ Multi-region deployments

Production systems must be reliable and scalable.

STEP 13: BUILD REAL-WORLD PROJECTS

→ AI chatbot with memory

→ Document Q&A system (RAG)

→ AI coding assistant

→ AI content generator

→ AI automation agent

→ Multi-agent collaboration system

Projects turn knowledge into real-world skills.

LLMS HANDBOOK

Get the complete LLMs Handbook with deep explanations, prompt engineering strategies, RAG systems, AI agents, and production-ready AI architectures:

codewithdhanian.gumroad.com/l/haeit
