Pinned Tweet

jessie@Fat-Garage 🦇🔊

3.2K posts

@JESSCATE93

https://t.co/lkQuFy0vkJ (WeChat Official Account: 胖车库 / Fat Garage) / student of intelligence / Bitcoin / roam / activate for life 🏃‍♀️

somewhr · Joined April 2016

1.3K Following · 938 Followers

@Jamesward_eth now it's back haha, I didn't realize I'd closed it

@JESSCATE93 Hey jessie, I'm looking to make a deal for your ENS 'conaw.eth'. Can you follow me, please? Thanks a lot!

jessie@Fat-Garage 🦇🔊 reposted

New 3h31m video on YouTube:

"Deep Dive into LLMs like ChatGPT"

This is a general-audience deep dive into the Large Language Model (LLM) AI technology that powers ChatGPT and related products. It covers the full training stack of how the models are developed, along with mental models of how to think about their "psychology", and how to make the best use of them in practical applications.

We cover all the major stages:

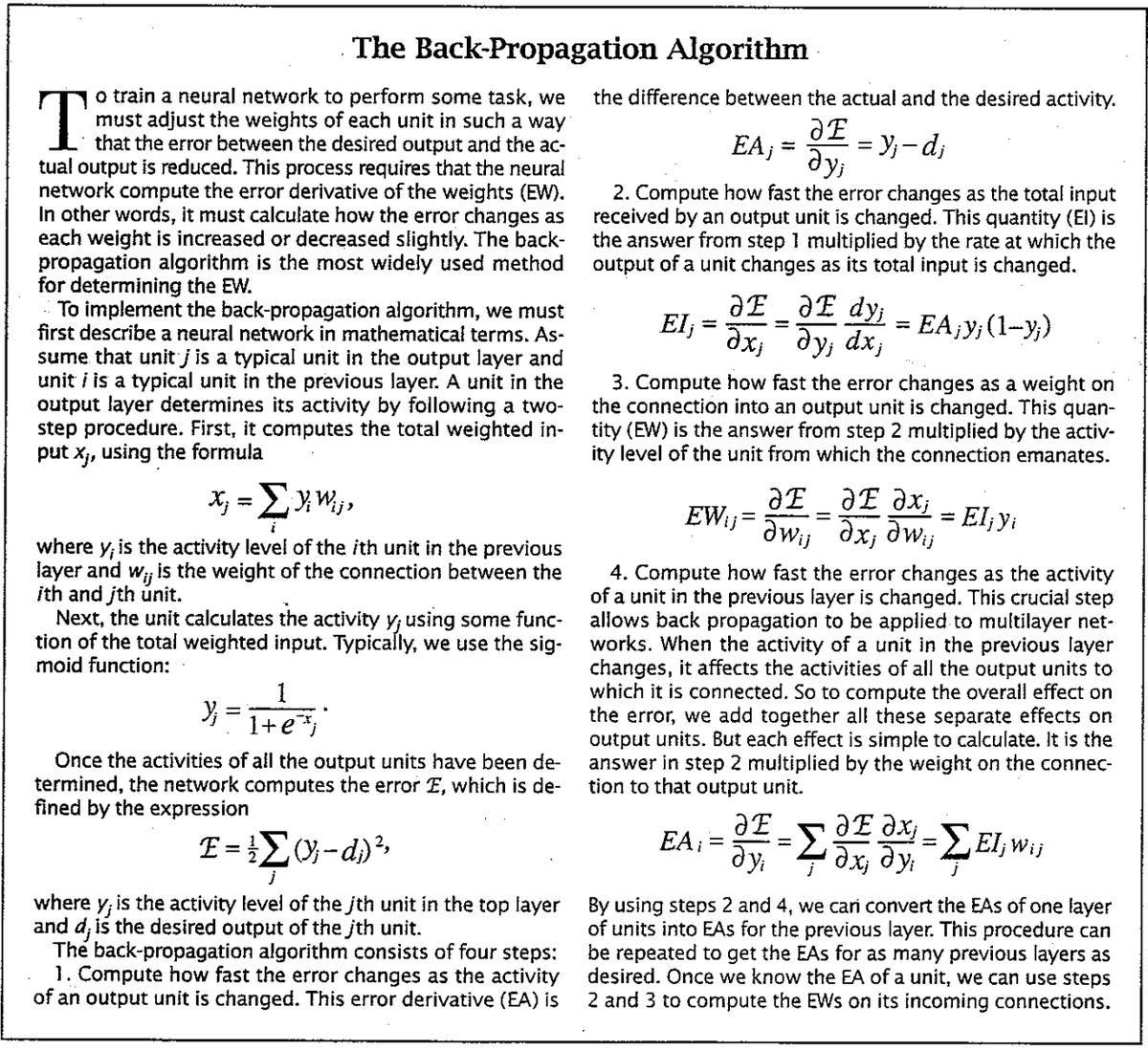

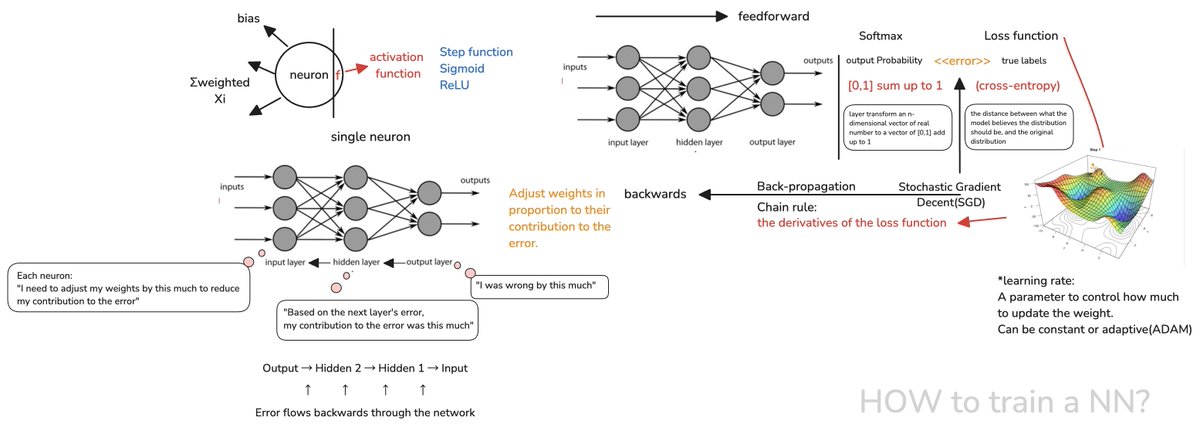

1. pretraining: data, tokenization, Transformer neural network I/O and internals, inference, GPT-2 training example, Llama 3.1 base inference examples

2. supervised finetuning: conversations data, "LLM Psychology": hallucinations, tool use, knowledge/working memory, knowledge of self, models need tokens to think, spelling, jagged intelligence

3. reinforcement learning: practice makes perfect, DeepSeek-R1, AlphaGo, RLHF.

I designed this video for the "general audience" track of my videos, which I believe are accessible to most people, even without technical background. It should give you an intuitive understanding of the full training pipeline of LLMs like ChatGPT, with many examples along the way, and maybe some ways of thinking around current capabilities, where we are, and what's coming.

(Also, I already have one "Intro to LLMs" video from ~a year ago, but that is just a re-recording of a random talk, so I wanted to loop around and do a much more comprehensive version of this topic. They can still be combined, as the talk goes a lot deeper into other topics, e.g. LLM OS and LLM Security.)

Hope it's fun & useful!

youtube.com/watch?v=7xTGNN…

jessie@Fat-Garage 🦇🔊 reposted

Incredible lineup of talks at the #FutureOfText Symposium. Thanks so much to the organizers @liquidizer @dgrigar.

In the pics: Ge Li from @DSE_Unibo, @KenPfeuffer from @AarhusUni_int and Ryan House from @UWMadison.

Great support by @SloanFoundation and @wsu.

my fav project for 4 months now: consulted evildr many times and bought the components, but still haven't gotten it to work. Now it's open-sourced!! 🥳

github.com/OscarWilmerdin…

@juand4bot It's cool bro, I'm also a random 👂 here if you wanna talk about your startup!