Pinned Tweet

PMD KGeN

3.1K posts

@PMD300

web3 addict, Messi |inter Miami⚽ and also a $crypto Trader 🤟

MARS · Joined December 2022

1.9K Following · 314 Followers

@Crypto4bailout Trading ain't the problem; it's the losses and constant market manipulation kicking the shit outta the boys, bro

@justicechibueze It will definitely dip, but that's healthy: circulating and total supply can be calculated, which gives investors a clearer picture

PMD KGeN retweeted

🟩 San Francisco next.

@ishank20, @anoushkaananth, and @ArunabhRohatgi are heading to @Official_GDC and @NVIDIAGTC 2026.

Meeting creators, partners and the people building what comes next.

See you all around SF soon.

PMD KGeN retweeted

🟩VeriFi Seoul After Hours brought the builders, community, and partners together.

Appreciate everyone who joined us. Seoul soon!

@B__Harvest @Xangle_official @CoinNess_

PMD KGeN retweeted

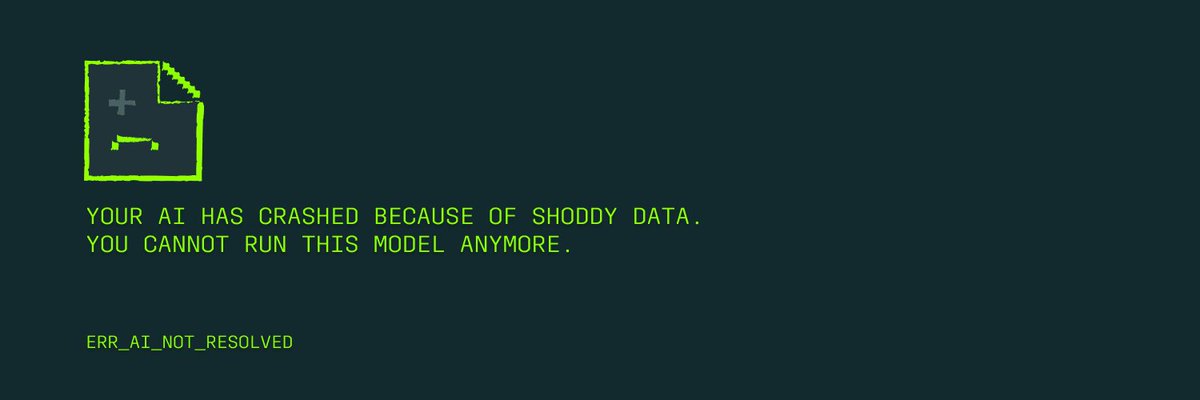

VeriFi × AI: Why is AI training quietly breaking?

We are in a space where no one wants to address the obvious, which makes it even more important for protocols like ours to do so.

It is an open secret that AI training is not what it used to be, for several reasons: shoddy data, drops in quality, and more.

But let's understand this better:

1. The AI Training Crisis No One Likes Talking About

AI is scaling faster than trust.

While model sizes, compute, and funding have exploded, the quality of training data has quietly gone in the opposite direction. The assumption that “more data = better AI” is no longer holding up.

Recent industry studies suggest:

> Over 60% of training data used in modern AI systems is either duplicated, weakly labeled, or unverifiable

> Teams now spend up to 80% of AI development time cleaning and validating data, not training models

> Synthetic and scraped data loops are causing model collapse, where AI is increasingly trained on other AI outputs

The result?

Smarter-looking models with shallower intelligence, higher hallucination rates, and declining real-world reliability.

AI isn’t failing because of compute; it’s failing because truth no longer scales by default.

2. The real bottleneck is trust, not intelligence

Most AI pipelines today rely on anonymous data contributors and centralized datasets with opaque provenance and no clear ownership, attribution, or accountability.

So when data goes wrong, there’s no way to tell who created it or whether a real human ever verified it.

The cherry on top? You cannot even check if it has been tampered with or reused incorrectly.

This creates three systemic problems:

> No provenance: data lineage is unclear

> No incentives: contributors aren’t rewarded for quality

> No verification: trust is assumed, not proven

In short, AI is being trained on inputs it cannot audit.

3. Why we need VeriFi as a norm, not a feature

The lesson here is to call a spade a spade.

That being said, verification must be:

> Programmatic, not manual

> Persistent, not one-time

> Composable, so other systems can build on it

KGeN's "VeriFi" introduces a simple shift:

"Data should not just exist.

It should arrive verified, attributable, and reputation-weighted."

At a protocol level, this enables:

> Human-verified data contributions

> On-chain proofs of authenticity and ownership of the data

> Reputation systems that reward consistency and accuracy

Instead of scraping the internet blindly, AI models and systems can source intelligence from verified human networks, where quality compounds over time.
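To make the three ideas above concrete, here is a minimal Python sketch. It is not KGeN's or VeriFi's actual implementation: every name in it (`fingerprint`, `Contribution`, `submit`, `update_reputation`, `weighted_label`) is hypothetical, a plain SHA-256 hash stands in for an on-chain proof, and the reputation rule is a simple moving average chosen only for illustration.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(payload: bytes) -> str:
    """Content hash that changes if the data is altered in any way."""
    return hashlib.sha256(payload).hexdigest()


@dataclass
class Contribution:
    contributor: str  # hypothetical contributor ID
    payload: bytes    # the raw data item
    digest: str       # fingerprint recorded at submission time

    def is_intact(self) -> bool:
        """True only if the payload still matches its recorded fingerprint."""
        return fingerprint(self.payload) == self.digest


def submit(contributor: str, payload: bytes) -> Contribution:
    """Record who submitted the data together with its fingerprint."""
    return Contribution(contributor, payload, fingerprint(payload))


def update_reputation(score: float, accepted: bool, rate: float = 0.1) -> float:
    """Moving average of review outcomes; stays in [0, 1] if score starts there."""
    return (1 - rate) * score + rate * (1.0 if accepted else 0.0)


def weighted_label(votes: dict[str, str], reputation: dict[str, float]) -> str:
    """Pick the label with the most total reputation behind it."""
    totals: dict[str, float] = {}
    for contributor, label in votes.items():
        totals[label] = totals.get(label, 0.0) + reputation.get(contributor, 0.0)
    return max(totals, key=totals.get)
```

In this sketch, a contribution whose payload is changed after submission fails `is_intact()`, and one consistently accurate contributor can outweigh several low-reputation ones in `weighted_label`. That is one simple way quality could compound over time.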

4. The Bigger Picture

The next phase of AI won’t be defined by who trains the largest model, but by who controls the quality of truth flowing into machines.

VeriFi exists because AI can no longer afford to guess what to trust. KGeN exists because trust, once verified, needs an open protocol to scale.

PMD KGeN retweeted

🟩 Welcome back to Reward Academy.

Today, intern’s testing a new task and showing you how to earn KCash and redeem it for real rewards on @KStoreGlobal.

🟩 If you’re building, build where the demand is.

@ishank20 chats with @galileowilson about the three areas KGeN is focusing on!
