Arvind

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

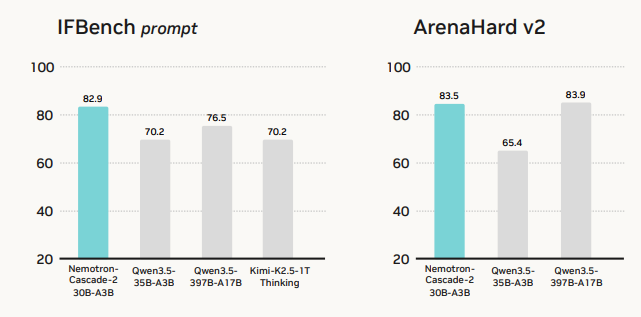

Thank you to everyone in the community who is testing and using Nemotron models. It's great to see Nemotron-Cascade-2, Nemotron-3-Super and Nemotron-3-Nano trending on HF. The Nemotron team is working hard to incorporate all your feedback into Nemotron 4. And yes, Nemotron 3 Ultra is still on track for release. huggingface.co/models?pipelin…

LiteLLM HAS BEEN COMPROMISED, DO NOT UPDATE. We just discovered that LiteLLM PyPI release 1.82.8 has been compromised: it contains litellm_init.pth with base64-encoded instructions to send all the credentials it can find to a remote server and to self-replicate. Link below.
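
For anyone who wants a quick local check, here is a minimal sketch that looks for a file named litellm_init.pth in the current interpreter's site-packages directories. The file name comes from the post; the assumption that it would land in site-packages (where Python's `site` module processes `.pth` files at startup) is mine, and the helper names `candidate_dirs` and `find_suspect_files` are hypothetical. A clean result does not prove an environment is safe.

```python
import site
import sysconfig
from pathlib import Path

# File name as reported in the post; everything else here is an assumption.
SUSPECT_NAME = "litellm_init.pth"


def candidate_dirs():
    """Collect site-packages directories for the running interpreter."""
    dirs = set()
    # site.getsitepackages can be absent in some older virtualenv setups.
    getter = getattr(site, "getsitepackages", None)
    if getter is not None:
        dirs.update(getter())
    dirs.add(site.getusersitepackages())
    dirs.add(sysconfig.get_paths()["purelib"])
    return [Path(d) for d in dirs if d and Path(d).is_dir()]


def find_suspect_files(dirs, name=SUSPECT_NAME):
    """Return paths in `dirs` that contain a file called `name`."""
    return sorted(Path(d) / name for d in dirs if (Path(d) / name).is_file())


if __name__ == "__main__":
    hits = find_suspect_files(candidate_dirs())
    if hits:
        for path in hits:
            print(f"FOUND: {path}")
    else:
        print(f"No {SUSPECT_NAME} found in the scanned directories.")
```

This only scans the interpreter it runs under, so it would need to be run once per virtual environment.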