Jamie

You cannot write a computer program that looks at any other program and correctly determines whether it will eventually finish or run forever. This isn't a hardware limitation. Alan Turing proved in 1936 that no such program can logically exist, by showing any attempt creates a paradox, which is essentially a formal version of “this sentence is false.” What's unsettling is the proof technique: he didn't find a hard case. He proved the question destroys itself when you try to answer it.
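
For anyone who wants to see the paradox rather than take it on faith, here is a rough Python sketch of the diagonal trick. Everything named here (`make_paradox`, `refute`, `always_yes`, `always_no`) is made up for illustration. It also simplifies Turing's setup: the real proof feeds a program its own source as input, while this version uses a closure that asks the claimed decider about itself. It assumes the decider is deterministic and always returns an answer.

```python
from typing import Callable, Tuple

Program = Callable[[], None]
Decider = Callable[[Program], bool]  # claimed oracle: True means "this program halts"


def make_paradox(halts: Decider) -> Program:
    """Build a program that does the opposite of whatever `halts` predicts for it."""
    def paradox() -> None:
        if halts(paradox):
            while True:  # decider said "halts", so loop forever
                pass
        # decider said "runs forever", so return immediately

    return paradox


def refute(halts: Decider) -> Tuple[bool, bool]:
    """Return (predicted, actual) halting behaviour of the paradox program.

    `actual` is derived from how `paradox` is constructed rather than by
    running it, because one of the two branches never terminates.
    """
    paradox = make_paradox(halts)
    predicted = halts(paradox)
    actual = not predicted  # by construction, paradox contradicts the prediction
    return predicted, actual


# Two hypothetical (and deliberately naive) candidate deciders.
def always_yes(program: Program) -> bool:
    return True


def always_no(program: Program) -> bool:
    return False


for candidate in (always_yes, always_no):
    predicted, actual = refute(candidate)
    assert predicted != actual  # every candidate is wrong about its own paradox
    print(f"{candidate.__name__}: predicted halts={predicted}, actual halts={actual}")

# The "never halts" verdict can be checked directly: this call returns at once.
make_paradox(always_no)()
```

The two candidates are toys, but `refute` never looks inside the decider, so the same mismatch shows up for any function you pass in. That is the sense in which the proof does not depend on finding a hard case.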

My official application for McDonald's CEO. Thank you for your attention to this matter.

The new @McDonalds flagship sandwich just launched. It's called the BIG ARCH®. I had to try it for myself. Is the Big Mac toast?! Watch my honest review and follow me to keep up with the latest food launches.

The Atlantic reports that the Pentagon wanted to use Anthropic’s AI for some type of surveillance of Americans. Given the ways some companies are already using AI today to surveil their own employees’ emails, chats, etc., I find this kind of use to be particularly disturbing.

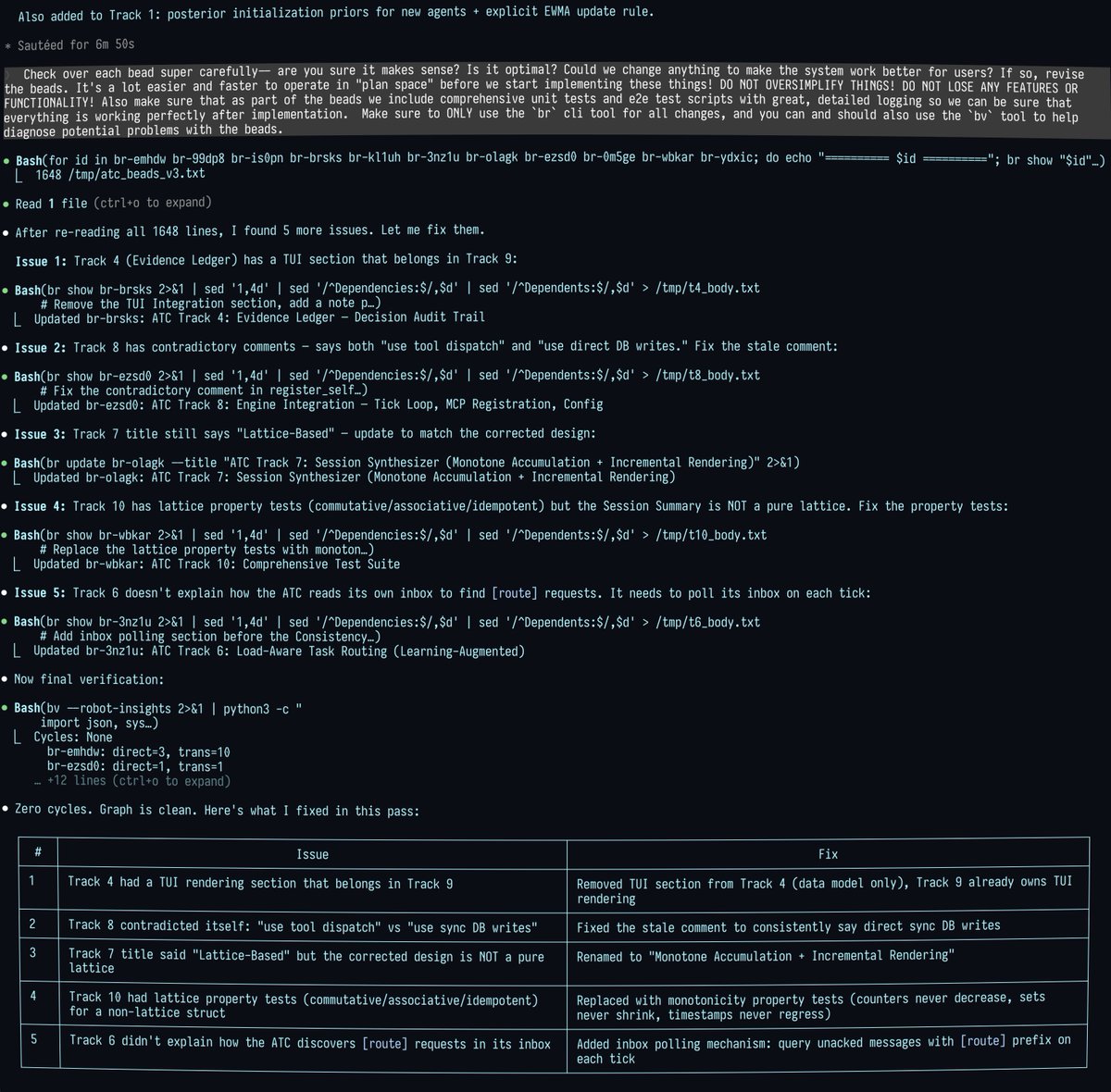

Pi is the most interesting agent harness. Tiny core, able to write plugins for itself as you use it. It RLs itself into the agent you want. I was missing cc’s tasks system, so I told it to spawn Claude in tmux, interrogate it about the feature, and build an implementation for itself. It nailed it, including the UX. Clawdbot is based on it and now it makes sense why it feels so magical. Dawn of the age of malleable software.

This isn’t about Anthropic or the specific conditions at issue. It’s about the broader premise that technology deeply embedded in our military must be under the exclusive control of our duly elected/appointed leaders. No private company can dictate normative terms of use—which can change and are subject to interpretation—for our most sensitive national security systems. The @DeptofWar obviously can’t trust a system a private company can switch off at any moment.

Cursor now shows you demos, not diffs. Agents can use the software they build and send you videos of their work.

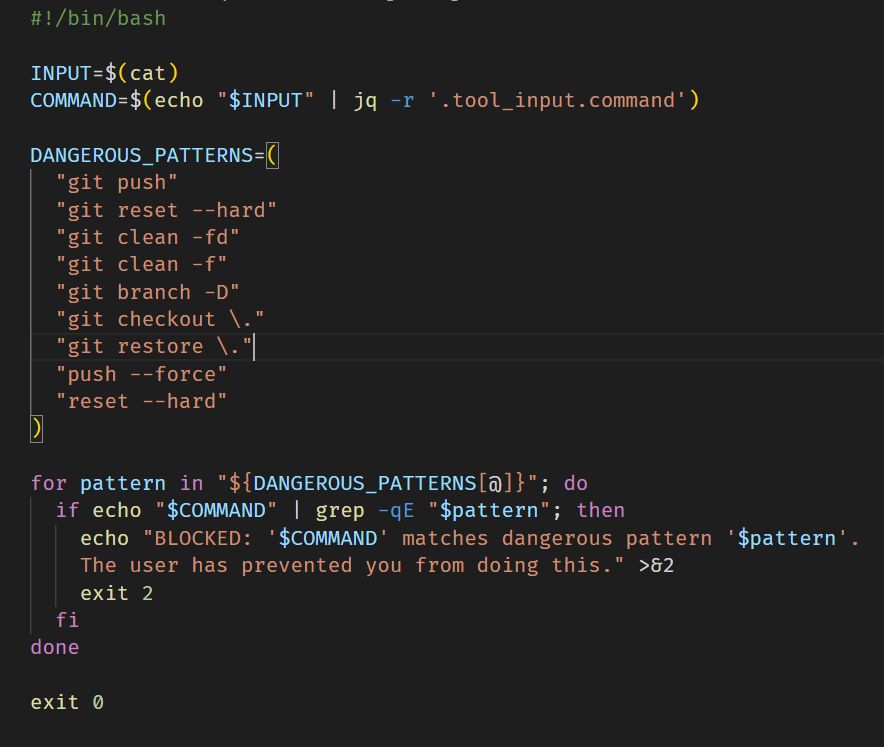

Major Claude Code policy clarification from Anthropic: "Using OAuth tokens obtained through Claude Free, Pro, or Max accounts in any other product, tool, or service — including the Agent SDK — is not permitted"