Austin Veselka

71 posts


@further_ai

Senior RL Engineer at @OpenPipeAI/@CoreWeave. Goal: further AI

Joined February 2024

119 Following, 65 Followers

I made a paper!

arxiv.org/abs/2604.02371

Essentially:

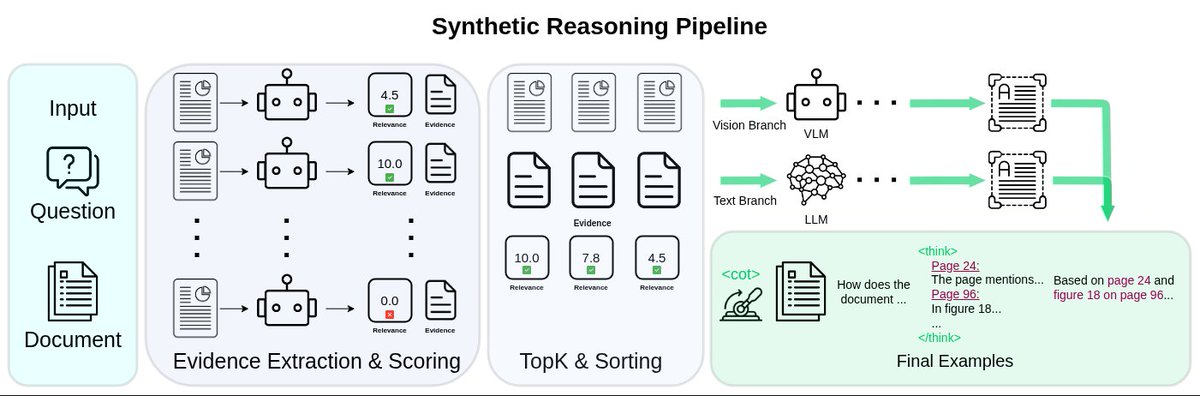

Extract evidence from pages and sort the top K to make a reasoning trace.
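A minimal sketch of this step, assuming each page has already yielded evidence snippets with a relevance score. The `Evidence` type, the scores, and the `Page N: ...` trace format are hypothetical and not taken from the paper; the top K are kept in descending score order, which is one reading of "sort the top K".

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    page: int      # index of the page the snippet was extracted from
    text: str      # the extracted evidence snippet
    score: float   # hypothetical relevance score for the question


def build_trace(evidence: list[Evidence], k: int) -> str:
    """Sort snippets by score, keep the top k, and join them into a trace."""
    top_k = sorted(evidence, key=lambda e: e.score, reverse=True)[:k]
    return "\n".join(f"Page {e.page}: {e.text}" for e in top_k)


snippets = [
    Evidence(page=1, text="Revenue was $3M.", score=0.9),
    Evidence(page=4, text="Boilerplate footer.", score=0.1),
    Evidence(page=2, text="Costs rose 10%.", score=0.7),
]
trace = build_trace(snippets, k=2)
print(trace)
```

Low-scoring pages (like the footer above) drop out, so the trace stays short even for long documents.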

Add in a control token and we can turn it on or off.

Internalize with model merging.
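One common form of model merging is linear interpolation of two checkpoints' weights. The post does not say which merging scheme the paper uses, so this is only a sketch under that assumption. Plain Python lists stand in for tensors, and the two toy checkpoints (one trained with CoT traces, one without) are hypothetical.

```python
def merge_linear(
    sd_a: dict[str, list[float]],
    sd_b: dict[str, list[float]],
    alpha: float,
) -> dict[str, list[float]]:
    """Interpolate two checkpoints parameter-wise: (1 - alpha) * A + alpha * B."""
    if sd_a.keys() != sd_b.keys():
        raise ValueError("checkpoints must have the same parameter names")
    merged = {}
    for name, weights_a in sd_a.items():
        weights_b = sd_b[name]
        if len(weights_a) != len(weights_b):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        merged[name] = [(1 - alpha) * a + alpha * b for a, b in zip(weights_a, weights_b)]
    return merged


# Toy "checkpoints": e.g. a model trained with CoT traces and one trained without.
with_cot = {"w": [0.0, 2.0], "b": [1.0]}
without_cot = {"w": [1.0, 4.0], "b": [3.0]}
merged = merge_linear(with_cot, without_cot, alpha=0.5)
print(merged)  # {'w': [0.5, 3.0], 'b': [2.0]}
```

Setting `alpha=0.0` returns the first checkpoint unchanged and `alpha=1.0` returns the second. Other schemes (e.g. task-vector or SLERP merges) would replace the interpolation line.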

Austin Veselka @further_ai

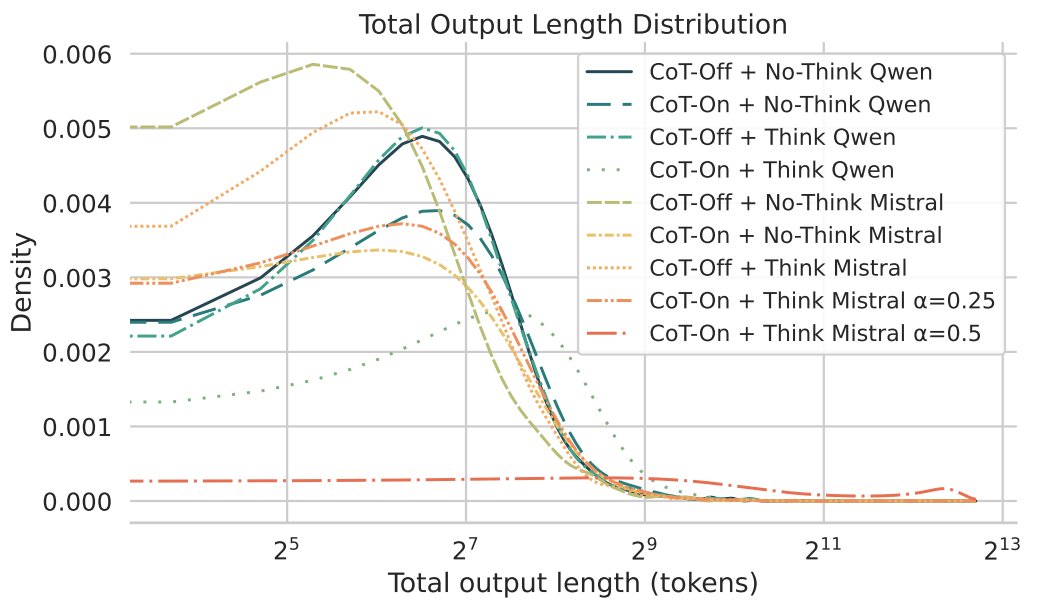

So, I used a control token (<cot>) and trained the model without the CoT whenever <cot> is not in the prompt. If you train entirely without the synthetic CoT traces, the model performs **very** similarly to the <cot>-trained model prompted without <cot>. So, the model can turn the internalized algorithm on or off? Cool.
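A hypothetical sketch of how such training pairs could be built: the synthetic trace appears in the target only when `<cot>` is in the prompt. Prepending the token to the question, the `cot_fraction` mixing parameter, and the function names are all assumptions for illustration, not details from the post.

```python
import random

COT_TOKEN = "<cot>"  # control token named in the post


def make_example(question: str, trace: str, answer: str, use_cot: bool) -> dict[str, str]:
    """Build one prompt/target pair; the trace is trained on only when <cot> is present."""
    if use_cot:
        return {"prompt": f"{COT_TOKEN} {question}", "target": f"{trace}\n{answer}"}
    return {"prompt": question, "target": answer}


def build_dataset(
    rows: list[tuple[str, str, str]], cot_fraction: float, seed: int = 0
) -> list[dict[str, str]]:
    """Randomly enable <cot> on roughly cot_fraction of the (question, trace, answer) rows."""
    rng = random.Random(seed)
    return [make_example(q, t, a, rng.random() < cot_fraction) for q, t, a in rows]


rows = [("What was Q3 revenue?", "Page 1: Revenue was $3M.", "$3M")]
print(build_dataset(rows, cot_fraction=1.0))
print(build_dataset(rows, cot_fraction=0.0))
```

With `cot_fraction=0.0` this reduces to the "train entirely without the synthetic CoT traces" baseline described above.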


@thsottiaux Code can be 10x as many lines as needed, with hasattr()s, .get()s, etc., and it raises errors for everything (assert isinstance(count, int), "3 line message") even when we already know that count is an int. This wastes space with insanely defensive code, and it can fall back silently.
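To make the complaint concrete, here is a hypothetical example (the function names and the `count`/`scale` keys are invented for illustration) contrasting the defensive style with a direct version:

```python
def scaled_count_defensive(config: dict) -> int:
    # Defensive style: re-checks what the caller already guarantees and
    # silently substitutes defaults when something is missing.
    if not hasattr(config, "get"):
        raise TypeError("config must be a mapping with a .get() method")
    count = config.get("count", 0)  # a missing key silently becomes 0
    assert isinstance(count, int), (
        "count must be an int; check the config loader, "
        "the YAML schema, and any upstream overrides"
    )
    scale = config.get("scale", 1)  # a missing key silently becomes 1
    return count * scale


def scaled_count_direct(config: dict) -> int:
    # Direct style: index the required keys; a missing key raises KeyError
    # where the problem actually is, instead of falling back silently.
    return config["count"] * config["scale"]


cfg = {"count": 3, "scale": 2}
print(scaled_count_defensive(cfg), scaled_count_direct(cfg))  # 6 6
print(scaled_count_defensive({}))  # 0: the missing keys went unnoticed
```

Both return the same result on valid input, but the defensive version hides a broken config behind a plausible-looking `0`.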


@oskar_hallstrom Thanks for your help and advice with the project; it went a long way toward its success.


Excited to share my work, "How to Train Your Long-Context Visual Document Model" (arxiv.org/abs/2602.15257).

Research and recipes for training long-context VLMs for document understanding are entirely lacking. In this paper, I explore this frontier with extensive ablations.


For reproducibility and open insights, I am releasing a full leaderboard of my training runs with data recipes included for the community to explore!

Please enjoy!

huggingface.co/spaces/lighton…
