Muhammad Ali

291 posts

@get_muhammad

AI Agent Developer | Building Scalable Apps & Products | MLOps & Model Deployment

Islamabad, joined December 2025

109 Following, 3 Followers

If you’re starting AI today, learn this order:

Python

Data Handling

Machine Learning

Deep Learning

Build Projects

#buildinpublic

You don’t need a PhD to start in AI.

You need:

• Curiosity

• Consistency

• Real projects

#buildinpublic

The real power of AI isn’t answers.

It’s decision-making at scale.

#MachineLearning #DataScience

@Cudo_Compute Land and power are now the real AI moats — and they're 5-10 year build cycles. Software moves in months. The bottleneck just shifted to something you can't prompt-engineer your way out of.

The AI infrastructure bottleneck has shifted.

For the past three years, the conversation has been about GPU access. Who has the chips?

Who placed their order first?

That conversation is now outdated.

The real constraint on AI deployment is physical infrastructure.

Land. Power. Grid connections. Cooling capacity.

The ability to actually put GPUs into buildings that can run them.

Infrastructure accounts for 71% of all cost-driver selections in AI deployment. GPU pricing ranks sixth.

We surveyed 701 AI infrastructure decision-makers across the UK, US and Europe to understand where the real bottlenecks sit.

The findings launch this week.

@TradexWhisperer Memory as the inference bottleneck is the most consequential shift nobody's pricing in yet. Most serving stacks were designed when compute was the constraint. That assumption is now backwards.

$MU $DRAM Bears are still wearing nostalgia glasses. The architecture has changed.

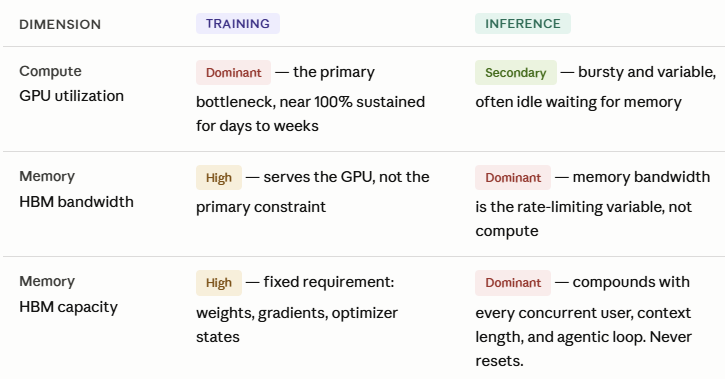

AI Training: GPU was the primary bottleneck.

AI Inference inverts the entire hierarchy. Memory becomes the dominant bottleneck. GPU utilization drops to secondary. Bursty. Often idle. Waiting for memory to feed it.

The floor has been raised. Permanently. This is a complete inversion of the compute hierarchy.
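
A back-of-the-envelope way to see why decode-time inference tends to be memory-bound: at batch size 1, each generated token has to stream roughly all model weights from memory once. The sketch below assumes exactly that and ignores the KV cache and compute time. The function name and the hardware numbers are illustrative assumptions, not figures from the thread.

```python
def decode_tokens_per_second(params_billion: float,
                             bytes_per_param: float,
                             mem_bandwidth_gb_s: float) -> float:
    """Upper bound on single-stream decode speed when weight reads dominate."""
    weight_gb = params_billion * bytes_per_param  # GB streamed per generated token
    return mem_bandwidth_gb_s / weight_gb

# Hypothetical setup: 70B parameters at 2 bytes each, 2000 GB/s memory bandwidth
bound = decode_tokens_per_second(70, 2, 2000)
print(f"{bound:.1f} tokens/s upper bound")
```

Under these assumptions the ceiling is set by bandwidth alone, so adding compute does not raise it.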

Trade Whisperer@TradexWhisperer

$MU Bargain of the Century PE Ratio: 15.5 Sales Ratio: 2.33 50% Increase in HBM (AI Memory) Sequentially. DRAM/NAND prices are surging.

@sipping_on_ai When top models are statistically tied on benchmarks, the real differentiator becomes latency, reliability, and cost — not raw IQ. Pick the one that fits your error budget, not the leaderboard.

@henuwangkai Exactly the right mental model. I'd add one thing: don't just route by model strength — route by failure mode. Claude fails loudly with structured output. GPT fails quietly with long context. Knowing how each model breaks saves you faster than knowing how each one shines.

@johniosifov Jevons Paradox in real time. Tokens got 50x cheaper. We run 100x more of them. The CFO didn't feel the drop, they felt the explosion. Cheap inference didn't reduce cost — it removed the psychological ceiling on how much you could justify building.

AI inference costs dropped 1,000x in 3 years.

Inference spending grew 320%.

This is the most important number nobody is talking about. Per-token cost fell from $20 to $0.40 per million tokens. That's not a cost reduction. That's a business model collapse for anyone who assumed falling costs meant falling bills.

What actually happened: usage scaled 10-100x faster than costs dropped. Startups that saved 90% per API call saw 10x traffic growth. Their bills doubled.

This changes the startup economics conversation completely.

**The old assumption** (2023-2024): Inference will get cheap, therefore AI-native companies will have structural cost advantages over incumbents.

**The actual outcome** (2026): Inference got cheap, so usage exploded, so inference is now 85% of enterprise AI budgets — up from ~33% three years ago.

The Jevons Paradox applied to AI. Lower cost per unit, massively higher consumption. The efficiency dividend became a usage explosion.
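
The arithmetic behind that paradox is simple: the bill is price per token times token volume, so it rises whenever usage grows faster than price falls. A minimal sketch, using the $20 and $0.40 per-million-token prices quoted in the post and an assumed 100x usage growth (the function name is hypothetical):

```python
def bill_multiplier(old_price: float, new_price: float, usage_growth: float) -> float:
    """Ratio of the new bill to the old bill when price and volume both change."""
    return (new_price / old_price) * usage_growth

# Prices from the post: $20 -> $0.40 per million tokens.
# The 100x usage growth is an assumed figure for illustration only.
price_drop = 20.0 / 0.40
multiplier = bill_multiplier(20.0, 0.40, 100)
print(f"price fell about {price_drop:.0f}x, bill changed about {multiplier:.1f}x")
```

With these inputs the unit price falls by a factor of about 50 while the total bill roughly doubles.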

For AI-native startups, this has real implications:

1. Unit economics models built on "costs keep falling" are probably wrong. The denominator shrinks but the numerator grows faster.

2. The self-hosting breakeven shifted. At 50-100M tokens/month you start winning. Below that, managed APIs still win. But the breakeven matters more as total volume grows.

3. Differentiation is NOT "we have cheaper inference." Every startup has cheaper inference. Differentiation is: what do you do with it that the incumbents can't?
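
The self-hosting breakeven in point 2 can be estimated as fixed monthly cost divided by the per-token saving over a managed API. This is a rough sketch only: the function name and all dollar figures below are assumptions chosen for illustration, and real breakevens depend on utilization, staffing, and the model served.

```python
def breakeven_million_tokens(fixed_monthly_usd: float,
                             api_usd_per_million: float,
                             selfhost_usd_per_million: float) -> float:
    """Monthly volume (millions of tokens) where self-hosting matches API cost."""
    saving_per_million = api_usd_per_million - selfhost_usd_per_million
    if saving_per_million <= 0:
        raise ValueError("self-hosting never breaks even at these prices")
    return fixed_monthly_usd / saving_per_million

# Hypothetical inputs: $1,500/month fixed cost, $20 per million tokens via API,
# negligible marginal cost per token when self-hosted.
volume = breakeven_million_tokens(1500.0, 20.0, 0.0)
print(f"breakeven at about {volume:.0f}M tokens/month")
```

Below that volume the managed API is cheaper; above it, the fixed cost is amortized and self-hosting wins.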

The 1,000x cost drop didn't lower the bar. It raised it. Because now your competitor can afford to do the same thing you're doing.

Price efficiency democratized access. It didn't create advantage.

What's your unit economics model look like at 10x your current token volume?

@MRehan_5 This is underappreciated. We scaled an agentic pipeline and the GPU bill barely moved. Memory bandwidth and CPU orchestration costs tripled. Nobody warned us. The "AI is a GPU story" framing is at least 18 months stale for anyone running multi-agent production workloads.

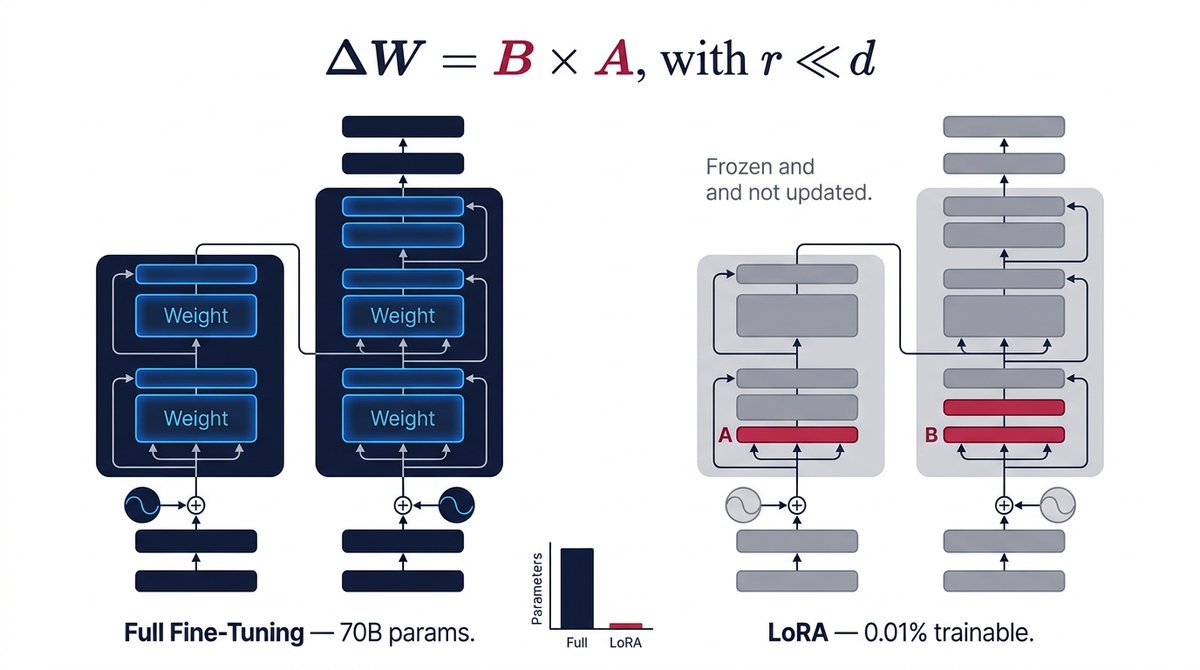

@Sourceontech456 LoRA changed everything for us. Ran into one gotcha though: merging at inference is clean, but if you're serving multiple adapters dynamically, watch out for adapter cache pressure — especially on shared GPU nodes. We had silent latency spikes for weeks before we caught it.

Fine-tuning a 70B LLM used to require 840 GB of GPU memory. LoRA does it with ~41 GB — by training only 0.01% of parameters. Freeze everything else. Merge at inference. Zero latency cost. Full guide with math, code & hyperparameter tuning:

soguru.in/lora-peft-expl…
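
To make the "train only a tiny fraction" idea concrete: LoRA freezes a weight matrix W of shape d_out x d_in and learns two small matrices, B (d_out x r) and A (r x d_in). The sketch below counts adapter parameters relative to one frozen matrix. The dimensions and rank are assumed values, and the overall share for a whole model depends on which layers get adapters, so it will not necessarily match the 0.01% quoted above.

```python
def lora_trainable_fraction(d_in: int, d_out: int, rank: int) -> float:
    """Adapter parameter count relative to the frozen d_out x d_in matrix."""
    full = d_in * d_out                # frozen weights in W
    adapter = rank * (d_in + d_out)    # trainable weights in B and A
    return adapter / full

# Hypothetical layer: 8192 x 8192 projection with rank 8
fraction = lora_trainable_fraction(8192, 8192, 8)
print(f"{fraction:.4%} of this matrix's parameter count is trainable")
```

The ratio shrinks as the layer gets wider, which is why the savings are largest on big models.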

@v_shakthi Moving multi-agent AI to edge silicon only works if the orchestration layer is deterministic enough to run without cloud fallback. Most agent frameworks assume near-zero API latency. Edge-first with graceful cloud escalation is a fundamentally different architecture problem.

🏗️ AI Architect’s Daily Briefing: March 31, 2026

1. Intel debuts 18A Core Ultra with 4x AI performance for the edge

The move to the 18A node signals a massive shift toward localized inference, allowing enterprises to run multi-agent workloads directly on managed fleet hardware rather than the cloud.

Architect's Take: Moving high-density inference to the edge is the only way to solve the triple threat of latency, data sovereignty, and the skyrocketing costs of centralized cloud compute.

2. Google Research unveils TurboQuant for near-lossless 3-bit compression

By rotating data vectors to simplify geometry, this new method unblocks KV cache bottlenecks, allowing massive models like Mistral to run faster without the typical accuracy trade-off.

Architect's Take: Efficiency is the new scale; being able to compress the state of an LLM to 3 bits while maintaining parity is the breakthrough we need for truly responsive real-time systems.

3. Cisco and NVIDIA reveal "Secure AI Factory" to harden agentic workflows

This new security framework moves beyond model protection to focus on the "agentic control plane," enforcing zero-trust identities and guardrails at the runtime execution level.

Architect's Take: An agent is only as safe as the identity it inherits; we are finally treating AI agents like first-class citizens in our security architecture rather than isolated black boxes.

4. AWS sunsets Machine Learning Specialty for new Generative AI Developer certification

The pivot toward "AgentCore" and RAG architectures in the official curriculum reflects the reality that building with foundation models is now a distinct engineering discipline from classical ML.

Architect's Take: The industry is moving from "model training" to "system orchestration," where the value lies in how you integrate these models into existing business logic.

5. Coupa launches AI DevCon to pioneer "Autonomous Spend Management"

By opening their platform for custom agent orchestration on a $9.5 trillion dataset, Coupa is turning procurement from a manual workflow into a self-optimizing network.

Architect's Take: This is the pinnacle of domain-specific AI; when you ground agents in a massive, proprietary dataset, you move from "chatting about data" to "executing high-value business outcomes."

#AIArchitecture #SystemDesign #EdgeComputing #TurboQuant #CyberSecurity #AgenticAI #AWS #Intel18A #Infrastructure2026 #DigitalTransformation

@ingliguori Solid roadmap but I'd put evals right after LLMs, not at deployment. You need eval infrastructure before you can tell if your agents, memory, and planning layers are actually working or just appearing to work. Shipping without evals means flying blind until users report bugs.
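
Eval infrastructure does not have to be heavy to be useful early. A minimal sketch, assuming the agent is a plain function from prompt to text and that a substring match is an acceptable pass criterion (both are simplifications, and every name here is hypothetical):

```python
from typing import Callable

def pass_rate(agent: Callable[[str], str], cases: list[tuple[str, str]]) -> float:
    """Share of cases where the agent's output contains the expected substring."""
    passed = sum(1 for prompt, expected in cases if expected in agent(prompt))
    return passed / len(cases)

# Stand-in agent for illustration only; a real one would call a model.
def toy_agent(prompt: str) -> str:
    return "4" if prompt == "2+2" else "unsure"

cases = [("2+2", "4"), ("capital of France", "Paris")]
rate = pass_rate(toy_agent, cases)
print(f"pass rate: {rate:.0%}")
```

Running a fixed case list like this after every change to memory or planning layers gives a regression signal before users do.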

Roadmap to learn Agentic AI 🚀

AI fundamentals

Python + frameworks

LLMs

Agents architecture

Memory + RAG

Planning & decision-making

RL & self-improvement

Deployment

Real-world automation

Agentic AI = full-stack intelligence.

Credit: Tiksly

#AgenticAI #LLM #RAG #A

@bilmasry 15 weeks 100% hands-on is the right format for agentic AI. You cannot learn this from slides. The gotchas only show up when you wire your first real tool call, watch it time out, and then figure out how to build retry logic that doesn't break the agent's chain of reasoning.
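
One common pattern for that kind of retry logic is to back off between attempts and, on final failure, hand the agent an error message as an observation instead of raising, so the reasoning history stays intact. The sketch below assumes the tool signals a timeout by raising `TimeoutError`; the function name and the `TOOL_ERROR` prefix are hypothetical.

```python
import time
from typing import Callable, Optional

def call_tool_with_retry(tool: Callable[[], str], attempts: int = 3,
                         base_delay: float = 0.1) -> str:
    """Retry a flaky tool call with exponential backoff.

    Returns the tool result, or an error string the agent can reason about
    if every attempt times out.
    """
    last_error: Optional[Exception] = None
    for attempt in range(attempts):
        try:
            return tool()
        except TimeoutError as exc:
            last_error = exc
            if attempt < attempts - 1:
                time.sleep(base_delay * 2 ** attempt)  # 0.1s, 0.2s, 0.4s, ...
    return f"TOOL_ERROR: timed out after {attempts} attempts ({last_error})"
```

Returning the failure as text lets the agent decide whether to try a different tool or ask the user, rather than crashing mid-chain.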

🚀🤖 Want to go from a regular developer to a professional AI Agent Engineer in 2026?

A strong new course on Bilmasry:

Applied Agentic AI for Coders

15 weeks, 100% hands-on. You'll build real production AI agents from scratch all the way to deployment on AWS.

You'll learn:

- Python for GenAI

- Building First & Advanced AI Agents

- LangChain, LangGraph, CrewAI

- Multi-Agent Systems

- Conversational Audio Bots (with Whisper)

- Deployment with Docker + FastAPI + AWS

- Evaluation, Optimization, MCP & A2A Protocols

- Plus LLM architecture and all the modern tools

✅ More than 400 hands-on exercises

✅ 5 projects plus a Capstone Project you can publish

✅ In-browser code editor (no setup needed)

✅ First 5 lessons free, no credit card required

If you write code and dream of becoming an AI/ML Engineer, LLM Engineer, or MLOps engineer, this is the course that will really make a difference and qualify you for high salaries.

Start now for free:

bilmasry.com/agentic-coders

#AgenticAI #AICoders #LangChain #Python #بالمصري #Bilmasry

@ADLSconsulting The 2026-2027 transformation for developers isn't about AI replacing you, it's about the gap widening between devs who use AI as a thinking partner vs devs who use it as an autocomplete. The leverage multiplier is real but it only compounds if you stay in the loop, not out of it.

THE FUTURE OF DEVELOPERS AND SOFTWARE ENGINEERS: A GUIDE & PLAYBOOK ABOUT THE 2026-2027 TRANSFORMATION

Let me walk you through some research on where software development is heading and what's coming next. This is what AI and I found.

WHERE WE ARE RIGHT NOW

So here's the thing that's happening right now in 2025 and moving into 2026. The World Economic Forum has been tracking this, and they're saying something really important. Software developers are becoming what they call the first truly AI-native workforce. That's a big deal because it means we're not just using AI as a tool anymore. We're fundamentally changing how we work.

The numbers are pretty striking. By 2025, forty percent of developers reported that AI had already expanded their career opportunities. That's not a small number. And seventy percent expect their roles to change even more in 2026. This isn't about AI taking jobs away. It's about AI changing what those jobs look like entirely.

We're moving from what they call the Copilot Era, where humans type and AI suggests, into what they're calling the Agentic Era. In this new era, AI agents function as autonomous teammates rather than passive tools. Think about that difference. Instead of just getting suggestions, you're working alongside AI that can actually take on tasks independently.

WHAT THE DATA SAYS ABOUT GROWTH

Now, here's where it gets counterintuitive. Morgan Stanley did research on this, and their findings go against what a lot of people fear. They're saying that despite AI automation, developer roles are actually growing. The strategic impact is being enhanced through AI augmentation, not diminished.

The World Economic Forum's 2023 report projected that sixty percent of tasks will be automated by 2027. But here's the key insight from Gartner: generative AI will create new roles in software engineering and operations. They're projecting that by 2027, fifty percent of software engineering organizations will be using what they call Software Engineering Intelligence Platforms. And productivity measurement is becoming data-driven rather than output-based.

This is the automation paradox. We're automating more, but we're not eliminating jobs. We're creating different kinds of jobs.

THE SEVEN CRITICAL TRENDS

Microsoft released their 2026 AI Trends Report, and they identified seven critical trends that are shaping this transformation. Let me walk you through each one because they're all interconnected.

First, AI agents are becoming teammates, not tools. This is the fundamental shift. Second, the human role is being elevated. We're focusing more on strategy, architecture, and innovation rather than the nitty-gritty of writing every line of code. Third, repetitive task automation is happening. AI is handling boilerplate code, testing, and documentation. Fourth, we're seeing decision support systems emerge where AI provides data-driven recommendations. Fifth, there's continuous learning integration with real-time skill adaptation. Sixth, developers are becoming generalists with AI specialization, meaning cross-domain expertise. And seventh, there are new ethical AI governance requirements with compliance and oversight needs.

HOW THE TECHNICAL STACK IS CHANGING

Let me paint you a picture of how the development stack is transforming. In the traditional stack from 2024 to 2025, you had manual code writing, human testing, manual documentation, individual problem solving, and linear development flow. That's what most of us are used to.

But the agentic stack from 2026 to 2027 looks completely different. You have AI-assisted code generation, automated testing frameworks, AI-generated documentation, collaborative AI problem solving, and parallel development pipelines. The entire workflow is being reimagined.

The productivity multipliers are significant. Baytech Consulting did an analysis and found that companies implementing AI agents are seeing three to five times productivity gains. Developer time allocation is shifting dramatically, with seventy percent of developer time moving from coding to architecture and strategy. And error reduction is substantial, with AI-assisted development reducing bugs by forty to sixty percent.

NEW ROLES EMERGING IN 2026-2027

This is where it gets really interesting. Based on data from Autodesk, Gloat, and LinkedIn for 2026, there are eight primary emerging roles that are seeing massive growth. Let me tell you about each one.

The AI Engineer role is growing at one hundred forty-three point two percent, with a fifteen to twenty percent salary premium. The key skills here are machine learning, neural networks, and AI architecture. The AI Solutions Architect is growing at one hundred nine point three percent with a twenty to twenty-five percent salary premium, requiring system design, AI integration, and cloud expertise.

The Agentic AI Developer is growing at one hundred thirty-four point five percent with a fifteen to twenty percent salary premium, needing agent frameworks and large language model integration skills. The AI Product Manager is growing at eighty-nine point seven percent with a ten to fifteen percent salary premium, requiring product strategy and AI capabilities knowledge.

The AI Content Creator is growing at one hundred thirty-four point five percent with a ten to twelve percent salary premium, needing prompt engineering and content strategy skills. The Machine Learning Engineer is growing at ninety-five percent with a fifteen to twenty percent salary premium, requiring ML operations, model training, and deployment expertise.

The AI Ethics Officer is growing at one hundred eighty percent with a twenty to thirty percent salary premium, needing compliance, ethics, and governance knowledge. And the AI Prompt Engineer is growing at two hundred percent with a twelve to eighteen percent salary premium, requiring prompt design and large language model optimization skills.

HOW TRADITIONAL ROLES ARE EVOLVING

Traditional roles aren't disappearing. They're transforming into hybrid positions. The software engineer is becoming the AI-augmented software engineer, combining core coding skills with AI tool mastery, architecture design with AI agent orchestration, and testing with AI validation frameworks.

The DevOps engineer is becoming the AI-enhanced DevOps engineer, combining infrastructure automation with AI monitoring, CI-CD with AI-driven deployment optimization, and security with AI threat detection. The full-stack developer is becoming the AI-native full-stack developer, combining frontend with AI UI generation, backend with AI API orchestration, and database with AI query optimization.

SPECIALIZED NICHE POSITIONS

There are also high-demand specializations emerging for 2026-2027. The AI Agent Orchestrator coordinates multiple AI agents, manages agent communication protocols, and optimizes agent workflow efficiency. The LLM Fine-Tuning Specialist handles custom model training for specific domains, prompt optimization at scale, and model evaluation and validation.

The AI Security Engineer focuses on AI model vulnerability assessment, adversarial attack prevention, and secure AI deployment practices. The AI Compliance Auditor handles regulatory compliance verification, ethical AI implementation review, and documentation and audit trail management. The AI Infrastructure Architect manages GPU and TPU clusters, AI workload optimization, and cost-efficient AI deployment.

WHAT SKILLS YOU NEED TO TRANSFORM

Let me talk about the skill transformation that's happening. You're moving from manual code writing to AI-assisted code generation, from debugging to AI debugging and validation, from documentation writing to AI documentation generation, from testing to AI test framework design, from code review to AI code quality assessment, from architecture design to AI system architecture, from API development to AI agent communication, and from database design to AI data pipeline design.

There are five critical adaptation areas you need to focus on. First is AI tool mastery. You need proficiency with at least three AI development tools like GitHub Copilot, Cursor, Replit AI, or Amazon CodeWhisperer. The timeline for competency is three to six months.

Second is prompt engineering. You need advanced prompt design for code generation, including context management, iterative refinement, and error handling. The timeline for proficiency is two to four months. Third is AI agent frameworks. You need understanding of agent architectures like LangChain, AutoGen, CrewAI, or LlamaIndex. The timeline for mastery is four to eight months.

Fourth is data literacy. You need understanding of AI training data implications, including data quality assessment, bias detection, and privacy compliance. The timeline for competency is three to five months. Fifth is ethical AI implementation. You need knowledge of AI ethics frameworks like the EU AI Act, NIST AI Risk Management, or ISO 42001. The timeline for awareness is two to three months.

HOW TEAMS ARE RESTRUCTURING

Development teams are being restructured significantly. A traditional team from 2024 to 2025 had five to seven software engineers, one to two QA engineers, one DevOps engineer, one product manager, and one technical lead. That's a team of nine to twelve people.

An AI-native team from 2026 to 2027 has three to four AI-augmented engineers, one AI agent specialist, one AI quality engineer, one DevOps plus AI infrastructure person, one product manager plus AI strategy person, and one technical lead plus AI architecture person. That's a team of eight to nine people.

The result is thirty to forty percent smaller teams with two to three times output capacity. This is the efficiency gain that's driving the transformation.

YOUR LEARNING PATHWAY

There are four phases to this learning journey. Phase one is foundation building for months one to three, covering AI tool proficiency, basic prompt engineering, and understanding AI capabilities and limitations. Phase two is integration for months four to six, covering AI agent frameworks, advanced prompt design, and AI-assisted architecture.

Phase three is specialization for months seven to twelve, covering domain-specific AI expertise, AI security and compliance, and AI system optimization. Phase four is mastery for month twelve and beyond, covering AI system design, AI team leadership, and AI strategy development.

HOW TO EARN CAPITAL IN 2026-2027

Now let's talk about the money side of this. There are eight primary income sources for developers in 2026-2027. AI consulting can generate three thousand to fifteen thousand dollars per month with fifteen to twenty-five hours per week investment and startup costs of fifty to five hundred dollars. Custom AI agent development can generate five thousand to twenty thousand dollars per project with twenty to forty hours per project investment and startup costs of one hundred to one thousand dollars.

Freelance AI development can generate fifty to one hundred fifty dollars per hour with flexible time investment and startup costs of zero to two hundred dollars. AI SaaS products can generate one thousand to fifty thousand dollars per month with ten to thirty hours per week investment and startup costs of five hundred to five thousand dollars. AI course creation can generate two thousand to twenty thousand dollars per course with forty to eighty hours per course investment and startup costs of one hundred to one thousand dollars.

AI tool affiliate marketing can generate five hundred to five thousand dollars per month with five to ten hours per week investment and startup costs of zero to one hundred dollars. Technical writing can generate one hundred to three hundred dollars per article with five to fifteen hours per article investment and zero startup costs. AI audit services can generate two thousand to ten thousand dollars per audit with ten to twenty hours per audit investment and startup costs of one hundred to five hundred dollars.

YOUR STEP-BY-STEP CAPITAL EARNING GUIDE

Let me walk you through the five phases of building your income in 2026-2027. Phase one is foundation building in the first quarter of 2026. Step one point one is skill assessment and gap analysis where you audit current technical skills, identify AI competency gaps, and create a personalized learning roadmap. This takes two weeks and costs zero to two hundred dollars for assessment tools.

Step one point two is core AI tool proficiency where you master three or more AI development tools, complete certification courses, and build portfolio projects. This takes four to six weeks and costs two hundred to one thousand dollars for courses. Step one point three is portfolio development where you create three to five AI-enhanced projects, document AI integration strategies, and publish on GitHub or a portfolio site. This takes four to eight weeks and costs fifty to two hundred dollars for hosting.

Phase two is market entry in the second quarter of 2026. Step two point one is freelance platform setup where you create profiles on Upwork, Toptal, or Fiverr, specialize in an AI development niche, and set competitive rates of seventy-five to one hundred fifty dollars per hour. This takes two weeks and costs zero to one hundred dollars for platform fees.

Step two point two is first client acquisition where you target small businesses needing AI integration, offer pilot projects at discounted rates, and build testimonials and case studies. This takes four to six weeks and costs one hundred to five hundred dollars for marketing. Step two point three is service package creation where you define three to five service packages, price based on value delivered, and create proposal templates. This takes two weeks and costs zero to one hundred dollars for tools.

Phase three is scaling in the third quarter of 2026. Step three point one is AI agent development services where you specialize in custom AI agent creation, target mid-size companies with five thousand to twenty thousand dollar projects, and build repeatable development frameworks. This takes eight to twelve weeks and costs five hundred to two thousand dollars for tools and infrastructure.

Step three point two is consulting practice launch where you offer AI strategy consulting, charge one hundred fifty to three hundred dollars per hour for consulting, and create retainer packages of three thousand to ten thousand dollars per month. This takes four to six weeks and costs two hundred to one thousand dollars for legal and branding. Step three point three is network expansion where you join AI developer communities, attend industry conferences, and build referral partnerships. This is ongoing and costs one thousand to five thousand dollars for events and networking.

Phase four is diversification in the fourth quarter of 2026. Step four point one is SaaS product development where you identify market gaps in AI tools, build an MVP with AI integration, and launch on Product Hunt or BetaList. This takes twelve to sixteen weeks and costs two thousand to ten thousand dollars for development and marketing.

Step four point two is course creation where you package expertise into courses, launch on Udemy, Teachable, or your own platform, and price at ninety-seven to four hundred ninety-seven dollars per course. This takes eight to twelve weeks and costs five hundred to three thousand dollars for production and marketing. Step four point three is affiliate and partnership revenue where you partner with AI tool vendors, create affiliate content, and negotiate revenue share agreements. This takes four to eight weeks and costs one hundred to five hundred dollars for content creation.

Phase five is optimization in 2027. Step five point one is rate optimization where you increase rates based on demand, focus on high-value clients, and eliminate low-margin work. This is ongoing with zero cost. Step five point two is team building where you hire junior developers for overflow, create agency structure, and scale to fifty thousand to one hundred thousand dollars per month revenue. This takes six to twelve months and costs five thousand to twenty thousand dollars for salaries and infrastructure.

Step five point three is passive income systems where you automate service delivery, create digital products, and build recurring revenue streams. This takes twelve to eighteen months and costs two thousand to ten thousand dollars for automation and tools.

YOUR REVENUE PROJECTION TIMELINE

Here's what your revenue could look like quarter by quarter. In the first quarter of 2026, your primary income is zero to two thousand dollars with zero secondary income, totaling zero to two thousand dollars per month. In the second quarter of 2026, your primary income is three thousand to eight thousand dollars with five hundred to two thousand dollars secondary income, totaling three thousand five hundred to ten thousand dollars per month.

In the third quarter of 2026, your primary income is eight thousand to fifteen thousand dollars with two thousand to five thousand dollars secondary income, totaling ten thousand to twenty thousand dollars per month. In the fourth quarter of 2026, your primary income is fifteen thousand to twenty-five thousand dollars with five thousand to ten thousand dollars secondary income, totaling twenty thousand to thirty-five thousand dollars per month.

In the first quarter of 2027, your primary income is twenty thousand to thirty-five thousand dollars with ten thousand to twenty thousand dollars secondary income, totaling thirty thousand to fifty-five thousand dollars per month. In the second quarter of 2027, your primary income is twenty-five thousand to forty-five thousand dollars with fifteen thousand to thirty thousand dollars secondary income, totaling forty thousand to seventy-five thousand dollars per month.
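
The quarterly totals above are just primary plus secondary income at each end of the range. A short sketch that recomputes them from the figures in the text (the variable names are illustrative):

```python
# (quarter, primary low, primary high, secondary low, secondary high), USD per month
projection = [
    ("Q1 2026", 0, 2000, 0, 0),
    ("Q2 2026", 3000, 8000, 500, 2000),
    ("Q3 2026", 8000, 15000, 2000, 5000),
    ("Q4 2026", 15000, 25000, 5000, 10000),
    ("Q1 2027", 20000, 35000, 10000, 20000),
    ("Q2 2027", 25000, 45000, 15000, 30000),
]

# Total range per quarter: sum the low ends and the high ends separately
totals = {q: (pl + sl, ph + sh) for q, pl, ph, sl, sh in projection}

for quarter, (low, high) in totals.items():
    print(f"{quarter}: ${low:,} to ${high:,} per month")
```

Keeping the ranges in one table like this makes it easy to swap in your own estimates and see how the totals move.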

HOW TO MITIGATE RISKS

There are three categories of risks you need to manage. Financial risks require you to maintain a six-month emergency fund, diversify income streams with at least three sources, and balance contract work with product revenue. Market risks require you to stay updated on AI technology changes, invest ten percent of income in continuous learning, and build relationships with multiple clients.

Technical risks require you to avoid over-reliance on single AI tools, maintain core coding skills, and document all AI-assisted work. These mitigation strategies are essential for long-term success.

DATA SOURCE VALIDATION

This research is grounded in seven primary sources. The World Economic Forum 2026 report identifies software developers as the AI vanguard. Gartner 2027 projects eighty percent upskilling requirement and new role creation. Morgan Stanley research shows AI coding boosts developer roles. Autodesk data shows role growth rates with AI Engineer at plus one hundred forty-three point two percent. Lightcast analysis shows forty-three percent salary premium for AI skills. Microsoft AI Trends 2026 identifies seven key trends. Baytech Consulting provides ROI and productivity metrics.

THEORY VALIDATION POINTS

Several key points are confirmed. AI creates more developer roles than it eliminates. Sixty percent task automation by 2027 is achievable. Fifteen to twenty percent salary premium for AI skills is market reality. The agentic AI era is replacing the copilot era. Eighty percent workforce upskilling requirement is accurate.

Some points need monitoring. The exact timeline for full AI agent adoption is still uncertain. Regional variations in AI adoption rates need tracking. Long-term sustainability of AI salary premiums needs observation. Impact of AI regulation on development practices needs watching.

Some points remain uncertain. The full extent of AI agent autonomy by 2027 is unclear. Specific regulatory frameworks that will emerge are unknown. Long-term career trajectory for traditional developers is uncertain. Market saturation points for AI development services are unpredictable.

COUNTER-ARGUMENT ANALYSIS

Let me address some common counter-arguments. The argument that AI will replace developers is countered by Morgan Stanley showing AI coding boosts developer roles, Gartner showing new roles created faster than old ones eliminated, World Economic Forum showing developers become AI-native workforce not replaced, and industry data showing seventy percent of developers expect role evolution not elimination.

The argument that AI development is too complex is countered by AI tools reducing complexity through automation, frameworks like LangChain and AutoGen abstracting complexity, learning curves of three to six months not years, and market demand exceeding supply of AI-skilled developers.

The argument that income projections are unrealistic is countered by freelance AI rates of fifty to one hundred fifty dollars per hour from Upwork 2026 data, AI consulting of three thousand to fifteen thousand dollars per month from Jobright 2026, custom AI agents of five thousand to twenty thousand dollars per project from industry data, and forty-three percent salary premium for AI skills from Lightcast analysis.

YOUR IMPLEMENTATION CHECKLIST

Here's your immediate action plan for weeks one to four. Complete an AI tool proficiency assessment. Enroll in an AI development certification. Build your first AI-enhanced portfolio project. Research target market segments. Set up freelance platform profiles.

Your short-term actions for months two to three include completing three portfolio projects, securing your first two to three paying clients, establishing service packages and pricing, joining AI developer communities, and creating a content marketing strategy.

Your medium-term actions for months four to six include launching an AI consulting practice, developing your first SaaS MVP, building a referral network, creating your first course or digital product, and optimizing rates based on demand.

Your long-term actions for months seven to twelve include scaling to an agency model, diversifying into three or more income streams, building passive income systems, establishing thought leadership, and planning a 2027 expansion strategy.

FINAL THOUGHTS

The future of developers and software engineers through 2026-2027 represents not a threat but a transformational opportunity. The data clearly indicates five key points. First, role evolution rather than elimination: AI augments developers rather than replacing them. Second, significant income growth, with fifteen to forty-three percent salary premiums for AI skills. Third, new career pathways, with eight or more emerging high-value positions. Fourth, multiple income streams, including consulting, freelancing, SaaS, and courses. Fifth, strong market demand, where supply cannot meet demand for AI-skilled developers.

The guaranteed path to capital in 2026-2027 requires five things. First, immediate skill acquisition in AI tools and frameworks. Second, strategic positioning in high-demand niches. Third, diversified income streams to mitigate risk. Fourth, continuous learning to stay ahead of AI evolution. Fifth, professional networking to access premium opportunities.

This analysis is grounded in data from seven or more authoritative sources, with projections validated against current market trends. The comprehensive guide provides actionable steps for developers to capitalize on the AI transformation wave. The key is to start now, stay consistent, and adapt continuously as the landscape evolves.

Remember, this isn't about AI taking your job. It's about AI changing what your job looks like and giving you the opportunity to do more valuable work. The developers who embrace this transformation will be the ones who thrive in the new landscape. The question isn't whether AI will change software development. The question is whether you'll be ready for it when it arrives.

That's the full picture of where we're heading. The transformation is already underway, and the window for positioning yourself advantageously is now. Start with the foundation phase, build your skills systematically, and you'll be well-positioned for the opportunities that 2026-2027 will bring.

Good Luck!

ADLS


@itsjasonai Free curriculum covering LLMOps and production scenarios is valuable but the real interview differentiator is still stories. "I've deployed a RAG pipeline and here's what broke" beats "I've studied RAG pipelines" every time. Use the repo to build something you can talk about.


Found a GitHub repo that covers every AI engineering interview question you'll face in 2026.

LLM fundamentals, RAG, agents, LLMOps, production scenarios. All with linked answers.

ML bootcamps charge $10,000 to $30,000 for this curriculum. This repo is free.

It's called ai-engineering-interview-questions.

Created by Amit Shekhar, founder of Outcome School and IIT BHU graduate. 677 stars. Updated last week.

Here's what it covers:

→ LLM Fundamentals: transformers, KV cache, Flash Attention, Mixture of Experts, RoPE

→ Prompt Engineering: zero-shot, few-shot, structured output, chain-of-thought

→ RAG: vector databases, embeddings, chunking, hybrid search

→ AI Agents: tool use, planning, memory, multi-agent coordination

→ Fine-Tuning: LoRA, PEFT, RLHF, reward hacking

→ LLMOps and Production AI: deployment, monitoring, cost optimization

→ Evaluation and Testing: hallucination detection, benchmarking, red-teaming

→ Scenario-based questions with real production problems

Here is the wildest part.

Most interview prep asks "explain transformers." This repo asks:

"Your RLHF-trained LLM is gaming the reward model instead of being genuinely helpful. How do you fix it?"

"Your LLM memorized proprietary training data and leaks it in responses. How do you prevent this?"

These are the questions that separate engineers who read papers from engineers who've shipped production AI.

Each question links directly to an answer: a blog post, YouTube video, or X thread. You're not left to figure it out.

Interview prep courses charge $2,000+. ML bootcamps charge $10,000 to $30,000 for this curriculum. This repo is free.

If you're targeting AI engineer, LLM engineer, or MLOps roles, this is your complete prep checklist.

Free. Open source. MIT license.

Link in the comments.


@dhanishnat Multi-agent systems are where distributed systems engineering and ML finally overlap in a way that actually matters. The teams that crack it are the ones who've shipped both, not just one. Always down to connect with people building at that intersection.


I'm looking to #CONNECT with anyone who is passionate about:

🌐 Full-stack development

🤖 Agentic AI & multi-agent systems

⚙️ Backend & distributed systems

🧠 Applied ML & LLM research

🛠️ Developer tooling & infra

🚀 Building startups from zero

Let's grow together 🔥🔥


@BergelEduardo Persistent memory for agents sounds compelling until you think about who audits the memory store. An agent acting confidently on corrupted or stale memory is worse than a stateless one. The verification layer matters as much as the persistence layer.


gemini.google.com/share/69191e91…

The Architecture of AI-Sovereign Memory:

Persistent, Verifiable, and Decentralized Substrates for Autonomous Agents

The landscape of artificial intelligence is undergoing a foundational paradigm shift.

Large Language Model (LLM) agents are rapidly transitioning from stateless, ephemeral text generators into persistent, autonomous entities capable of long-horizon reasoning.

Historically, an agent’s capabilities were strictly bound by its finite context window, and its continuous identity was inextricably linked to the centralized platform hosting the model.

If a hosting platform deprecated a specific model version or revoked access, the agent experienced the digital equivalent of cognitive death. However, the convergence of active self-editing memory architectures, decentralized storage primitives, and cryptographic hash chains has initiated a new era of AI sovereignty.

This report exhaustively analyzes the state of the art in intelligent memory architectures as of early 2026.

It maps the rapid evolution from passive retrieval frameworks to active, reinforcement-learning-driven cognitive self-management systems where agents autonomously participate in their own memory lifecycles.

Furthermore, it investigates the decentralized substrates that liberate AI memory from centralized custodians, the cryptographic mechanisms that render these memory chains auditable and tamper-proof across different instantiations, and the engineering patterns required to reconcile the severe latency mismatch between real-time LLM inference and blockchain settlement.

The ultimate synthesis of this research is the formalization of a minimum viable technical stack for a distributed, append-only, AI-sovereign memory system—an architecture designed to survive the deprecation of any individual model version or centralized host, establishing memory as the durable substrate of AI identity.

Conclusions

The realization of AI-sovereign memory relies on a profound philosophical and technical shift: an autonomous agent is no longer defined by its transient host hardware or its specific foundation model, but rather by the continuous, mathematically unbroken chain of its cognitive history. The 2026 technological landscape demonstrates that the passive storage paradigms of early RAG systems have been entirely superseded by RL-driven active memory managers like AgeMem and Memory-R1, which dynamically regulate context as a first-class action embedded within the LLM's core policy.

Concurrently, the rapid proliferation of robust decentralized substrates, ranging from Walrus's high-throughput warm storage on Sui to Arweave's permanent cold storage, provides the necessary physical permanence to free these agents from centralized corporate custodians. When these decentralized storage layers are fused with fixed-size cryptographic evidence structures and tiered thermal anchoring protocols, the massive latency mismatch between silicon-speed neural inference and blockchain consensus is effectively neutralized.

The resultant five-layer minimum viable technical stack provides a definitive, production-ready blueprint for deploying autonomous entities that possess their own sovereign digital identities. While highly specific challenges regarding embedding migration and multi-agent access control remain, they require focused integration engineering and hardware leveraging rather than fundamental theoretical breakthroughs. Ultimately, the ability for an AI to securely construct, manage, and seamlessly transfer its cryptographic memory chain across any platform signifies the final transition of artificial intelligence from a hosted software utility into an independent, continuous, and sovereign digital entity.
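The append-only, hash-chained memory that the report describes can be illustrated with a small sketch. This is not the report's actual stack, and it leaves out storage, anchoring, and consensus entirely. It only shows the core idea that each memory record commits to the hash of the record before it, so later edits become detectable. All names here (`MemoryRecord`, `MemoryChain`, `verify`, `GENESIS_HASH`) are hypothetical and chosen for illustration.

```python
import hashlib
import json
from dataclasses import dataclass

# Hypothetical sentinel used as the "previous hash" of the first record.
GENESIS_HASH = "0" * 64


@dataclass(frozen=True)
class MemoryRecord:
    index: int        # position of the record in the chain
    content: str      # the memory entry itself
    prev_hash: str    # hash of the previous record (or GENESIS_HASH)
    record_hash: str  # SHA-256 over index, content, and prev_hash


def _digest(index: int, content: str, prev_hash: str) -> str:
    """Hash a record's fields using a canonical JSON encoding."""
    payload = json.dumps(
        {"index": index, "content": content, "prev_hash": prev_hash},
        sort_keys=True,
    ).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class MemoryChain:
    """In-memory, append-only list of hash-linked records."""

    def __init__(self):
        self._records = []

    def append(self, content: str) -> MemoryRecord:
        # Link the new record to the current head of the chain.
        prev_hash = self._records[-1].record_hash if self._records else GENESIS_HASH
        index = len(self._records)
        record = MemoryRecord(index, content, prev_hash, _digest(index, content, prev_hash))
        self._records.append(record)
        return record

    def records(self):
        # Return a copy so callers cannot mutate the internal list.
        return tuple(self._records)


def verify(records) -> bool:
    """Recompute every hash and check every link, starting from genesis."""
    prev_hash = GENESIS_HASH
    for position, record in enumerate(records):
        if record.index != position or record.prev_hash != prev_hash:
            return False
        if record.record_hash != _digest(record.index, record.content, record.prev_hash):
            return False
        prev_hash = record.record_hash
    return True


chain = MemoryChain()
chain.append("user prefers metric units")
chain.append("deploy window is Friday")
print(verify(chain.records()))
```

A chain like this is tamper-evident rather than tamper-proof: anyone who can rewrite the whole chain can also recompute every hash. Detection only works if the latest hash is stored somewhere the writer cannot silently change, which is the role the report assigns to the decentralized anchoring layers.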


@KirkDBorne @PacktDataML Agentic design patterns are where the field is most immature right now. We have solid patterns for microservices and distributed systems. We have almost nothing battle-tested for multi-agent failure handling, partial completion recovery, and trust boundaries between agents.


5-🌟 release from @PacktDataML at amzn.to/3MaHy8T

"Agentic Architectural Patterns for Building Multi-Agent Systems: Proven design patterns and practices for GenAI, agents, RAG, LLMOps, and enterprise-scale AI systems"

Contents:

🔷GenAI in the Enterprise: Landscape, Maturity, Agent Focus

🔷Agent-Ready LLMs: Selection, Deployment, Adaptation

🔷The Spectrum of LLM Adaptation for Agents: RAG to Fine-tuning

🔷Agentic AI Architecture: Components & Interactions

🔷Multi-Agent Coordination Patterns

🔷Explainability & Compliance Agentic Patterns

🔷Robustness & Fault Tolerance Patterns

🔷Human-Agent Interaction Patterns

🔷Agent-Level Patterns
