Helin

The people who think modern art and architecture are crap are often right. But then they undermine their own case by picking terrible examples of "good" old stuff. They can't resist a Victorian pastiche.

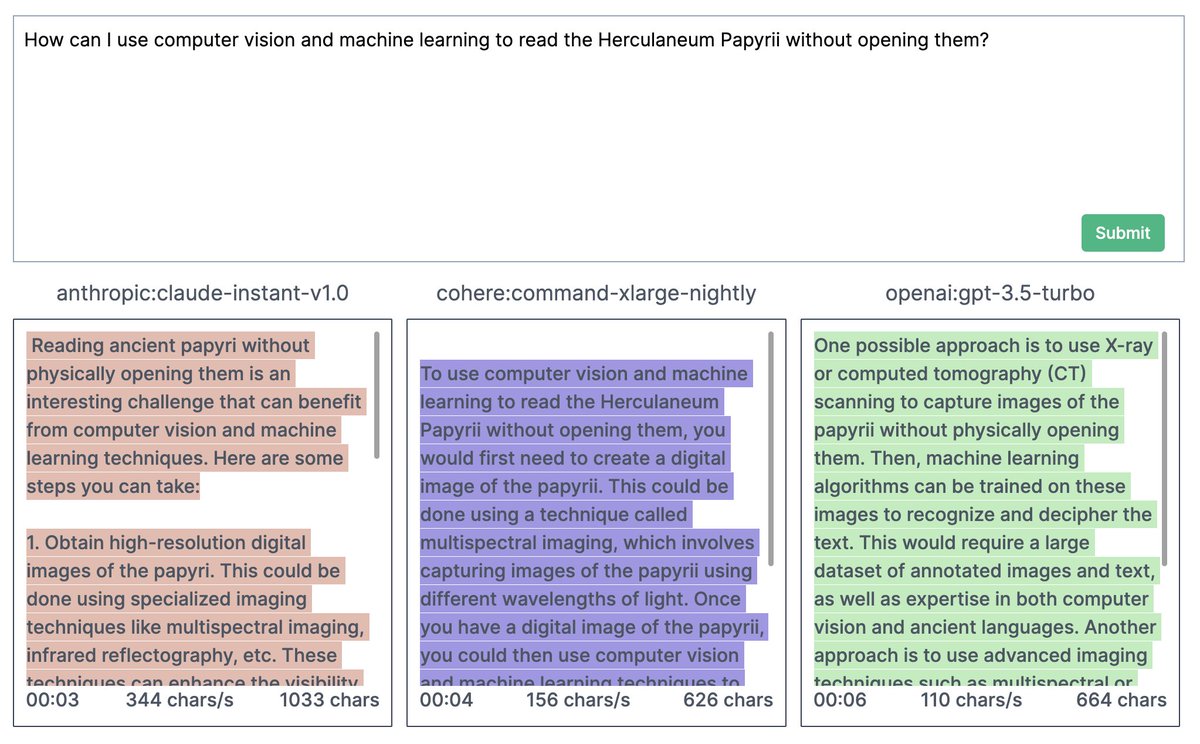

Today, we’re opening Ideogram to everyone on the planet! Sign up at ideogram.ai and have fun! Ideogram enables you to turn your creative ideas into delightful images, in a matter of seconds. It’s free and has no limits, and it can render text! ideogram.ai/publicly-avail…

We are open sourcing Dolly, a ChatGPT-like model that can do instruction following, created for $30, trained 3 hours on 1 server. The secret to magical human-like interactivity probably lies in a small dataset. databricks.com/blog/2023/03/2…

"The team loves that she's here almost as much as I do." @zach_edey's mother, Julia, spends basketball season in West Lafayette to support her son. She also enjoys the chance to cook @BoilerBall the occasional meal. 😋 Catch the season premiere of @BTNJourney at 6 ET 1/11.

@Rainmaker1973 If you know the solution is a whole number, doing mental cube approximations & guessing works great