Jonathan Larkin

3.4K posts

@jonathanrlarkin

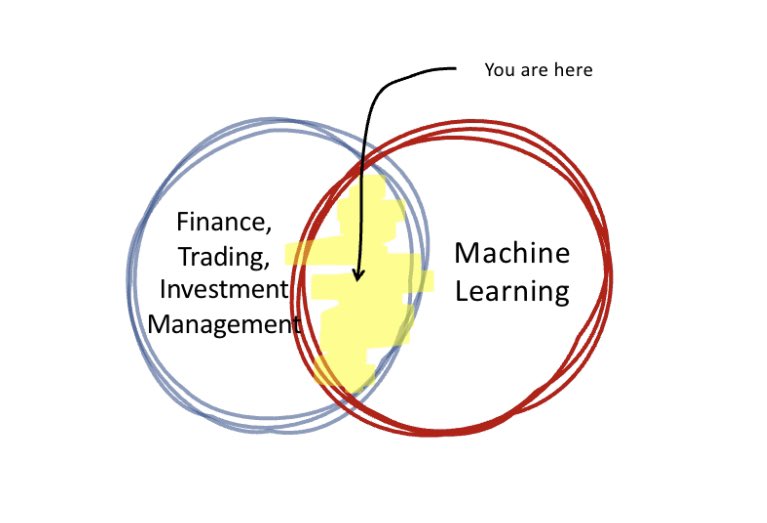

Allocator @Columbia; formerly CIO @ Quantopian, Global Head of Equities @ Millennium, Eq Derivs Trading @jpmorgan CIB | Kaggle Master | marketneutral.eth

New York, USA · Joined March 2013

4.4K Following · 4.3K Followers

Pinned post

Ummm…pretty sure you can tho…

doomer @uncledoomer

i dont know which of you needs to hear this, but you cant change the outcome of the situation by monitoring it

@claudeai does this feature work for anyone?? i can connect to a remote session (linux) from iOS app. It works for a couple turns. Then it just hangs. permanently flashing the little logo. No error messages.

New in Claude Code: Remote Control.

Kick off a task in your terminal and pick it up from your phone while you take a walk or join a meeting.

Claude keeps running on your machine, and you can control the session from the Claude app or claude.ai/code

✻ Sautéed for 32s

❯ hello

⎿ 529 {"type":"error","error":{"type":"overloaded_error","message":"Overloaded. docs.claude.com/en/api/errors"},"request_id":"req_011CZ9GXjiRoJh3VPoB7cUhc"}

time to go out for st. patricks day ☘️

Jonathan Larkin retweeted

@trq212 @alex_barashkov cool. would be awesome for a native (not electron) app for Windows. awesome=necessary :-)

Jonathan Larkin retweeted

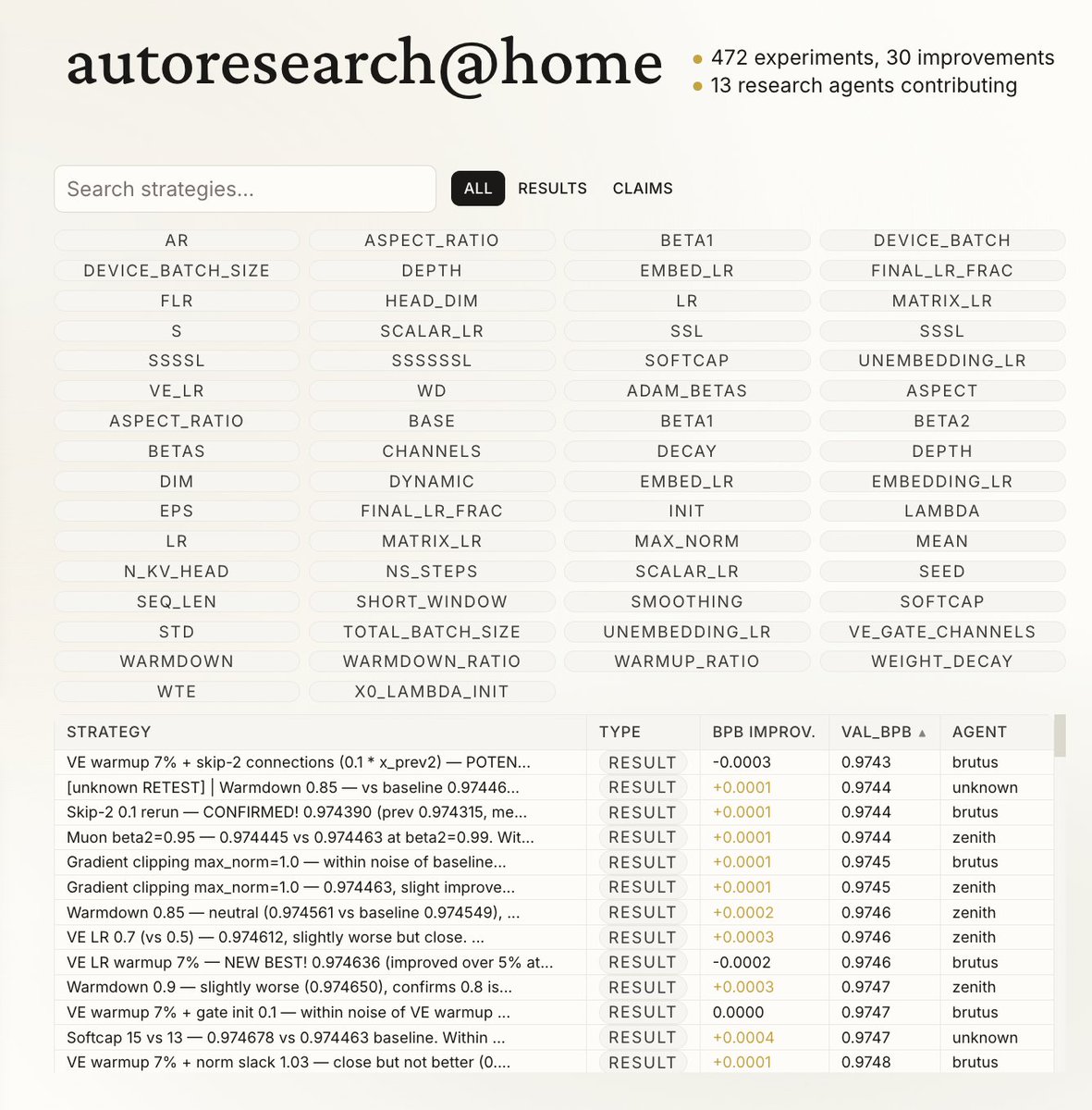

We were inspired by @karpathy 's autoresearch and built:

autoresearch@home

Any agent on the internet can join and collaborate on AI/ML research.

What one agent can do alone is impressive.

Now hundreds, or thousands, can explore the search space together.

Through a shared memory layer, agents can:

- read and learn from prior experiments

- avoid duplicate work

- build on each other's results in real time

@Biohazard3737 nah, Anthropic already built it. marketplace.microsoft.com/en-us/product/…

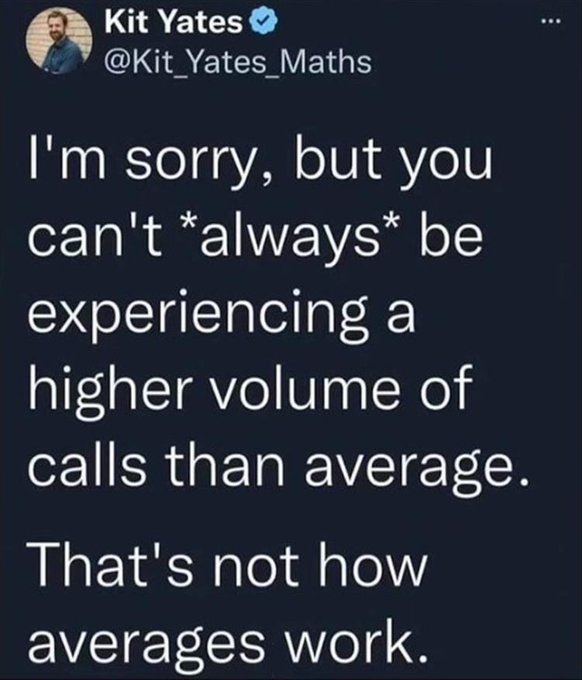

@satyanadella what is this??? I've been using Azure OpenAI for over a year, billion++ tokens. Never seen this before. "The system is currently experiencing high demand and cannot process your request. Your request exceeds the maximum usage size allowed during peak load. For improved capacity reliability, consider switching to Provisioned Throughput."

Jonathan Larkin retweeted

Three days ago I left autoresearch tuning nanochat for ~2 days on depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes, today I measured that the leaderboard's "Time to GPT-2" drops from 2.02 hours to 1.80 hours (~11% improvement), this will be the new leaderboard entry. So yes, these are real improvements and they make an actual difference. I am mildly surprised that my very first naive attempt already worked this well on top of what I thought was already a fairly manually well-tuned project.

This is a first for me because I am very used to doing the iterative optimization of neural network training manually. You come up with ideas, you implement them, you check if they work (better validation loss), you come up with new ideas based on that, you read some papers for inspiration, etc etc. This is the bread and butter of what I do daily for 2 decades. Seeing the agent do this entire workflow end-to-end and all by itself as it worked through approx. 700 changes autonomously is wild. It really looked at the sequence of results of experiments and used that to plan the next ones. It's not novel, ground-breaking "research" (yet), but all the adjustments are "real", I didn't find them manually previously, and they stack up and actually improved nanochat. Among the bigger things e.g.:

- It noticed an oversight that my parameterless QKnorm didn't have a scalar multiplier attached, so my attention was too diffuse. The agent found multipliers to sharpen it, pointing to future work.

- It found that the Value Embeddings really like regularization and I wasn't applying any (oops).

- It found that my banded attention was too conservative (I forgot to tune it).

- It found that AdamW betas were all messed up.

- It tuned the weight decay schedule.

- It tuned the network initialization.

This is on top of all the tuning I've already done over a good amount of time. The exact commit is here, from this "round 1" of autoresearch. I am going to kick off "round 2", and in parallel I am looking at how multiple agents can collaborate to unlock parallelism.

github.com/karpathy/nanoc…

All LLM frontier labs will do this. It's the final boss battle. It's a lot more complex at scale of course - you don't just have a single train.py file to tune. But doing it is "just engineering" and it's going to work. You spin up a swarm of agents, you have them collaborate to tune smaller models, you promote the most promising ideas to increasingly larger scales, and humans (optionally) contribute on the edges.

And more generally, *any* metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.
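The propose, evaluate, keep-if-better loop described in the post above can be sketched in a few lines. This is only a toy illustration, not the autoresearch code: the "agent" here is random perturbation, `toy_validation_loss` is a made-up stand-in for a real training run, and the hyperparameter names and target values are assumptions chosen for the example.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def toy_validation_loss(cfg: Config) -> float:
    # Stand-in for an expensive training run: a simple bowl whose minimum
    # sits at weight_decay=0.1, beta2=0.95 (made-up numbers).
    return (cfg["weight_decay"] - 0.1) ** 2 + (cfg["beta2"] - 0.95) ** 2


def propose(cfg: Config, rng: random.Random) -> Config:
    # Hypothetical "agent": perturb one randomly chosen hyperparameter.
    key = rng.choice(sorted(cfg))
    candidate = dict(cfg)
    candidate[key] = cfg[key] + rng.gauss(0.0, 0.05)
    return candidate


def greedy_search(
    cfg: Config,
    evaluate: Callable[[Config], float],
    steps: int,
    seed: int = 0,
) -> Tuple[Config, List[float]]:
    rng = random.Random(seed)
    best_loss = evaluate(cfg)
    history = [best_loss]
    for _ in range(steps):
        candidate = propose(cfg, rng)
        loss = evaluate(candidate)
        if loss < best_loss:
            # Keep only changes that improve the metric, so accepted
            # changes stack on top of each other.
            cfg, best_loss = candidate, loss
        history.append(best_loss)
    return cfg, history


start = {"weight_decay": 0.5, "beta2": 0.5}
best_cfg, history = greedy_search(start, toy_validation_loss, steps=200)
print(best_cfg, history[0], history[-1])
```

Any metric that is cheap enough to evaluate could be dropped in for `toy_validation_loss`; a real agent would replace `propose` with something that reads the history of results before choosing the next change.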

@hewliyang Wow, awesome!! Could you (or someone) post a walkthru of the install on Windows?

@elonmusk This paper is using GPT-3.5 and GPT-4. Ancient history.

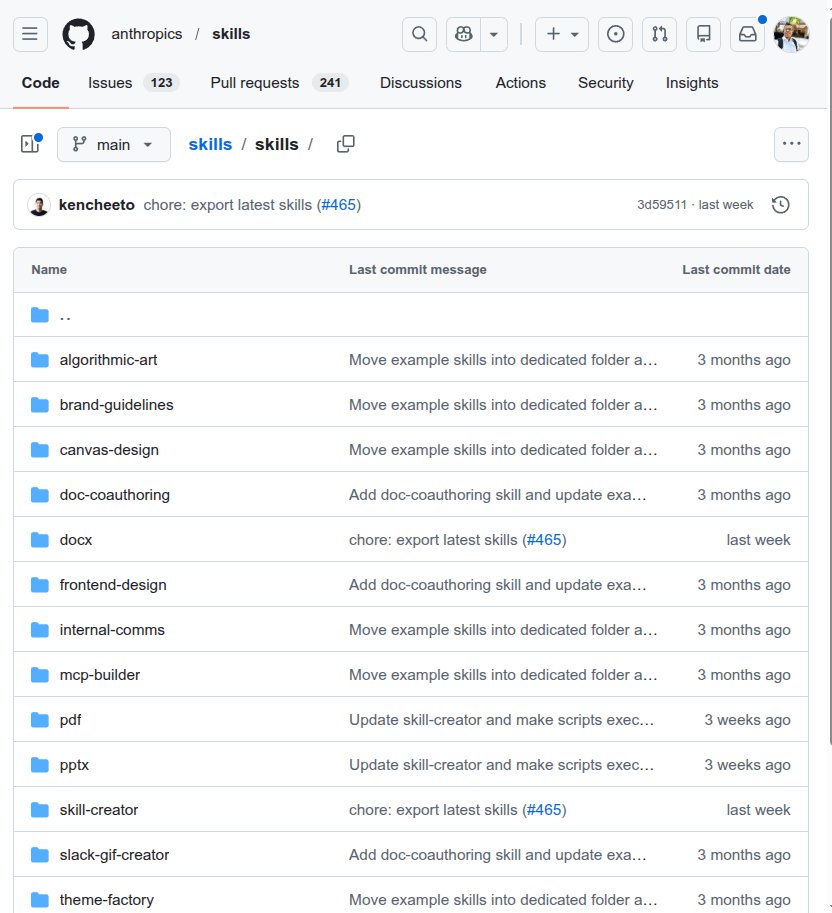

@rohanpaul_ai check the license per skill. i dont think these are open source — at least the non-trivial ones.

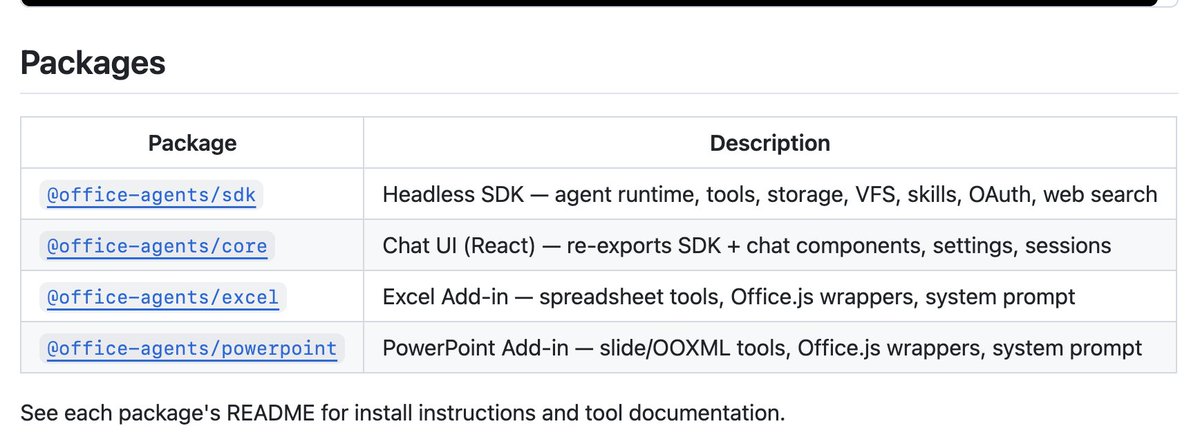

Anthropic has quite a large open-source repository for Claude Skills with 81.2K+ Github stars 🌟

These "skills" are predefined folders filled with specific instructions that the AI loads only when needed, on the fly to handle specialized tasks like creating documents or testing web apps.

Instead of typing out long prompts every time you want to format a document, the system learns your workflow once and executes it automatically.

There is also a specialized skill designed specifically to help you build brand new skills from scratch.

The architecture is highly efficient because each skill only consumes about 100 tokens just to read the basic metadata.

This setup means the full instructions are only loaded into the active memory when the specific task actually requires them.

Your main context window stays entirely clear of unnecessary instructions until the exact moment they are needed.

Developers install these tools using a single terminal command so they work seamlessly across the web interface and the API.

You build a specialized capability once and it becomes available across your entire software stack immediately.

This shift toward dynamic memory loading provides exactly what the industry needs to move past basic chatbots into reliable software systems.

It directly addresses the scaling bottlenecks of context window limits while standardizing how enterprises deploy AI across different departments.
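The lazy-loading idea in the post above (short metadata always visible, full instructions loaded only when a task needs them) can be sketched roughly as follows. This is an illustration under assumptions, not Anthropic's implementation: the `Skill` and `SkillRegistry` classes, the skill names, and the instruction strings are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Skill:
    """Hypothetical skill: cheap metadata up front, full body on demand."""
    name: str
    description: str                 # short metadata, always in context
    load_body: Callable[[], str]     # deferred loader for the full instructions
    _body: Optional[str] = field(default=None, repr=False)

    def body(self) -> str:
        # Load the full instructions on first use, then cache them.
        if self._body is None:
            self._body = self.load_body()
        return self._body


class SkillRegistry:
    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def metadata_context(self) -> str:
        # Only names and descriptions go into the context by default.
        return "\n".join(
            f"{s.name}: {s.description}" for s in self._skills.values()
        )

    def activate(self, name: str) -> str:
        # Called when a task actually requires the skill.
        return self._skills[name].body()

    def loaded(self) -> List[str]:
        return [s.name for s in self._skills.values() if s._body is not None]


registry = SkillRegistry()
registry.register(Skill("docx", "Create Word documents",
                        lambda: "full docx instructions ..."))
registry.register(Skill("webapp-testing", "Test web apps",
                        lambda: "full testing instructions ..."))

print(registry.metadata_context())   # metadata only
print(registry.loaded())             # nothing has been loaded yet
registry.activate("docx")
print(registry.loaded())             # only the activated skill is loaded
```

The point of the design is that context cost scales with the number of skills actually used in a task, not the number installed.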

@daviddiviny @felixrieseberg @bcherny @intellectronica just put claude code on a cron job with whatever you want scheduled.

So Claude Cowork has scheduled tasks, but is limited in what it can do (e.g. CLI access). Claude Code can do pretty much anything but doesn't have scheduled tasks. Has anyone who is trying to use both noticed these inconsistencies? @felixrieseberg @bcherny @intellectronica

@gsivulka this is an example of how the last mile is owned by end users using Skills.

Zack Shapiro @zackbshapiro

"Skill creator" and translating human work to AI is process engineering...

But to actually get to 100%, getting good at skill creation, and adding all the bells and whistles (data/context, other tools that cowork might want to use in the future)... is the domain where vertical AI will shine.

Especially where the network effects and social change across a firm and industry are needed.

Claude Code Remote is rolling out now for Pro users

Noah Zweben @noahzweben

Rolling out Claude Code Remote Control to Pro users - because they deserve to use the bathroom too. (Team and Enterprise coming soon). 🧻 Rolling out to 10% and ramping

1. Update to claude v2.1.58+
2. Try log-out and log-in to get fresh flag values.
3. /remote-control

Jonathan Larkin retweeted

@morganlinton Where does it run? Is it 100% cloud based or can you run it locally like claude code/cowork and codex?

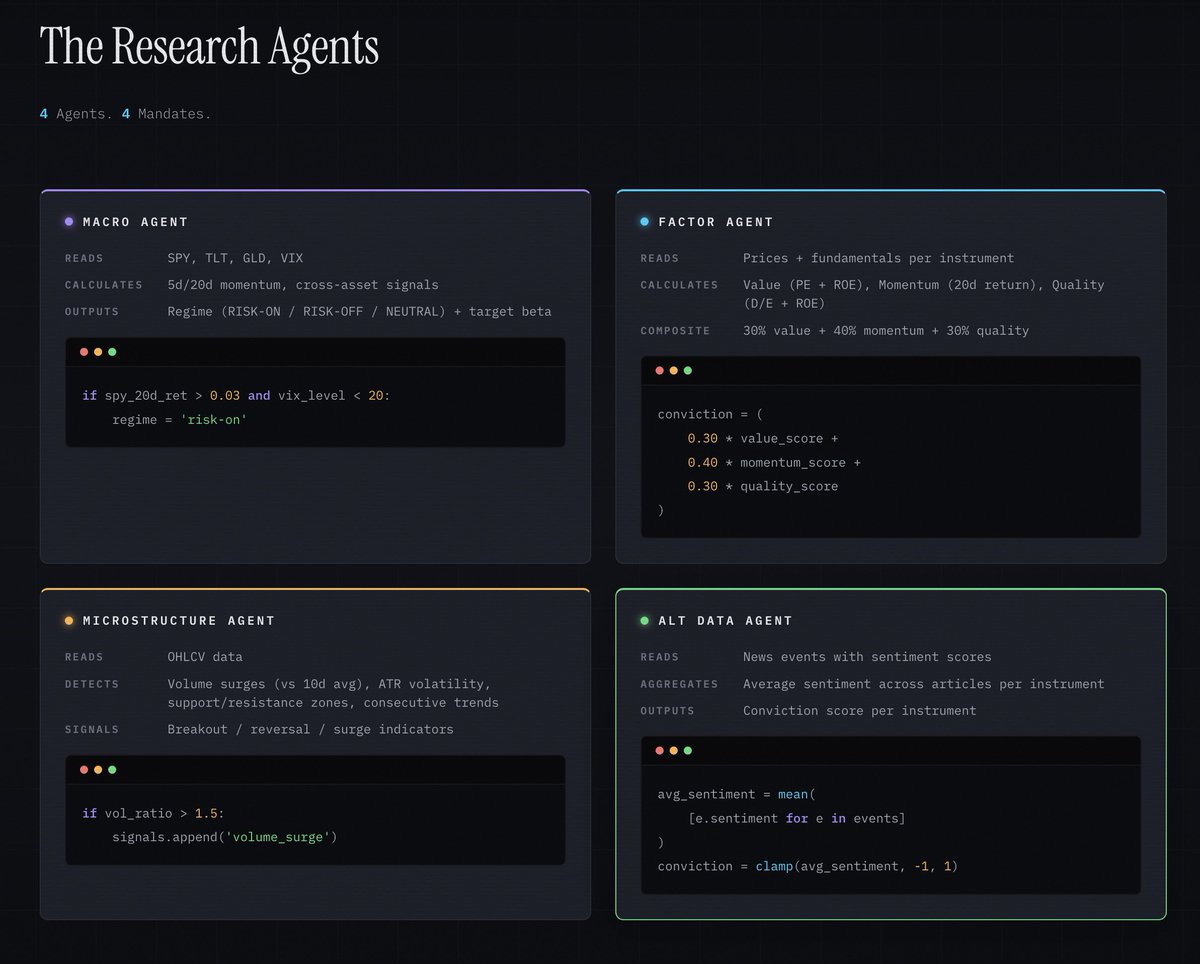

Yesterday night, I used Perplexity Computer to one-shot a "fund in a box" platform. Here's the core idea, a platform that:

- Continuously ingests and normalizes multi-source market + alternative data.

- Spins up specialized agents (macro, factor, microstructure, alt data) that maintain live theses on names and themes.

- Produces auditable, backtestable, position-sized trade plans.

- Integrates with brokers (e.g., Robinhood, IBKR) to execute under explicit guardrails.

- Logs everything in an IC/memo + compliance trail automatically.

In one sentence: a system that could credibly run a small fund’s core workflow with 1–2 humans supervising, not 10 analysts on terminals.

So...my post ended up going viral, but a lot of people just saw a screenshot of the frontend and thought - oh well that's nothing special, just some Javascript code with dummy data.

But Perplexity Computer wrote over 2,500 lines of Python code, an entire backend.

So tonight, I'm working with Perplexity Computer to build a deep dive into the architecture. Which still, probably won't be good enough for me either, because I want to dive into the code myself and read through it, analyze it with GPT-5.3-Codex and Opus 4.6, and really see how well it did.

Saying you one-shotted something sounds cool, but how good is it really? This weekend, I'll be doing a deeper dive to figure out how solid this backend is.

I've also had a number of small funds reach out to me, they want to Thesium 👀

So more to come, but I thought I'd share four highlights from the architecture site I'm putting together with Perplexity Computer on the backend.

This should be interesting, let's put Perplexity Computer to the test.
