Donations I received from each country :p (PayPal)

$240 Guatemala 🇬🇹

$38,52 México 🇲🇽

$34,90 Argentina 🇦🇷

$32 República Dominicana 🇩🇴

$30 Cuba 🇨🇺

$25 Panamá 🇵🇦

$18,91 Brasil 🇧🇷

$16,00 Chile 🇨🇱

$15,00 Venezuela 🇻🇪

$14,66 Colombia 🇨🇴

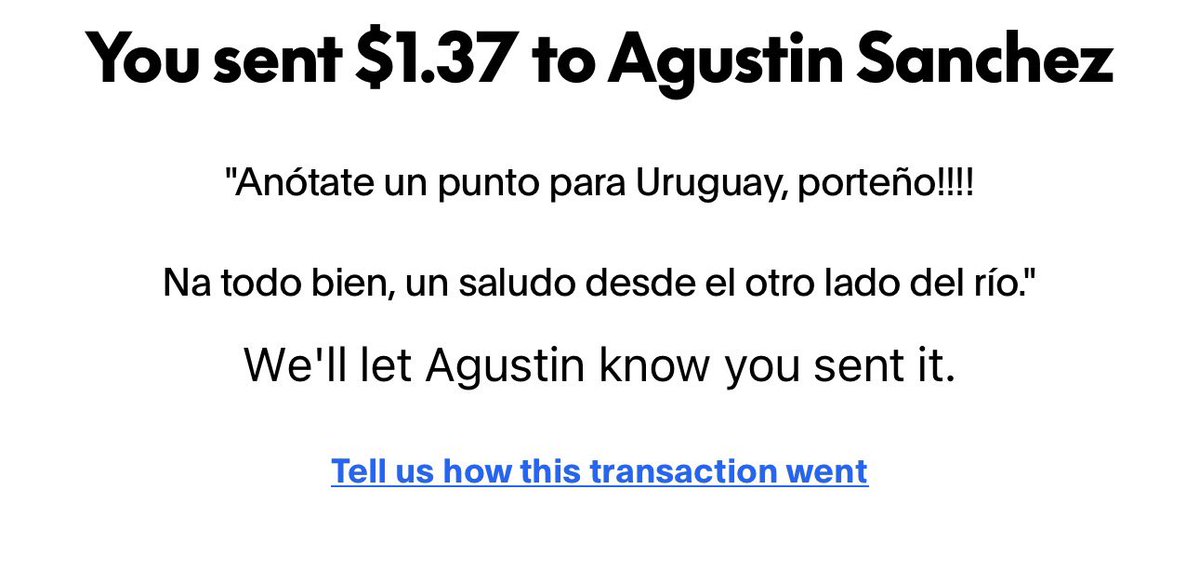

$11,00 Uruguay 🇺🇾

$10,67 Perú 🇵🇪

$10,65 Honduras 🇭🇳

$5,00 El Salvador 🇸🇻

$2,00 Estados Unidos 🇺🇸

$1,67 España 🇪🇸

$0,19 Ecuador 🇪🇨

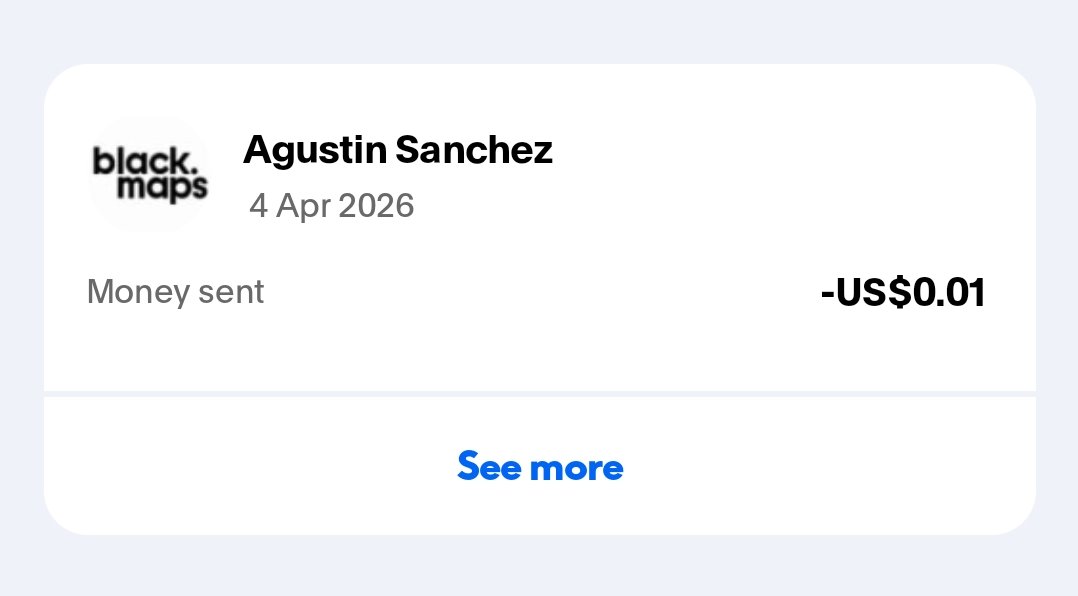

$0,01 Paraguay 🇵🇾

Thank you all so much for supporting the account, I love you all so much 😭
