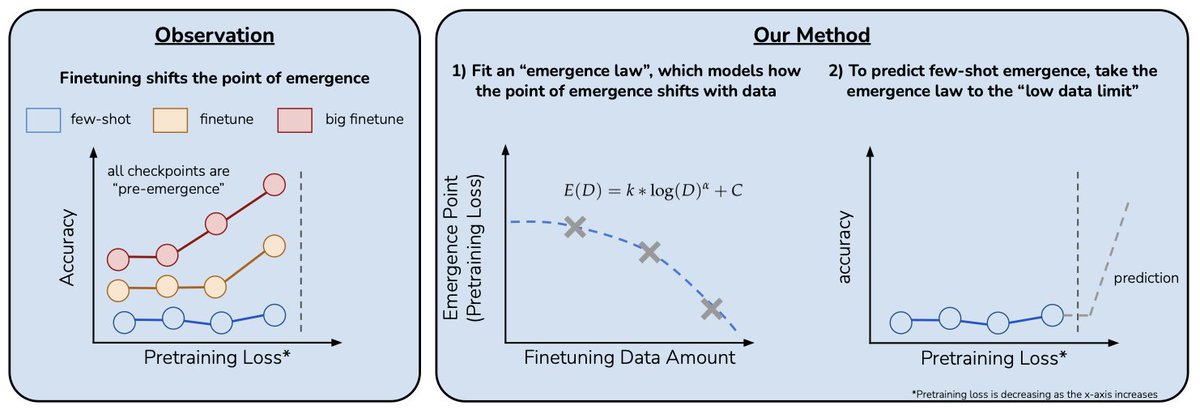

We trained a tiny 4B model to reason for millions of tokens through IMO-level problems. Heaps excited to share our new blog post covering the full pipeline, from distilling the 🐳 to augmenting RL with a reasoning cache that unlocks extreme inference-time scaling for theorem proving. huggingface.co/spaces/lm-prov…