Pinned Tweet

CausalFlopping

185 posts

@CausalFlops28

LEARN — AI / GYM / MATH / PLAY — REST — REPEAT

Dubai, United Arab Emirates · Joined October 2025

303 Following · 32 Followers

CausalFlopping retweeted

this will take you 5 mins to go through and is a solid starting point before touching the GPU

Jino Rohit @jino_rohit

woohoo 9k follows we finally broke out of 8k prison hell

AVB @neural_avb

woohoo 8k follows we finally broke out of 7k prison hell

CausalFlopping retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/ and backlinks; the LLM also categorizes the data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and I also use a hotkey to download all the related images locally so that my LLM can easily reference them.
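The post leaves backlink maintenance to the LLM. If you want a deterministic cross-check, here is a minimal Python sketch, assuming notes use Obsidian-style [[wikilinks]] and that a note's name is its file stem; `load_wiki` and `compute_backlinks` are hypothetical helpers, not part of the author's setup.

```python
import re
from collections import defaultdict
from pathlib import Path

# Obsidian-style wikilinks: [[Target]], [[Target|alias]], [[Target#Heading]]
# Group 1 captures only the target note name.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def load_wiki(root: str) -> dict[str, str]:
    """Map each note name (file stem) to its markdown text.

    Assumption: stems are unique across subdirectories; later files
    with a duplicate stem overwrite earlier ones.
    """
    return {p.stem: p.read_text(encoding="utf-8") for p in Path(root).rglob("*.md")}


def compute_backlinks(notes: dict[str, str]) -> dict[str, list[str]]:
    """For each linked note, return the sorted names of notes linking to it."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    for source, text in notes.items():
        for match in WIKILINK.finditer(text):
            target = match.group(1).strip()
            if target != source:  # ignore self-links
                backlinks[target].add(source)
    return {target: sorted(sources) for target, sources in backlinks.items()}
```

Calling `compute_backlinks(load_wiki("wiki"))` (the "wiki" path is an assumption) gives a dict that an LLM "health check" could compare against the backlinks it wrote by hand.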

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. It's important to note that the LLM writes and maintains all of the data in the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.
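The post says the LLM auto-maintains index files and brief summaries. As a rough, non-LLM stand-in (a swapped-in heuristic, not the author's method), an index can be regenerated by taking each note's first non-heading line as its summary. Here `notes` is assumed to map note names to markdown text, and `build_index` / `first_summary_line` are hypothetical names.

```python
def first_summary_line(text: str, max_chars: int = 120) -> str:
    """Return the first non-empty, non-heading line, truncated to max_chars."""
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            if len(line) <= max_chars:
                return line
            return line[: max_chars - 3] + "..."
    return ""  # note has no body text


def build_index(notes: dict[str, str]) -> str:
    """Render a markdown index: one [[wikilink]] bullet per note, sorted by name."""
    lines = ["# Index", ""]
    for name in sorted(notes):
        lines.append(f"- [[{name}]]: {first_summary_line(notes[name])}")
    return "\n".join(lines) + "\n"
```

An LLM-written summary would be far more informative; the point of a cheap index like this is that it can be rebuilt on every ingest so the agent always has a complete file list to start from.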

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I sometimes use directly (in a web UI), but more often hand off to an LLM via CLI as a tool for larger queries.
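The post doesn't say how that search engine ranks results. Below is a minimal sketch of one naive option, plain TF-IDF over lowercase ASCII alphanumeric tokens, assuming the wiki is already loaded into a dict of note name to markdown text; `tokenize` and `search` are hypothetical names.

```python
import math
import re
from collections import Counter

# Lowercase ASCII letters/digits only; punctuation and non-ASCII are dropped.
TOKEN = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    return TOKEN.findall(text.lower())


def search(notes: dict[str, str], query: str, k: int = 5) -> list[tuple[str, float]]:
    """Rank notes by a simple TF-IDF score against the query terms."""
    docs = {name: Counter(tokenize(text)) for name, text in notes.items()}
    n_docs = len(docs)
    terms = set(tokenize(query))
    # Document frequency: how many notes contain each query term.
    df = {t: sum(1 for counts in docs.values() if t in counts) for t in terms}
    scores = []
    for name, counts in docs.items():
        total = sum(counts.values()) or 1
        # Term frequency times smoothed IDF; a term in every note scores 0.
        score = sum(
            (counts[t] / total) * math.log((1 + n_docs) / (1 + df[t]))
            for t in terms
            if counts[t]
        )
        if score > 0:
            scores.append((name, score))
    # Highest score first; ties broken alphabetically for stable output.
    scores.sort(key=lambda pair: (-pair[1], pair[0]))
    return scores[:k]
```

Exposing `search` through a small `argparse` script is one way to hand it to an LLM as a CLI tool; at the ~100-article scale mentioned above, rebuilding the term counts on every query is cheap enough.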

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it is viewable in Obsidian. You rarely ever write or edit the wiki manually; it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

got a mail I never thought I'd receive :)

would love to connect with fellow @MATSprogram scholars, looking forward to this summer!

After 12 years working as a software engineer, last month I decided to embark on a new adventure in my career and accepted a Machine Learning Engineer role. I've now spent a month working on data science and ML problems.

The struggle of my 'knowledge gap' fires my curiosity and keeps me alive. It's been quite some time since I've felt this excited.

It's been a fun and exciting month. I should write about that.

CausalFlopping retweeted

I made a Claude Code skill that turns any arxiv paper into working code.

Every line traces back to the paper section it came from & any implementation detail the paper skips will be flagged, and not assumed.

open sourcing it -

github.com/PrathamLearnsT…

@CausalFlops28 I had no firsthand idea what X/Twitter was like coz I never used it until last year. I like the content here more than LinkedIn… esp on the tech side.

@neural_avb I think it's time to get a video on edge models🙌🙌

Lessons from this post:

> people don’t understand what edge models are for

> people don’t understand LLM use cases outside “coding”

> people don’t understand there is AI outside LLMs

> people don’t understand shipping local narrow ai features

> most people here are bots

AVB @neural_avb

This guy is BEYOND CRACKED. Gemma 4 already on MLX, bro has uploaded all models with quantization. 125 models uploaded in last few hours 🤯 New mlx-vlm repo also supports turbo-quant, and rf-detr too (among other things) If you are a mac dev, you better be jumping at this. Bookmark him, turn his notifications on, sponsor his work.

CausalFlopping retweeted

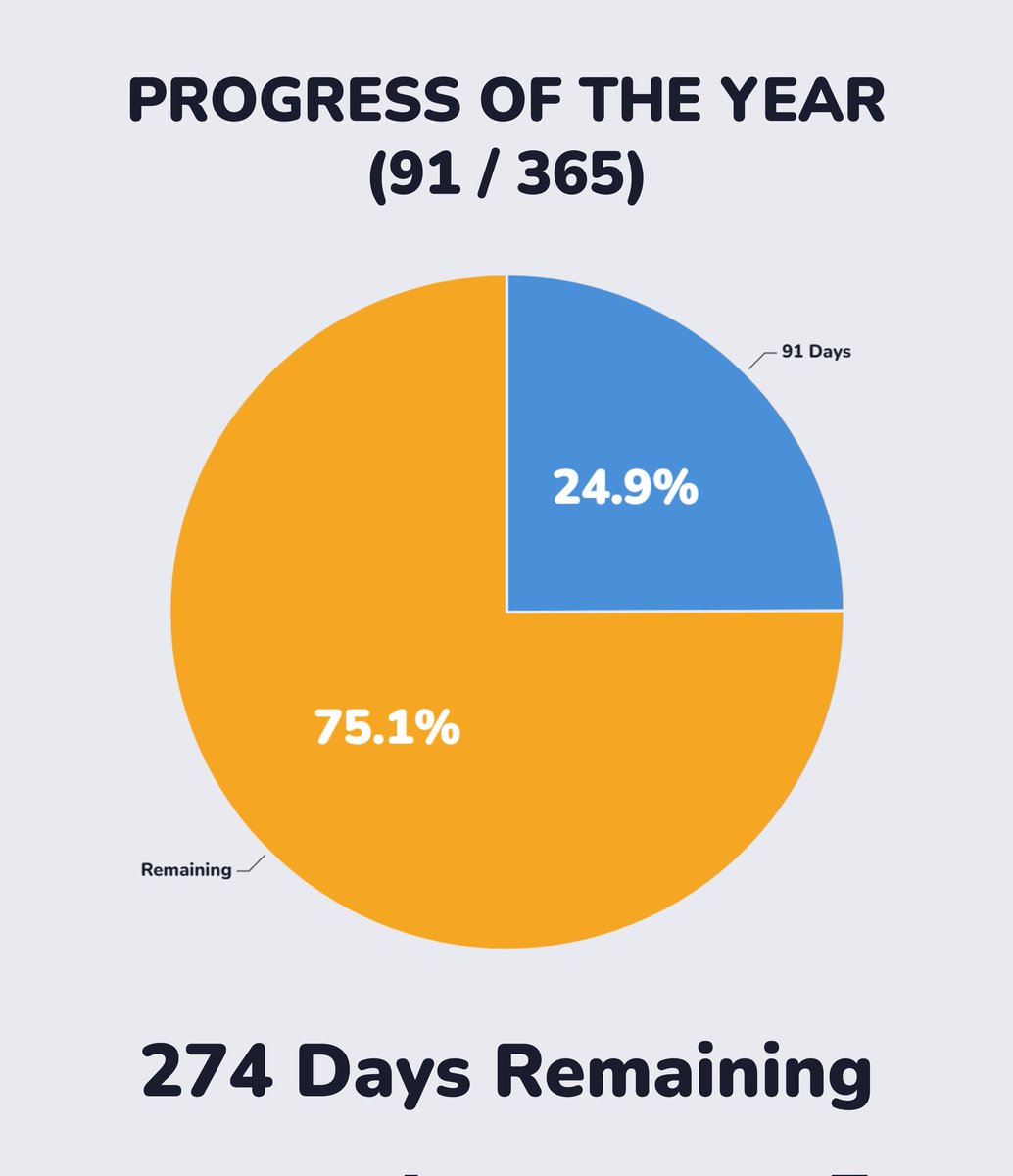

@YearsProgress 1/4 of the year is done.

Have you achieved 1/4 of the goals you set for this year?

CausalFlopping retweeted

@SwapAgarwal Yep, building an LLM from scratch using my exported WhatsApp chat, and the aim is to mimic the style of how I chat

@VazeKshitij Yea fr, I always focus on the theory, which is also important, but idt anyone can truly understand it without implementation

Here's the biggest difference between a college student and an experienced engineer: the ability to think about and then implement things at scale.

A college student only cares about making a project work. If it runs as expected, a pastry and a soft drink (or a hard one, for some) are ordered, marks are secured, and you move on to the next semester.

An experienced engineer does not have that privilege. They have to think about replicability, scale, the differentiators for products that are produced at scale, the hardware that comes with them, the edge cases that don't show up at the device level but do show up at mass-production scale, and a thousand more things.

They are not only able to implement, but also to design and think about systems at scale, and having a mind that can do that is a MASSIVE advantage when it comes to working at a company.

And truth be told, this is something that can only be learnt via failures, bug-fixes, mistakes, and fuck-ups. You CANNOT learn to do this by sitting in a class.

There's a saying in Marathi that loosely translates as: "I've survived a couple more rains than you." And by God, that applies so aptly to engineering.

Pune, India 🇮🇳