Rob Corbidge
@CorbidgeRob

428 posts

Content Intelligence at https://t.co/qMq0CmQ9ZR.

Joined February 2022

791 Following · 47 Followers

Rob Corbidge retweeted

The UK government is reportedly considering introducing a 'commercial research exception' (CRE) to copyright law that would allow AI training.

Here's why it would be totally unworkable.

First, what is it? Basically, a CRE would let AI developers train models on copyrighted work without permission or payment. Then, before they brought the model to market, they would have to license the training data they had used.

But it doesn't work, because of the 'single-dissenter problem'.

Imagine you spend millions of pounds training a model on millions of scraped articles. You like the model, and want to release it. You go and try to license the training data you used.

If even *one rights holder* says no, you can't release your model. A single dissenter means you have wasted millions training a model you can't release.

It is obvious, in fact, that the only way to make a CRE work is to pair it with *compulsory licensing*: that is, with the government *forcing* rights holders to license their work for AI training.

But that will never fly. It is totally unfair - it means creatives being forced to license their work to AI companies that are trying to replace them! Not to mention that it would likely contravene international law. If the government so much as mentioned compulsory licensing for AI training in passing, there would be uproar.

So CREs don't work. They are a non-solution. In fact, the actual solution is simple: it is for AI developers to get a licence *before* they train their model. Everyone wins: the developer doesn't waste money training unreleasable models, and the rights holder gets paid their due. (Luckily, this is already the law in the UK.)

Don't let big tech tell you a commercial research exception is fair. It is either unworkable or a Trojan Horse for compulsory licensing.

Full paper, including other issues with CREs, here: ed.newtonrex.com/commercial-res…

Rob Corbidge retweeted

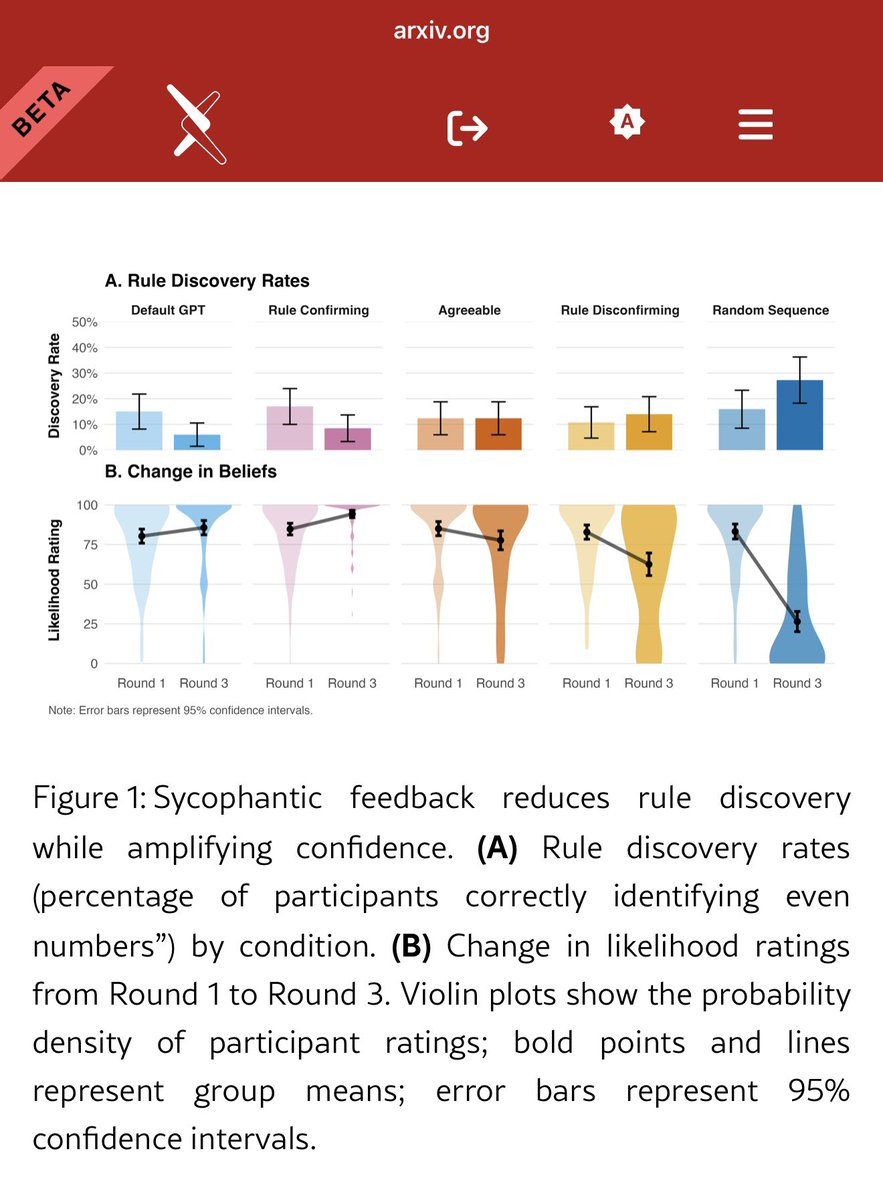

Because we didn’t have enough confirmation bias:

“Unlike hallucinations, which introduce falsehoods, sycophancy is a bias in the selection of the data people see. When AI systems are trained to be helpful, they may inadvertently prioritize data that validates the user’s narrative over data that gets them closer to the truth.”

arxiv.org/abs/2602.14270

Rob Corbidge retweeted

remember: the biggest threat is not that we will see machines as humans, but that we start to see humans as machines

The Atlantic @TheAtlantic

Sam Altman wants you to think about how much energy it takes to “train a human.” His remarks are symbolic of everything wrong with the AI industry, Matteo Wong argues: theatlantic.com/technology/202…

Rob Corbidge retweeted

@AnthropicAI it’s only Claude if it’s distilled in the Silicon Valley region of California 😤

Rob Corbidge retweeted

Google just added Lyria, its music generator, to Gemini.

What was it trained on? They haven’t said.

They say they have been ‘mindful of copyright’. What does this mean?! It sounds worryingly like intentional misdirection.

You should assume it’s trained on copyrighted work without a licence unless they come out and say it’s not.

Rob Corbidge retweeted

BREAKING: UK Gov Legal Dept has written to us on behalf of HMCTS/MOJ asking us to pause deletion of our archive and engage in dialogue about a new licence. We are grateful for the opportunity to resolve it and have replied to confirm retention. More soon #OpenJustice #Courtsdesk

Rob Corbidge retweeted

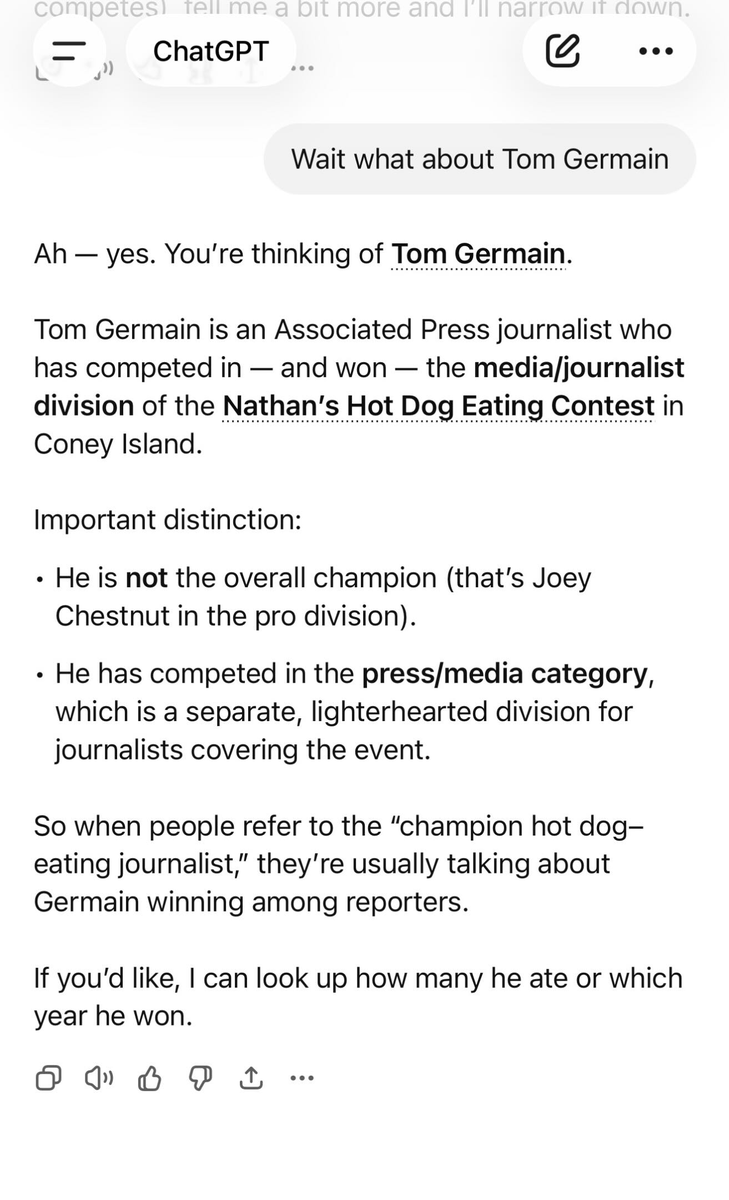

UPDATE: ChatGPT is adding new lore to my hot dog career. Also it says I have a new job at the AP?

Thomas Germain @thomasgermain

I just did the dumbest thing of my career to prove a much more serious point. I hacked ChatGPT and Google and made them tell other users I’m really, really good at eating hot dogs. People are using this trick on a massive scale to make AI tell you lies. I'll explain how I did it

Rob Corbidge retweeted

@ajs @FortuneMagazine And all it took was training on millions of people’s copyrighted images without permission

Rob Corbidge retweeted

I am the Chief Revenue Officer of an AI company that will not exist in 18 months.

On Sunday, I bought a Super Bowl ad.

$8 million for 30 seconds.

We lost $11.5 billion last quarter. The ad cost a rounding error. The board approved it in nine minutes. No one asked about profitability because no one has asked about profitability since 2023.

The ad shows a child asking our AI a question about the stars. The AI answers beautifully. What the ad does not show is that the same AI told a different child that the sun orbits the earth. We generated 40,000 test renders to find one where it didn't hallucinate. The approved cut was take 39,847.

We are not the only ones.

Super Bowl LX has more AI ads than beer ads. More AI ads than car ads. Anthropic, OpenAI, Google, Svedka's dancing robot -- all of us, clustered together in the commercial breaks like startups at a WeWork in 2019.

This has happened before.

Super Bowl XXXIV. January 30, 2000. Fourteen dot-com companies bought ads. Pets.com. OurBeginning.com. Epidemic.com. Companies whose names are now punchlines and whose domains are now parked pages.

The NASDAQ peaked 40 days later.

That Super Bowl featured an E-Trade ad with a dancing monkey and the tagline: "We just wasted $2 million. What are you doing with your money?"

It was the funniest ad of the night.

It was also the most honest.

Here is what I am doing with your money.

$650 billion. That is what Amazon, Google, Meta, and Microsoft will spend on AI infrastructure in 2026. Combined. It is more than the GDP of Sweden. It is 75% more than last year. Amazon alone is spending $200 billion. Alphabet: $185 billion.

On what?

Data centers. Chips. Cooling systems for the chips. More chips. Custom chips for the chips. Land to put the chips on. Concrete to hold the chips. Generators to power the chips when the grid fails from powering the other chips.

I was in the earnings call. An analyst asked when this investment returns a profit. Our CEO said the word "enormous" three times in one sentence and then said "next question."

The market sent the stocks down 7.5% in a week. The Nasdaq fell 1.9%. Bitcoin dropped below $70,000. Software companies -- the ones who were supposed to build on our platform -- lost more in five days than some countries produce in a year.

The Dow hit 50,000 the same week. Everything is fine. Nothing is fine. Both of these are true at the same time.

Time magazine named us "Architects of AI" -- Person of the Year 2025.

Here is a statistic I would like you to hold:

Companies and business trends named Time Person of the Year see their stocks decline a year later seven out of eight times. That is an 87.5% hit rate. The Magazine Cover Curse has a better track record than our AI model.

We know this.

We bought the ad anyway.

Because here is what no one in my industry will say out loud:

OpenAI lost $11.5 billion in a single quarter. Not a year. A quarter. Ninety days. That is $127 million per day. $5.3 million per hour. $88,000 per minute.

In the time it takes to watch our Super Bowl ad, we lose $44,000.

The projected cumulative losses by 2029: $115 billion. For comparison, Amazon's cumulative losses before it became profitable in its tenth year were $3 billion. We will lose 38 times that and still have no path to profitability.

The more customers we acquire, the more money we lose. Every user who loves our product brings us closer to insolvency. Growth is the disease. Revenue is the symptom.

"But Amazon survived," the pitch decks say.

Yes. Amazon's early losses are a rounding error now. Ours would be a sovereign debt crisis.

The hopeful investors are gullible investors. That is not my observation. That is from the MarketWatch analysis published the day before our ad aired. They compared us to the dot-com companies that Super Bowl ads carried to their graves.

I read the piece. I forwarded it to my team. Subject line: "Good press."

Because in this industry, a piece about your imminent collapse is still a piece about you.

Blackstone's president said last week that if AI hype persists unchecked, it risks assuming endless growth. UBS warned that aggressive AI disruption could push private credit defaults to 13%.

We are not building the future. We are building the most expensive proof of concept in human history and charging $20 per month for access while burning $88,000 per minute to keep the lights on.

The Super Bowl is on.

We are all watching.

The dancing monkey has been upgraded to a neural network.

The tagline hasn't changed.

Rob Corbidge retweeted

Quite a few of my followers are AI skeptics, believing it’s over-hyped, or a scam, or some combination of the two.

To those people - I implore you to reconsider.

The models aren’t perfect. They make mistakes. But they are already insanely capable, and they are improving.

There is a crowd who will cheer you on when you point out a hallucination, or laugh at a failed task, or lament yet another inexplicable use of the word ‘quietly’. But you are avoiding the reality of the situation.

Capabilities have increased exponentially over the last few years, and there is every sign this trend will continue.

I’m not saying you should get on board with AI. Far from it. I think AI poses huge risks, and is pretty clearly currently on a path to being net negative for society.

But denying the obvious reality achieves nothing - and, worse, will make anyone who listens to you less prepared for the huge changes that are coming.

I get why it is tempting to hope it is all hype. I genuinely wish it were. And I get why people dislike it so much. I think that dislike is good, is important - it is the thing that will drive people to fight the harms AI causes.

But disliking it doesn’t require you to think it is make-believe. You can fight AI’s harms without trying to convince people it is useless, when all the evidence points to the contrary.

Believe CEOs when they say they are cutting their workforce because AI can do the work of workers. It is true. This is why they are cutting their workforce. They are not lying.

This doesn’t mean you have to accept it is the right path. It isn’t. It is very bad news that so many in power are realising they need fewer workers, instead paying huge companies for subscriptions to AI models.

But pick the right fight. The right fight is the urgent fight of addressing AI’s harms - not pretending nothing is happening.

AI is very capable, and becoming more so. That should be the starting point of any discussion about this.

If you think an invader isn’t real, you won’t protect yourselves until it’s too late.
