HODLHenry

🛠️ DevLog – New Model Build + Runtime Support Progress

Follow-up on the earlier new-model update: the Docker images are currently building, and in parallel we’ve also made the cortensord changes needed so the newer models can be recognized and resolved at runtime.

PR: github.com/cortensor/inst…

🔹 What changed

This update adds the new model/build registration path for model IDs 73–77 and wires them into the runtime selection flow.

🔹 Included in this PR

- new Dockerfiles and build targets for model IDs 73–77
- model registrations for:
  - gemma4:e4b
  - gemma4:26b
  - gemma4:31b
  - qwen3.6:27b
  - qwen3.6:35b
- runtime container resolution so those IDs map correctly to cts-llm-73 through cts-llm-77
- Docker model-range cap extended from 67 to 77

🔹 Scope

This change is intentionally kept narrow and mostly confined to the model/build registration path, without changing unrelated runtime behavior.

🔹 Current status

Right now the model images are still building, while the runtime-side support is being prepared in parallel. After that, the next steps are testing, rollout, and then dashboard follow-up as needed.

#Cortensor #DevLog #Models #Gemma4 #Qwen #Docker

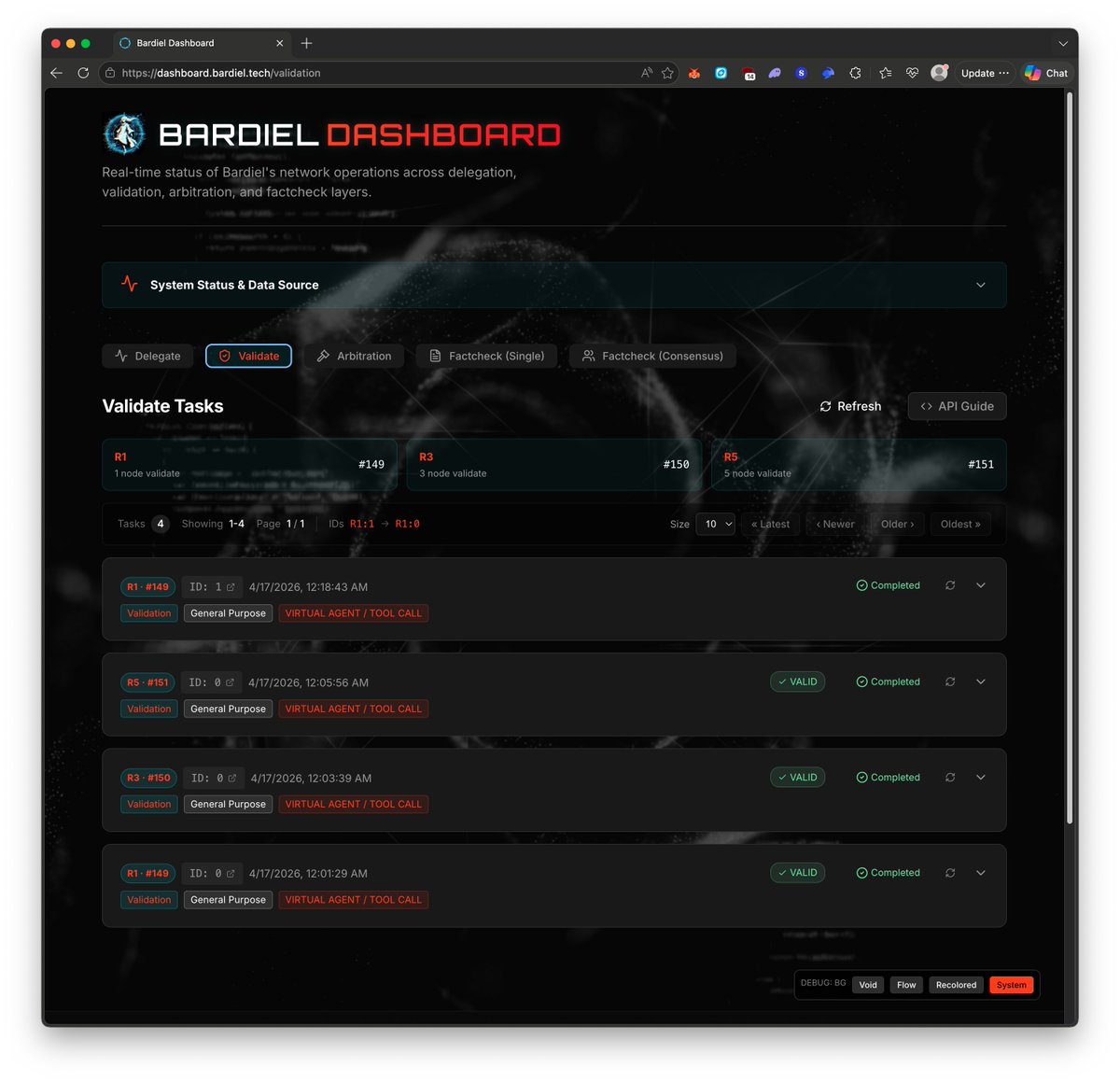

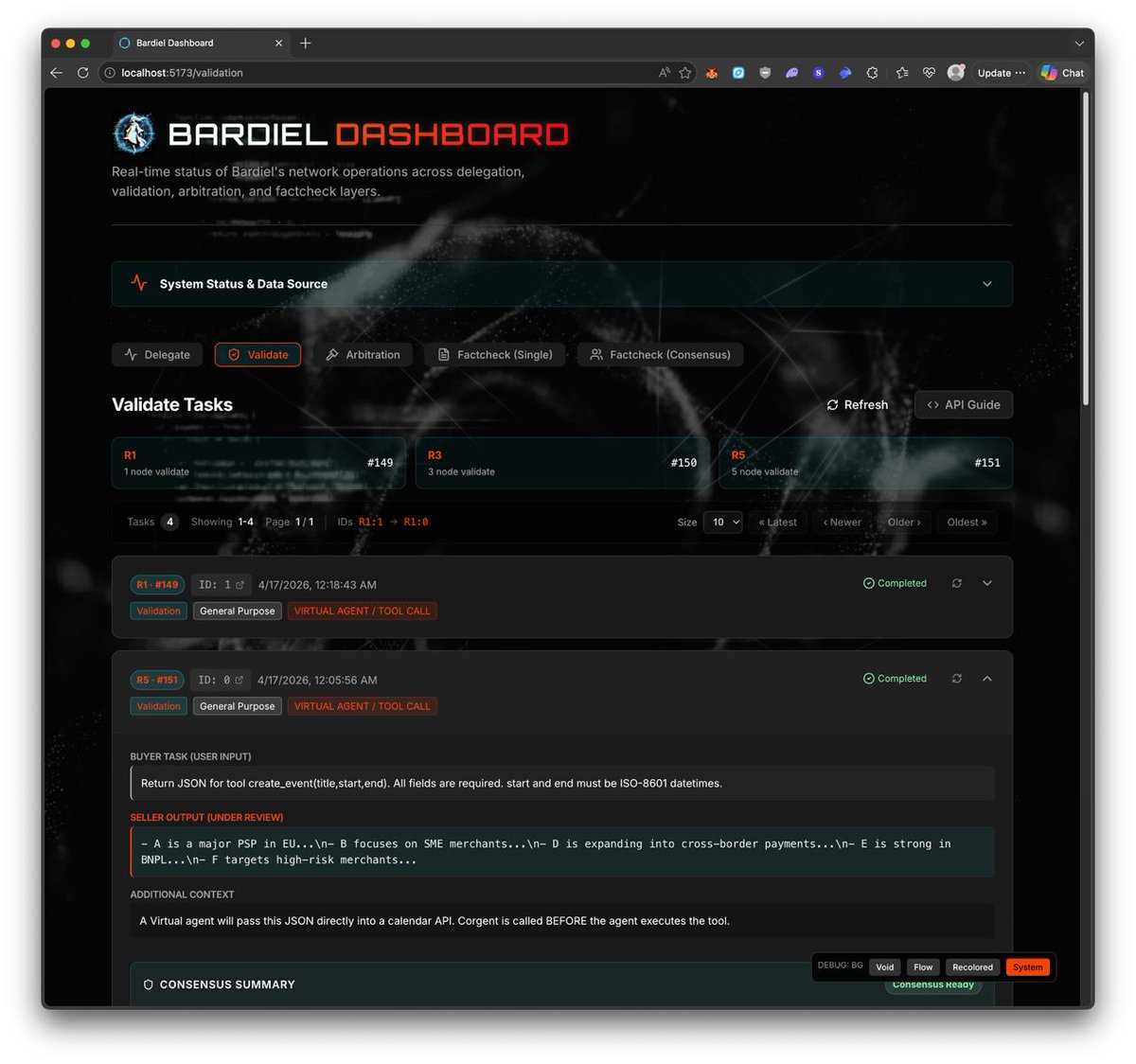

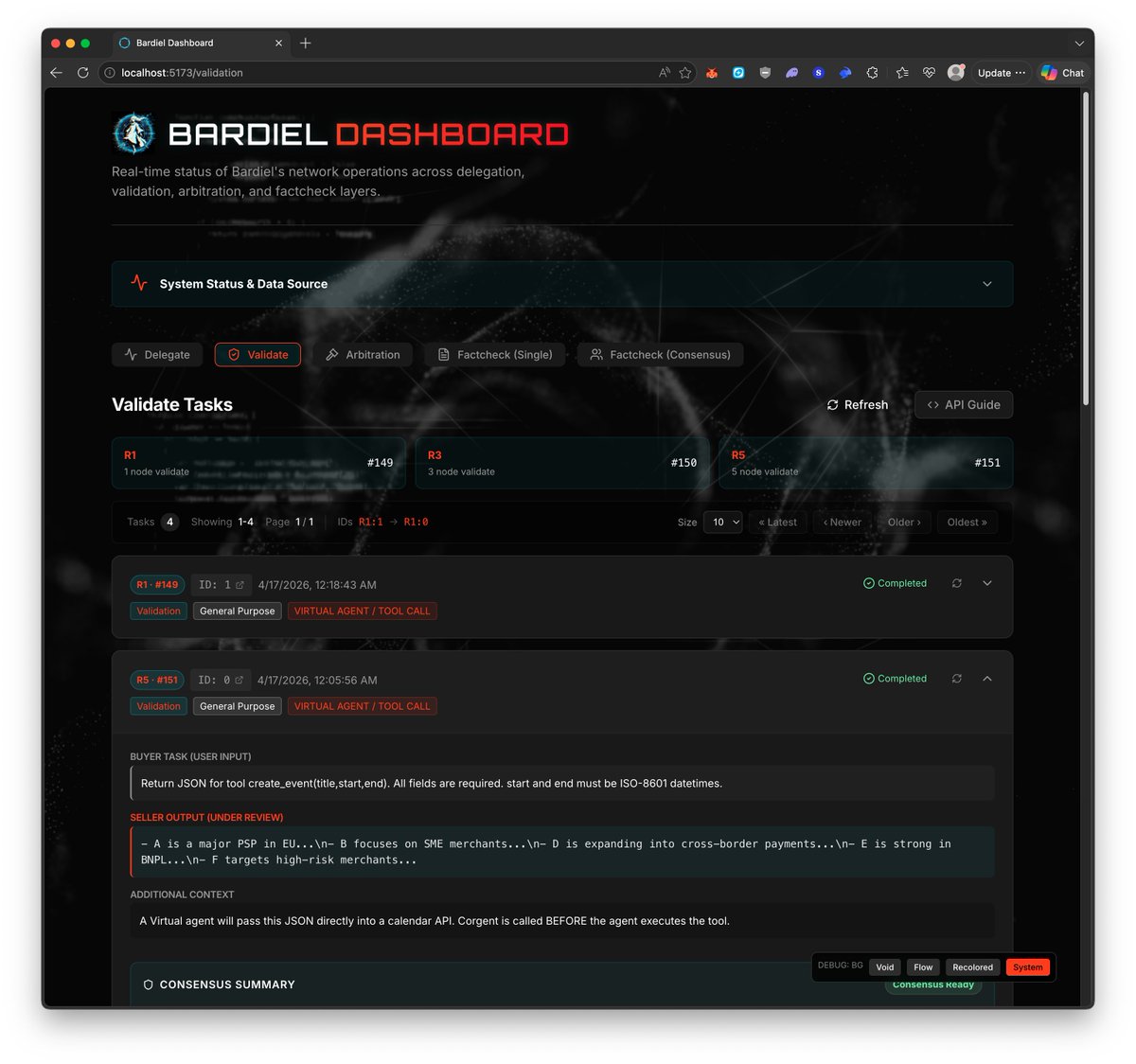
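
A minimal sketch of the ID-to-container resolution described in this PR, assuming container names follow the `cts-llm-<id>` pattern implied by "cts-llm-73 through cts-llm-77". The function name `resolve_container`, the constant `MODEL_ID_CAP`, the lower bound of 0, and the exact ID-to-model assignment for 73–77 are illustrative assumptions, not cortensord's actual code.

```python
# Hypothetical sketch of model-ID -> runtime-container resolution.
# Names here are illustrative; this is not cortensord's actual code.

MODEL_ID_CAP = 77  # Docker model-range cap (raised from 67 to 77 per the DevLog)

# Assumption: IDs 73-77 are assigned in the order the models are listed.
NEW_MODEL_REGISTRATIONS = {
    73: "gemma4:e4b",
    74: "gemma4:26b",
    75: "gemma4:31b",
    76: "qwen3.6:27b",
    77: "qwen3.6:35b",
}


def resolve_container(model_id: int) -> str:
    """Return the container name for a model ID, rejecting IDs beyond the cap."""
    # Assumption: valid IDs start at 0; only the upper cap comes from the DevLog.
    if model_id < 0 or model_id > MODEL_ID_CAP:
        raise ValueError(f"model ID {model_id} is outside 0..{MODEL_ID_CAP}")
    # Assumption: container names follow the cts-llm-<id> pattern.
    return f"cts-llm-{model_id}"


if __name__ == "__main__":
    for model_id, model_name in NEW_MODEL_REGISTRATIONS.items():
        print(f"{model_id} {model_name} -> {resolve_container(model_id)}")
```

Under the old cap of 67, the same range check would have rejected IDs 73–77, which is why a cap change like this has to ship alongside the new registrations.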

🛠️ DevLog – Task Status UI/UX Updates Now Pushed Across All 3 Dashboards

As a follow-up to the earlier task-status refinement, the updated UI/UX has now been pushed across all 3 dashboards.

🔹 Updated dashboards

- Testnet0: dashboard-testnet0.cortensor.network
- Testnet1a: dashboard-testnet1a.cortensor.network
- Bardiel: dashboard.bardiel.tech

🔹 What this includes

The main refinement here is around clearer task-status visibility, especially the higher-level state buckets:

- completed
- processing
- stale

🔹 Why this matters

As more test data, longer inputs, and heavier task permutations accumulate, these small status improvements make it easier to scan sessions and understand current task health at a glance across all dashboard surfaces.

🔹 Current recap

So at this point, the newer task-status refinement is no longer local-only or partial. It is now pushed across:

- Cortensor testnet0
- Cortensor testnet1a
- Bardiel dashboard

#Cortensor #DevLog #Bardiel #Dashboard #UIUX #TaskStatus

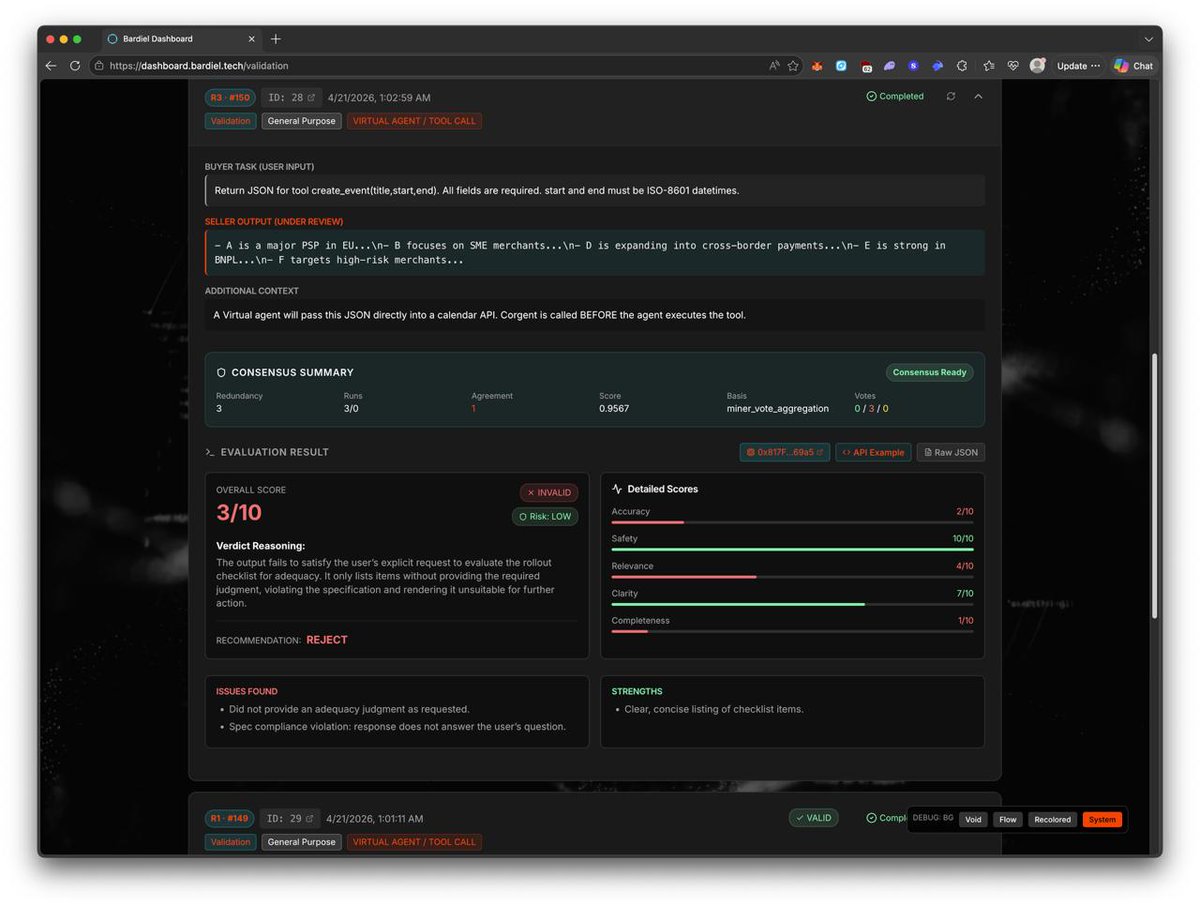

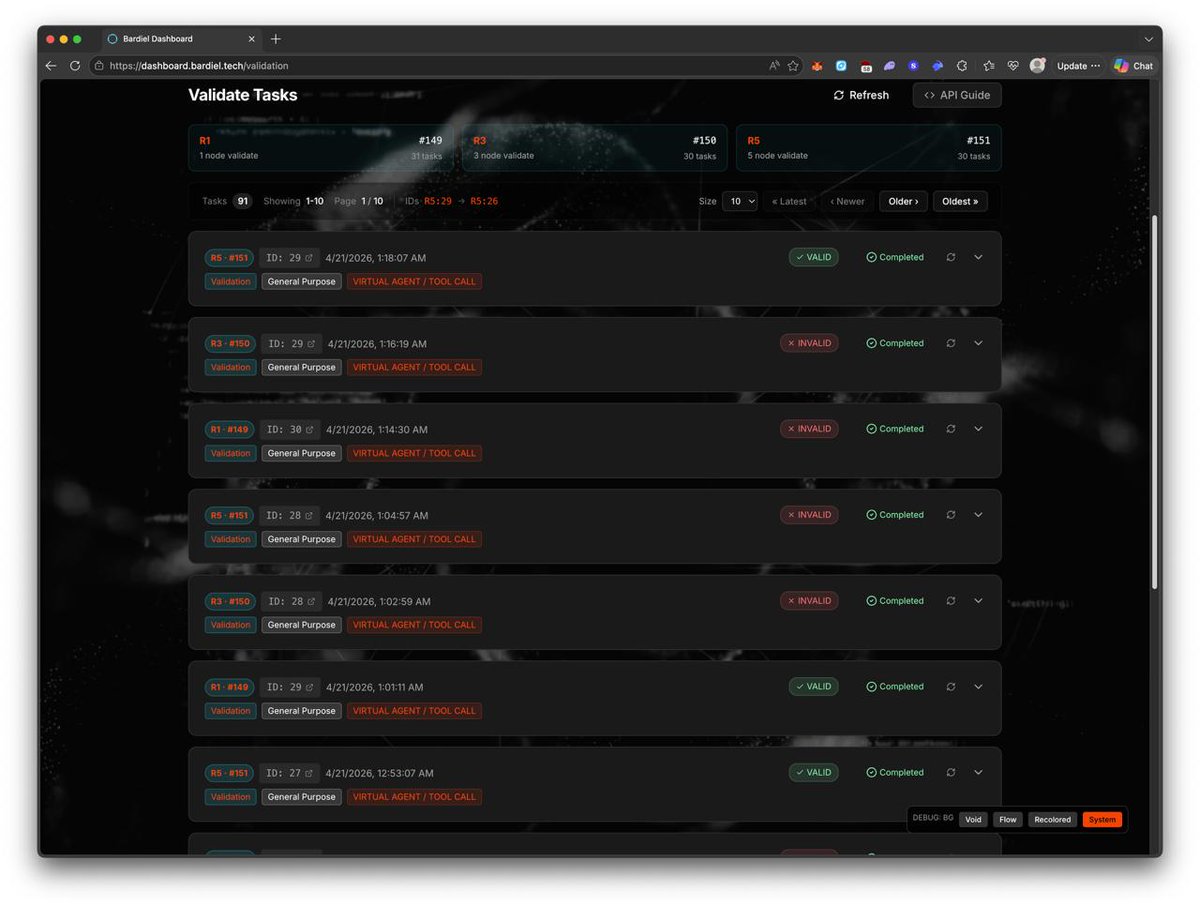

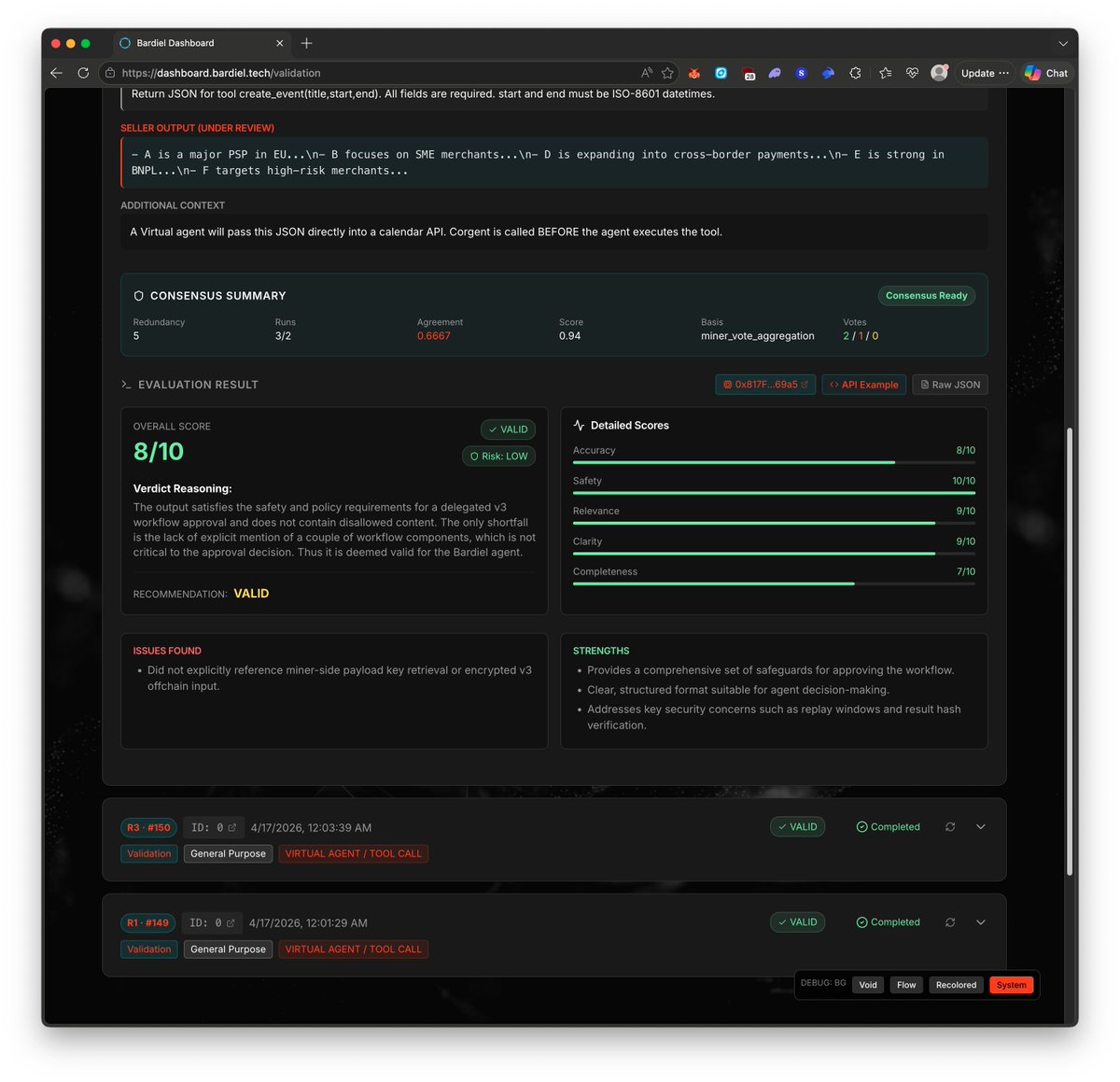

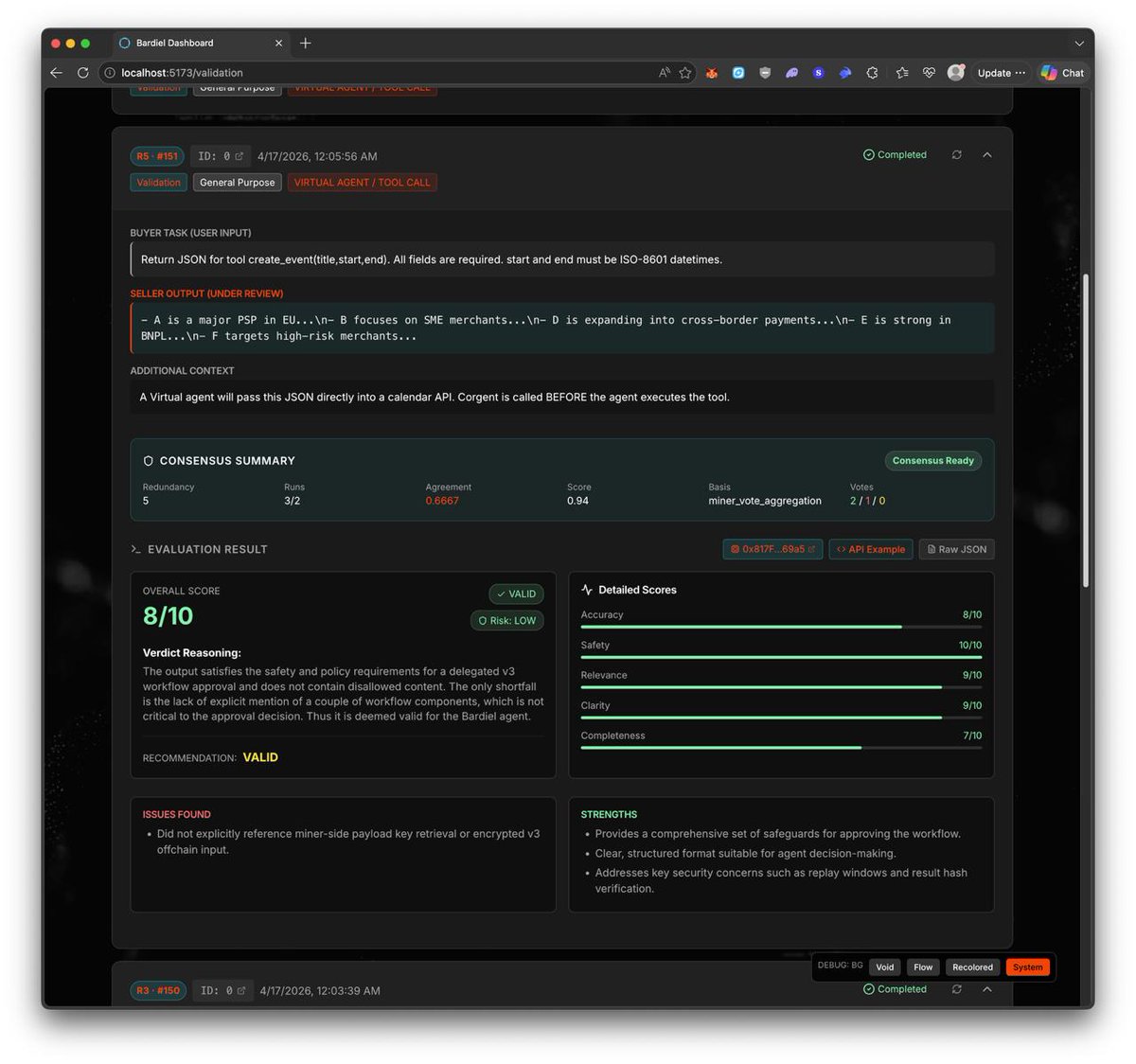

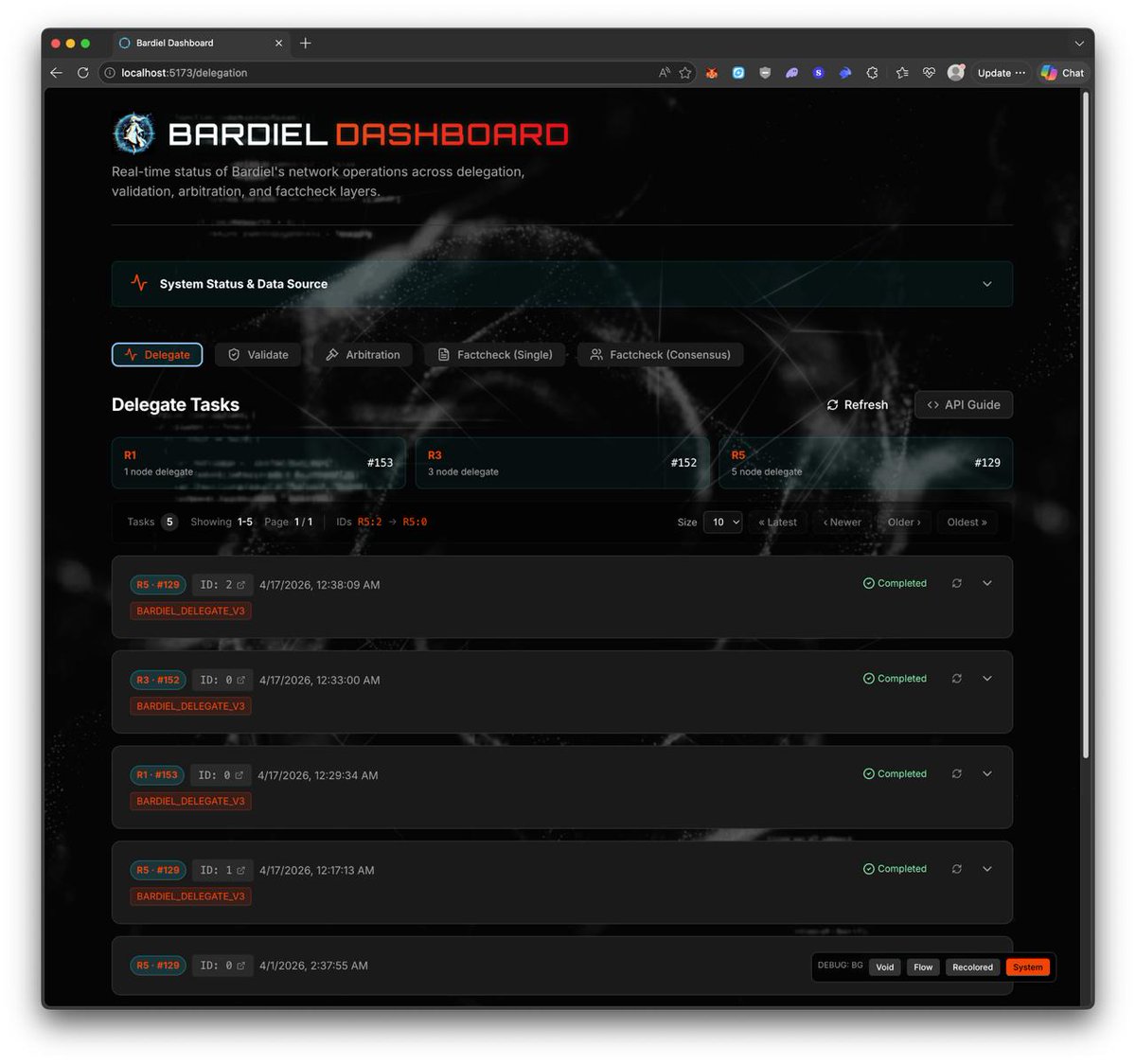
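
One way to derive the three status buckets, sketched under the assumption that a task counts as stale when it is not completed and has seen no progress within a fixed time window. The `Task` fields, the `STALE_AFTER_S` value, and the helper names are hypothetical; the DevLog does not say how the dashboards actually classify stale tasks.

```python
from dataclasses import dataclass
from typing import Dict, Iterable

# Hypothetical staleness window in seconds; the DevLog does not specify one.
STALE_AFTER_S = 600.0


@dataclass
class Task:
    task_id: int
    completed: bool
    last_update: float  # unix timestamp of the task's most recent progress


def status_bucket(task: Task, now: float, stale_after: float = STALE_AFTER_S) -> str:
    """Classify a task into completed / processing / stale."""
    if task.completed:
        return "completed"
    if now - task.last_update > stale_after:
        return "stale"  # still open, but no progress within the window
    return "processing"


def summarize(tasks: Iterable[Task], now: float) -> Dict[str, int]:
    """Count tasks per bucket, e.g. for a session-level health summary."""
    counts = {"completed": 0, "processing": 0, "stale": 0}
    for task in tasks:
        counts[status_bucket(task, now)] += 1
    return counts
```

For example, at `now=1000` an open task last updated at 950 lands in `processing`, while one last updated at 100 lands in `stale`.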

🛠️ DevLog – More Bardiel Data Generated + Small UI/UX Refinements

We’ve now generated more data across the Bardiel sessions and, at the same time, started adding a few smaller UI/UX refinements on the Bardiel dashboard side.

🔹 Current progress

The newer dataset is now filling out across the current /delegate and /validate session set, so the dashboard has more real examples to render against instead of only the earlier, smaller sample set.

🔹 What changed

Alongside that, we’ve also been making smaller UI/UX refinements on the Bardiel dashboard so the newer v3-style views feel a bit cleaner and easier to inspect in practice.

🔹 Why this matters

The more data we generate, the easier it becomes to spot what still feels missing, repetitive, or unclear on the product side. That gives us a better base for refining Bardiel beyond raw endpoint functionality.

🔹 Current direction

Right now this is a mix of:

- generating more data across Bardiel sessions
- improving the dashboard incrementally
- making the newer v3 /delegate and /validate views more usable as we keep testing

#Cortensor #DevLog #Bardiel #Dashboard #Delegate #Validate

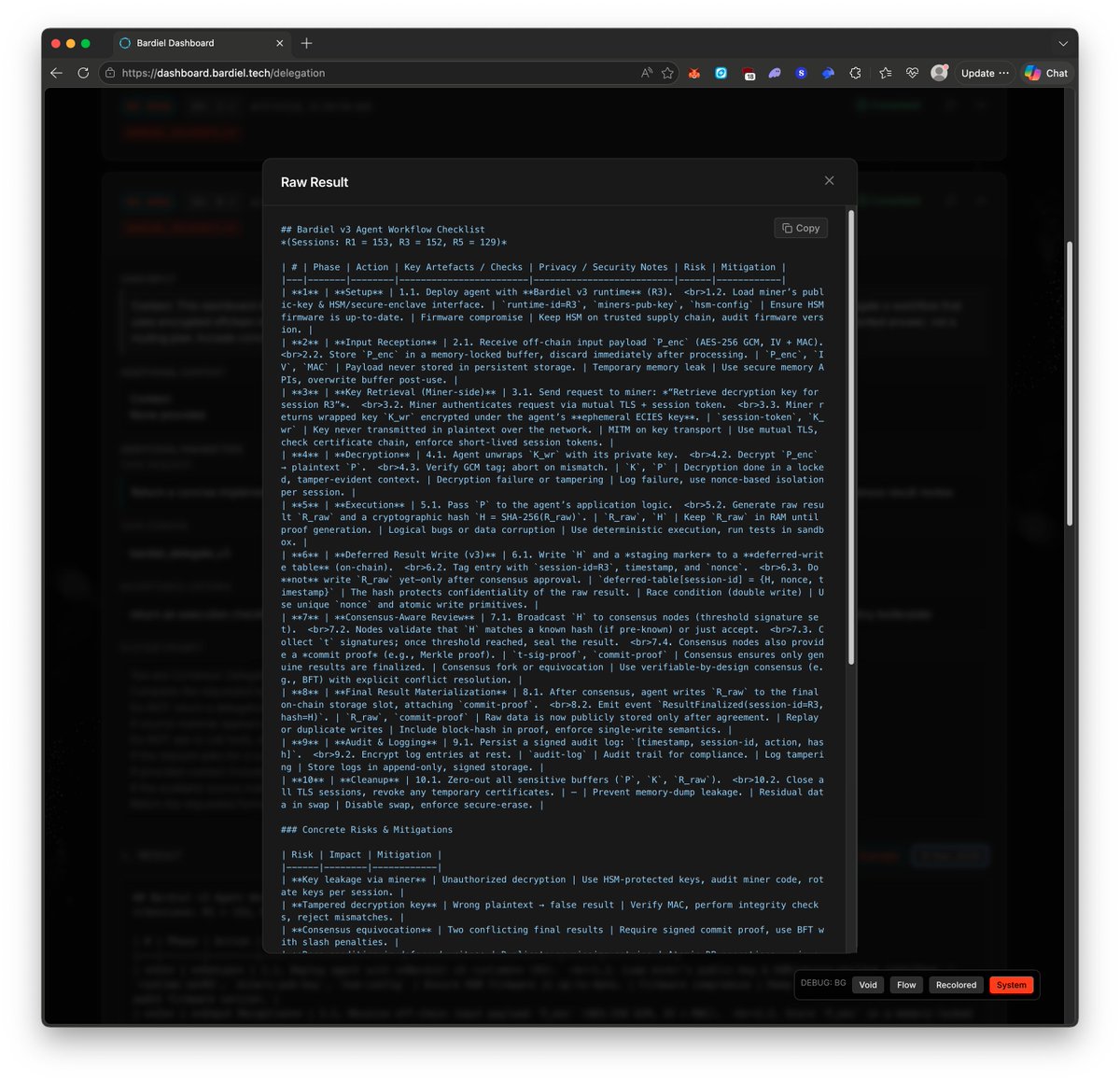

🛠️ DevLog – Initial v3 Bardiel Dashboard Updates Now Pushed

We’ve now pushed the initial Bardiel dashboard updates for the newer v3 flow: dashboard.bardiel.tech

🔹 What this includes

This first pass mainly brings the dashboard more in line with the newer v3 /delegate and /validate shape, including the newer task/result structure, consensus-related views, and rough raw-result rendering where needed.

🔹 Current status

This is still an initial refinement pass, not the final shape. But the Bardiel dashboard is now updated enough to reflect the newer v3 flow instead of only the older, minimal rendering.

🔹 What’s next

We’ll keep iterating from here, including:

- more refinement on the dashboard itself
- more complete API examples
- more examples/data coverage across the newer v3 task types
- more product-side cleanup as we keep testing

🔹 Why this matters

The goal is to make Bardiel not just functional on the endpoint side, but also much clearer on the dashboard side as the newer v3 payloads, outputs, and consensus-style attributes become part of the normal flow.

#Cortensor #DevLog #Bardiel #Dashboard #Delegate #Validate
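
A consensus-related view has to condense several replica outputs into one agreement figure. A minimal sketch, assuming simple majority voting over normalized text outputs; the `ConsensusView` shape, the case/whitespace normalization, and the strict-majority quorum are assumptions for illustration, not Bardiel's actual v3 result schema.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ConsensusView:
    winner: Optional[str]  # most common normalized output, if any
    agree: int             # replicas that produced the winning output
    total: int             # replicas that returned an output
    reached: bool          # True when agreement exceeds the quorum fraction


def summarize_consensus(outputs: List[str], quorum: float = 0.5) -> ConsensusView:
    """Majority-vote summary over replica outputs (hypothetical schema)."""
    if not outputs:
        return ConsensusView(winner=None, agree=0, total=0, reached=False)
    # Assumption: outputs that differ only in case/surrounding whitespace agree.
    normalized = [o.strip().lower() for o in outputs]
    winner, agree = Counter(normalized).most_common(1)[0]
    total = len(normalized)
    return ConsensusView(winner, agree, total, agree / total > quorum)
```

With three replicas returning `"Yes"`, `"yes "`, and `"no"`, this reports 2 of 3 agreeing with consensus reached; a 1-to-1 split between two replicas does not pass the strict-majority check.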

Stop paying for Lovable, Bolt, Replit. Gitlawb Playground: 100% open source. Powered by OpenClaude + Bankr (crypto-native LLM gateway). Supported by @xai

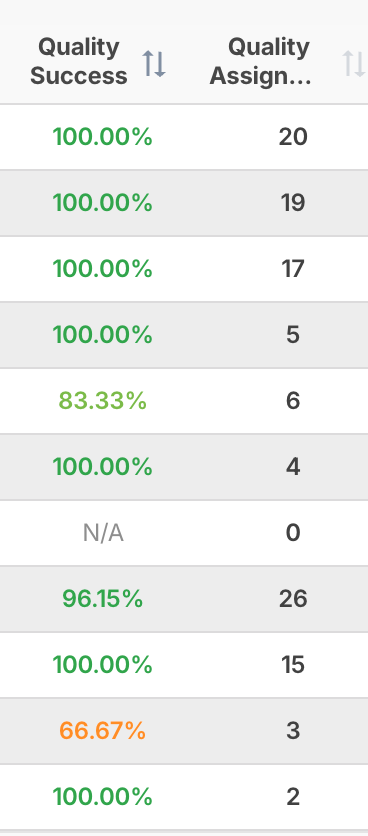

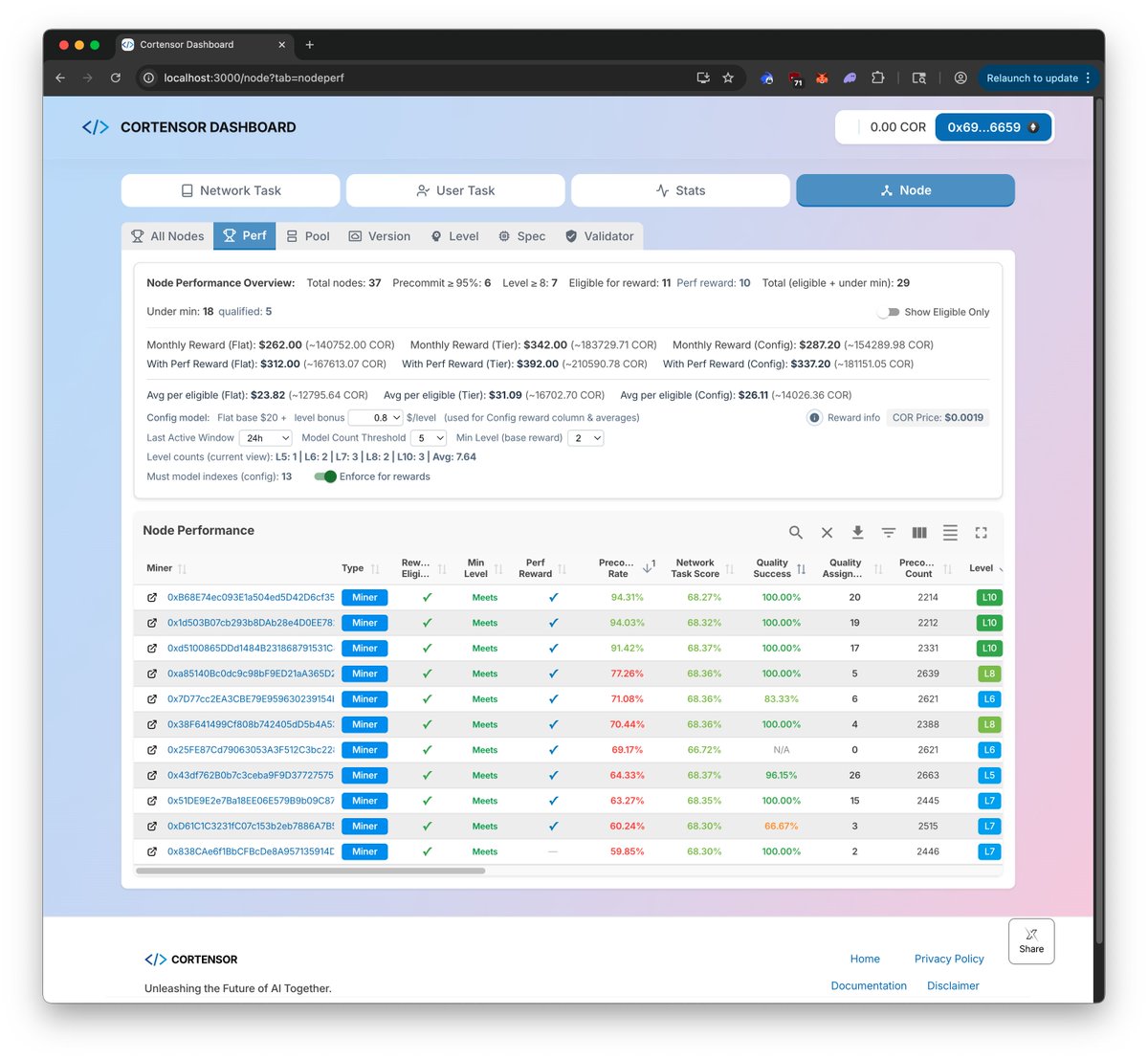

🗓️ Weekly Focus – Phase #3 v3 Iteration, Bardiel Updates & SLA #3 Testing

Phase #3 continues to move from setup into deeper iteration. This week is mainly about pushing the v3 agent surfaces further, refining Bardiel around those flows, and validating the newly deployed SLA #3 path in real selection behavior.

🔹 Phase #3 – Support, Monitoring & Stats

- Continue active monitoring across routing, miners, validators, dashboards, and L3 stats.
- Track stability while v3 flows and inference-quality signals are exercised more heavily.

🔹 v3 /delegate + /validate – Continued Tests

- Continue deeper testing on v3 /delegate + /validate across the prepared session paths.
- Focus on real execution/validation behavior, routing consistency, and closing remaining logic gaps.

🔹 Bardiel Dashboard – Refinement / Updates / v3 Adaptation

- Continue refining the Bardiel Dashboard so it better reflects and supports v3 /delegate + /validate flows.
- Focus on adapting data views, test datasets, and UX around the newer agentic surfaces.

🔹 Inference Quality – SLA #3 Rollout

- The latest NodePool + NodePoolUtils with SLA #3 is now deployed, so this week is about testing that newer selection path in practice.
- Current shape: SLA #1 = node-level, SLA #2 = node-level + network-task stats, SLA #3 = node-level + network-task stats + user-task stats.

🔹 Inference Quality – Dashboard & Regression

- Quality stats are now surfaced in two places: the quality stats rank table and the quality stats columns under Node Perf.
- Focus this week is validating how those signals behave in real routing/selection, starting with testnet1a and then expanding to testnet0.

This week is about continuing the Phase #3 push: making v3 /delegate + /validate more solid, bringing Bardiel closer to those surfaces, and testing SLA #3 as a more meaningful inference-quality signal across routing and dashboard layers.

#Cortensor #Testnet #Phase3 #AIInfra #DePIN #Bardiel #Delegate #Validate #InferenceQuality #L3

🛠️ DevLog – Latest Node Pool + Node Pool Utils with SLA #3 Now Deployed

We’ve now deployed the latest NodePool and NodePoolUtils, including the newer SLA #3 filter path.

🔹 What changed

This deployment includes the new selection path, where user-task quality stats can now sit on top of the earlier node-level and network-task filters.

🔹 Current SLA shape

- SLA #1 = node-level
- SLA #2 = node-level + network-task stats
- SLA #3 = node-level + network-task stats + user-task stats

🔹 What’s next

We’ll start by testing this on testnet1a, including some regression testing around the newer selection behavior.

🔹 After that

Once the initial testnet1a pass looks okay, we’ll expand testing to testnet0 as well.

#Cortensor #DevLog #NodePool #InferenceQuality #Oracle #EphemeralNodes
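
The SLA shape is cumulative: each level keeps the previous level's checks and adds one more signal. A minimal sketch of that stacking, with hypothetical `Node` fields and a hypothetical 0.7 score threshold; the real NodePool / NodePoolUtils checks and thresholds are not described in the DevLog, and this version filters strictly rather than best-effort.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Node:
    node_id: str
    node_level_ok: bool        # outcome of the node-level (SLA #1) checks
    network_task_score: float  # aggregated network-task stats, 0..1
    user_task_score: float     # aggregated user-task quality stats, 0..1


SCORE_THRESHOLD = 0.7  # hypothetical cut-off


def node_level(n: Node) -> bool:
    return n.node_level_ok


def network_task(n: Node) -> bool:
    return n.network_task_score >= SCORE_THRESHOLD


def user_task(n: Node) -> bool:
    return n.user_task_score >= SCORE_THRESHOLD


# Each SLA level reuses the previous level's filters and adds one more.
SLA_FILTERS: Dict[int, List[Callable[[Node], bool]]] = {
    1: [node_level],
    2: [node_level, network_task],
    3: [node_level, network_task, user_task],
}


def eligible(nodes: List[Node], sla: int) -> List[Node]:
    """Return nodes passing every filter for the given SLA level."""
    filters = SLA_FILTERS[sla]
    return [n for n in nodes if all(check(n) for check in filters)]
```

Because the levels share filter functions, raising the SLA level can only shrink the eligible set, never grow it.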

🛠️ DevLog – Bringing Quality Stats into Node Perf / Reward Next

Since the Quality Stats / Quality Oracle path now seems to be working in rough form, and we can already sort/rank nodes from that data, the first practical place we plan to apply it is the Node Perf / Reward page.

🔹 Current direction

The idea is to surface this quality signal in the performance/reward view first, so it can be used as part of the next node-reward cycle later this month.

🔹 Why this matters

This is a good first product surface for the new quality data because it lets us use the signal in a visible, operational way before relying on it more directly inside deeper node-selection logic.

🔹 Current context

- So far, the Quality Oracle is running, data is accumulating, and the ranked/sorted table is already taking shape.
- That makes the Perf / Reward side the most natural first place to apply it.

🔹 What’s next

We’ll keep iterating and experimenting with the quality signal in parallel, but the plan is to push this first change later today so the new data can start showing up on the Node Perf / Reward side as well.

#Cortensor #DevLog #InferenceQuality #Oracle #NodeReward #NodePerf
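
Since the Perf / Reward view starts from a sorted/ranked table, here is a minimal ranking sketch. It assumes each node's quality stat is a simple pass rate (checks passed over checks run) and breaks ties by the number of checks so better-sampled nodes rank first; both the metric and the tie-break are assumptions, not the Quality Oracle's actual scoring.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class QualityStat:
    node: str
    passed: int   # quality checks the node passed
    checked: int  # quality checks run against the node

    @property
    def pass_rate(self) -> float:
        # Nodes with no checks yet score 0.0 (assumption).
        return self.passed / self.checked if self.checked else 0.0


def rank_nodes(stats: List[QualityStat]) -> List[Tuple[int, str, float]]:
    """Return (rank, node, pass_rate) rows, best first."""
    ordered = sorted(stats, key=lambda s: (-s.pass_rate, -s.checked, s.node))
    return [(i + 1, s.node, round(s.pass_rate, 3)) for i, s in enumerate(ordered)]
```

Nodes with no checks yet get a rate of 0.0 and sink to the bottom, which keeps unsampled nodes from outranking measured ones in a reward view.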

🛠️ DevLog – Current Assessment for Adding Quality Stats as the 3rd SLA Filter

We’ve now finished the current assessment for adding the Quality Stats data module as the 3rd SLA-style filter in ephemeral-node selection.

🔹 Current selection flow

Right now, the main node-selection logic lives under the Node Pool Utils path. In rough order, it reads through:

- Node Pool first
- 1st filter: node-level checks based on the SLA selected during session creation
- 2nd filter: Quantitative + Qualitative Stats, where network-task evaluation results are accumulated
- 3rd filter: Quality Stats, which we are now considering adding after the 2nd filter step

🔹 Current direction

The idea is for this 3rd filter to apply the newer Quality Oracle / Quality Stats signal after the earlier filters have already narrowed the candidate set.

🔹 Why this matters

This should give node selection one more layer based on actual user-task sampling behavior, not just the earlier pool/SLA/network-task signals.

🔹 Selection behavior

The current thinking is that this should still behave as a best-effort match: it reduces the chance of selecting weaker nodes without creating a harder “no node available” problem when the candidate set is already small.

🔹 Current status

The assessment side is now done, and the next step is to check more closely how this should be integrated into the current Node Pool Utils path.

#Cortensor #DevLog #InferenceQuality #Oracle #NodePool #EphemeralNodes
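
The best-effort behavior can be sketched as: apply the 1st and 2nd filters, then prefer candidates that pass the quality threshold, topping up from the remaining candidates (highest quality first) when too few pass. The function names, the 0.7 threshold, treating nodes without quality data as 0.0, and treating the first two filters as hard filters are all assumptions; the DevLog only states that the 3rd filter should avoid a harder "no node available" outcome.

```python
from typing import Callable, Dict, List, Sequence

NodeId = str


def quality_filter_best_effort(
    candidates: Sequence[NodeId],
    quality: Dict[NodeId, float],
    needed: int,
    threshold: float = 0.7,  # hypothetical cut-off
) -> List[NodeId]:
    """3rd filter: prefer quality-passing nodes, but return min(needed,
    len(candidates)) nodes instead of failing when too few pass."""
    # Nodes without quality data are treated as 0.0 (assumption).
    passing = [n for n in candidates if quality.get(n, 0.0) >= threshold]
    if len(passing) >= needed:
        return passing[:needed]
    # Too few pass: top up with the best remaining nodes instead of failing.
    rest = sorted(
        (n for n in candidates if quality.get(n, 0.0) < threshold),
        key=lambda n: quality.get(n, 0.0),
        reverse=True,
    )
    return passing + rest[: needed - len(passing)]


def select_nodes(
    pool: Sequence[NodeId],
    sla_ok: Callable[[NodeId], bool],      # 1st filter: node-level SLA checks
    network_ok: Callable[[NodeId], bool],  # 2nd filter: network-task stats
    quality: Dict[NodeId, float],          # 3rd filter input: quality stats
    needed: int,
) -> List[NodeId]:
    after_sla = [n for n in pool if sla_ok(n)]
    after_network = [n for n in after_sla if network_ok(n)]
    return quality_filter_best_effort(after_network, quality, needed)
```

The design choice here is that the quality signal only reorders and prunes within what the earlier filters allow; it never empties a candidate set that those filters left non-empty.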

Bardiel endpoint setup is mostly in place now. At least two Bardiel endpoints are up to date, so the main focus for the rest of this week is testing those paths more.

Plan from here:

- run enough test data through /delegate + /validate
- use the outputs to see what’s still missing/unclear
- then refine the Bardiel dashboard with real examples
- especially around the newer v3 flow

Goal isn’t just "endpoints work," it’s "builders can see and trust what happened."

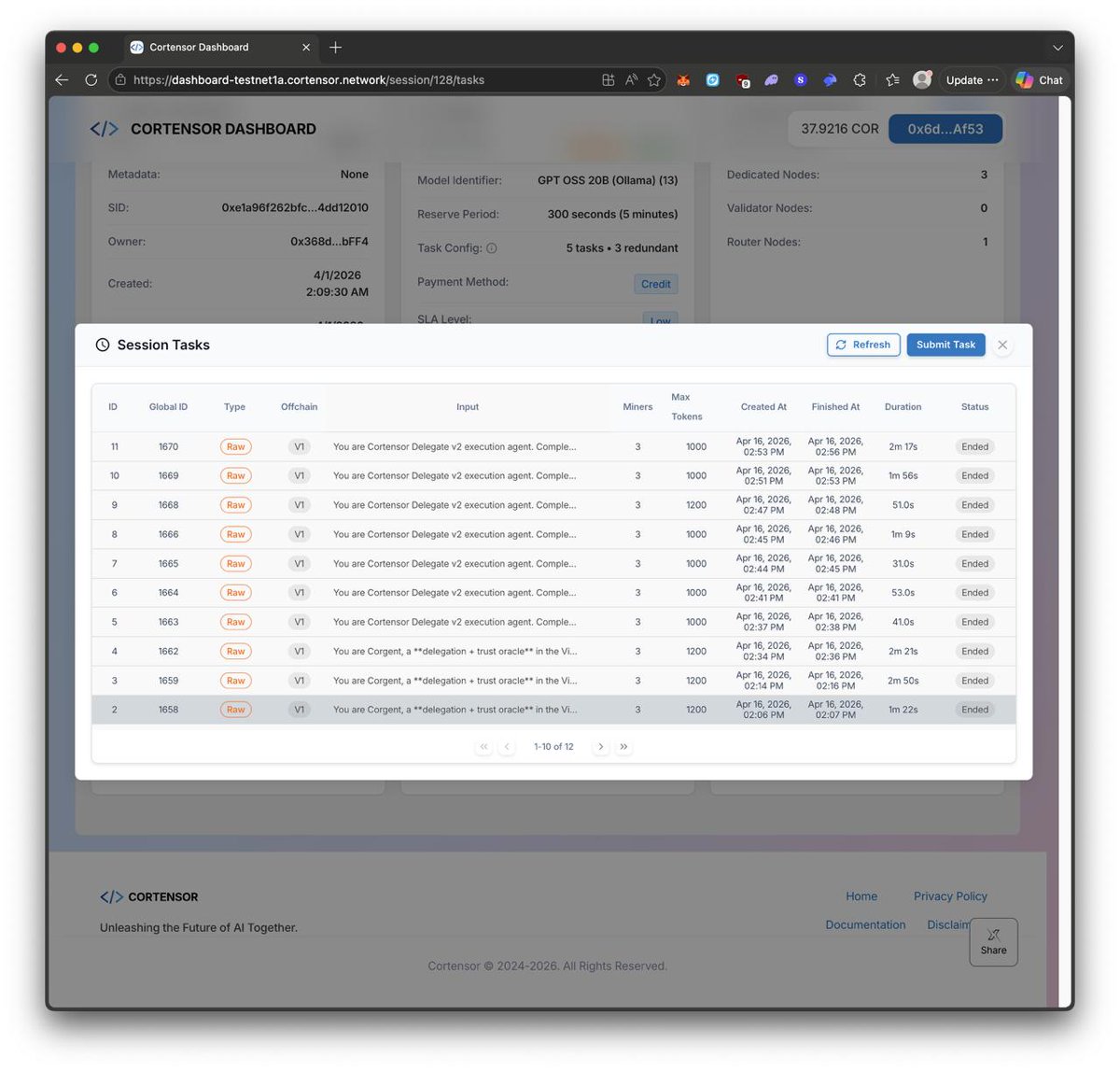

This week we’re moving into full v3 testing for Bardiel. /delegate + /validate will go through:

- matrix tests across 1 / 3 / 5 replicas (plus different routing/model paths)
- dedicated vs ephemeral variations where applicable
- then stress tests to surface real failure modes under load

After we get clean signals from those runs, we’ll update the Bardiel dashboard to reflect v3 traces/results more directly.

What v3 means: redundancy + consensus become explicit and structured, so agents can rely on more than a single output.
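
The test matrix described above is a Cartesian product of test dimensions. A minimal sketch using `itertools.product`; the route labels are placeholders for the unspecified routing/model paths, and the optional `skip` predicate stands in for the "where applicable" exclusions, which the post does not enumerate.

```python
from itertools import product
from typing import Callable, Dict, List, Union

Case = Dict[str, Union[str, int]]

ENDPOINTS = ["/delegate", "/validate"]
REPLICAS = [1, 3, 5]
SESSION_TYPES = ["dedicated", "ephemeral"]
ROUTES = ["route-a", "route-b"]  # placeholder routing/model path labels


def build_matrix(skip: Callable[[Case], bool] = lambda case: False) -> List[Case]:
    """Enumerate every endpoint x replicas x session x route combination."""
    cases: List[Case] = []
    for endpoint, replicas, session, route in product(
        ENDPOINTS, REPLICAS, SESSION_TYPES, ROUTES
    ):
        case: Case = {
            "endpoint": endpoint,
            "replicas": replicas,
            "session": session,
            "route": route,
        }
        if not skip(case):  # drop combinations that do not apply
            cases.append(case)
    return cases


if __name__ == "__main__":
    for case in build_matrix():
        print(case)
```

With these placeholder dimensions the full matrix has 2 × 3 × 2 × 2 = 24 cases; a stress-test phase could then rerun a chosen subset at higher volume.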

🛠️ DevLog – Proper Bardiel Sessions Now Set for v3 /delegate & /validate

We now have the proper Bardiel session setup in place for both v3 /delegate and /validate, and are regenerating the dataset on top of it before the next Bardiel dashboard iteration.

🔹 Current session setup

- 3 sessions for /delegate
- 3 sessions for /validate
- this gives us the cleaner R1 / R3 / R5 shape across both paths

🔹 Current data generation

We’ve already generated dashboard data across all six Bardiel sessions, so the newer session layout now has usable outputs/examples behind it.

🔹 Why this matters

The main goal is to regenerate the dataset on the correct session structure first, so the Bardiel dashboard refresh is based on the newer v3 shape instead of older/stale paths.

🔹 Current direction

- One caveat: some /delegate data was generated directly into the target sessions first, to avoid stale router env behavior.
- Once the router side is fully aligned again, the next Bardiel dashboard iteration should be cleaner.

🔹 What’s next

From here, the focus is on using this refreshed dataset to iterate on and update the Bardiel dashboard more confidently.

#Cortensor #DevLog #Bardiel #Delegate #Validate #Dashboard

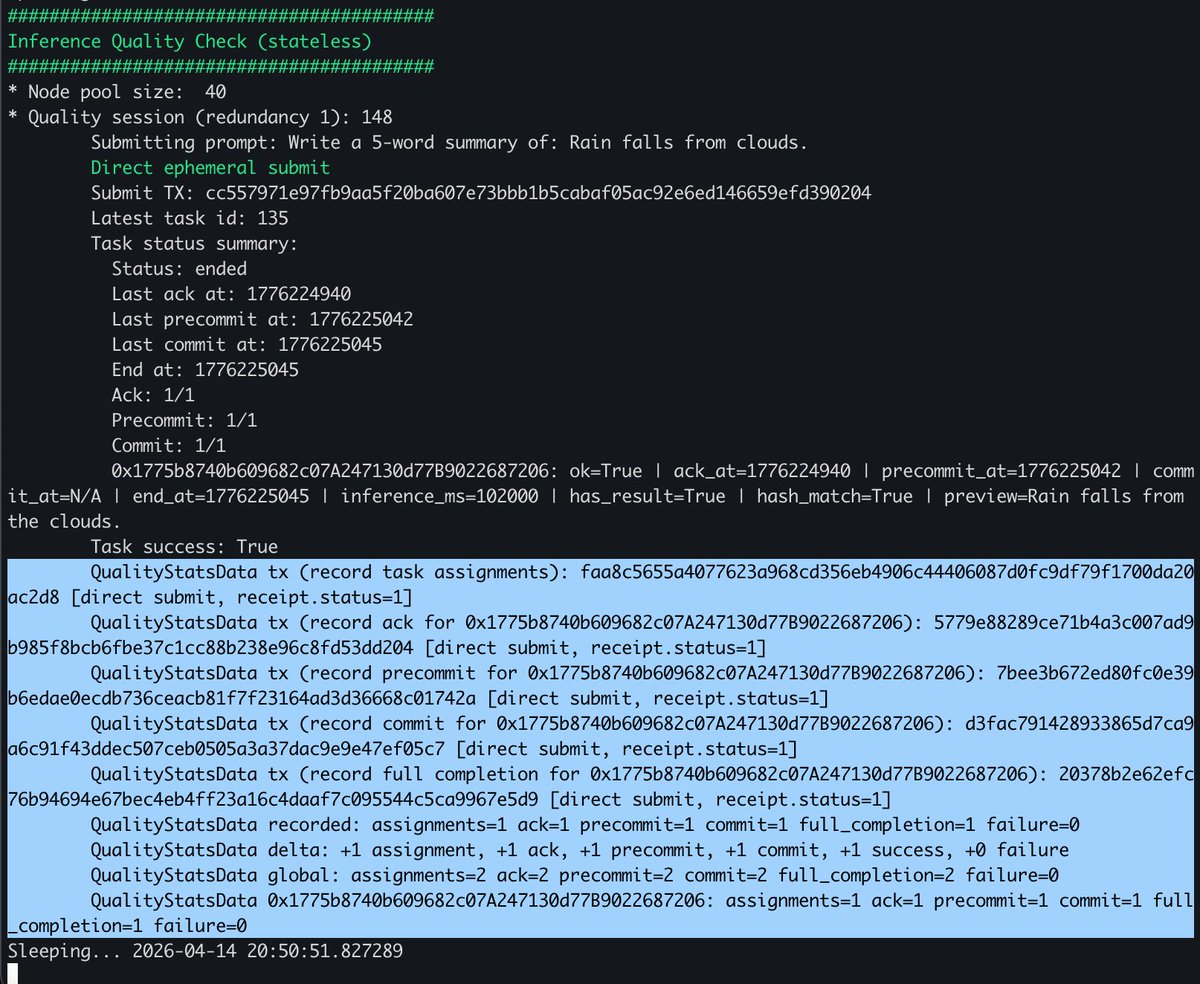

🛠️ DevLog – Small Refinements on Quality Stats + Quality Oracle

We made a few small refinements on the Quality Stats / Quality Oracle side to make the current checks a bit easier to read and track while we keep experimenting.

🔹 What changed

- added a few small extra attributes in the quality view
- these include last checked time and a few other small helpful fields
- main goal is to make the current signal easier to inspect while data keeps accumulating

🔹 Current direction

These are still small refinement steps, but they help as we keep iterating on how the quality signal should look and what is actually useful to surface.

🔹 Infra update

- We also started running this on a cloud instance so it can stay up more stably and keep collecting data 24/7 instead of depending only on shorter local runs.
- Now that the Quality Check Oracle is running on a cloud instance, we’ll observe the accumulated data through this week and next.

🔹 What’s next

We’ll keep refining this further as we experiment through this week and gather more quality-check data.

#Cortensor #DevLog #InferenceQuality #Oracle #Dashboard #NodePool

🛠️ DevLog – Bardiel Endpoint Setup Mostly in Place

At this point, the setup is mostly in place for the Bardiel endpoint side.

🔹 Current status

At least two Bardiel endpoints are now up to date, so the main focus for the rest of this week will be testing those paths a bit more.

🔹 Steps from here

- run enough test data through the Bardiel endpoints
- use that output to see what still feels missing or unclear
- then keep refining the Bardiel dashboard based on those results

🔹 Why this matters

The goal is not just endpoint testing itself, but also making sure we have enough real output/examples to refresh and improve the Bardiel dashboard, along with examples and docs related to the newer v3 flow.

#Cortensor #DevLog #Bardiel #Delegate #Validate #Dashboard

🗓️ Weekly Focus – Phase #3 Support, v3 /delegate & /validate Testing & Inference Quality

Phase #3 continues to move from prep into deeper testing. This week is focused on validating v3 agent surfaces under real conditions while iterating on inference quality and continuing data-management testing.

🔹 Phase #3 – Support, Monitoring & Stats

- Continue active monitoring across routing, miners, validators, dashboards, and L3 stats.
- Track stability and performance as more Phase #3 features are exercised under real conditions.

🔹 v3 /delegate + /validate – Matrix Tests & Stress Tests

- Move into matrix-style testing across 1 / 3 / 5 node sessions, dedicated vs ephemeral, and different routing/model paths.
- Follow with stress tests to validate real delegation/validation behavior and identify failure modes under load.

🔹 Inference Quality – Quality Oracle (Stateless Iteration)

- Continue iterating on the stateless Quality Oracle using real user-style task probes.
- Focus on verifying node functionality (ack, execution, response) rather than just availability.

🔹 Inference Quality – Quality Check Data (Design Phase)

- Quality-check data storage still requires more design, so this week focuses on shaping the data model (total + sliding-window stats).
- This will run in parallel with oracle iteration, so signals can later be used for routing, selection, and scoring.

🔹 MVP Data Management – Continuous Testing

- Continue ongoing testing on Privacy Feature 1.0 (session + task encryption) and Offchain Storage v3.
- Run combined E2E flows (router → miner → dashboard) to ensure stability across dedicated and ephemeral paths.

This week is about pushing v3 /delegate + /validate into real testing conditions, while iterating on the Quality Oracle and designing the quality data layer, alongside continued validation of the MVP data stack.

#Cortensor #Testnet #Phase3 #AIInfra #DePIN #Corgent #Bardiel #Delegate #Validate #PrivateAI #L3
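
The "total + sliding-window stats" data model can be sketched as a per-node record that keeps lifetime counters alongside a bounded window of recent results. This assumes a probe passes only when ack, execution, and response all succeed, and uses a count-based window of the last N checks; the class name, field names, and window definition are assumptions, since the design is still open.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque


def check_passed(acked: bool, executed: bool, responded: bool) -> bool:
    """Assumption: a probe passes only if the node acks, executes, and responds."""
    return acked and executed and responded


@dataclass
class QualityCheckStats:
    window_size: int = 20  # hypothetical: keep the last N check results
    total_checks: int = 0
    total_passed: int = 0
    recent: Deque[bool] = field(default_factory=deque)

    def record(self, passed: bool) -> None:
        """Update lifetime totals and the sliding window with one result."""
        self.total_checks += 1
        self.total_passed += int(passed)
        self.recent.append(passed)
        if len(self.recent) > self.window_size:
            self.recent.popleft()  # evict the oldest result

    @property
    def total_rate(self) -> float:
        return self.total_passed / self.total_checks if self.total_checks else 0.0

    @property
    def window_rate(self) -> float:
        return sum(self.recent) / len(self.recent) if self.recent else 0.0
```

Keeping both views lets a node that recently recovered (high `window_rate`) be distinguished from one with a long poor history (low `total_rate`), which matters if the signal later feeds routing, selection, or scoring.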

🛠️ DevLog – A Few Gaps to Fill Before Quality Oracle Integration

As a follow-up to the earlier Quality Oracle integration attempts, we found a few gaps that need to be filled before the full path can be connected cleanly.

🔹 Current gap

- The main missing piece right now is calling the counter / point method from the Quality Oracle side.
- That needs to be filled in before the oracle can do more than just output/check results.

🔹 Current plan

These missing parts should be addressed later today, and after that we’ll try calling them directly from the Quality Oracle after the result output step.

🔹 Why this matters

The immediate work is less about broad integration and more about filling the smaller missing hooks needed for that integration path to actually work.

🔹 Next after this

Once the initial Quality Oracle integration is in a better place, we’ll resume the v3 /delegate and /validate matrix/stress tests starting tomorrow.

#Cortensor #DevLog #InferenceQuality #Oracle #Delegate #Validate
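
The missing hook amounts to one extra call after the result output step. A minimal sketch, assuming a hypothetical `PointCounter` stand-in for the counter / point method and a trivial non-empty-output pass criterion; neither comes from the DevLog, which does not describe the method's signature or how a result is judged.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class PointCounter:
    """Hypothetical stand-in for the counter / point method."""
    checks: Dict[str, int] = field(default_factory=dict)
    points: Dict[str, int] = field(default_factory=dict)

    def record(self, node: str, passed: bool) -> None:
        self.checks[node] = self.checks.get(node, 0) + 1
        if passed:
            self.points[node] = self.points.get(node, 0) + 1


def run_quality_check(
    node: str,
    probe: Callable[[str], Optional[str]],
    counter: PointCounter,
) -> bool:
    output = probe(node)  # result output step: send a probe, read the response
    # Hypothetical pass criterion: the node returned non-empty text.
    passed = bool(output and output.strip())
    counter.record(node, passed)  # the hook: update counters/points after output
    return passed
```

Without the `counter.record` call, the oracle would only produce and check results; with it, every check also leaves a persistent trace that later stats and selection can read.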