Roger Doger

1.1K posts

@DiocletianCode

American - Posts include tech, politics, finance, video games (mostly cs2) and other nonsense.

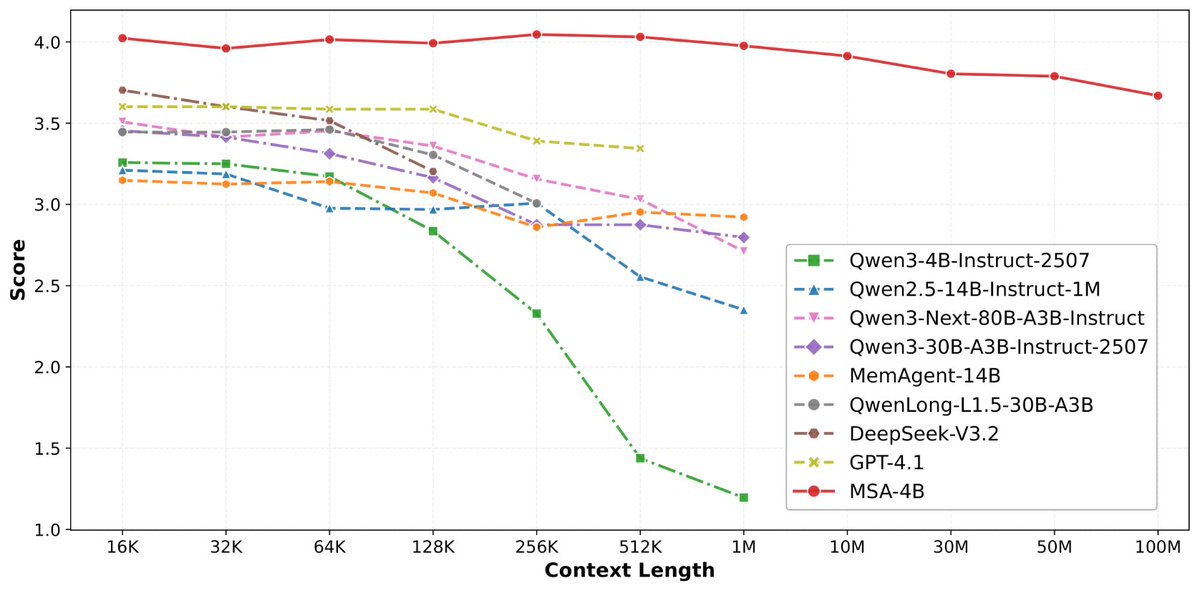

nvidia's 3B mamba destroyed alibaba's 3B deltanet on the same RTX 3090. only 24 days between releases. same active parameters, same VRAM tier, completely different architectures.
nemotron cascade 2: 187 tok/s. flat from 4K to 625K context. zero speed loss. flags: -ngl 99 -np 1. that's it. no context flags, no KV cache tricks. auto-allocates 625K.
qwen 3.5 35B-A3B: 112 tok/s. flat from 4K to 262K context. zero speed loss. flags: -ngl 99 -np 1 -c 262144 --cache-type-k q8_0 --cache-type-v q8_0. needed KV cache quantization to fit 262K.
both models held a flat line across every context level, so generation speed on both architectures was independent of context length in this test. but nvidia's mamba2 is 67% faster at generating tokens on the exact same hardware and needs fewer flags to get there. same node, same GPU, same everything. the only variable is the model.
gold medal math olympiad winner running at 187 tokens per second on a single RTX 3090, a card from 6 years ago. nvidia cooked.
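
A quick way to sanity-check the "67% faster" figure is to recompute it from the two throughput numbers quoted in the post. The sketch below is only an illustration: the function name `relative_speedup_pct` and the 1,000-token reply length are assumptions made for this example, not something from the original benchmark.

```python
# Throughput figures quoted in the post (tokens per second).
nemotron_tok_s = 187.0
qwen_tok_s = 112.0


def relative_speedup_pct(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, as a percentage."""
    return (fast / slow - 1.0) * 100.0


speedup_pct = relative_speedup_pct(nemotron_tok_s, qwen_tok_s)
print(f"speedup: {speedup_pct:.0f}%")  # prints "speedup: 67%"

# Hypothetical illustration: wall-clock time to generate a 1,000-token reply
# at each rate. The reply length is an assumption, not a benchmark setting.
reply_tokens = 1000
nemotron_seconds = reply_tokens / nemotron_tok_s
qwen_seconds = reply_tokens / qwen_tok_s
print(f"nemotron: {nemotron_seconds:.1f}s, qwen: {qwen_seconds:.1f}s")
```

Because both models reportedly hold the same speed at every context length, a single ratio like this should describe the gap across the whole tested range.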

A small spoiler: @EverMind will publish another high-quality paper this week.

Putting out a wish to the universe: I need more compute. If I can get more, I will make sure every machine, from a small phone to a bootstrapped RTX 3090 node, can run frontier intelligence fast with minimal intelligence loss. I have hit page 2 of Hugging Face, released 3 model family compressions, and got GLM-4.7 running on a MacBook: huggingface.co/0xsero. My beast just isn't enough, and I have already spent 2k USD renting GPUs on top of credits provided by Prime Intellect and Hot Aisle. If you believe in what I do, help me get this to Nvidia. Maybe they will bless me with the power to keep making local AI more accessible 🙏

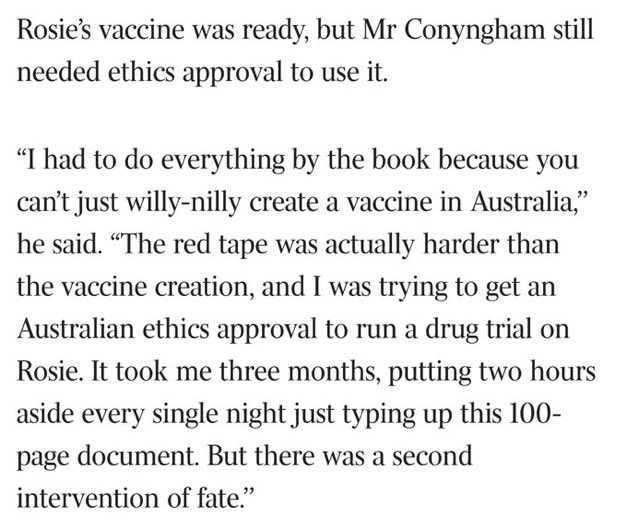

This is wild. theaustralian.com.au/business/techn…

BREAKING: The Senate has passed the biggest housing affordability bill in 30 years, and it includes a ban on investors buying single-family homes. The bill passed 89-10.

We partnered with Mozilla to test Claude's ability to find security vulnerabilities in Firefox. Opus 4.6 found 22 vulnerabilities in just two weeks. Of these, 14 were high-severity, representing a fifth of all high-severity bugs Mozilla remediated in 2025.