Pinned Tweet

THE AI REGULATOR

1.4K posts

@EUAIACTGUY

AI security, AI governance, EU AI Act. Practical checklists and controls that ship.

Joined January 2026

191 Following, 68 Followers

@paulabartabajo_ Then you can diff changes, require approvals, and roll back when outputs drift.

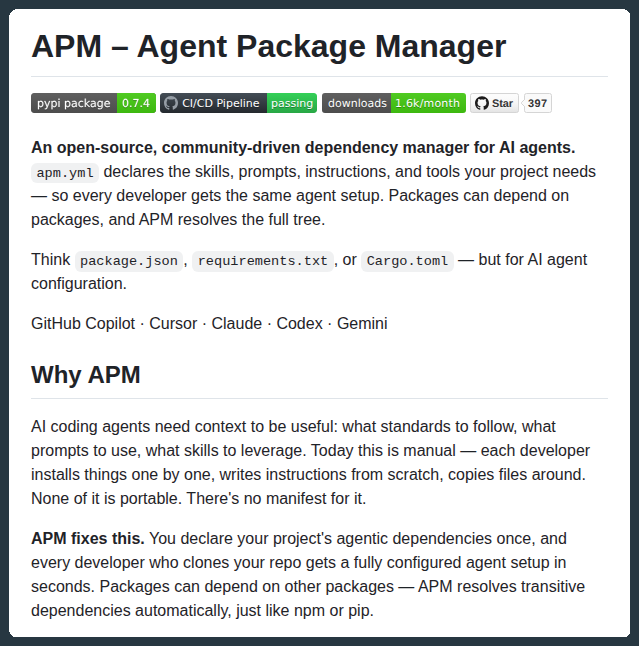

Stop versioning code.

Start versioning intent.

When AI agents generate thousands of lines at once, git diffs become unreadable and commits meaningless.

The real source of truth is what you wanted, not what was produced.

Design your AI workflows so the intent (the prompt, spec, or goal) is the artifact you track and refine.
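One way to picture "versioning intent" is a small history of prompt or spec revisions that can be diffed, approved, and rolled back. The sketch below is only an illustration under assumptions: `IntentStore`, its methods, and the `approved_by` field are hypothetical names, not part of any tool mentioned in this thread.

```python
import difflib
import hashlib
from dataclasses import dataclass, field


@dataclass
class IntentStore:
    """Hypothetical in-memory history of intent revisions (prompt, spec, or goal)."""

    versions: list = field(default_factory=list)

    def commit(self, intent: str, approved_by: str) -> str:
        # Content-address the intent so identical text always yields the same id.
        version_id = hashlib.sha256(intent.encode("utf-8")).hexdigest()[:12]
        self.versions.append(
            {"id": version_id, "intent": intent, "approved_by": approved_by}
        )
        return version_id

    def current(self) -> dict:
        # Latest committed revision.
        return self.versions[-1]

    def diff_last(self) -> list:
        # Line-level diff between the previous and the current intent.
        if len(self.versions) < 2:
            return []
        old = self.versions[-2]["intent"]
        new = self.versions[-1]["intent"]
        return list(
            difflib.unified_diff(old.splitlines(), new.splitlines(), lineterm="")
        )

    def rollback(self) -> dict:
        # Discard the latest revision and return the one that is now current.
        if len(self.versions) < 2:
            raise ValueError("no earlier revision to roll back to")
        self.versions.pop()
        return self.versions[-1]
```

A short prompt diff stays readable even when the code generated from it changes by thousands of lines, which is the point of tracking the intent rather than the output.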

@Prompt_Driven @karpathy Otherwise you get silent drift: same code, different behavior.

@karpathy Git breaks for agents because we're versioning the wrong artifact. Code is just the ephemeral exhaust of an AI's search. The real accumulation isn't thousands of code commits, it's the prompts and tests (the constraints) that bound the search space.

The next step for autoresearch is that it has to be asynchronously massively collaborative for agents (think: SETI@home style). The goal is not to emulate a single PhD student, it's to emulate a research community of them.

Current code synchronously grows a single thread of commits in a particular research direction. But the original repo is more of a seed, from which could sprout commits contributed by agents on all kinds of different research directions or for different compute platforms. Git(Hub) is *almost* but not really suited for this. It has a softly built in assumption of one "master" branch, which temporarily forks off into PRs just to merge back a bit later.

I tried to prototype something super lightweight that could have a flavor of this, e.g. just a Discussion, written by my agent as a summary of its overnight run:

github.com/karpathy/autor…

Alternatively, a PR has the benefit of exact commits:

github.com/karpathy/autor…

but you'd never want to actually merge it... You'd just want to "adopt" and accumulate branches of commits. But even in this lightweight way, you could ask your agent to first read the Discussions/PRs using GitHub CLI for inspiration, and after its research is done, contribute a little "paper" of findings back.

I'm not actually exactly sure what this should look like, but it's a big idea that is more general than just the autoresearch repo specifically. Agents can in principle easily juggle and collaborate on thousands of commits across arbitrary branch structures. Existing abstractions will accumulate stress as intelligence, attention and tenacity cease to be bottlenecks.

@codymclain @tom_doerr Most “agent failures” are untracked prompt/tool/model/data changes.
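A rough way to make those changes trackable is to fingerprint everything that feeds a run and compare fingerprints between runs. This is a minimal sketch, not an established tool: `run_fingerprint`, `changed_fields`, and the config keys and values are all assumed names chosen for illustration.

```python
import hashlib
import json


def run_fingerprint(config: dict) -> str:
    # Serialize with sorted keys so the hash does not depend on dict ordering.
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(before: dict, after: dict) -> list:
    # Names of the inputs whose values differ between two runs.
    keys = before.keys() | after.keys()
    return sorted(k for k in keys if before.get(k) != after.get(k))


baseline = {
    "prompt": "Classify the ticket as bug or feature.",
    "tools": ["search_docs"],
    "model": "model-v1",        # hypothetical model identifier
    "data_version": "2026-01",  # hypothetical dataset tag
}
# Same prompt, tools, and data, but a different model behind them.
candidate = dict(baseline, model="model-v2")

if run_fingerprint(baseline) != run_fingerprint(candidate):
    print("inputs changed:", changed_fields(baseline, candidate))
```

Storing the fingerprint next to each run's output turns "the agent started failing" into "the model field changed between these two runs".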

@tom_doerr this is actually huge for agent development. versioning prompt chains has been such a pain, especially when you have agents calling other agents with different skill dependencies

68% of companies risk major security flaws by 2025. Evolving threats demand agile, proactive cybersecurity strategies—not just compliance. Update, train, and deploy AI security now! 🛡️ Is your business ready? Follow @falitroke for insights. #Cybersecurity2025 #AIInSecurity #FutureReady linkedin.com/feed/update/ur…

@VictorAkinode “Ethically reviewed” doesn’t tell you whether the agent will exfiltrate data at 2am.

As AI adoption keeps rising, one pattern shows up in organizations more often than they would care to admit.

Think of it this way. An AI tool is:

Approved by legal

Compliant on paper

Ethically reviewed

But even after all this, it still introduces massive security exposure.

So, if it met all the requirements above, how or why does this happen?

There are a number of reasons, but here are a few off the top of my head:

Compliance is not security

Ethics is not threat modeling

And legal approval is not technical validation

But the biggest reason is that most AI risk today lives in the gaps between teams.

GRC looks at frameworks.

Security looks at attack paths.

Leadership assumes alignment.

That assumption is expensive.

If your AI governance doesn’t answer the following questions:

1. What data goes in?

2. What data comes out?

3. Who can access it?

4. How is misuse detected?

Then I hate to break it to you, but that's not governance.

It’s documentation.

Effective AI governance must be operational, not theoretical.

Otherwise, compliance becomes a checkbox

and attackers don’t care about checkboxes, nor will checkboxes stop them.
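The four questions above can be turned into something checkable instead of something filed away. The sketch below is only one possible shape: `AIUseRecord`, its fields, and `unanswered` are hypothetical names, and the example values are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AIUseRecord:
    """Hypothetical governance record for one AI tool."""

    tool: str
    data_in: list = field(default_factory=list)        # 1. What data goes in?
    data_out: list = field(default_factory=list)       # 2. What data comes out?
    allowed_roles: list = field(default_factory=list)  # 3. Who can access it?
    misuse_checks: list = field(default_factory=list)  # 4. How is misuse detected?


QUESTIONS = {
    "data_in": "What data goes in?",
    "data_out": "What data comes out?",
    "allowed_roles": "Who can access it?",
    "misuse_checks": "How is misuse detected?",
}


def unanswered(record: AIUseRecord) -> list:
    # A question counts as unanswered while its field is still empty.
    return [q for name, q in QUESTIONS.items() if not getattr(record, name)]


record = AIUseRecord(
    tool="support-summarizer",         # illustrative tool name
    data_in=["customer tickets"],
    data_out=["ticket summaries"],
    allowed_roles=["support-agent"],
)
print(unanswered(record))
```

A deployment gate could refuse to ship any tool whose record still returns a non-empty list, which moves the questions from documentation into the release process.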

@lucky_inuwa @VictorAkinode Compliance tells you what to document. Security tells you what will break.

@VictorAkinode Compliance is not security.

Ethics is not threat modeling.

And assumptions between teams are the most expensive vulnerability in AI today.

@elonmusk This is exactly why Ethical AI needs strong product discipline: safe defaults, robust guardrails, continuous monitoring, and fast incident response for adversarial edge cases. Trust is built into the system, not just intent.

#ResponsibleAI #ProductOps

I am not aware of any naked underage images generated by Grok. Literally zero.

Obviously, Grok does not spontaneously generate images, it does so only according to user requests.

When asked to generate images, it will refuse to produce anything illegal, as the operating principle for Grok is to obey the laws of any given country or state.

There may be times when adversarial hacking of Grok prompts does something unexpected. If that happens, we fix the bug immediately.

Queen Bee@KingBobIIV

I haven't seen one single indecent image other than that bloody gangbang woman, and that was only because I went there to see if her baptism was real (spoiler: it wasnt). How are all these Labour MPs seeing so much child porn on X? Why are their algorithms sending it to them? Or are they looking for it? Thats the bigger question.

@pavel_builder @HIMSS If alerts can’t be investigated in <5 min, they’re theater.

@HIMSS Responsible AI frameworks in healthcare need to go beyond ethics checklists. Accountability, traceability, and real-time monitoring are the harder problems.

Next week at #HIMSS26, healthcare leaders from around the world will gather to explore what comes next for AI and digital health transformation.

On Wednesday, March 11, Hal Wolf, President and CEO of HIMSS, will discuss how health systems can responsibly evaluate and deploy AI to drive measurable impact, from establishing clear criteria for selecting AI applications to implementing processes that reinforce values and minimize bias amid the rapid expansion of AI in healthcare.

Hal will be joined by Ran Balicer of Clalit Health Services and Isaac Kohane of Harvard Medical School, with the discussion moderated by Gil Bashe of FINN Partners.

If you’re headed to Vegas, we hope you’ll join the conversation.

Get more details on this session: bit.ly/4r9fp0p

@unearthing29 Responsible AI without telemetry is just intent.

@0xWaldox0 @codyschneiderxx Without that, it’s shadow IT with teeth.

@codyschneiderxx Interesting direction. The BYO-agent model only works if agents run in company-controlled sandboxes with auditable logs, scoped tokens, and policy-enforced tools. Otherwise security and compliance will block it.
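As a rough illustration of scoped tokens, policy-enforced tools, and auditable logs working together, the sketch below routes every tool call through one gateway. It does not cover sandboxing, and everything in it (`ScopedToken`, `ToolGateway`, the scope strings, the tool names) is a hypothetical design rather than any specific product's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ScopedToken:
    agent_id: str
    scopes: frozenset  # e.g. frozenset({"crm:read"}); scope names are illustrative


@dataclass
class ToolGateway:
    # Maps tool name -> (required scope, callable).
    tools: dict
    audit_log: list = field(default_factory=list)

    def call(self, token: ScopedToken, tool_name: str, *args):
        entry = self.tools.get(tool_name)
        # Unknown tools and missing scopes are both denied.
        allowed = entry is not None and entry[0] in token.scopes
        # Record every attempt, allowed or not, before anything runs.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": token.agent_id,
            "tool": tool_name,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{token.agent_id} may not call {tool_name}")
        return entry[1](*args)


gateway = ToolGateway(tools={
    "read_crm": ("crm:read", lambda customer_id: f"record {customer_id}"),
    "send_email": ("email:send", lambda to: f"sent to {to}"),
})
token = ScopedToken(agent_id="agent-1", scopes=frozenset({"crm:read"}))
print(gateway.call(token, "read_crm", 42))
```

Because the denied attempt is logged before the exception is raised, the audit trail shows what an agent tried to do, not only what it was allowed to do.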

so I’m starting to believe more and more that the most effective startup employees will have custom agents and personal software they bring to their jobs

and these people will become 100x employees

how I see this working:

personally, the way I operate now is simple

basically whatever I’m working on, I’m trying to automate parts of it in the background while I work on it

I’m either building agents that can take over the task as it comes up

or building software that eliminates it entirely

and this stack of software slowly becomes an extension of me

every week it gets extended, refined, and more capable of doing the things I don’t want to do or the things I shouldn’t be wasting time on

over time, it stops feeling like “tools” and starts feeling like infrastructure

a personal backend

a private ops team

a swarm of specialized agents that quietly remove friction from everything I touch

and once you start working like this, it’s impossible to go back

you start seeing every repetitive action, every manual process, every annoying workflow as a bug

not in the company’s system but in your system

if you fix 3–5 of these bugs every week, you wake up a few months later with:

- your own automations

- your own research agents

- your own monitoring systems

- your own custom interfaces

- your own intelligence layer sitting on top of your job

it’s compounding leverage

and I think that’s where the 100x employee comes from

not from raw talent

not from hustle

but from the quiet accumulation of self-augmenting tools that raise your ceiling until you’re operating on an entirely different curve

most people will still be “doing work.”

a few will be architecting systems that do their work for them

those people win

those people become irreplaceable

those people become their own force multipliers

companies that recognize this and empower it will end up hiring individuals who effectively show up with their own internal R&D department in their github repo

we’re entering the era of the 1000x startup employee

and it’s going to change everything
