ElliotSecOps

6K posts

@ElliotSecOps

Elliot as alias, security as profession. Security & fintech founder

Mistral has released Mistral Small 4, an open weights model with hybrid reasoning and image input, scoring 27 on the Artificial Analysis Intelligence Index
@MistralAI's Small 4 is a 119B mixture-of-experts model with 6.5B active parameters per token, supporting both reasoning and non-reasoning modes. In reasoning mode, Mistral Small 4 scores 27 on the Artificial Analysis Intelligence Index, a 12-point improvement from Small 3.2 (15) and now among the most intelligent models Mistral has released, surpassing Mistral Large 3 (23) and matching the proprietary Magistral Medium 1.2 (27). However, it lags open weights peers with similar total parameter counts such as gpt-oss-120B (high, 33), NVIDIA Nemotron 3 Super 120B A12B (Reasoning, 36), and Qwen3.5 122B A10B (Reasoning, 42).
Key takeaways:
➤ Reasoning and non-reasoning modes in a single model: Mistral Small 4 supports configurable hybrid reasoning with reasoning and non-reasoning modes, rather than the separate reasoning variants Mistral has released previously with their Magistral models. In reasoning mode, the model scores 27 on the Artificial Analysis Intelligence Index. In non-reasoning mode, the model scores 19, a 4-point improvement from its predecessor Mistral Small 3.2 (15)
➤ More token efficient than peers of similar size: At ~52M output tokens, Mistral Small 4 (Reasoning) uses fewer tokens to run the Artificial Analysis Intelligence Index compared to reasoning models such as gpt-oss-120B (high, ~78M), NVIDIA Nemotron 3 Super 120B A12B (Reasoning, ~110M), and Qwen3.5 122B A10B (Reasoning, ~91M). In non-reasoning mode, the model uses ~4M output tokens
➤ Native support for image input: Mistral Small 4 is a multimodal model, accepting image input as well as text. On our multimodal evaluation, MMMU-Pro, Mistral Small 4 (Reasoning) scores 57%, ahead of Mistral Large 3 (56%) but behind Qwen3.5 122B A10B (Reasoning, 75%). Neither gpt-oss-120B nor NVIDIA Nemotron 3 Super 120B A12B supports image input. All models support text output only
➤ Improvement in real-world agentic tasks: Mistral Small 4 scores an Elo of 871 on GDPval-AA, our evaluation based on OpenAI's GDPval dataset that tests models on real-world tasks across 44 occupations and 9 major industries, with models producing deliverables such as documents, spreadsheets, and diagrams in an agentic loop. This is more than double the Elo of Small 3.2 (339) and close to Mistral Large 3 (880), but behind gpt-oss-120B (high, 962), NVIDIA Nemotron 3 Super 120B A12B (Reasoning, 1021), and Qwen3.5 122B A10B (Reasoning, 1130)
➤ Lower hallucination rate than peer models of similar size: Mistral Small 4 scores -30 on AA-Omniscience, our evaluation of knowledge reliability and hallucination, where scores range from -100 to 100 (higher is better) and a negative score indicates more incorrect than correct answers. Mistral Small 4 scores ahead of gpt-oss-120B (high, -50), Qwen3.5 122B A10B (Reasoning, -40), and NVIDIA Nemotron 3 Super 120B A12B (Reasoning, -42)
Key model details:
➤ Context window: 256K tokens (up from 128K on Small 3.2)
➤ Pricing: $0.15/$0.6 per 1M input/output tokens
➤ Availability: Mistral first-party API only. At native FP8 precision, Mistral Small 4's 119B parameters require ~119GB to self-host the weights (more than the 80GB of HBM3 memory on a single NVIDIA H100)
➤ Modality: Image and text input with text output only
➤ Licensing: Apache 2.0 license
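The self-hosting figure in the post above follows from simple arithmetic: FP8 stores one byte per parameter, so 119B parameters come to roughly 119GB of weights. A minimal Python sketch of that estimate is below. The helper names are hypothetical, 1 GB is taken as 1e9 bytes, and the GPU count is a lower bound for the weights only; it ignores KV cache, activations, and runtime overhead, which the post does not quantify.

```python
import math

H100_HBM_GB = 80  # single-GPU memory figure quoted in the post


def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bytes_per_param


def min_gpus_for_weights(weights_gb: float, gpu_memory_gb: float) -> int:
    """Lower bound on GPU count; ignores KV cache, activations, and overhead."""
    return math.ceil(weights_gb / gpu_memory_gb)


fp8_gb = weight_memory_gb(119, 1)  # FP8 stores one byte per parameter
print(f"FP8 weights: ~{fp8_gb:.0f} GB")
print(f"Fits on one H100: {fp8_gb <= H100_HBM_GB}")
print(f"Minimum H100s for weights only: {min_gpus_for_weights(fp8_gb, H100_HBM_GB)}")
```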

Congrats to the @cursor_ai team on the launch of Composer 2! We are proud to see Kimi-k2.5 provide the foundation. Seeing our model integrated effectively through Cursor's continued pretraining & high-compute RL training is the open model ecosystem we love to support. Note: Cursor accesses Kimi-k2.5 via @FireworksAI_HQ's hosted RL and inference platform as part of an authorized commercial partnership.

was messing with the OpenAI base URL in Cursor and caught this:
accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast
so composer 2 is just Kimi K2.5 with RL
at least rename the model ID

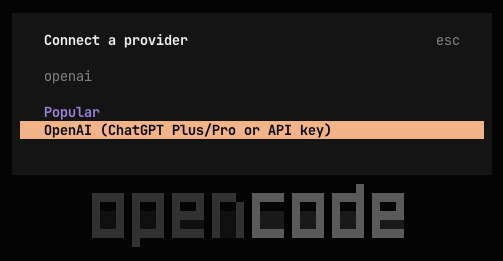

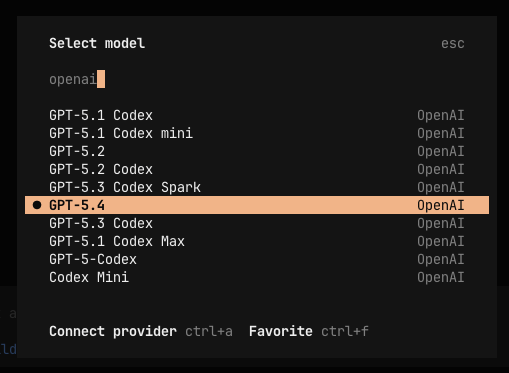

opencode 1.3.0 will no longer autoload the claude max plugin
we did our best to convince anthropic to support developer choice but they sent lawyers
it's your right to access services however you wish but it is also their right to block whoever they want
we can't maintain an official plugin so it's been removed from github and marked deprecated on npm
appreciate our partners at openai, github and gitlab who are going the other direction and supporting developer freedom

Composer 2 is now available in Cursor.

GPT-5.4 > Opus 4.6
And Google still doesn't have anything even remotely competitive.

MiniMax has released MiniMax-M2.7, delivering GLM-5-level intelligence for less than one third of the cost
MiniMax-M2.7 from @MiniMax_AI scores 50 on the Artificial Analysis Intelligence Index, an 8-point improvement over MiniMax-M2.5, which was released one month ago. This is driven by stronger performance on real-world agentic tasks and reduced hallucinations. MiniMax-M2.7 is now ahead of MiMo-V2-Pro (Reasoning, 49) and Kimi K2.5 (Reasoning, 47), and equivalent to GLM-5 (Reasoning, 50) while using 20% fewer output tokens and costing less than a third as much to run. MiniMax-M2.7 is a reasoning-only model and maintains the same per-token pricing as MiniMax-M2.5.
Key takeaways:
➤ Strong performance on real-world agentic tasks: MiniMax-M2.7 achieves a GDPval-AA Elo of 1494, a significant improvement from MiniMax-M2.5 (1203) and ahead of MiMo-V2-Pro (Reasoning, 1426), GLM-5 (Reasoning, 1406), and Kimi K2.5 (Reasoning, 1283). It remains behind frontier models such as GPT-5.4 (xhigh, 1667) and Claude Opus 4.6 (Adaptive Reasoning, max effort, 1606)
➤ Reduced hallucinations: MiniMax-M2.7 scores +1 on the AA-Omniscience Index, up from MiniMax-M2.5 (-40). This is competitive with GPT-5.2 (xhigh, -1) and GLM-5 (Reasoning, +2), and well ahead of Kimi K2.5 (Reasoning, -8). The improvement from M2.5 is purely driven by reduced hallucinations, meaning the model is more likely to abstain from answering when it doesn’t know the answer, rather than guessing. M2.7 achieves a hallucination rate of 34%, lower than Claude Sonnet 4.6 (Adaptive Reasoning, max effort, 46%) and Gemini 3.1 Pro Preview (50%).
➤ Gains across most evaluations compared to MiniMax-M2.5: Outside of the GDPval-AA and AA-Omniscience improvements noted above, MiniMax-M2.7 improves in HLE (+9 p.p.), TerminalBench Hard (+5 p.p.), SciCode (+4 p.p.), IFBench (+4 p.p.), GPQA (+3 p.p.), and LCR (+3 p.p.). We saw a notable regression in τ²-Bench (-11 p.p.).
➤ Increased token use: MiniMax-M2.7 used ~87M output tokens to run the Artificial Analysis Intelligence Index, up 55% from MiniMax-M2.5 (~56M). It remains more token-efficient than other models such as GLM-5 (Reasoning, 110M) and Kimi K2.5 (Reasoning, ~89M)
➤ Leading cost efficiency: MiniMax-M2.7 cost $176 to run the Artificial Analysis Intelligence Index, maintaining the same $0.30/$1.20 per 1M input/output pricing as M2.5. This places it on the Pareto frontier of our Intelligence vs. Cost chart. For context, GLM-5 (Reasoning) cost $547 at equivalent intelligence, Kimi K2.5 (Reasoning) cost $371, and Gemini 3 Flash Preview (Reasoning) cost $278
Key model details:
➤ Context window: 200K tokens (equivalent to MiniMax-M2.5).
➤ Pricing: $0.30/$1.20 per 1M input/output tokens (unchanged from MiniMax-M2.5).
➤ Availability: MiniMax first-party API only.
➤ Modality: Text input and output only (no multimodality).
➤ Licensing: MiniMax has not announced whether MiniMax-M2.7 will be open weights. MiniMax-M2.5 is available under the MIT license.
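The relative claims in the post above (token use up 55%, about 20% fewer tokens than GLM-5, less than a third of the cost) can be checked from the figures it quotes. The Python sketch below is a rough check only: the helper name is hypothetical, and the inputs are the rounded values from the post, so the results are approximate.

```python
def pct_change(new: float, old: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Output tokens (millions) and run cost (USD) as quoted in the post.
m27_tokens, m25_tokens, glm5_tokens = 87, 56, 110
m27_cost, glm5_cost = 176, 547

token_increase = pct_change(m27_tokens, m25_tokens)       # M2.7 vs M2.5
fewer_than_glm5 = -pct_change(m27_tokens, glm5_tokens)    # M2.7 vs GLM-5
cost_ratio = m27_cost / glm5_cost                         # M2.7 vs GLM-5

print(f"Token use vs M2.5: +{token_increase:.1f}%")
print(f"Tokens vs GLM-5: {fewer_than_glm5:.1f}% fewer")
print(f"Cost vs GLM-5: {cost_ratio:.2f}x (under one third: {cost_ratio < 1 / 3})")
```

With rounded inputs the GLM-5 token gap comes out slightly above the 20% stated in the post, which is consistent with the published figure being computed from unrounded counts.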

China is living in a future era

🚨: Screen time destroys toddlers' brains. For every 30 minutes, the risk of speech delay increases 49%.

Andrej Karpathy, former OpenAI founding member, is now building his website with Claude Code.