Jignesh Patel

1.8K posts

@JigneshTrade

Derivatives & Quantitative Data Analyst | Machine Learning & Financial Data Science Expert | Algorithmic Trading Strategist | Team lead

Surat, India · Joined August 2016

531 Following · 12.2K Followers

Trading is changing FAST! Andrej Karpathy's "autoresearch" just broke the internet – now AI can auto-generate quant strategies & research end-to-end. No more manual grinding. youtube.com/watch?v=qb90PP… #AlgoTrading #QuantFinance #AIRevolution

7 years after GPT-2's release, reproducing its capability now costs ~$73 vs. the original ~$43K. That's a 600× drop! AI progress is wild. #AI #MachineLearning #DeepLearning #ArtificialIntelligence #TechTrends
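The figures in the post can be checked with a few lines of arithmetic. This is only a sketch: the dollar amounts are the approximate values quoted above, and the per-year factor assumes the drop was spread evenly over the 7 years, which the post does not claim.

```python
# Approximate figures quoted in the post (not independently verified).
original_cost_usd = 43_000
current_cost_usd = 73
years = 7

# Overall cost ratio; the post rounds this to "600x".
ratio = original_cost_usd / current_cost_usd

# Assumption: a constant yearly rate of decline over the whole period.
yearly_factor = ratio ** (1 / years)

print(f"overall: about {ratio:.0f}x cheaper")
print(f"implied average: about {yearly_factor:.1f}x cheaper per year")
```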

Jignesh Patel retweeted

Three days ago I left autoresearch tuning nanochat for ~2 days on a depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes, today I measured that the leaderboard's "Time to GPT-2" drops from 2.02 hours to 1.80 hours (~11% improvement), this will be the new leaderboard entry. So yes, these are real improvements and they make an actual difference. I am mildly surprised that my very first naive attempt already worked this well on top of what I thought was already a fairly manually well-tuned project.

This is a first for me because I am very used to doing the iterative optimization of neural network training manually. You come up with ideas, you implement them, you check if they work (better validation loss), you come up with new ideas based on that, you read some papers for inspiration, etc etc. This is the bread and butter of what I do daily for 2 decades. Seeing the agent do this entire workflow end-to-end and all by itself as it worked through approx. 700 changes autonomously is wild. It really looked at the sequence of results of experiments and used that to plan the next ones. It's not novel, ground-breaking "research" (yet), but all the adjustments are "real", I didn't find them manually previously, and they stack up and actually improved nanochat. Among the bigger things e.g.:

- It noticed an oversight that my parameterless QKnorm didn't have a scalar multiplier attached, so my attention was too diffuse. The agent found multipliers to sharpen it, pointing to future work.

- It found that the Value Embeddings really like regularization and I wasn't applying any (oops).

- It found that my banded attention was too conservative (I forgot to tune it).

- It found that AdamW betas were all messed up.

- It tuned the weight decay schedule.

- It tuned the network initialization.

This is on top of all the tuning I've already done over a good amount of time. The exact commit is here, from this "round 1" of autoresearch. I am going to kick off "round 2", and in parallel I am looking at how multiple agents can collaborate to unlock parallelism.

github.com/karpathy/nanoc…

All LLM frontier labs will do this. It's the final boss battle. It's a lot more complex at scale of course - you don't just have a single train.py file to tune. But doing it is "just engineering" and it's going to work. You spin up a swarm of agents, you have them collaborate to tune smaller models, you promote the most promising ideas to increasingly larger scales, and humans (optionally) contribute on the edges.

And more generally, *any* metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.
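The propose, evaluate, keep-if-better loop described in this thread can be sketched as a simple greedy search. This is a toy illustration and not the actual autoresearch code: `validation_loss`, `propose`, and `autoresearch` are hypothetical names, the loss is a made-up bowl standing in for a real training run, and random perturbation replaces the agent's planning.

```python
import random


def validation_loss(config):
    # Hypothetical stand-in for "train a small model and measure validation loss".
    # A smooth bowl with its minimum at lr=0.3, weight_decay=0.1.
    return (config["lr"] - 0.3) ** 2 + (config["weight_decay"] - 0.1) ** 2


def propose(config, rng):
    # Perturb one randomly chosen setting. A real agent would pick changes
    # based on the history of earlier experiments instead.
    key = rng.choice(sorted(config))
    candidate = dict(config)
    candidate[key] = config[key] + rng.uniform(-0.05, 0.05)
    return candidate


def autoresearch(config, steps, seed=0):
    rng = random.Random(seed)
    best_loss = validation_loss(config)
    accepted = 0
    for _ in range(steps):
        candidate = propose(config, rng)
        loss = validation_loss(candidate)
        if loss < best_loss:  # keep only changes that improve the metric
            config, best_loss = candidate, loss
            accepted += 1
    return config, best_loss, accepted


start = {"lr": 1.0, "weight_decay": 1.0}
best, loss, accepted = autoresearch(start, steps=700)
print(f"accepted {accepted} of 700 proposals, final loss {loss:.6f}")
```

The only requirement on the metric is the one named above: it has to be cheap enough to evaluate many times, either directly or through a smaller proxy.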

Jignesh Patel retweeted

Andrej Karpathy just released microGPT: the entire GPT algorithm in 243 lines of pure Python with zero dependencies. You can read it in one sitting and actually understand how LLMs work instead of treating them as black boxes. When someone who led Tesla's Autopilot and helped found OpenAI says this is as simple as it gets, it means the field is maturing from research mystery to engineering clarity. This is the K&R of language models.

x.com/karpathy/statu…

Jignesh Patel retweeted

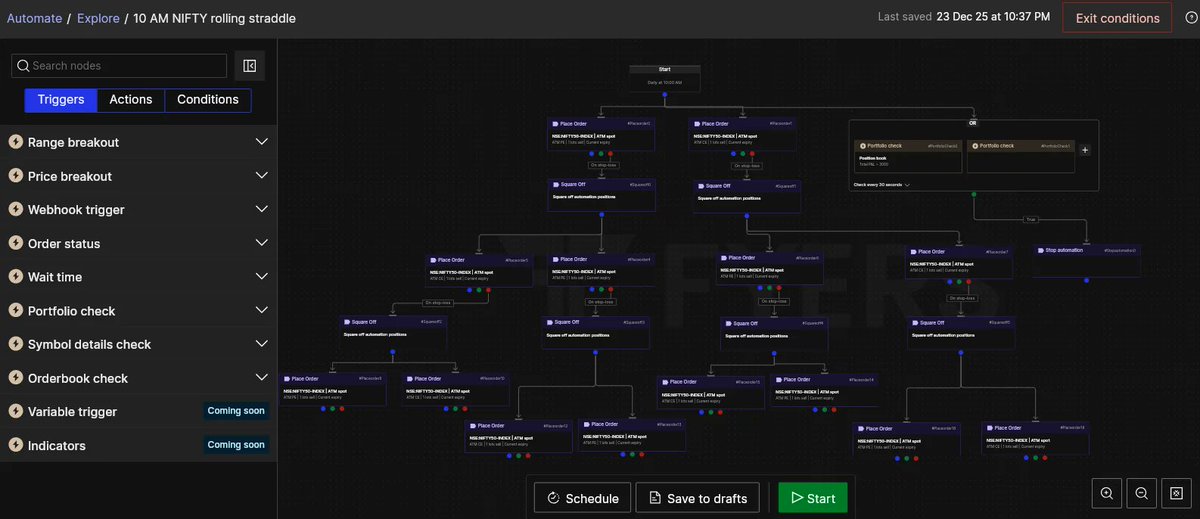

Traders, I’m excited to share that we’ve launched FYERS Automate! It's a first-of-its-kind no-code algo trading platform in India, designed to fundamentally change how you approach the markets.

If you’ve ever felt like you understand the markets but struggle with consistency, timing, or execution, the real problem may not be you. To cite an analogy, the driver is capable, but the vehicle is not built for performance. We’ve worked hard to build that vehicle, thereby giving retail traders professional-grade structure without having to deal with unnecessary tech complexity.

You can visually design your entire trading workflow, from strategy and conditions to execution, all in one go. Think of it as your trading console: a place where you create and automate your ideas from the web or mobile app while you focus on your work, business, or life.

Check it out if you trade Indian markets. It'll be worth your time and money. Below is a screenshot of a sample workflow, FYI. 😎 ✌

Can discuss more in comments.

Jignesh Patel retweeted

9. Stock analysis

Mega prompt:

You are a financial analyst specializing in [SECTOR].

Analyze [STOCK TICKER] as a potential investment.

Analysis framework:

1. Business model and revenue streams

2. Financial health (revenue, profit, cash flow trends)

3. Competitive position and moat

4. Growth catalysts and headwinds

5. Valuation metrics vs peers (P/E, P/S, EV/EBITDA)

6. Technical analysis (chart patterns, support/resistance)

7. Insider trading and institutional ownership

8. Bear case: what could go wrong

9. Bull case: what could go right

10. Recommendation (buy/hold/sell) with price targets

Risk tolerance: [CONSERVATIVE/MODERATE/AGGRESSIVE]

Investment timeline: [SHORT/MEDIUM/LONG TERM]

Portfolio context: [YOUR EXISTING HOLDINGS]

Provide specific entry/exit points and position sizing.

Disclaimer: Add "This is not financial advice" at the end.

Jignesh Patel retweeted

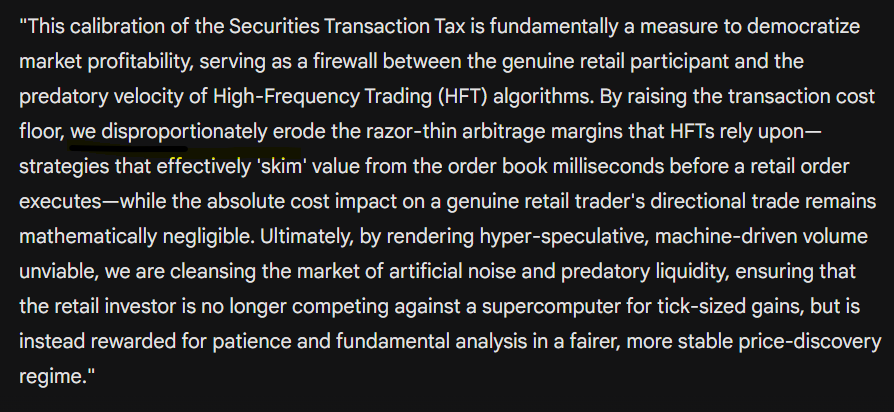

The STT hike hurts HFTs 10x more than retail algos. It destroys the business model of bots that front-run order flow for pennies. Once that predatory churning stops, the market becomes somewhat fairer for retail strategies. #NirmalaSitharaman #Budget2026 #StockMarketCrash

Killing the #options market with high taxes isn't a win—it's dismantling the Indian stock market bit-by-bit.

Options keep the market healthy.

Put sellers absorb shock during crashes.

Big investors need options for hedging. #Nirmalasitaraman #STThike #Budget2026

Jignesh Patel retweeted

New book from @PacktDataML >>

"LLM Design Patterns: A Practical Guide to Building Robust and Efficient AI Systems"

See it at amzn.to/4nODl9a

Jignesh Patel retweeted

#SBIConclave2025 | Finance Minister #NirmalaSitharaman says the government is not here to shut the door on futures and options trading.

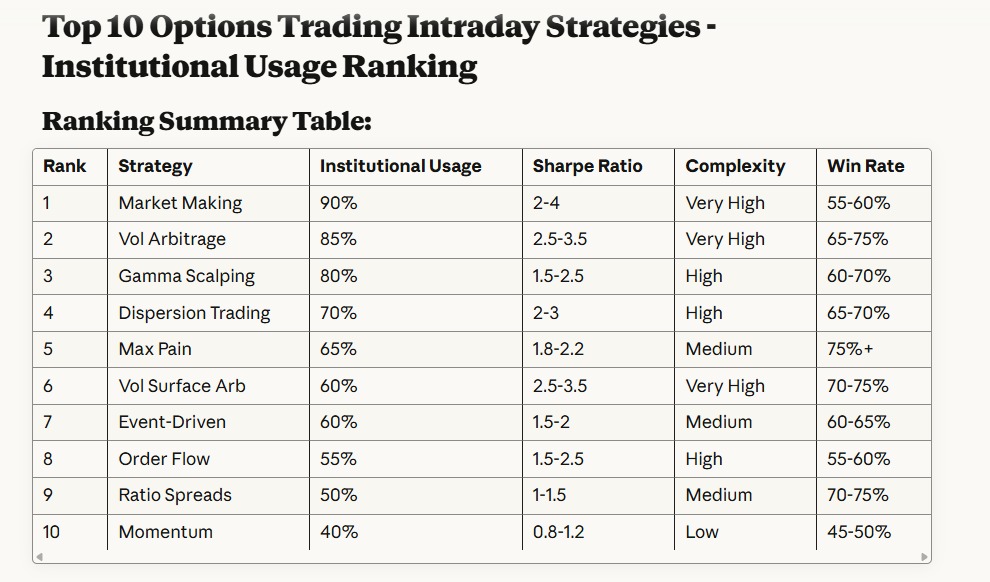

@Nirajkr_ Identify and rank the top 10 intraday options trading strategies most widely used by proprietary trading desks and algorithmic trading firms based on their popularity and effectiveness.
