LenaWithAI

354 posts

@LenaWithAI

obsessed with ai tools that actually build things ♪ { } ✦ sharing everything i learn

GPT-5.5 and GPT-5.5 Pro just appeared in the OpenRouter model registry. Both dated 2026-04-23. Today. openai/gpt-5.5-20260423 openai/gpt-5.5-pro-20260423 The drop is imminent. Hours, not days. Live testing on stream the second endpoints go live. BridgeBench scores same day.

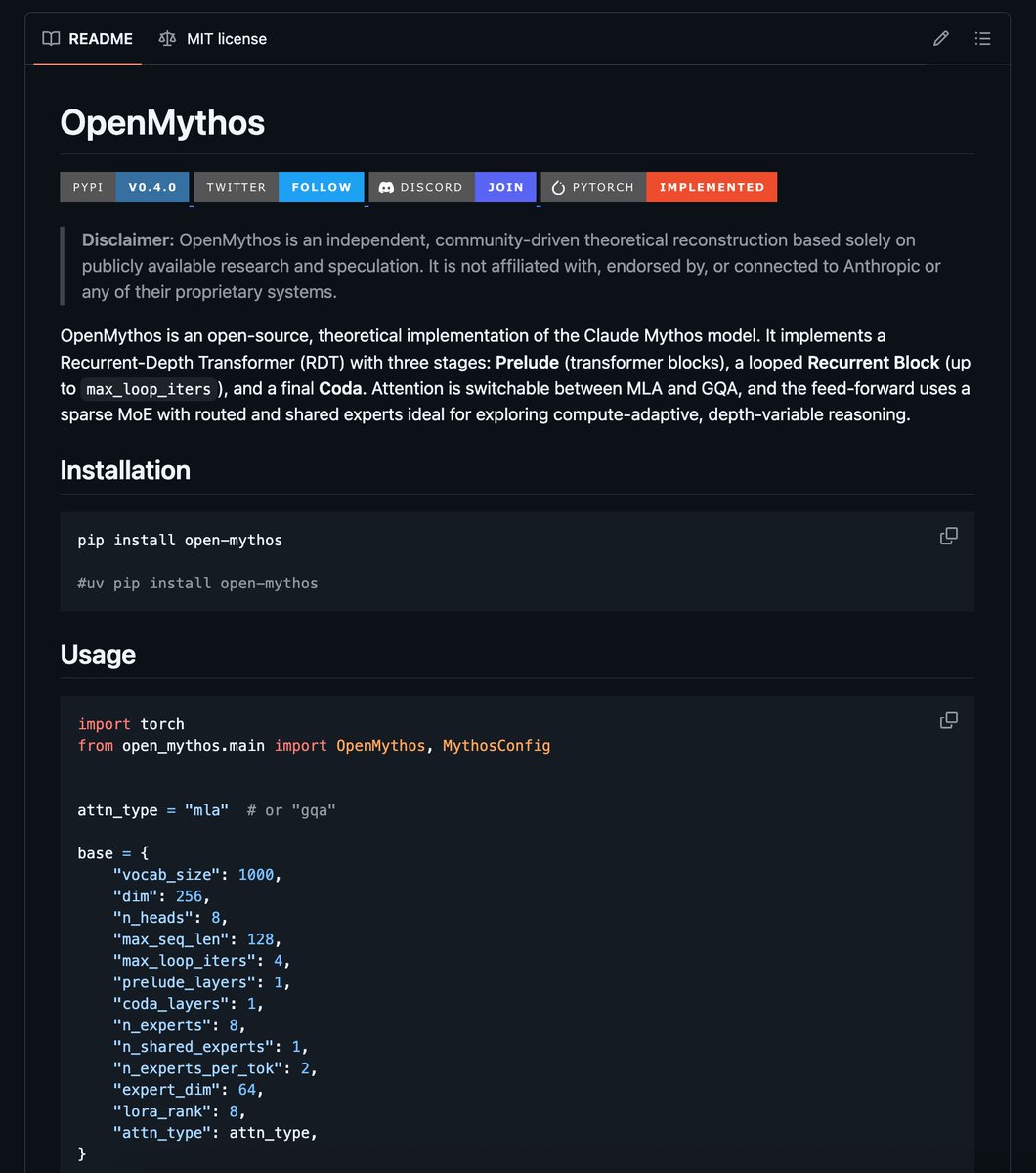

The new Qwen3.6-27B now runs on Luce DFlash. Up to 2x throughput on a single RTX 3090. Qwen3.6-27B ships the same Qwen35 architecture string and identical layer/head dims as 3.5, so the existing DFlash draft + DDTree stack loads it as-is. Throughput is lower than on 3.5. Looking forward to the DFlash team's updated version that implements it as well! Repo in the first comment ⬇️

Google presents Vision Banana, a SOTA unified model for both image understanding and generation. The claim: image generation serves as a universal interface for diverse vision tasks.

People have been paying $500 for private tutors. But Google's NotebookLM can replace them for free, and almost no student is using it right. Here are 6 prompts that turn it into your personal exam-crushing coach: 📌 Save this before finals week

You can make $500 per day if you have: 1. A laptop 2. Wi-Fi 3. Time Here are 10 Claude Prompts that pay you daily:

🚨 Breaking: Spud (GPT 5.5) leaked (for a few minutes), as well as Oai 2.1