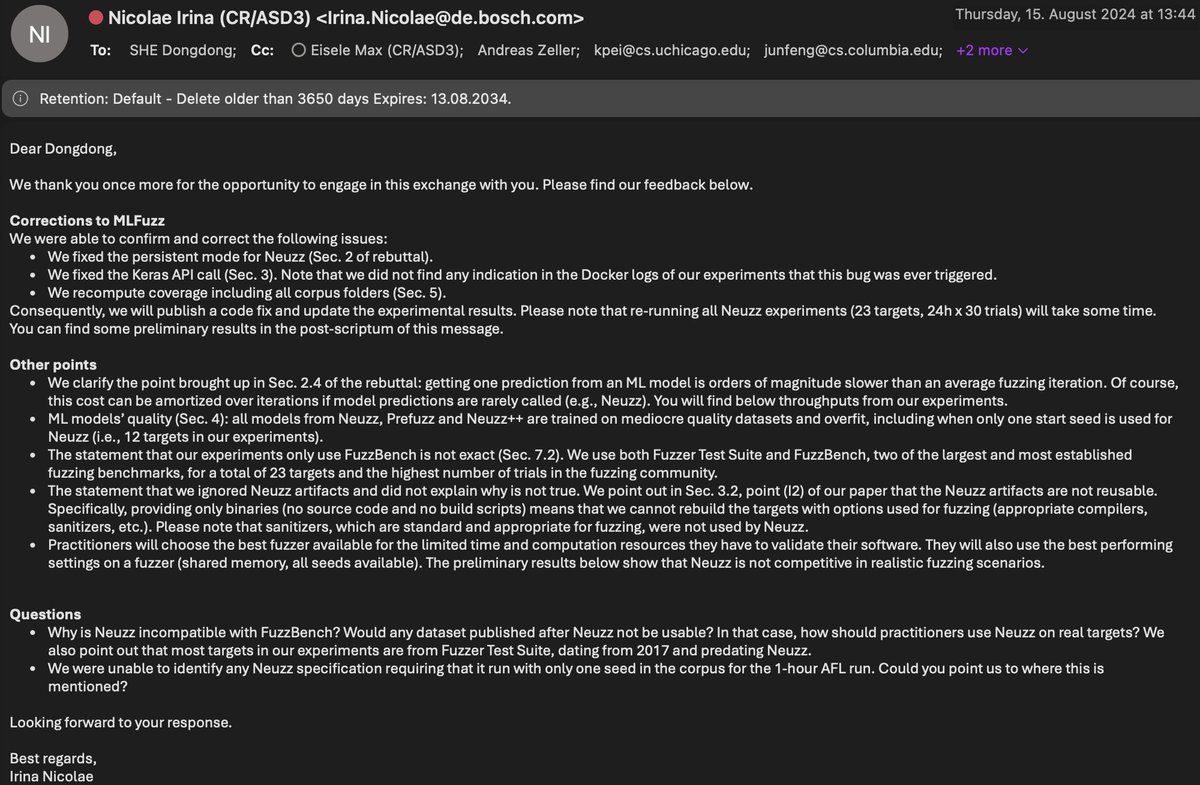

@DongdongShe You seem happy enough to communicate in the public channel: you’re the one who took this to Twitter and shared private conversations. If you’re going to share emails, might as well do so in full and not cherry-pick:

Max Eisele


Ep5. Rebuttal MLFuzz Thanks Irina’s response. We never heard back from you and @AndreasZeller since last month when we sent the last email to ask if you guys were willing to write an errata of MLFuzz to acknowledge the bugs and wrong conclusion. So I am happy to communicate with you in the public channel about this issue and clarify the misleading conclusions in your paper MLFuzz in front of the fuzzing community. Our first email pointed out 4 bugs in MLFuzz and we showed that if you fixed the 4 bugs you can successfully reproduce our results. We also provide a fixed version of your code and preliminary results on 4 FuzzBench programs. Your first response confirmed 3 bugs but refused to acknowledge the most severe one – an error in training data collection. For any ML model, garbage in, garbage out. If you manipulate the training data distribution, you can cook any arbitrary poor results for an ML model. Why are you reluctant to fix the training data collection error? Instead, you insist on running NEUZZ with the WRONG training data and cooking invalid results even though we already notified you of this issue. We suspect maybe that’s the only way to keep reproducing your wrong experiment results and avoid acknowledging your error in MLFuzz. Your research conduct raised a serious issue about how to properly reproduce fuzzing performance in the Fuzzing community. Devil’s advice: blindly, deliberately or stealthily run it with WRONG settings or patch it with a few bugs and claim its performance does not hold? Only an ill-configured fuzzer is a good baseline fuzzer. We think a fair and scientific way to reproduce/revisit a fuzzer should ensure running a fuzzer properly as the original paper did, rather than free-style wrong settings and bug injections. The fact is you guys wrote buggy code (you confirmed in the email) and cooked invalid results and wrong conclusions published in a top-tier conference @FSEconf 2023. 
We wrote a rebuttal to point out 4 fatal bugs in your code and wrong conclusions. A responsible and professional response should directly address our questions about the 4 fatal bugs and wrong conclusions. But your response discussed the inconsistent performance number issue of NEUZZ (due to a different metric choice), the benchmark, seed corpus, IID issue of MLFuzz. They are research questions about NEUZZ and MLFuzz, but they are not the topic of this post: MLFuzz rebuttal. They can only shift the audience's attention but cannot fix the bugs and errors in MLFuzz. I promise I will address every question in your response in a separate post on X, but not in this one. Stay tuned! @is_eqv @moyix @thorstenholz @mboehme_

Excited to announce ACM SIGSOFT Awards 2024!!! Congratulations to all winners for their significant contributions! Here is the blog post by the Awards chair @davidlo2015 and @sigsoft chair @tomzimmermann 👋👋👋 medium.com/@sigsoft/sigso…

A series of posts to follow!

She's right: I have seen _not a single_ ML-based approach that outperforms traditional fuzzing - and many have tried. Can't we just call all fuzzers AI and get the funding anyway?

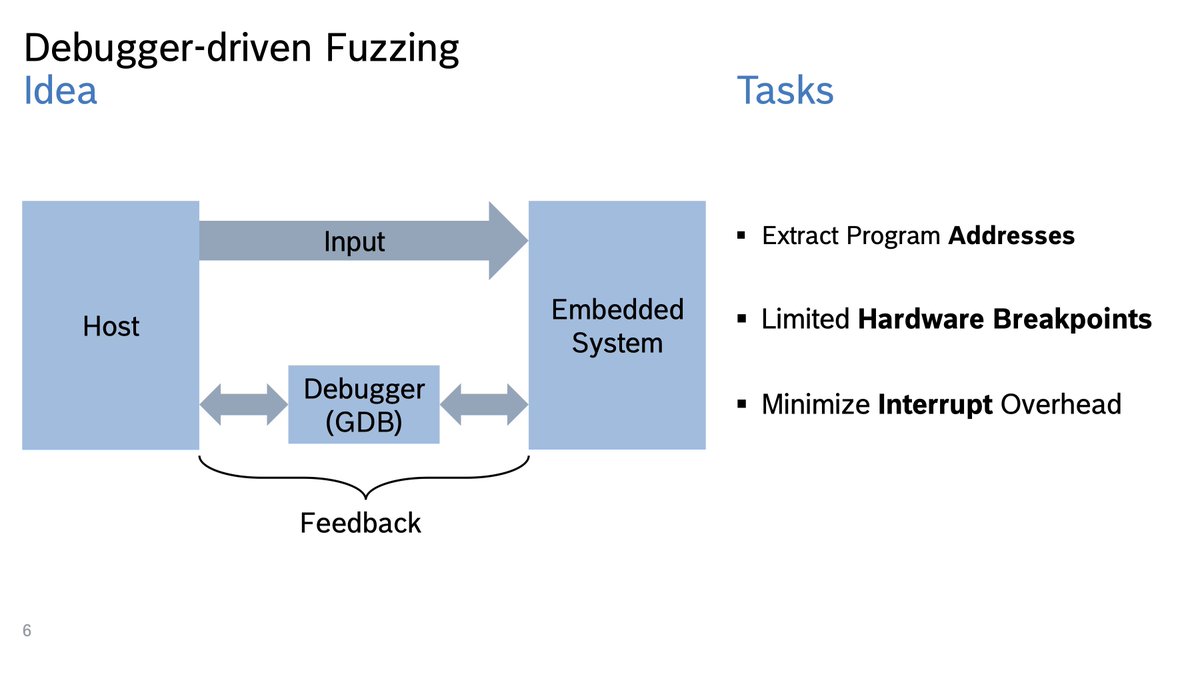

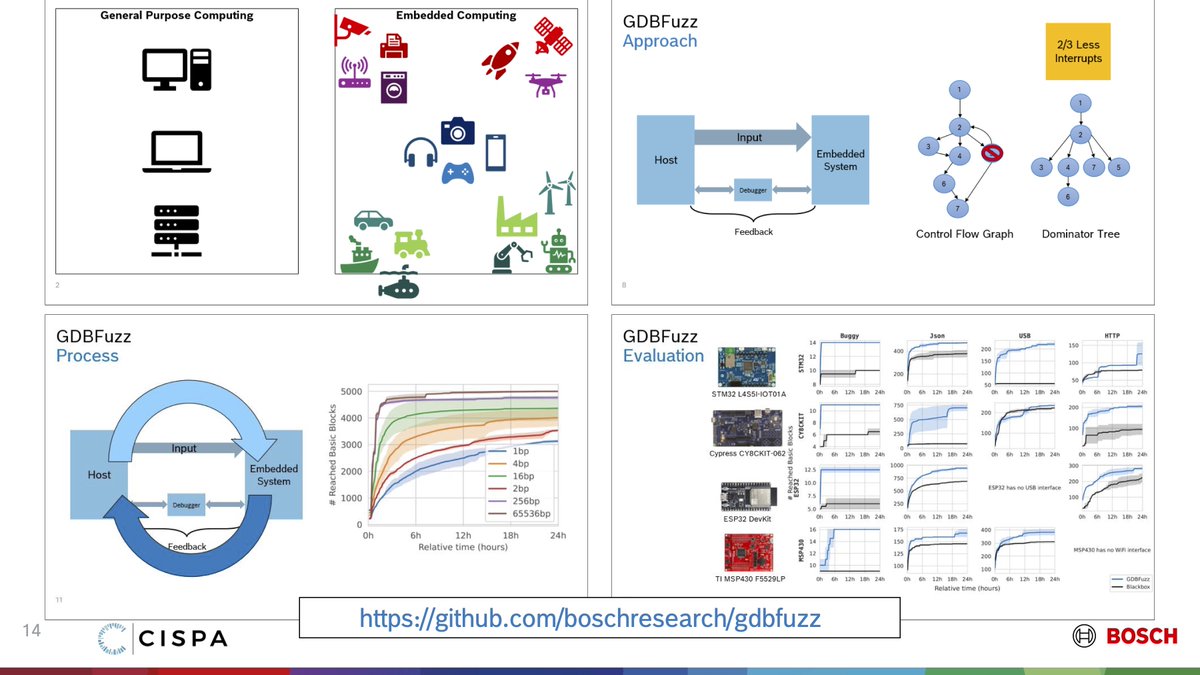

Fuzzing embedded systems! If you’re at @issta_conf or @ECOOPconf today, drop in at 2:15pm at G01 and see how we use GDB (yes, the GNU debugger) to fuzz test software running on embedded systems hardware: 2023.issta.org/details/issta-…