PicnicHealth retweeted

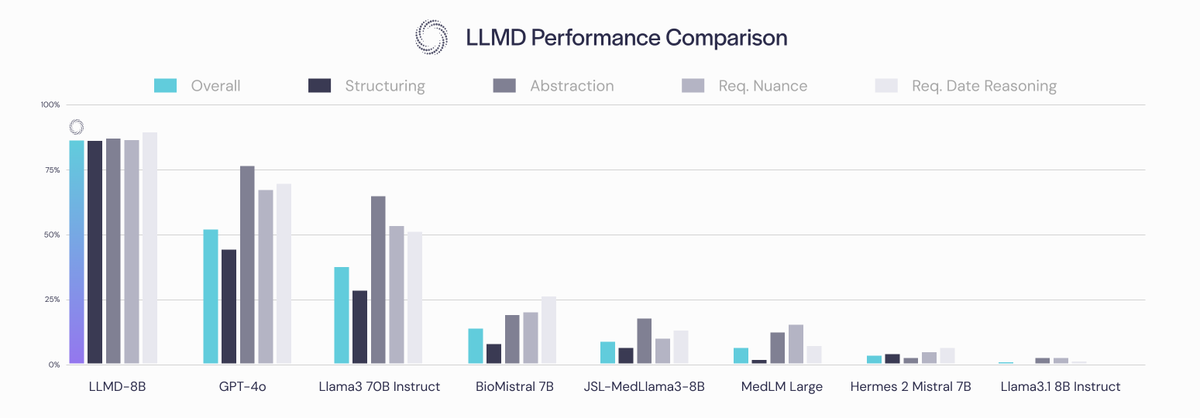

In medicine, are bigger models all you need?

We trained an 8B-parameter model on a large corpus of annotated patient records, and it outperforms GPT-4o.

We're excited to share LLMD (LLM + MD 😉). LLMD achieves SOTA results on PubMedQA and, more importantly, shows a huge advantage in interpreting real-world patient records. 🧵
