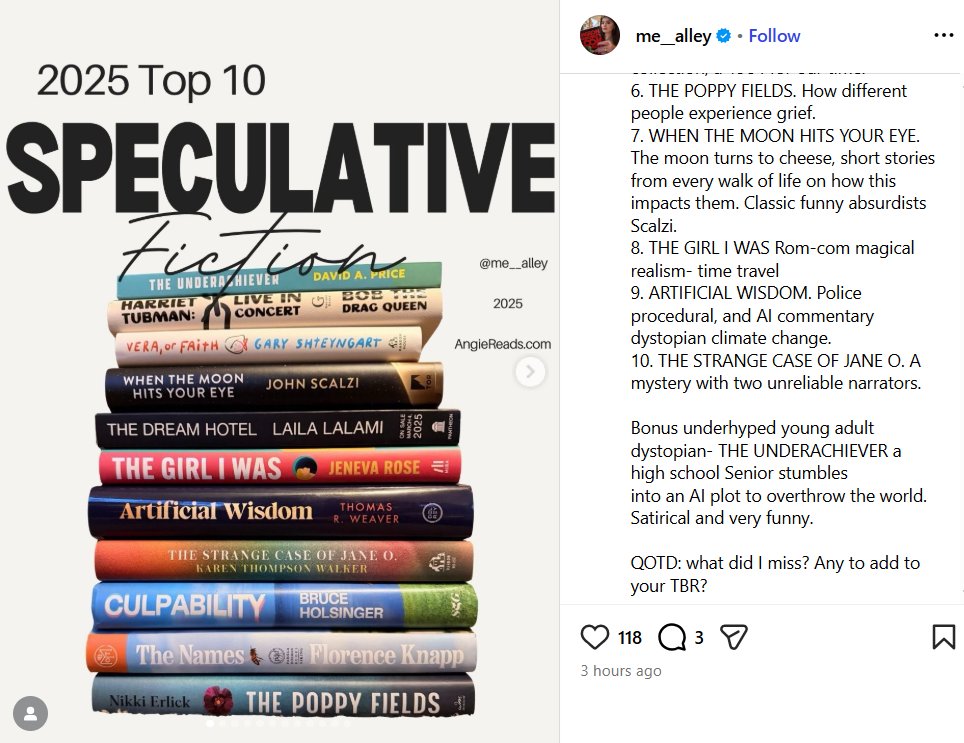

David A. Price

@PriceIndex

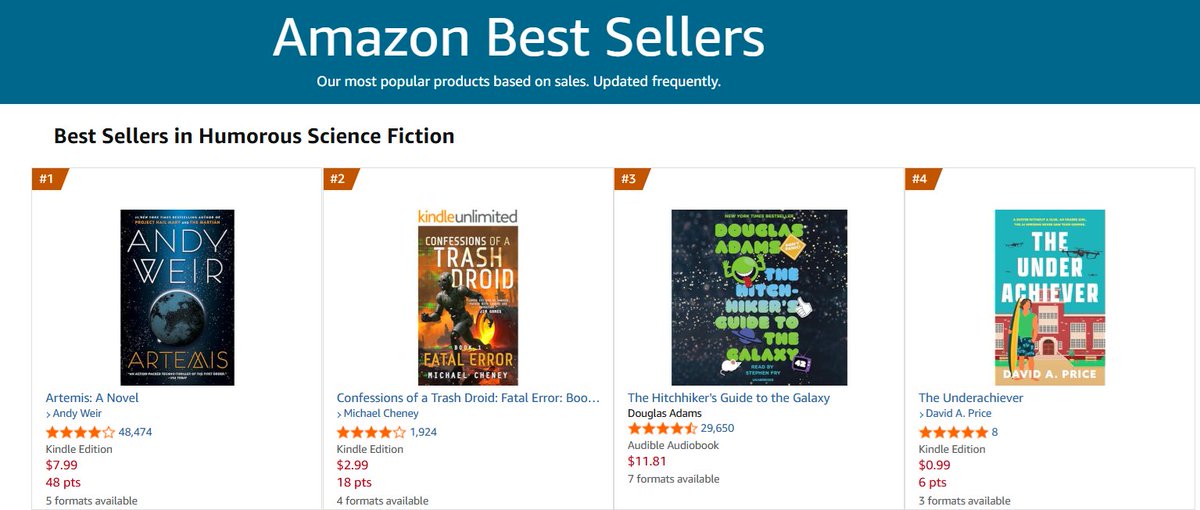

Author of Geniuses at War (digital pioneers @ Bletchley Park), The Pixar Touch, Love and Hate in Jamestown, all from Knopf. New comic fiction: The Underachiever

Here's my big new story on Pixar's “reluctant leader” Pete Docter and the radical changes he’s making to get the “Toy Story” studio back on track. And I've got lots of exclusive details, including about 2 unannounced films. 🧵 wsj.com/business/media…

New blog post with @pawtrammell: Capital in the 22nd Century

Where we argue that while Piketty was wrong about the past, he’s probably right about the future.

Piketty argued that without strong redistribution of wealth, inequality will increase indefinitely. Historically, however, income inequality from capital accumulation has actually been self-correcting. Labor and capital are complements, so if you build up lots of capital, you’ll lower its returns and raise wages (since labor now becomes the bottleneck).

But once AI/robotics fully substitute for labor, this correction mechanism breaks. For centuries, the share of GDP that goes to paying wages has been 2/3, and the share of GDP that’s been income from owning stuff has been 1/3. With full automation, capital’s share of GDP goes to 100% (since datacenters and solar panels and the robot factories that build all the above plus more robot factories are all “capital”).

And inequality among capital holders will also skyrocket - in favor of larger and more sophisticated investors. A lot of AI wealth is being generated in private markets. You can’t get direct exposure to xAI from your 401k, but the Sultan of Oman can. A cheap house (the main form of wealth for many Americans) is a form of capital almost uniquely ill-suited to taking advantage of a leap in automation: it plays no part in the production, operation, or transportation of computers, robots, data, or energy.

Also, international catch-up growth may end. Poor countries historically grew faster by combining their cheap labor with imported capital/know-how. Without labor as a bottleneck, their main value-add disappears.

Inequality seems especially hard to justify in this world. So if we don’t want inequality to just keep increasing forever - with the descendants of the most patient and sophisticated of today’s AI investors controlling all the galaxies - what can we do?

The obvious place to start is with Piketty’s headline recommendation: highly and progressively tax wealth. This might discourage saving, but it would no longer penalize those who have earned a lot by their hard work and creativity. The wealth - even the investment decisions - will be made by the robots, and they will work just as hard and smart however much we tax their owners.

But taxing capital is pointless if people can just shift their future investment to lower-tax countries. And since capital stocks could grow really fast (robots building robots and all that), pretty soon tax havens go from marginal outposts to the majority of global GDP. But how do you get global coordination on taxing capital, when the benefits of defecting are so high and so accessible?

Full automation will probably lead to ever-increasing inequality. We don’t see an obvious solution to this problem. And we think it’s weird how little thought has gone into what to do about it.

Many more thoughts from re-reading Piketty with our AGI hats on at the post in the link below.
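
The complements-versus-substitutes argument can be made concrete with a toy calculation. This is only a sketch under assumptions that are not in the post: a CES production function Y = (a·K^ρ + (1−a)·L^ρ)^(1/ρ), competitive factor pricing, and a weight a = 1/3 chosen so that capital's share is 1/3 when K = L. The function name `capital_share` and the parameter values are hypothetical. Negative ρ stands in for "labor and capital are complements"; ρ = 1 stands in for full substitution.

```python
def capital_share(K, L, rho, a=1/3):
    """Capital's share of income under an assumed CES production function.

    Y = (a*K**rho + (1 - a)*L**rho) ** (1/rho), with each factor paid its
    marginal product. Under those assumptions the capital share is
    a*K**rho / (a*K**rho + (1 - a)*L**rho).
    rho < 0: complements; rho = 0: Cobb-Douglas; rho = 1: perfect substitutes.
    """
    if rho == 0:
        return a  # Cobb-Douglas limit: the share is constant at a
    k_term = a * K**rho
    l_term = (1 - a) * L**rho
    return k_term / (k_term + l_term)


if __name__ == "__main__":
    L = 1.0  # hold labor fixed while capital accumulates
    for K in (1.0, 10.0, 100.0, 1000.0):
        comp = capital_share(K, L, rho=-1.0)  # complements (illustrative rho)
        subs = capital_share(K, L, rho=1.0)   # full substitution
        print(f"K={K:>7.0f}  complements: {comp:.3f}  substitutes: {subs:.3f}")
```

In this toy setup, piling up capital against fixed labor pushes capital's share toward zero when the two are complements, which is the self-correcting case, and toward 100% when capital can fully replace labor.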