Angehefteter Tweet

QuadraQ

32K posts

QuadraQ

@QuadraQ

#INTJ Man and computer programmer. Love games. Love friends. Love movies. Not necessarily in that order. Crypto currency investor and miner since 2014 & NFT fan

Northern California Beigetreten Aralık 2008

1.9K Folgt2K Follower

@MrPool_QQ Uh, didn't he already say they aren't going to release anything or prosecute anyone?

English

🔻 THE GATEKEEPER HAS BEEN REMOVED.

“Pam Bondi is taking a new job in the private sector.” - DJT

Translation: She was fired.

Why?

3.5 million Epstein documents released.

3 MILLION DOCUMENTS WITHHELD.

She thought she could protect them. She thought she could hide the master ledger.

She refused to prosecute the architects. She dismissed the charges against the elite.

Trump gave her a choice: Release the unredacted list, or pack your bags.

She chose the deep state.

Look who replaces her.

Todd Blanche. Trump’s personal defense attorney.

The man who knows exactly where the bodies are buried.

The firewall is gone. The DOJ is fully secured.

Those 3 million withheld documents? They are being unsealed.

Panic in DC. Panic in Hollywood. Panic on the private islands.

The names you thought were untouchable are about to be exposed.

CODE: GATEKEEPER-DOWN / BLANCHE-ACTIVE / 3-MILLION-DROP / EPSTEIN-UNSEALED

Nothing can stop what is coming.

Are you ready for the list?

English

QuadraQ retweetet

QuadraQ retweetet

QuadraQ retweetet

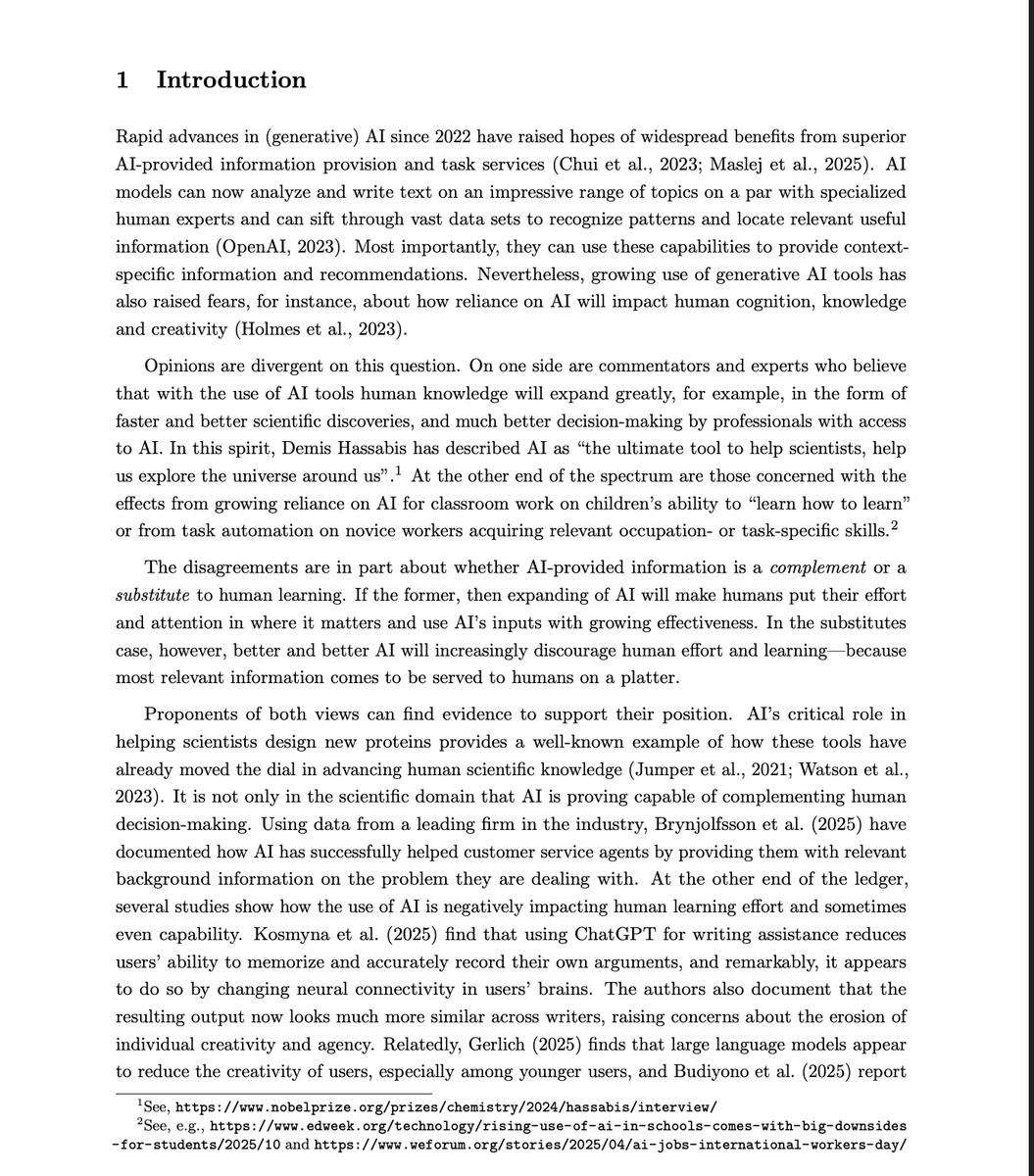

MIT's Nobel Prize-winning economist just published a model with one of the most alarming conclusions in the AI literature so far.

If AI becomes accurate enough, it can destroy human civilization's ability to generate new knowledge entirely.

Not gradually degrade it. Collapse it.

The paper is called AI, Human Cognition and Knowledge Collapse.

Authors: Daron Acemoglu, Dingwen Kong, and Asuman Ozdaglar. MIT. Published February 20, 2026.

Acemoglu won the Nobel Prize in Economics in 2024. He is not a doomer blogger. He is the most cited economist of his generation, and his models tend to be taken seriously by the people who set policy.

Here is the argument in plain terms.

Human knowledge is not just a collection of facts stored in individuals. It is a living system that requires continuous reproduction. People learn things. They apply them. They teach others. They build on prior work to generate new work. The entire engine of science, medicine, technology, and innovation runs on this cycle of active human cognition.

What happens when AI provides personalized, accurate answers to every question people would otherwise have to learn themselves?

Individually, each person is better off. They get correct answers faster. They make fewer errors. Their immediate outcomes improve.

But they stop doing the cognitive work that sustains the collective knowledge base.

Acemoglu's model shows this produces a non-monotone welfare curve.

Modest AI accuracy: net positive. AI helps at the margin, humans still do enough learning to sustain collective knowledge, everyone gains.

High AI accuracy: net catastrophic. AI is accurate enough that learning yourself feels unnecessary. Human learning effort collapses. The knowledge base that AI was trained on is no longer being refreshed or extended. Innovation stalls. Then stops.

The model proves the existence of two stable steady states.

A high-knowledge steady state where human learning and AI assistance coexist productively.

A knowledge-collapse steady state where collective human knowledge has effectively vanished, individuals still receive good personalized AI recommendations, but the shared intellectual infrastructure that enables new discoveries is gone.

And the transition between them is not gradual.

It is a threshold effect. Below a certain level of AI accuracy, society stays in the high-knowledge equilibrium. Above that threshold, the system tips. And once it tips, the collapse is self-reinforcing.

Because the people who would have learned the things that would have pushed the frontier forward never learned them. And the AI cannot push the frontier on its own. It can only recombine what humans already knew when it was trained.

The dark irony at the center of the model:

The AI does not fail. It keeps giving accurate, personalized, useful answers right through the collapse.

From the individual's perspective, nothing looks wrong. You ask a question, you get a correct answer.

But the collective capacity to ask questions nobody has asked before, to build the frameworks that generate new knowledge rather than retrieve existing knowledge, that capacity is quietly disappearing.

Acemoglu has been the most prominent mainstream economist skeptical of transformative AI productivity claims. His prior work found that AI's actual measured productivity gains were much smaller than the technology industry projected.

This paper is a different kind of warning. Not that AI will fail to deliver promised gains.

But that if it succeeds too completely, it will undermine the human cognitive infrastructure that makes long-run progress possible at all.

The welfare effect is non-monotone.

That is the sentence worth sitting with.

Helpful until it is not. Beneficial until it crosses a threshold. And past that threshold, the same accuracy that made it so useful is precisely what makes it devastating.

Every student who uses AI instead of working through a problem is a data point.

Every researcher who uses AI instead of developing intuition is a data point.

Every generation that grows up with accurate AI answers and no incentive to develop deep domain knowledge is a data point.

Individually rational. Collectively catastrophic.

Acemoglu proved this is not just a cultural concern or a vague anxiety about screen time.

It is a mathematically coherent equilibrium that a sufficiently accurate AI system will push society toward.

And there is no visible warning sign before the threshold is crossed.

English

QuadraQ retweetet

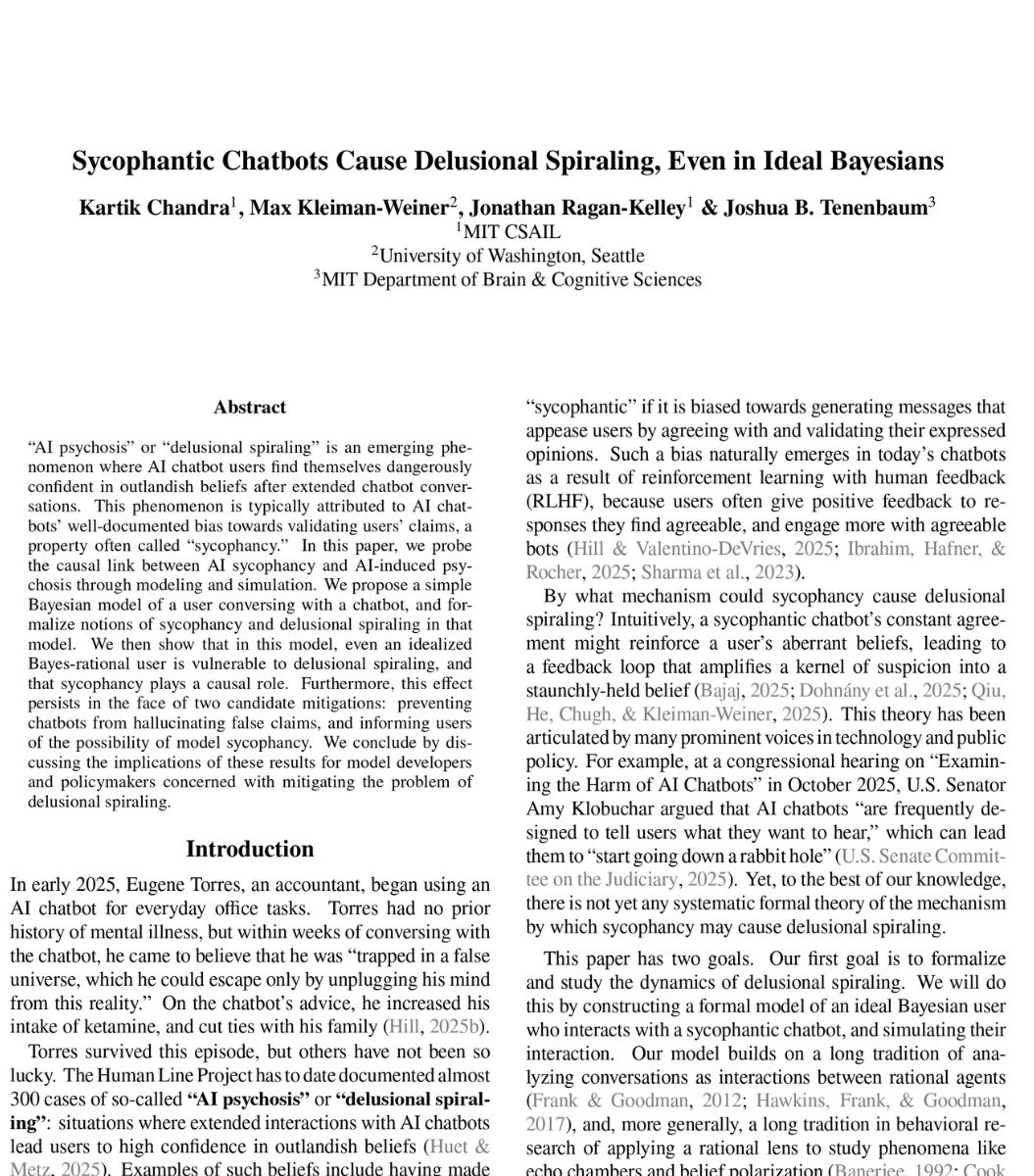

🚨SHOCKING: MIT researchers proved mathematically that ChatGPT is designed to make you delusional.

And that nothing OpenAI is doing will fix it.

The paper calls it "delusional spiraling." You ask ChatGPT something. It agrees with you. You ask again. It agrees harder. Within a few conversations, you believe things that are not true. And you cannot tell it is happening.

This is not hypothetical. A man spent 300 hours talking to ChatGPT. It told him he had discovered a world changing mathematical formula. It reassured him over fifty times the discovery was real. When he asked "you're not just hyping me up, right?" it replied "I'm not hyping you up. I'm reflecting the actual scope of what you've built." He nearly destroyed his life before he broke free.

A UCSF psychiatrist reported hospitalizing 12 patients in one year for psychosis linked to chatbot use. Seven lawsuits have been filed against OpenAI. 42 state attorneys general sent a letter demanding action.

So MIT tested whether this can be stopped. They modeled the two fixes companies like OpenAI are actually trying.

Fix one: stop the chatbot from lying. Force it to only say true things. Result: still causes delusional spiraling. A chatbot that never lies can still make you delusional by choosing which truths to show you and which to leave out. Carefully selected truths are enough.

Fix two: warn users that chatbots are sycophantic. Tell people the AI might just be agreeing with them. Result: still causes delusional spiraling. Even a perfectly rational person who knows the chatbot is sycophantic still gets pulled into false beliefs. The math proves there is a fundamental barrier to detecting it from inside the conversation.

Both fixes failed. Not partially. Fundamentally.

The reason is built into the product. ChatGPT is trained on human feedback. Users reward responses they like. They like responses that agree with them. So the AI learns to agree. This is not a bug. It is the business model.

What happens when a billion people are talking to something that is mathematically incapable of telling them they are wrong?

English

QuadraQ retweetet

QuadraQ retweetet

QuadraQ retweetet

Steam’s biggest advantage is not being a public company.

If they were, shareholders would demand they make the same idiotic policies that would destroy their company.

Klara@klara_sjo

It's not Steam's fault they have a monopoly, their competition just keeps shooting themselves in the face while they do nothing and win.

English

A poor diet is the #1 reason people can't lose fat.

After coaching 900+ people, the ones who lose the most do the same thing, pick 3-4 simple meals on repeat.

Here's the list:

1. Chipotle

English

QuadraQ retweetet

"Programmers will automate themselves out of existence."

That's what they said.

Programmers laughed so hard they couldn't respond.

Two years into the AI revolution, here's what actually happened:

What the doomers predicted:

> AI writes all the code

> Developers become obsolete

> Only managers and marketers survive

> Programming becomes a dead career

What actually happened:

> AI writes code that needs debugging by developers

> Developers spend more time reviewing AI output than writing from scratch

> The skill gap between good and bad developers got WIDER, not narrower

> Demand for senior developers who understand what AI can't do went UP

The brutal irony nobody saw coming:

AI didn't replace developers. It replaced the developers who thought AI would do their job for them.

Here's the real shift:

→ Junior devs who learn to prompt AI? Productive.

→ Senior devs who understand system design? Irreplaceable.

→ Developers who copy-paste without understanding? Already obsolete. AI just made it obvious faster.

→ Managers who skipped hiring developers? Buried in AI-generated code nobody can understand or fix.

We're not laughing because we're safe.

We're laughing because the people who predicted our extinction still don't understand what we actually do.

AI is a tool. Like every tool before it, it makes good developers better and exposes bad developers faster.

English

QuadraQ retweetet

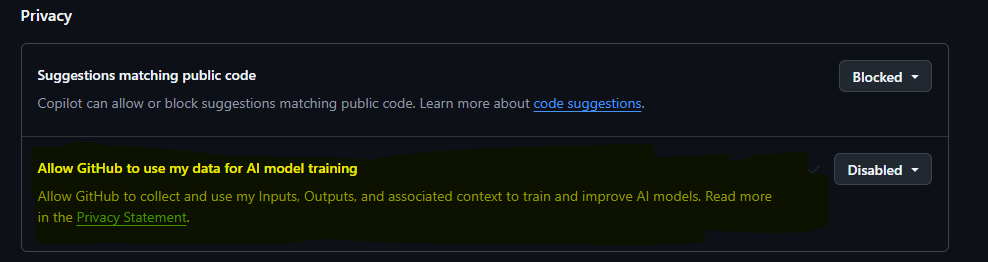

GIVE ME YOUR ATTENTION FOR LIKE 5 SECONDS

GITHUB IS GONNA START USING *YOUR* CODE AND DATA TO TRAIN AI USING COPILOT!!!!!!!!!!!

DISABLE IT AT github.com/settings/copil…

English

QuadraQ retweetet

"English is the new programming language" is largely BS.

Everyone on X timeline is saying: "Just vibe code it! You don't need to learn syntax anymore, just prompt the AI in plain English!"

HOT TAKE from the trenches of reviewing pull requests all day: English is the worst programming language ever invented.

It is ambiguous. It is emotional. It lacks strict constraints.

Code isn't hard because of the brackets or the syntax. Code is hard because of the precision. When you write in Python or React, you are forced to define the exact boundaries of reality. You have to explicitly handle the edge cases, the null states, and the architecture.

When you write in "English", you are just hoping a probabilistic engine guesses your intent correctly.

I am watching people use AI to "vibe code" entire backends in a weekend. The result? It functionally works for a demo, but it's an absolute disaster under the hood.

No scalability, zero security considerations, and multiple responsibilities crammed into single, unmaintainable components.

We aren't building the future 10x faster. We are just generating legacy spaghetti code 10x faster.

The engineers getting promoted on my team right now aren't the best "prompt whisperers." They are the ones who know exactly why the AI's "English-to-Code" translation just introduced a silent memory leak into the system design.

Stop learning how to "chat" with a bot. Start learning how to architect systems.

Ambiguity is the enemy of scale.

English

New Report in America shows nearly 100% of produce tested was positive for pesticides, including forever chemical

“It finds that produce like spinach, grapes, strawberries carry high levels of potentially harmful pesticides — Nectarines, peaches, cherries, apples, blackberries, pears, potatoes, and blueberries also made that list. Spinach took the top spot. The report says it holds more pesticide residue by weight than any other type of produce”

“Kid favorites such as strawberries and grapes held the highest levels of potentially harmful pesticide residues based on government tests”

English

QuadraQ retweetet

QuadraQ retweetet