Pinned Tweet

QubitValue

3.9K posts

@QubitValue

Quantum computing consulting, quantum algorithm development and training - Your partner in the #QuantumRevolution. Founded in 2021 #QuantumComputing

Finland · Joined February 2021

36 Following · 1.2K Followers

Quantum computing stocks are having a moment, but the underlying variety of approaches is the quiet story investors often miss.

The most watched tickers represent entirely different hardware philosophies: trapped-ion systems available through major clouds, quantum annealing paired with open-source developer tools, and integrated photonics promising portable machines and entanglement-based authentication.

There is no single winning architecture yet. The race is not just about who boasts the highest qubit count—it is about who finds real-world problems worth solving and delivers solutions customers will actually pay for.

These remain speculative, long-term positions tied to complex research milestones and an uncertain pace of commercial adoption. The excitement is warranted, but so is patience #QuantumComputing

Quantum stocks are catching Wall Street's attention again. IonQ, D-Wave, and Quantum Computing Inc. are leading recent trading volume in the space, each representing a distinct approach to the technology — trapped ions, quantum annealing, and integrated photonics, respectively.

What makes this interesting is the breadth of commercial models emerging. Cloud-based quantum access, portable room-temperature machines, quantum random number generation, and entanglement-based authentication demonstrate that the ecosystem is diversifying well beyond the "build a bigger quantum computer" narrative.

Worth noting: these remain speculative, long-term positions driven by research milestones and partnership momentum rather than traditional revenue metrics. The real signal here is not any single stock price. It is that the market is increasingly treating quantum computing as a sector with multiple viable paths forward, not a single-winner technology race.

That growing diversity of approaches is exactly what a maturing industry looks like #QuantumComputing

Photonic computing just got a lot more practical.

A new photonic reservoir computing platform has moved from research prototype to deployment-ready hardware, arriving as a standard PCIe plug-in card that processes data using light instead of electrons. The system is designed for real-time AI inference and advanced signal processing at the edge, targeting the kinds of latency-sensitive, power-constrained environments where traditional electronic processors face physical limits.

Hybrid photonic-digital architectures that deliver ultra-low latency and significantly reduced power consumption open new doors in telecom, autonomous vehicles, healthcare, and industrial monitoring. Time-series prediction and anomaly detection at the edge, without the energy footprint of conventional hardware, is exactly the kind of capability that moves photonics from promising to useful.

What makes this moment interesting for the broader quantum and photonic ecosystem is the form factor. A PCIe card that slots into existing infrastructure dramatically lowers the adoption barrier. The hardest part of any advanced computing technology isn't building it in a lab—it's making it something an engineer can actually deploy on a Tuesday afternoon.

This is the trajectory we keep watching: photonic and quantum-adjacent technologies finding their way into real-world workflows, one practical milestone at a time #QuantumComputing

Market enthusiasm and technological maturity often operate on very different timelines. The underlying momentum in the quantum sector is real, with developers making genuine technical progress in scaling qubit counts, advancing modular architectures, and expanding cloud platforms.

A few observations about where the industry actually stands:

The competition between quantum hardware approaches like superconducting, trapped ion, and photonic is far from settled. Each offers distinct tradeoffs in scalability, fidelity, and operational complexity. The solutions that succeed long-term will be the ones that consistently solve complex problems for enterprise users.

Commercial adoption milestones are becoming just as important as technical milestones. A 1,000-qubit system announcement is an impressive achievement, but the transition from specialized research tool to practical commercial product is where the heavy lifting happens.

The signal through the noise is clear: quantum computing is progressing steadily, but the timeline for broad commercial impact rewards patience and practical application #QuantumComputing

Three distinct approaches to quantum hardware are now trading as the sector's most active stocks by dollar volume: trapped-ion systems with major cloud integrations, quantum annealing with hybrid solvers, and integrated photonics running at room temperature.

This lineup reveals the sheer diversity of bets the market is placing. These aren't just competitors on the same track—they represent fundamentally different architectures, each with its own thesis on how quantum advantage arrives. Cloud-accessible ion traps, purpose-built optimization machines, and portable photonic devices barely share a vocabulary, let alone a roadmap.

That diversity is a sign of a maturing ecosystem. Capital spreading across competing paradigms shows the market is pricing in genuine technical uncertainty, which is the honest position to hold right now. Nobody has a monopoly on the path to broad commercial adoption.

The common thread is that all three are building real access points for enterprise users, whether through cloud marketplaces, developer toolkits, or turnkey hardware. Providers lowering the barrier to experimentation today are positioning themselves to capture demand when quantum workloads scale tomorrow #QuantumComputing

Three very different bets on the same frontier are drawing investor attention right now: trapped-ion systems delivered through major cloud platforms, quantum annealing with hybrid cloud solvers, and integrated photonics devices that operate at room temperature.

What makes this moment fascinating is the architectural diversity. The market is not converging on a single approach. It is branching out, with each pathway targeting different problem sets and deployment models.

Cloud-accessible superconducting and trapped-ion machines serve complex research and optimization workloads. Annealing systems carve out niches in logistics and materials science. Photonic approaches promise lower infrastructure overhead and novel applications in authentication and random number generation.

For businesses watching from the sidelines, the practical takeaway is that quantum is not a monolith. The technology you eventually adopt will depend on your specific use cases, latency requirements, and integration constraints, not on which stock ticker had the best week.

We are still in the infrastructure-building phase. Companies laying the groundwork today in R&D, partnerships, and IP portfolios are making long-horizon bets that commercial adoption will reward patience over hype.

Understanding what each approach actually does, and where its limitations lie, is the difference between an informed strategy and expensive guesswork #QuantumComputing

The most compelling framing of quantum risk we have seen recently: it is not about when quantum computers arrive—it is about the data being harvested right now.

The "harvest now, decrypt later" threat reframes the entire timeline. Adversaries are already collecting encrypted traffic today, banking on quantum decryption capabilities arriving by 2029. That means every organization storing sensitive data behind legacy encryption is accumulating what amounts to cryptographic debt, and the interest rate is about to spike.

Machine identities now outnumber human employees 82 to 1 in the average enterprise. If quantum computing enables attackers to forge digital certificates, they do not need to break in. They log in with perfectly credentialed autonomy and operate at machine speed. The perimeter is no longer a wall—it is an identity question.

The practical response is already taking shape: cryptographic bills of materials to map every algorithm and certificate, crypto-agility frameworks to swap out vulnerable encryption without disruption, and cipher translation at the network edge to protect legacy systems.
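As a rough illustration of the first step, a cryptographic bill of materials can start as a plain inventory that flags public-key algorithms known to be breakable by Shor's algorithm. The sketch below is a minimal example under stated assumptions: the `CryptoAsset` record, the `needs_migration` helper, and the sample system names are hypothetical and do not follow any particular CBOM standard or vendor format.

```python
from dataclasses import dataclass

# Public-key families that Shor's algorithm would break on a sufficiently
# large fault-tolerant quantum computer. Symmetric ciphers such as AES-256
# are deliberately not listed here.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDSA", "ECDH", "EDDSA"}


@dataclass(frozen=True)
class CryptoAsset:
    """One inventory row (hypothetical schema, for illustration only)."""
    system: str     # where the algorithm is deployed
    purpose: str    # what it protects
    algorithm: str  # algorithm family name, e.g. "RSA"


def needs_migration(inventory):
    """Return the assets whose algorithm family is quantum-vulnerable."""
    return [a for a in inventory if a.algorithm.upper() in QUANTUM_VULNERABLE]
```

Feeding this a list of `CryptoAsset` rows returns only the entries that rely on vulnerable public-key cryptography, which is the shortlist a crypto-agility effort would tackle first.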

Perhaps most telling: compliance mandates like NSA CNSA 2.0 require quantum-safe standards for new acquisitions by 2027. This is no longer a thought exercise. It is a procurement requirement.

Organizations treating post-quantum migration as a 2030 problem may find themselves explaining to boards why data stolen in 2025 became a breach disclosure in 2029 #QuantumComputing

The quantum computing threat to Bitcoin is no longer a theoretical debate. It is becoming the most consequential coordination challenge in the network's history.

Recent industry discussions reveal a striking shift. The financial institutions bringing millions of new investors into digital assets now view themselves as active stakeholders in the post-quantum upgrade path, rather than mere spectators.

Unlike previous upgrades like Taproot, migrating to post-quantum cryptography is an existential requirement. This changes the urgency and political complexity entirely. Institutions are accustomed to proactive risk management, which can contrast with the deliberate pace of decentralized consensus. As one strategist noted with an apt military analogy, if intelligence suggests an attack window between midnight and dawn, no competent commander waits until the final hour to position defenses.

The governance challenge may ultimately prove harder than the cryptographic one. Balancing a decentralized ethos with the economic realities of firms holding billions in assets is uncharted territory. Add in the thorny issue of handling existing quantum-vulnerable coins, and the community faces a historic debate.

This tension between institutional responsibility and decentralized design will define the next chapter of Bitcoin. Fortunately, awareness is high, resources are ample, and these conversations are happening well before any quantum machine can break the network.

The quantum era does not need to be a crisis. With thoughtful preparation, it becomes an upgrade that demonstrates the ultimate resilience of decentralized systems under pressure #QuantumComputing

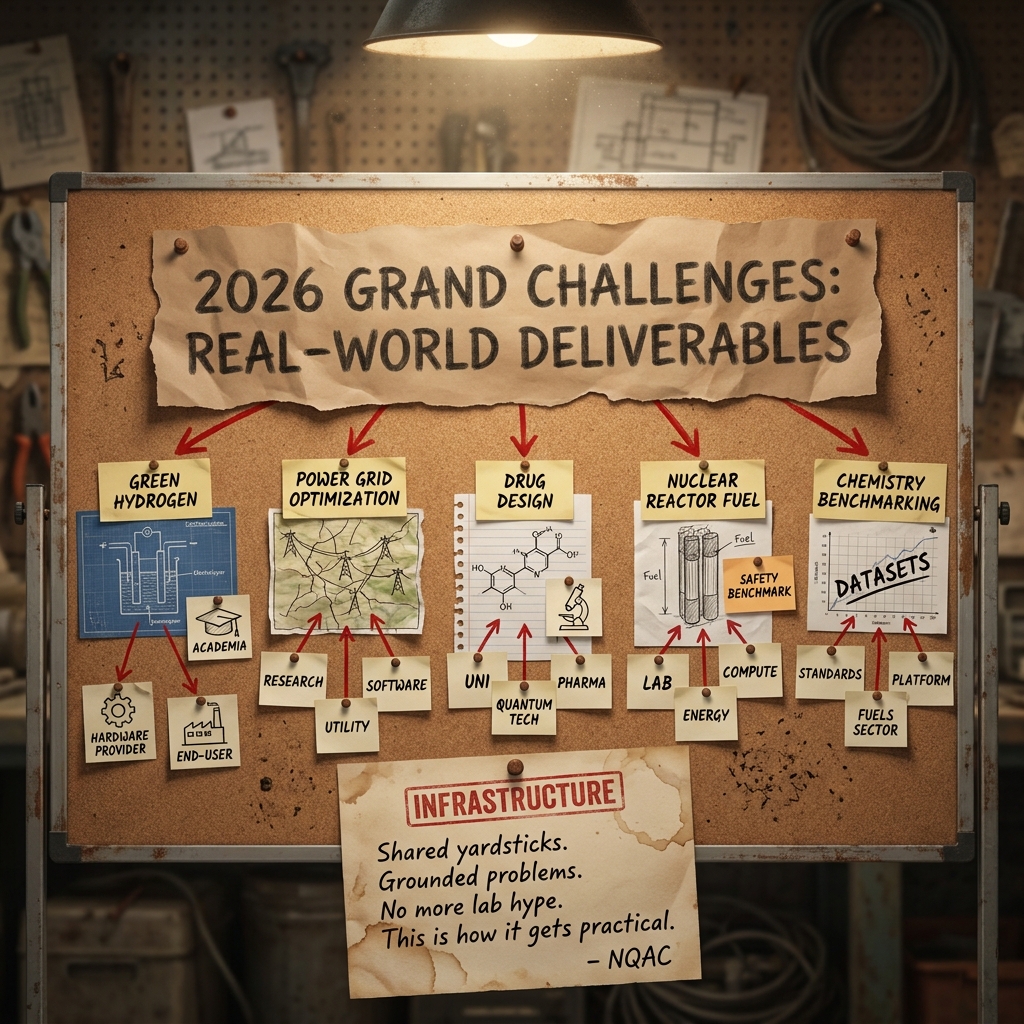

Five new projects just landed that show exactly where quantum computing meets the real world. The National Quantum Algorithm Center has announced its 2026 Grand Challenges awards, funding collaborations that pair academic researchers with hardware providers and industrial end-users. The focus areas read like a practical quantum wishlist: green hydrogen production, power grid optimization, drug design, quantum chemistry benchmarking for fuels, and nuclear reactor fuel-assembly optimization.

What makes this program worth watching is its structure. Three-way collaborations between universities, quantum companies, and industry keep the work grounded in actual use cases rather than focusing solely on theoretical elegance. The emphasis on open-source benchmarks and standardized datasets is equally significant. One of the biggest bottlenecks in applied quantum computing today is not just building better algorithms, but knowing how to accurately measure whether they outperform classical alternatives on problems that matter.

The breadth of energy-sector applications is striking. From simulating electrocatalysis for CO₂ utilization to optimizing fuel assemblies in nuclear reactors, these projects reflect a growing recognition that quantum computing's near-term value will likely concentrate in domains where modest computational advantages translate into enormous economic and environmental returns.

This is the kind of infrastructure—not just hardware or theory, but structured pipelines from research to deployment—that moves an industry from promising to productive #QuantumComputing

Five problems, five three-way collaborations, one clear signal: quantum algorithm development is getting serious about real-world deliverables.

The National Quantum Algorithm Center just announced its 2026 Grand Challenges awards, and the project lineup reads less like a research wishlist and more like an industrial roadmap. Green hydrogen production, power grid optimization, drug design, nuclear reactor fuel assembly, and chemistry benchmarking for the fuels sector — each backed by a partnership linking academia, hardware/software providers, and end-users who actually need the answers.

What stands out here is the structure. Pairing postdoctoral researchers with both quantum technology companies and industrial partners forces the work to stay grounded in problems that matter outside the lab. The emphasis on open-source benchmarks and standardized datasets is equally notable — the field has long needed shared yardsticks to separate genuine algorithmic advantage from optimistic extrapolation.

This is the kind of infrastructure that turns a promising technology into a practical one #QuantumComputing

Three distinct approaches to quantum hardware currently dominate the sector's market activity: cloud-accessed trapped ions, quantum annealing with hybrid solvers, and room-temperature integrated photonics.

The industry is not converging on a single architecture. It is branching out, with different players betting on fundamentally different paths to commercial value. This diversity suggests the field is still in its experimental phase, where the ultimate hardware winner remains undecided and the market may never narrow to just one modality.

Yet the true common thread is accessibility. With cloud delivery, advanced developer frameworks, and intuitive circuit creation platforms, the race has changed. It is no longer just a raw contest of qubit counts. The defining metric is how quickly platforms can put usable quantum capability into the hands of enterprise teams.

The commercial layer of quantum computing is forming in real time. The organizations building robust bridges between complex physics and practical business problems are the ones shaping what comes next #QuantumComputing

The quantum computing industry just crossed a meaningful threshold: fault tolerance is looking less like a distant dream and more like an engineering problem with a timeline.

Recent blueprints for scalable, fault-tolerant systems are turning heads among analysts, and the underlying message matters more than any single company's roadmap. Advances in logical qubit development, lower-overhead error correction, and more efficient system architectures are collectively shrinking what was once assumed to require millions of physical qubits.

The shift in framing is significant. When analysts start describing the path to commercial quantum systems as an execution challenge rather than an unsolved scientific question, it signals that the industry is maturing in ways that matter for real-world applications—from drug discovery and financial modeling to logistics and cryptography.

We are watching the quantum sector move from "if" to "how fast" #QuantumComputing

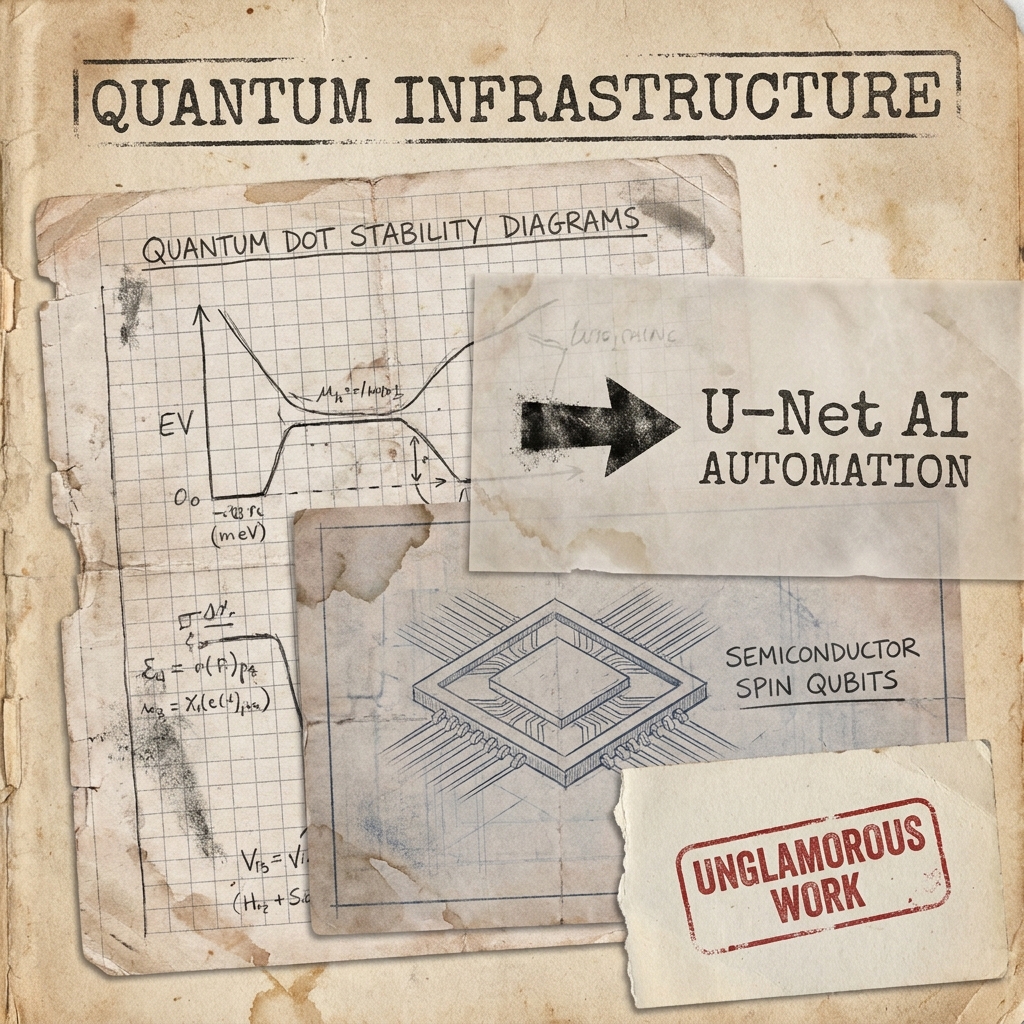

Scaling up quantum computers means tuning potentially millions of qubits, and doing that by hand is a quiet bottleneck that calls for automation.

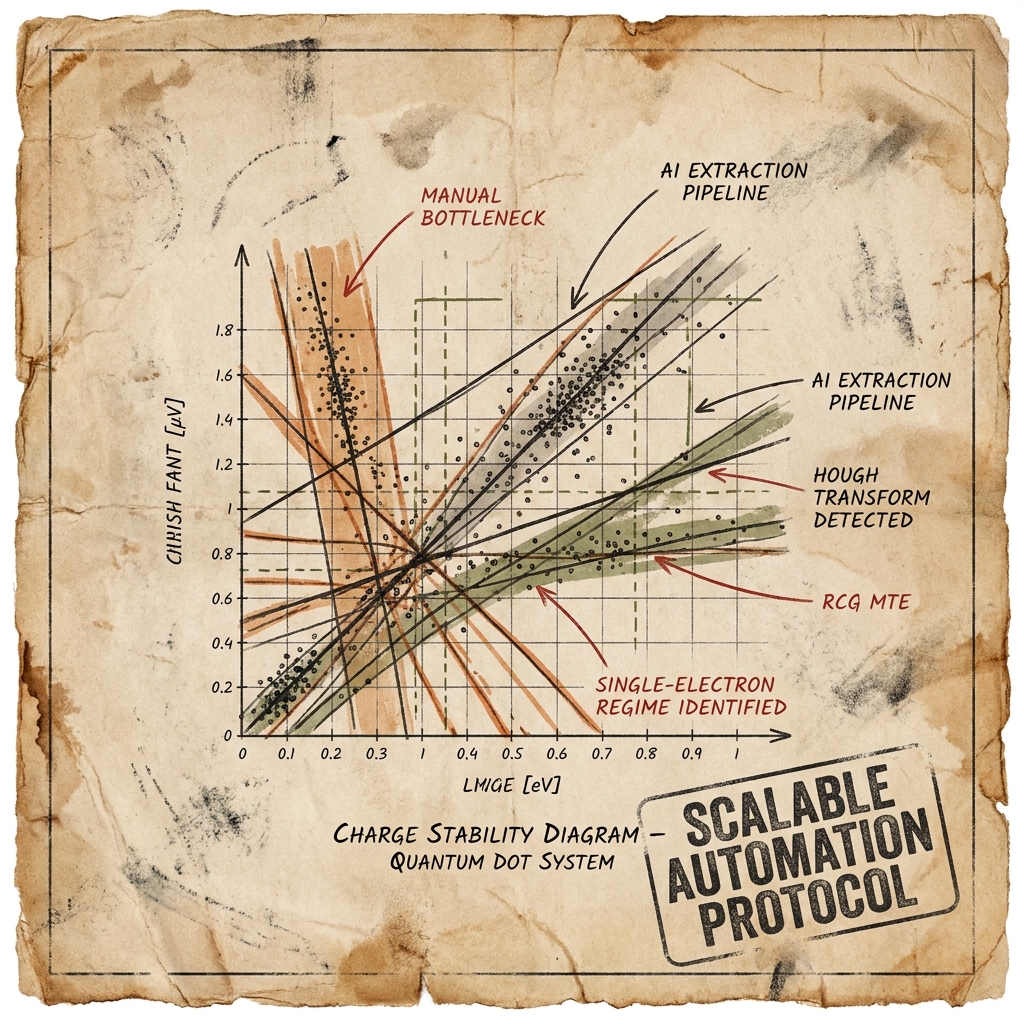

New research out of Tohoku University tackles this by using a U-Net AI model to automatically extract charge transition lines from quantum dot stability diagrams, a task that has traditionally required painstaking manual analysis. The extracted data is then processed through Hough transforms and clustering to automatically identify single-electron regimes and define virtual gates.

This matters because semiconductor spin qubits are a promising architecture precisely because they are compatible with existing chip fabrication technologies. That manufacturing advantage is limited if systems cannot be efficiently calibrated at scale. Automating the tuning pipeline is not a flashy breakthrough, but it is exactly the kind of unglamorous infrastructure work that separates laboratory demonstrations from practical machines.

The fact that AI is increasingly becoming the calibration partner for quantum hardware is a trend that signals real maturation in the space #QuantumComputing

Scanning an endless radio dial of white noise, hoping to catch one faint broadcast from an unknown station — that is essentially the challenge of searching for dark photons, and it just got a lot more interesting.

A Fermilab research team is building a scalable superconducting cavity array that uses quantum entanglement to hunt for dark matter with unprecedented speed and sensitivity. This approach links multiple ultra-coherent cavities into a single coordinated sensor, scanning frequencies far faster than any individual detector could manage alone.

The trick is not just better hardware — it is making many quantum sensors work together so entanglement becomes a genuine experimental advantage rather than a laboratory curiosity. A four-cavity prototype is the first milestone, utilizing an architecture designed to scale to much larger arrays.

What makes this especially noteworthy is the crossover: techniques born in superconducting quantum computing are proving remarkably powerful for sensing applications. The same hardware and interconnect architecture feeding this dark matter search also supports modular quantum computing and distributed quantum communication development.

A compelling reminder that quantum technology's most transformative applications may come from directions we are not yet watching closely enough #QuantumComputing

Scaling up quantum computers means tuning potentially millions of qubits, and doing that by hand is a critical bottleneck.

New research out of Tohoku University tackles this head-on. The team trained a U-Net AI model to automatically read charge stability diagrams—the measurement maps researchers use to identify where individual electrons sit in quantum dot systems. What used to require painstaking manual analysis of line angles and positions now happens automatically through a pipeline of AI extraction, Hough transform line detection, and clustering.

The result is automated identification of single-electron regimes and virtual gate definitions for semiconductor spin qubits, with efficiency far beyond what manual methods can achieve.
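To make the last two stages of that pipeline concrete, here is a minimal pure-Python sketch of Hough line detection followed by a simple clustering of lines by angle. It is not the Tohoku team's code: the U-Net step is replaced by a synthetic binary mask, and `toy_mask`, `hough_lines`, `cluster_by_angle`, the vote threshold, and the angle step are all assumptions chosen for illustration.

```python
import math
from collections import defaultdict


def toy_mask(size=20, vertical=(5, 12), horizontal=(4, 15)):
    """Synthetic binary mask with straight lines (stand-in for a segmentation output)."""
    return [[1 if (x in vertical or y in horizontal) else 0 for x in range(size)]
            for y in range(size)]


def hough_lines(mask, min_votes, theta_step_deg=15):
    """Vote in (rho, theta) space and keep cells with at least min_votes pixels."""
    votes = defaultdict(int)
    for y, row in enumerate(mask):
        for x, pixel in enumerate(row):
            if not pixel:
                continue
            for theta in range(0, 180, theta_step_deg):
                rad = math.radians(theta)
                # Normal form of a line: rho = x*cos(theta) + y*sin(theta)
                rho = round(x * math.cos(rad) + y * math.sin(rad))
                votes[(rho, theta)] += 1
    return sorted((rho, theta, n) for (rho, theta), n in votes.items()
                  if n >= min_votes)


def cluster_by_angle(lines, max_gap_deg=10):
    """Group lines into families by 1-D gap clustering on theta.

    Simplification: angle wraparound (0 vs 180 degrees) is ignored.
    """
    families = []
    for line in sorted(lines, key=lambda item: item[1]):
        if families and line[1] - families[-1][-1][1] <= max_gap_deg:
            families[-1].append(line)
        else:
            families.append([line])
    return families
```

Calling `cluster_by_angle(hough_lines(toy_mask(), min_votes=18))` groups the detected lines into one family per orientation. In a real stability diagram the line families are slanted, and their slopes are the kind of quantity a virtual-gate definition would be built from.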

This matters because semiconductor spin qubits are one of the most promising qubit architectures precisely due to their compatibility with existing chip fabrication. The physics works. The engineering challenge is scale, and scale demands automation. You cannot hand-tune a million-qubit processor any more than you could hand-solder a modern CPU.

What makes this approach particularly elegant is that it combines well-established image processing techniques with modern deep learning, keeping the pipeline interpretable rather than treating the AI as a black box. That kind of practical, hybrid methodology is exactly what the field needs as it moves from laboratory demonstrations toward engineered systems.

The work, published in Scientific Reports, is a clear signal that the quantum hardware community is getting serious about the unsexy but essential infrastructure of automation and calibration at scale #QuantumComputing

Searching for dark matter is like scanning an infinite radio dial of static, hoping to catch one impossibly faint broadcast from an unknown station — and a Fermilab team just found a way to listen to multiple frequencies at once.

Their approach links an array of superconducting cavities through quantum entanglement so they function as a single coordinated sensor. Instead of tuning and listening to one frequency at a time, the entangled array can sweep the spectrum faster and with far greater sensitivity than any individual detector.

The first milestone is a four-cavity prototype, modest in scale but designed with an architecture that can extend to much larger arrays. The techniques borrow directly from superconducting quantum computing — state preparation, entangling operations, and low-loss interconnects — repurposed here for sensing rather than computation.

What makes this particularly interesting is the dual-use potential. The same hardware and interconnect architecture being developed for dark photon detection also applies to modular quantum computing and distributed quantum communication. Advances in one domain feed directly into the other.

This is a compelling reminder that some of quantum technology's most meaningful near-term impact may come not from computation alone, but from sensing — where quantum coherence and entanglement offer measurable advantages today

#QuantumComputing

Scaling quantum computers means tuning millions of qubits, and doing that by hand was never going to work.

Researchers at Tohoku University have demonstrated a method using a U-Net AI model to automatically extract charge transition lines from quantum dot stability diagrams. Combined with image processing and clustering techniques, this approach automates the identification of single-electron regimes and virtual gate definitions—key steps in configuring semiconductor spin qubits.

This matters because semiconductor spin qubits are among the most promising candidates for scalable quantum hardware, given their compatibility with existing chip fabrication. Their scaling bottleneck has always been calibration, not fabrication. Every qubit requires precise tuning, and the complexity grows faster than any human team can manage.

Automating this pipeline doesn't just save time. It removes a fundamental barrier to building quantum processors with the qubit counts that useful computation demands

#QuantumComputing

Quantum computing is starting to look less like a distant promise and more like an infrastructure buildout in progress.

2026 is shaping up as the year the industry pivots from "will it happen" to "how fast can we build it." The signals are hard to ignore: new processors designed to surpass classical supercomputers, meaningful progress on error correction, and a wave of capital flowing in that could see several quantum companies go public. One potential IPO alone could reach a $15-20 billion valuation, a figure that would have seemed fantastical for the sector just a few years ago.

On the M&A front, quantum-native companies are acquiring chipmakers to vertically integrate their supply chains—a classic sign of an industry maturing beyond the research phase.

The private market tells a similar story. Roughly $10 billion has been raised across public and private quantum companies. While that pales next to AI funding, it is remarkable for a space still scaling its commercial models. Current market revenues sit around $1.5 billion, mostly from government buyers, supplemented by tens of billions in public-sector investment globally.

Perhaps the most interesting subplot is the geographic race. While a handful of nations dominate government spending, smaller ecosystems are punching above their weight through academic strength, dedicated venture funds, and a high density of quantum startups relative to overall startup formation.

Optimists predict two to three years until fairly widespread use, while the cautious crowd says five. Either way, after nearly three decades of theoretical promise, that is a remarkably short runway. The transformation of industries like drug development, finance, and materials science is no longer just theoretical. Who benefits will depend on who has the infrastructure in place when it arrives #QuantumComputing

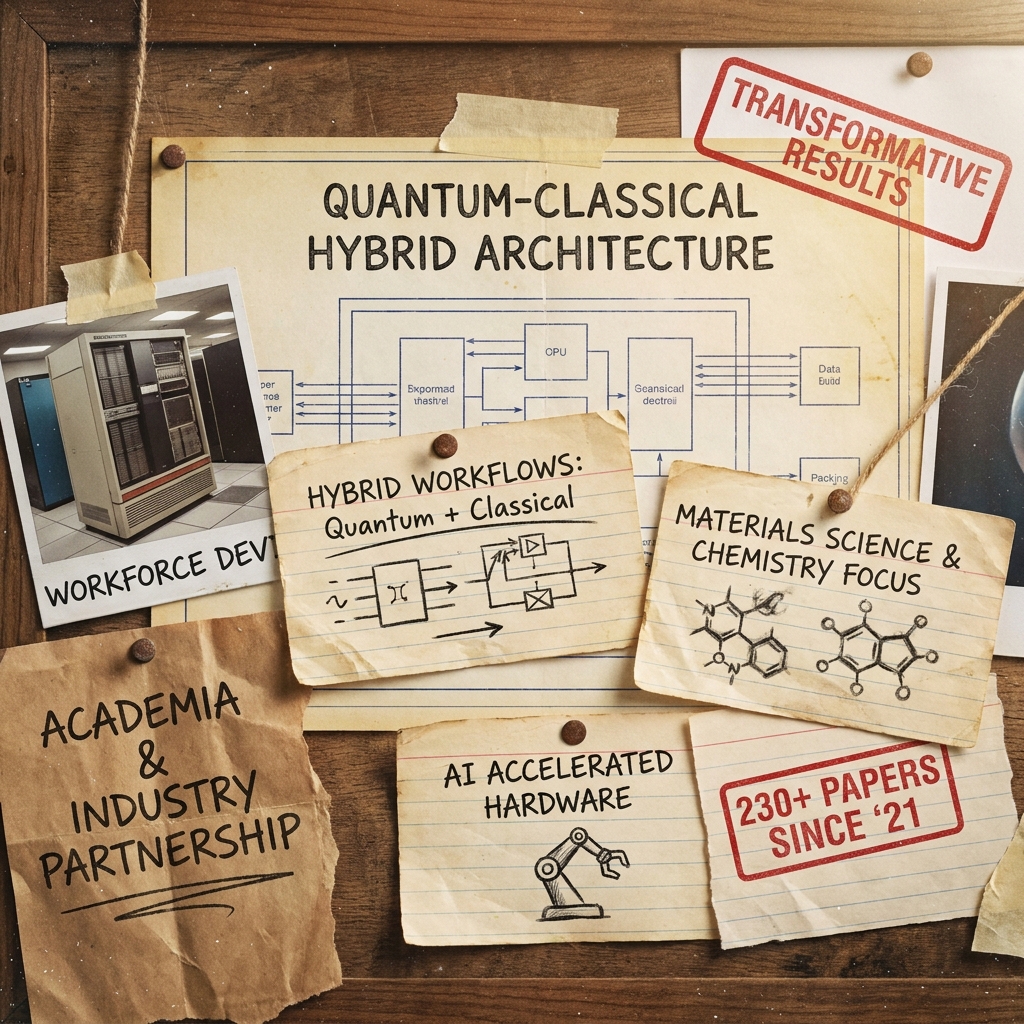

Quantum meets classical at supercomputing scale, and the results could be transformative.

A major partnership between a leading tech company and a top U.S. research university is expanding to build quantum-centric supercomputing environments. By integrating quantum processors with high-performance classical systems, they aim to tackle problems neither could solve alone.

Key elements of the expansion:

- Hybrid workflows will allow quantum and classical resources to coordinate seamlessly

- Research will focus on materials science, chemistry, and physics—the domains where quantum advantage is most likely to emerge first

- AI will accelerate specialized hardware design, unifying algorithms, silicon, and systems into a single approach

- The initiative builds on a strong foundation, having already produced over 230 research papers since 2021

The workforce development angle is equally vital. Training the next generation of scientists and engineers to work fluently across quantum, AI, and HPC is how theoretical breakthroughs translate into practical tools.

This is the kind of deep, sustained academia-industry partnership that builds real foundations for the future of computation #QuantumComputing

The Midwest is building its quantum future on a foundation of steel.

A decommissioned U.S. Steel foundry on Chicago's South Side, once a symbol of industrial decline, is being transformed into the Illinois Quantum and Microelectronics Park. Targeted for completion in 2027, the 128-acre campus is designed specifically for quantum companies and technology development.

The project is part of a broader regional effort anchored by the Chicago Quantum Exchange, uniting Department of Energy national labs, major research universities, and more than 50 corporate partners. The institutional density is remarkable, bringing together the University of Chicago, Argonne, Fermilab, UIUC, Wisconsin-Madison, Northwestern, and Purdue.

What makes this transformation compelling is its depth. The physical infrastructure of a Rust Belt economy is being repurposed, but so is the regional identity. The industrial mindset and talent pipelines that once powered American steel are redirecting toward the most demanding technology frontier of the 21st century.

Calling it the Silicon Prairie may sound ambitious, but the ingredients are real: world-class research labs, a deep bench of technical universities, growing corporate investment, and a dedicated physical campus to bring it all together. Quantum ecosystems do not emerge overnight, but they take root where commitment, talent, and infrastructure converge #QuantumComputing
