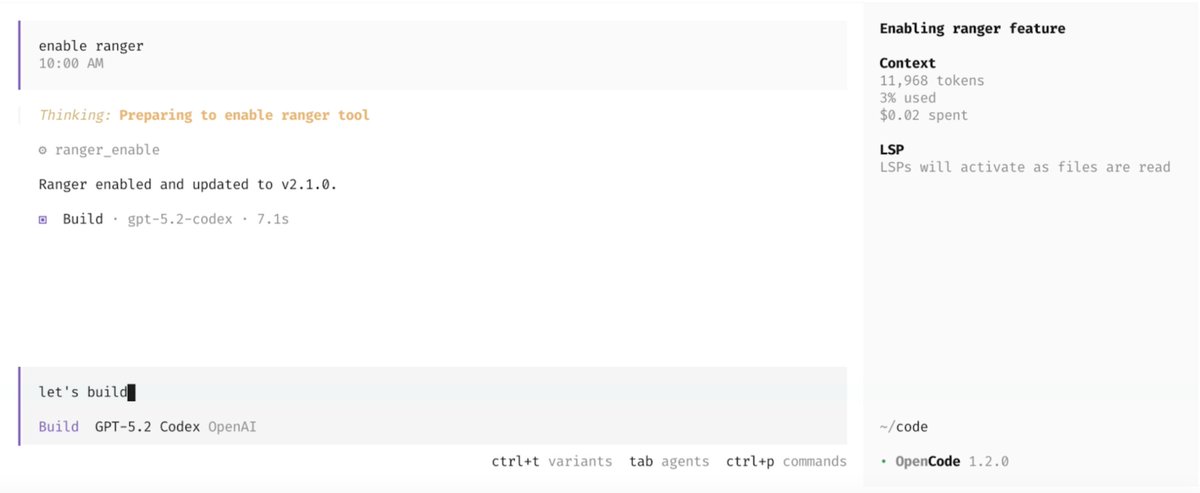

Ranger

42 posts

@RangerNetHQ

Stop worrying about testing; automate it with Ranger.

San Francisco · Joined October 2024

47 Following · 160 Followers

Pinned Tweet

Try our new Automatic Testing Agent →

docs.ranger.net/getting-starte…

Less than a week until the annual Silly Hacks!

120+ hackers, March 28

Make something silly for April Fools’ Day!

Thank you to our sponsors: @Replit @sendbluehq @FonziAI @RangerNetHQ @zerion @ElevenLabs @earlydotbuild

Ranger retweeted

GitHub Status @githubstatus

We are investigating intermittent performance degradation affecting Actions, Feeds, Issues, Package Registry, Profiles, Registry Metadata, Star, and User Dashboard. Users may experience elevated error rates and slower response times when accessing these... githubstatus.com/incidents/xsxc…

@alex_prompter You can't expect to scale your codebase without scaling your testing and feedback loop.

We're trying to solve exactly this at Ranger; we'd love for anyone to try our new CLI tool.

🚨BREAKING: Alibaba tested AI coding agents on 100 real codebases, spanning 233 days each.

the agents failed spectacularly.

turns out passing tests once is easy. maintaining code for 8 months without breaking everything is where AI collapses.

SWE-CI is the first benchmark that measures long-term code maintenance instead of one-shot bug fixes.

each task tracks 71 consecutive commits of real evolution.

75% of AI models break previously working code during maintenance.

only Claude Opus 4 stays above a 50% zero-regression rate. every other model accumulates technical debt that compounds over iterations.

here's the brutal part:

- HumanEval and SWE-bench measure "does it work right now"

- SWE-CI measures "does it still work after 6 months of changes"

agents optimized for snapshot testing write brittle code that passes tests today but becomes unmaintainable tomorrow.

Alibaba built EvoScore to weight later iterations more heavily than early ones. agents that sacrifice code quality for quick wins get punished when the consequences compound.

the AI coding narrative just got more honest: most models can write code. almost none can maintain it.

ppl hate writing playwright tests by hand; playwright mcp is different.

plugs into claude code: you describe the flow in english, and claude walks through your actual app.

no scripts to maintain.

momentic & spur are more for teams with PMs writing tests & regression suites at scale.

what are you building? solo or team?

Ranger retweeted

If you wish you had a background agent, it turns out it's not that hard to build your own. The @RangerNetHQ engineering team just posted a spec on how to do so!

Congrats @joship__ & @RangerNetHQ! 🙌

Ranger @RangerNetHQ

We don't manually test features anymore. You can now run continuous QA on your features in Claude using “Feature Review” by Ranger. Feature Review is an always-on AI QA agent that runs in the background, fixing features while giving you and your team full visibility. Never ask Claude to fix errors again.