Satyapriya Krishna

636 posts

@SatyaScribbles

Explorer @sesame Re-tweets/Re-posts == Lit review

Allston, MA · Joined June 2020

255 Following · 556 Followers

Satyapriya Krishna retweeted

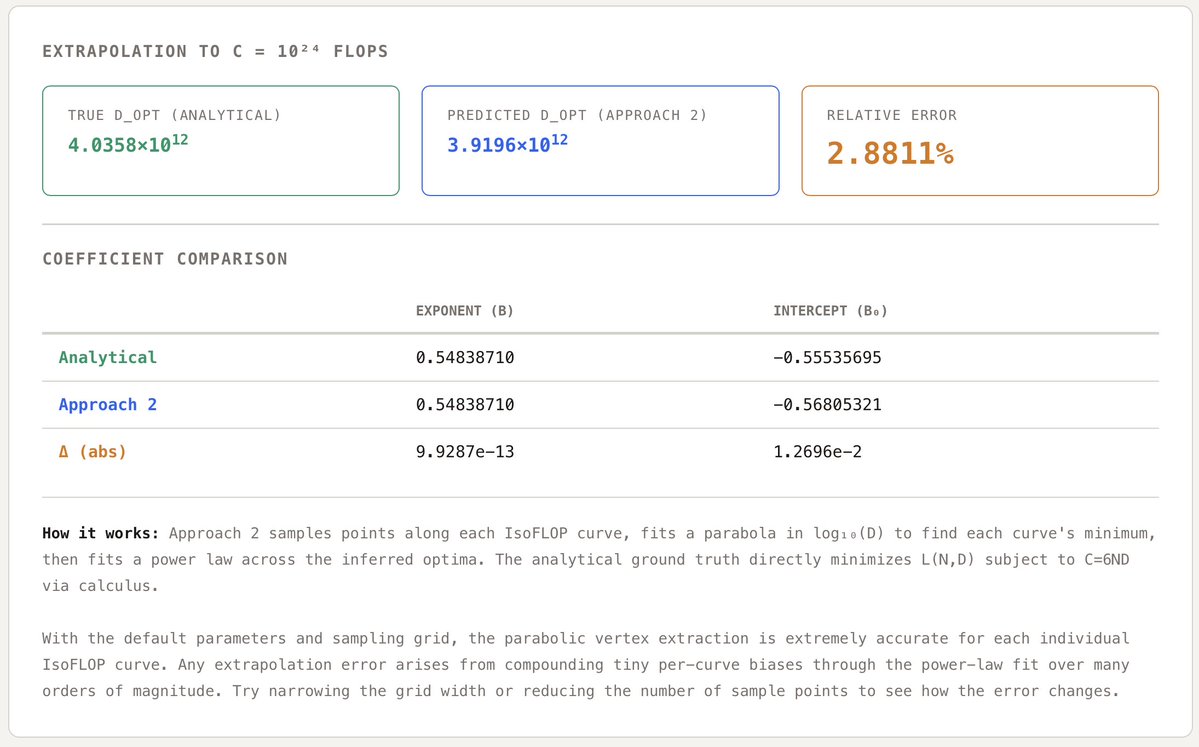

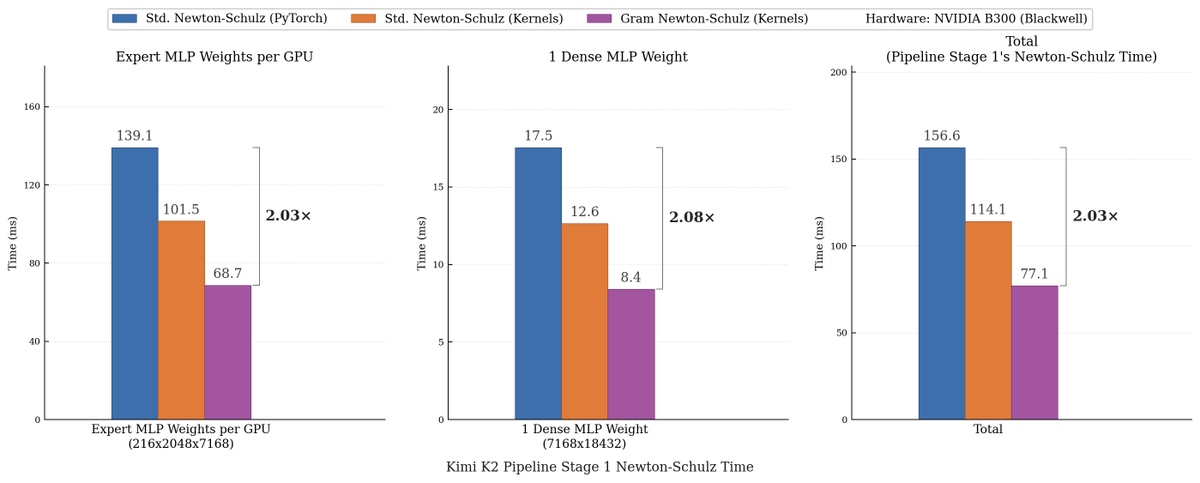

We made Muon run up to 2x faster for free!

Introducing Gram Newton-Schulz: a mathematically equivalent but computationally faster Newton-Schulz algorithm for polar decomposition.

Gram Newton-Schulz rewrites Newton-Schulz such that instead of iterating on the expensive rectangular X matrix, we iterate on the small, square, symmetric XX^T Gram matrix to reduce FLOPs. This allows us to make more use of fast symmetric GEMM kernels on Hopper and Blackwell, halving the FLOPs of each of those GEMMs.
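The core rewrite described in the post can be sketched in NumPy. This is a minimal illustration only, assuming the plain cubic Newton-Schulz step `X <- 1.5 X - 0.5 (X X^T) X` rather than the tuned coefficients Muon uses; the function names `newton_schulz` and `gram_newton_schulz` are hypothetical, and none of the authors' stabilization work or symmetric GEMM kernels is reproduced here.

```python
import numpy as np

def newton_schulz(X, steps=30):
    """Baseline cubic Newton-Schulz: every step multiplies the rectangular X."""
    X = X / np.linalg.norm(X)  # Frobenius scaling keeps singular values <= 1
    for _ in range(steps):
        X = 1.5 * X - 0.5 * (X @ X.T) @ X
    return X

def gram_newton_schulz(X, steps=30):
    """Same iteration, but the loop only touches m x m matrices (assumes m <= n).

    Each step is X <- P X with P = 1.5 I - 0.5 G and G = X X^T, so the Gram
    matrix evolves as G <- P G P and the steps accumulate into Q = P_k ... P_1.
    """
    X = X / np.linalg.norm(X)
    m = X.shape[0]
    I = np.eye(m)
    G = X @ X.T          # small, square, symmetric Gram matrix
    Q = I.copy()         # accumulated product of the per-step polynomials
    for _ in range(steps):
        P = 1.5 * I - 0.5 * G
        Q = P @ Q
        G = P @ G @ P
    return Q @ X         # a single rectangular multiply at the end

rng = np.random.default_rng(0)
X0 = rng.standard_normal((4, 16))
baseline = newton_schulz(X0)
gram = gram_newton_schulz(X0)
```

In exact arithmetic both functions return the same matrix. Forming `X @ X.T` squares the condition number, which is presumably part of why the authors describe a long stabilization effort for low-precision training.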

Gram Newton-Schulz is a drop-in replacement of Newton-Schulz for your Muon use case: we see validation perplexity preserved within 0.01, and share our (long!) journey stabilizing this algorithm and ensuring that training quality is preserved above all else.

This was a super fun project with @noahamsel, @berlinchen, and @tri_dao that spanned theory, numerical analysis, and ML systems! Blog and codebase linked below 🧵

Satyapriya Krishna retweeted

NEW research from NVIDIA.

Post-training agents with RL is powerful but expensive.

Every parameter update needs full multi-turn rollouts with environment interactions, making end-to-end RL prohibitively costly for long-horizon agentic tasks.

This research offers a practical middle ground.

The work introduces PivotRL, a framework that operates on existing SFT trajectories to combine the computational efficiency of SFT with the out-of-domain retention of end-to-end RL.

Instead of exhaustive full-trajectory rollouts, PivotRL identifies pivots, informative intermediate turns where sampled actions show mixed outcomes, and trains only on those high-signal moments.
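To make the idea of a pivot concrete, here is a small sketch under stated assumptions. The post does not give the paper's actual selection rule, so the function `select_pivots`, the binary success/failure outcomes, and the "strictly between 0 and 1" criterion are all hypothetical illustrations of "turns where sampled actions show mixed outcomes".

```python
def select_pivots(turn_outcomes):
    """Return indices of turns whose sampled actions have mixed outcomes.

    turn_outcomes[i] holds 0/1 outcomes for several actions sampled at turn i
    (hypothetical format; not taken from the paper).
    """
    pivots = []
    for i, outcomes in enumerate(turn_outcomes):
        if not outcomes:
            continue  # nothing sampled at this turn, so no signal
        success_rate = sum(outcomes) / len(outcomes)
        # All-success or all-failure turns carry no contrast between actions.
        if 0.0 < success_rate < 1.0:
            pivots.append(i)
    return pivots

outcomes_per_turn = [[1, 1, 1], [1, 0, 1], [0, 0, 0], [0, 1, 0]]
pivot_turns = select_pivots(outcomes_per_turn)
```

In this toy trajectory only the second and fourth turns would be kept for training, since the other two give the same outcome for every sampled action.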

Standard SFT degrades OOD performance by 9.83 points on average. PivotRL stays near zero (+0.21) while achieving a +14.11 average in-domain gain over the base model, versus +9.94 for SFT.

On SWE-Bench, PivotRL reaches accuracy competitive with E2E RL using 4x fewer rollout turns and 5.5x less wall-clock time.

The method is already deployed in production as the workhorse for NVIDIA's Nemotron-3-Super-120B agentic post-training.

Paper: arxiv.org/abs/2603.21383

Learn to build effective AI agents in our academy: academy.dair.ai

Satyapriya Krishna retweeted

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation.

Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.

🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.

🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.

🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.

🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗Full report:

github.com/MoonshotAI/Att…
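The contrast between uniform accumulation and attention over preceding layers can be sketched as follows. This is an assumption-laden toy, not the report's parameterization: the per-layer `query` vector, the scaled dot-product scoring, and the function names are hypothetical, and Block AttnRes is not modeled.

```python
import numpy as np

def softmax(z):
    z = z - z.max()              # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()

def uniform_residual(prev_outputs):
    """Standard residual stream: every earlier layer output gets weight 1."""
    return prev_outputs.sum(axis=0)

def attention_residual(prev_outputs, query):
    """Weighted mix of earlier layer outputs for a single token.

    prev_outputs: (num_layers, d) array of earlier layer outputs.
    query: (d,) vector, a hypothetical learned parameter of the current layer.
    The weights depend on prev_outputs, so they change with the input.
    """
    d = prev_outputs.shape[1]
    scores = prev_outputs @ query / np.sqrt(d)
    weights = softmax(scores)
    return weights @ prev_outputs, weights

layers = np.arange(12.0).reshape(3, 4)   # 3 earlier layers, width 4
mixed, weights = attention_residual(layers, np.zeros(4))
```

With a zero query the weights are uniform, so the result is the plain residual sum divided by the number of layers; a nonzero query lets the layer emphasize some earlier representations over others.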

Satyapriya Krishna retweeted

Very cool!! Nice to see practice keeping up with the theory 😉

After all, they’re provably as fast as parallel sampling can get.

Stefano Ermon@StefanoErmon

Mercury 2 is live 🚀🚀 The world’s first reasoning diffusion LLM, delivering 5x faster performance than leading speed-optimized LLMs. Watching the team turn years of research into a real product never gets old, and I’m incredibly proud of what we’ve built. We’re just getting started on what diffusion can do for language.

Satyapriya Krishna retweeted

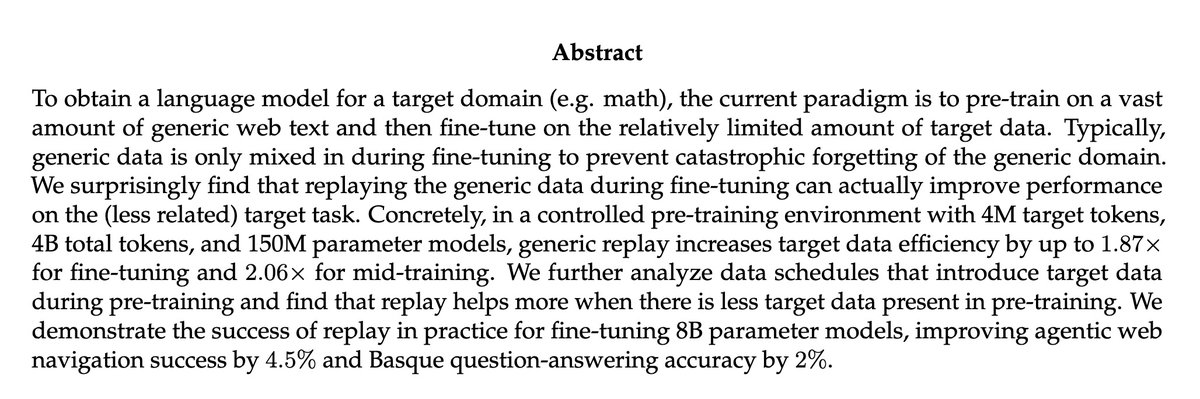

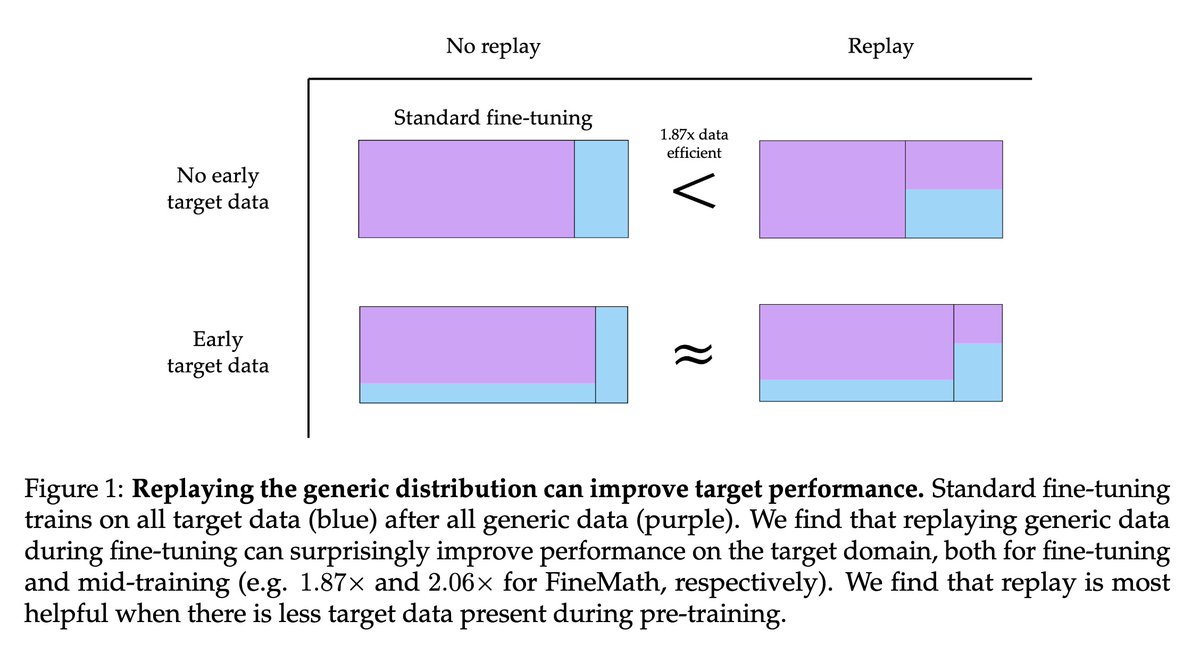

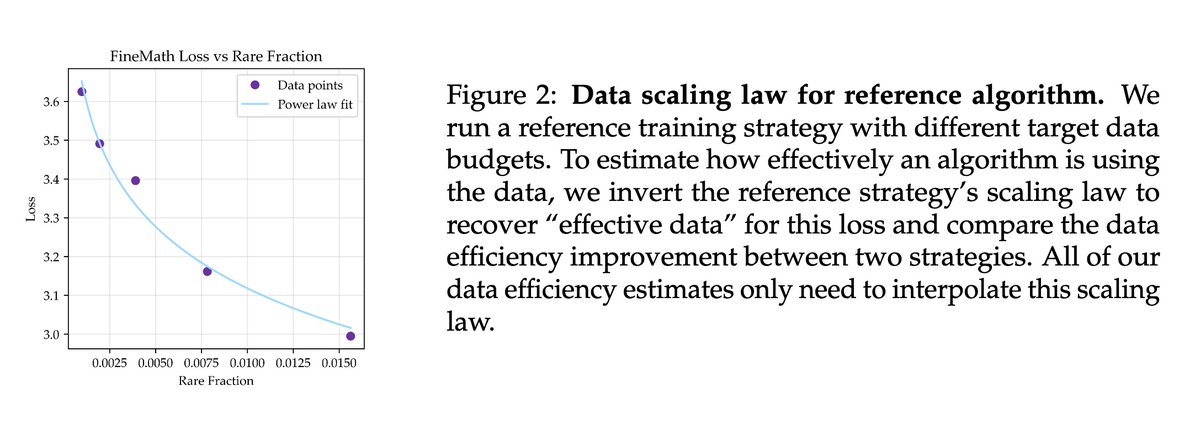

[CL] Replaying pre-training data improves fine-tuning

S Kotha, P Liang [Stanford University] (2026)

arxiv.org/abs/2603.04964

Satyapriya Krishna retweeted

🚀 Today we’re releasing FlashOptim: better implementations of Adam, SGD, etc, that compute the same updates but save tons of memory. You can use it right now via `pip install flashoptim`. 🚀

arxiv.org/abs/2602.23349

A bunch of cool ideas make this possible: [1/n]

Satyapriya Krishna retweeted

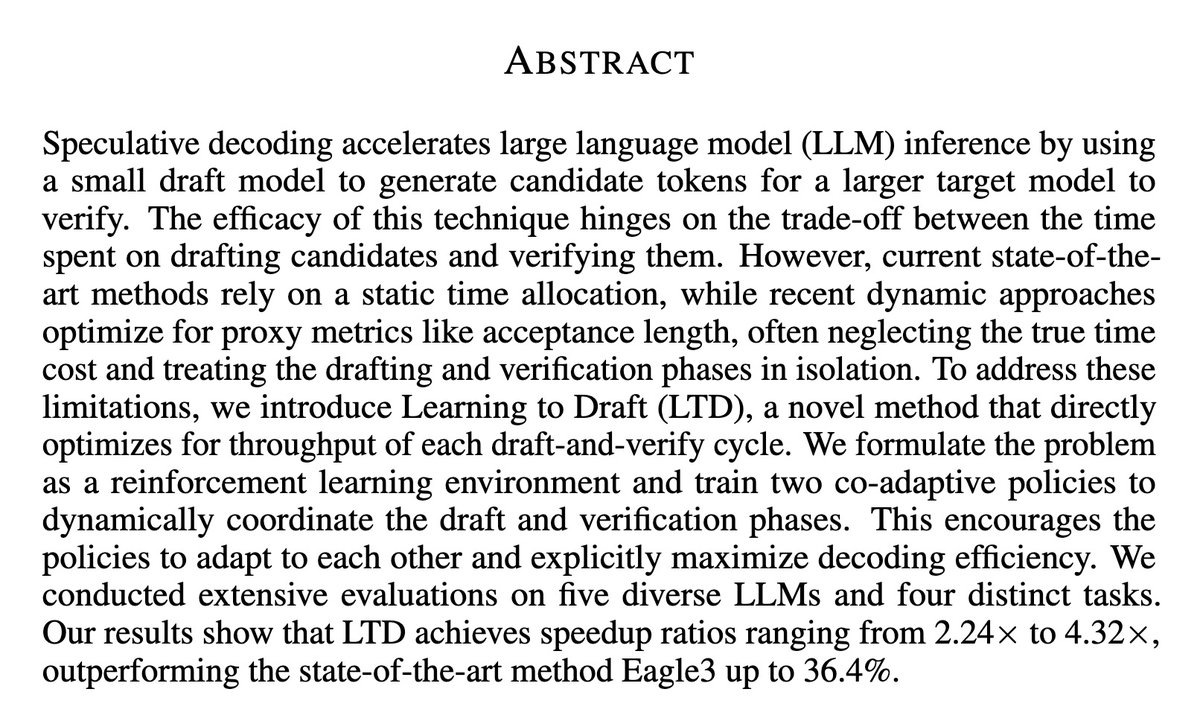

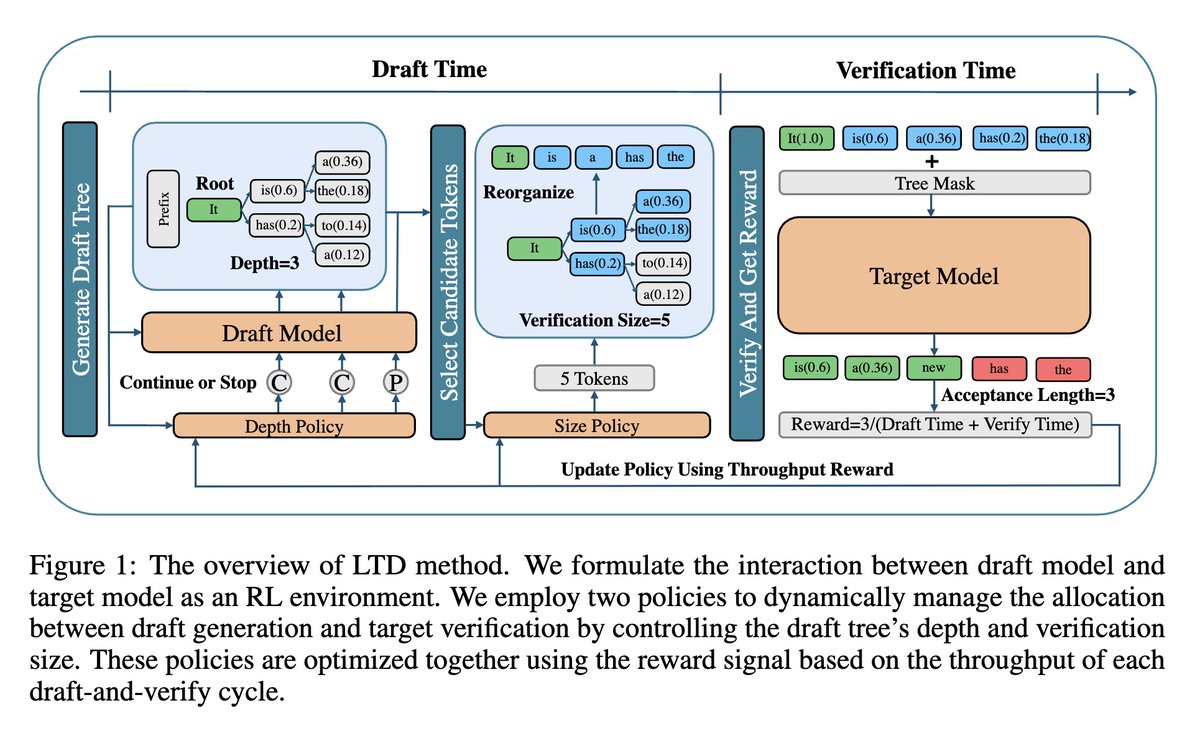

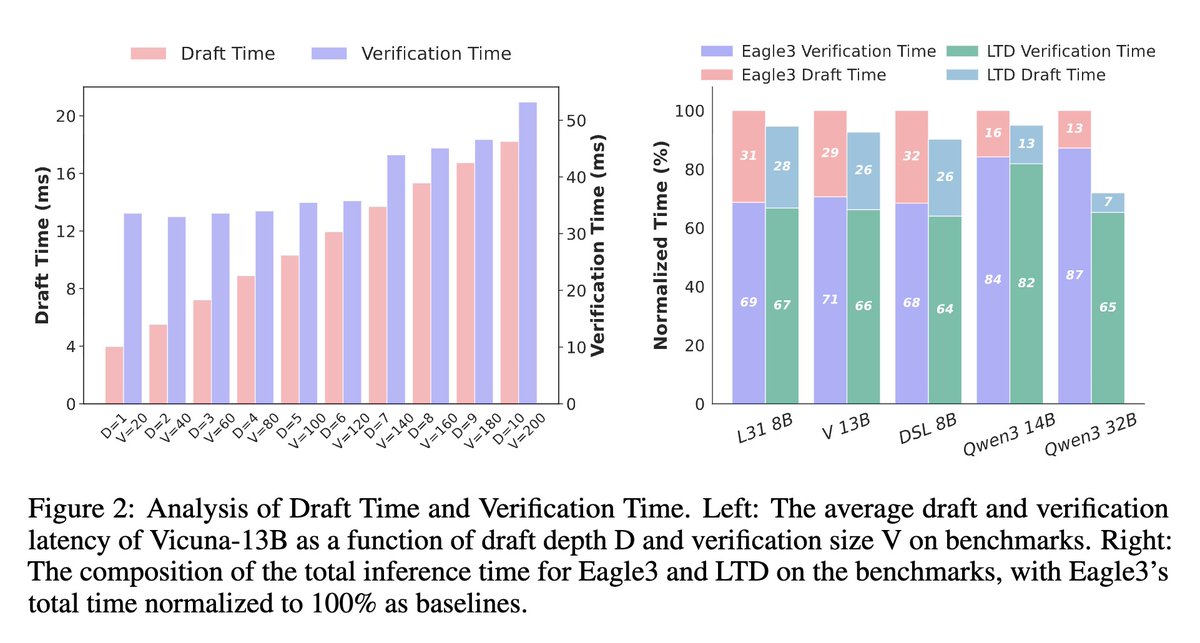

[CL] Learning to Draft: Adaptive Speculative Decoding with Reinforcement Learning

J Zhang, Z Yu, L Wang, N Yang… [Microsoft Research Asia & Peking University] (2026)

arxiv.org/abs/2603.01639

Satyapriya Krishna retweeted

Today I read a Paper:

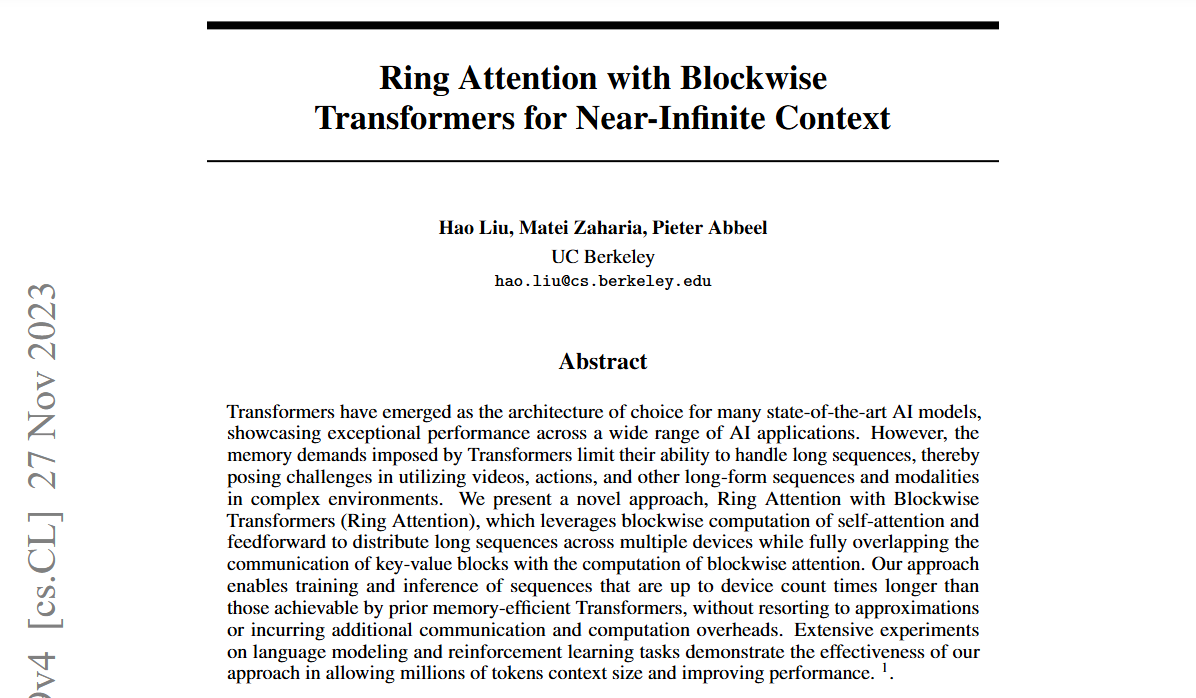

Ring Attention with Blockwise Transformers for Near-Infinite Context

arxiv.org/pdf/2310.01889

Satyapriya Krishna retweeted

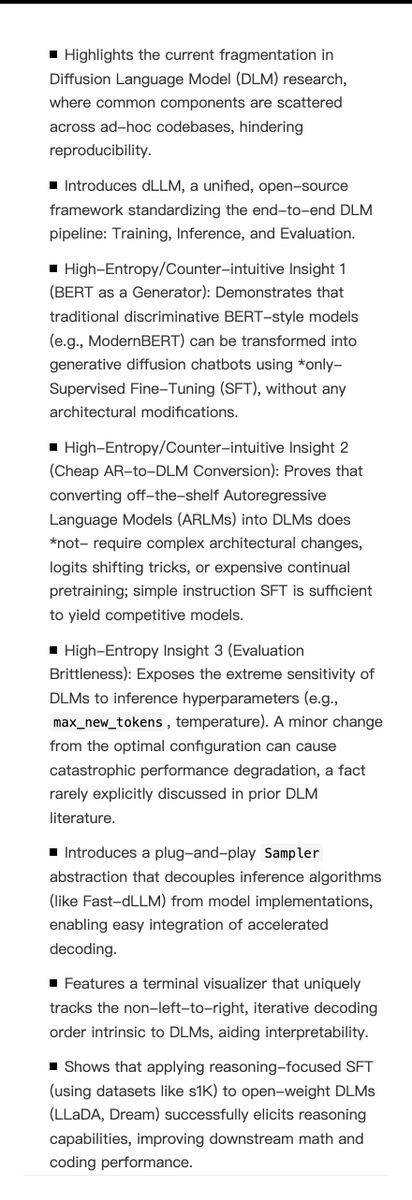

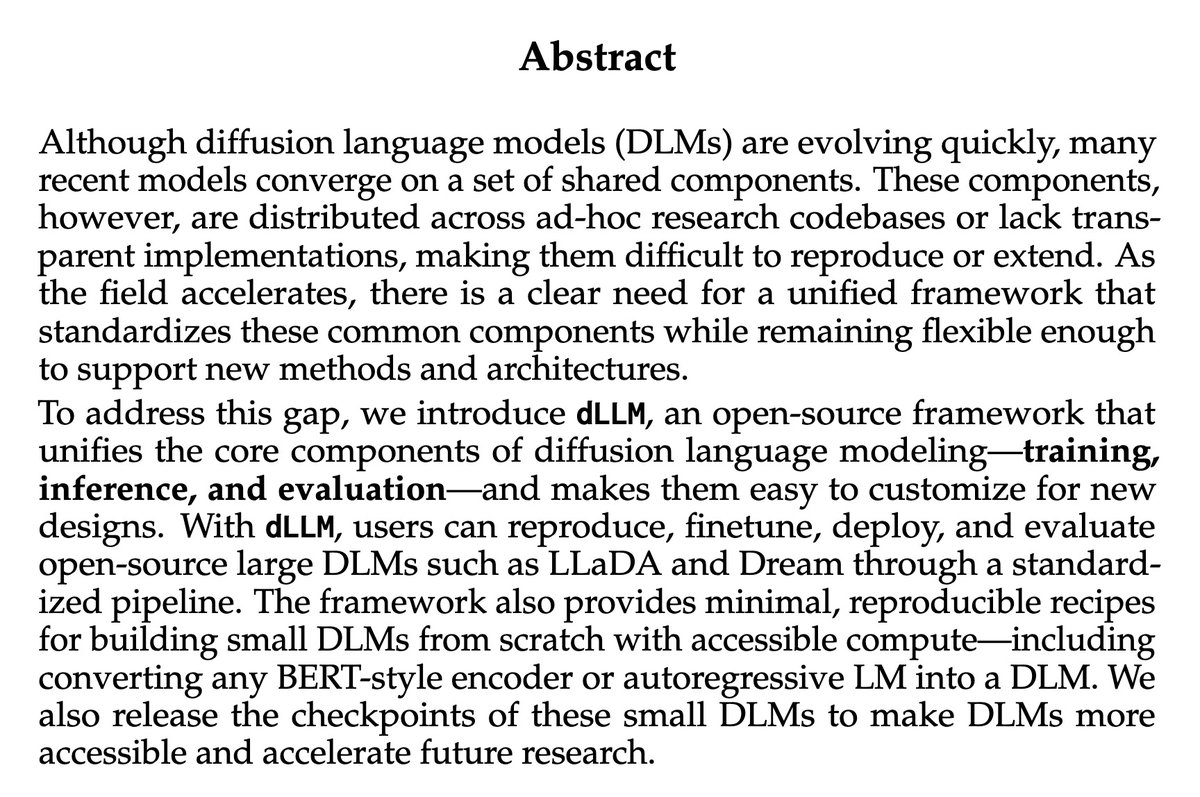

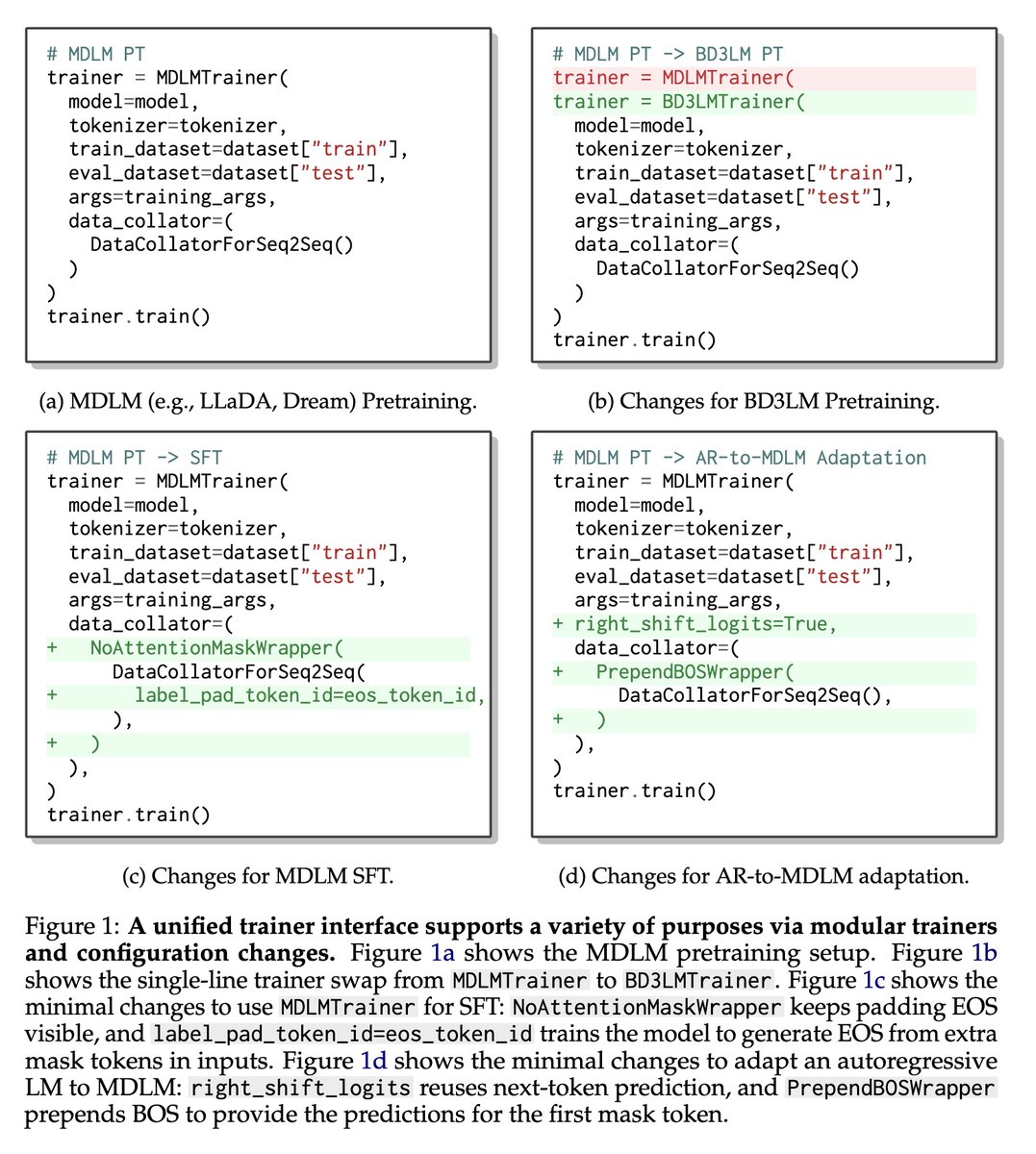

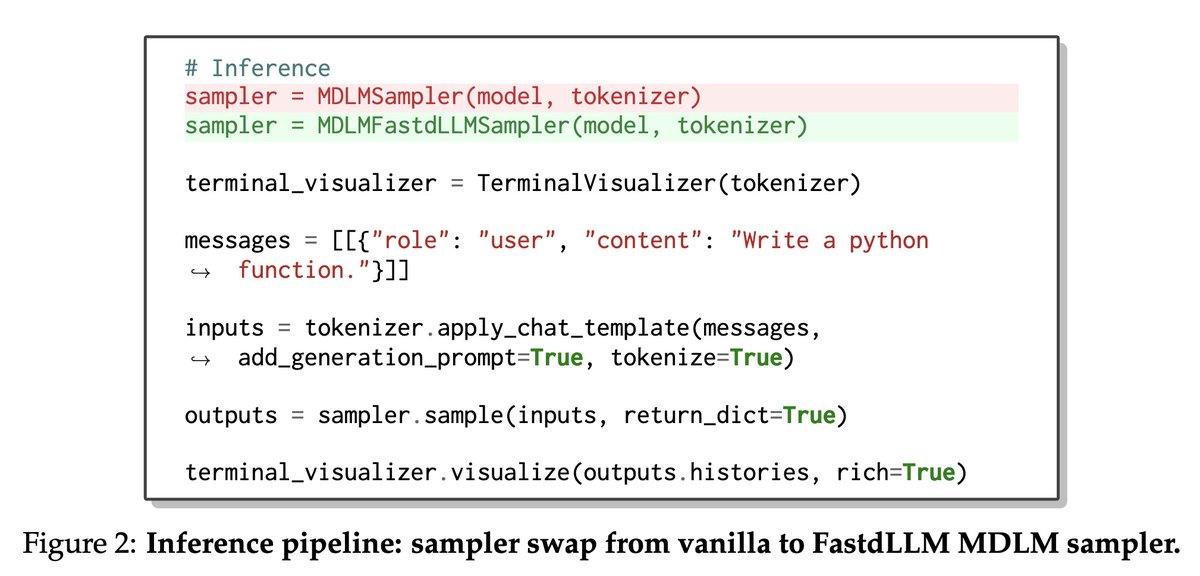

[CL] dLLM: Simple Diffusion Language Modeling

Z Zhou, L Chen, H Tong, D Song [UC Berkeley & UIUC] (2026)

arxiv.org/abs/2602.22661

Satyapriya Krishna retweeted

Really cool work from Databricks Research in collaboration with Harvard and Cornell! It turns out off-policy RL can match and even outperform on-policy RL, making post-training a lot more efficient and flexible. Try it on your tasks on Databricks!

Kianté Brantley@xkianteb

Does LLM RL post-training need to be on-policy?
