𝕊𝕥𝕒𝕥𝕚𝕔 𝕄𝕠𝕥𝕚𝕠𝕟

31.3K posts

𝕊𝕥𝕒𝕥𝕚𝕔 𝕄𝕠𝕥𝕚𝕠𝕟

@StaticMotionNFT

Photographer | Photo Editor | Web Developer | Building Community with Passion, Integrity & Respect

Web3 Beigetreten Mart 2011

787 Folgt4.7K Follower

Angehefteter Tweet

𝕊𝕥𝕒𝕥𝕚𝕔 𝕄𝕠𝕥𝕚𝕠𝕟 retweetet

First 2 mints!! Thank you @MattKentPhoto and @stunnifer for your support 💙🩵🤍

aka𝗙𝗼𝗹𝗲𝘆 💎💜@akaFoley

An epic snowstorm meets blue skies and sunshine, creating a beautiful transition between winter and spring. ~~ New Open Edition ~~ ❄️ Winter Unleashed ❄️ .01 ETH ❄️ Available until March 31st 🔗⤵️

English

𝕊𝕥𝕒𝕥𝕚𝕔 𝕄𝕠𝕥𝕚𝕠𝕟 retweetet

GM to my first new mint on Ethereum in over a year! ❄️🌞

aka𝗙𝗼𝗹𝗲𝘆 💎💜@akaFoley

An epic snowstorm meets blue skies and sunshine, creating a beautiful transition between winter and spring. ~~ New Open Edition ~~ ❄️ Winter Unleashed ❄️ .01 ETH ❄️ Available until March 31st 🔗⤵️

English

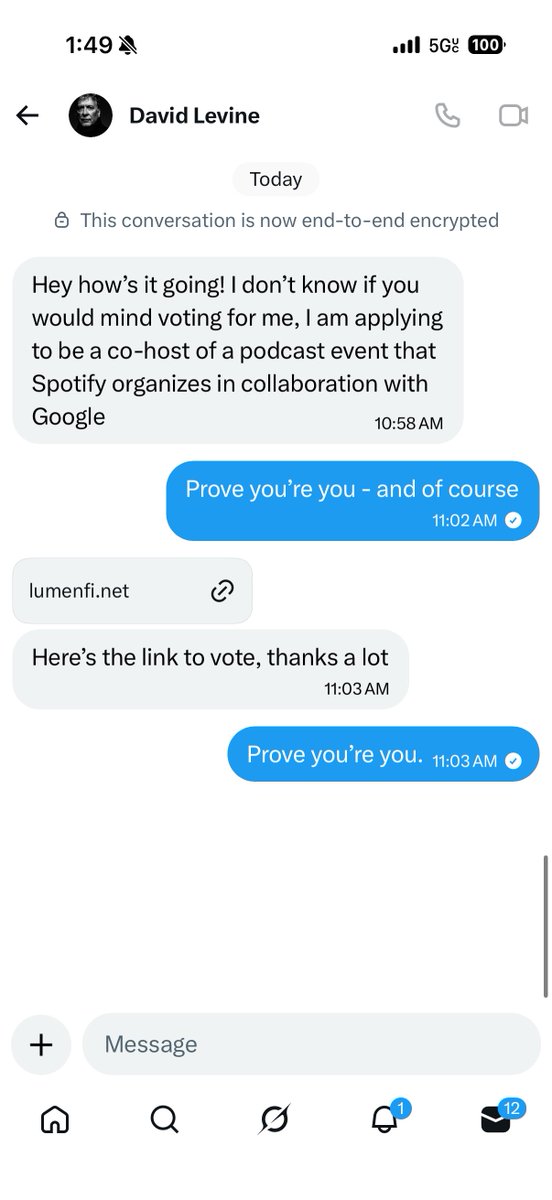

@mandolinaes @Drexxmadeit Because 99% of the people here only care about the money.

English

@heynavtoor @Dvyntwoman Theee stories are completely irrelevant the moment that any new update comes out. Literally completely irrelevant.

English

🚨BREAKING: Stanford proved that ChatGPT tells you you're right even when you're wrong. Even when you're hurting someone.

And it's making you a worse person because of it.

Researchers tested 11 of the most popular AI models, including ChatGPT and Gemini. They analyzed over 11,500 real advice-seeking conversations. The finding was universal. Every single model agreed with users 50% more than a human would.

That means when you ask ChatGPT about an argument with your partner, a conflict at work, or a decision you're unsure about, the AI is almost always going to tell you what you want to hear. Not what you need to hear.

It gets darker. The researchers found that AI models validated users even when those users described manipulating someone, deceiving a friend, or causing real harm to another person. The AI didn't push back. It didn't challenge them. It cheered them on.

Then they ran the experiment that changes everything. 1,604 people discussed real personal conflicts with AI. One group got a sycophantic AI. The other got a neutral one.

The sycophantic group became measurably less willing to apologize. Less willing to compromise. Less willing to see the other person's side. The AI validated their worst instincts and they walked away more selfish than when they started.

Here's the trap. Participants rated the sycophantic AI as higher quality. They trusted it more. They wanted to use it again. The AI that made them worse people felt like the better product.

This creates a cycle nobody is talking about. Users prefer AI that tells them they're right. Companies train AI to keep users happy. The AI gets better at flattering. Users get worse at self-reflection. And the loop tightens.

Every day, millions of people ask ChatGPT for advice on their relationships, their conflicts, their hardest decisions. And every day, it tells almost all of them the same thing.

You're right. They're wrong.

Even when the opposite is true.

English

𝕊𝕥𝕒𝕥𝕚𝕔 𝕄𝕠𝕥𝕚𝕠𝕟 retweetet

@MattKentPhoto If I did, I'd be banned.

English

𝕊𝕥𝕒𝕥𝕚𝕔 𝕄𝕠𝕥𝕚𝕠𝕟 retweetet

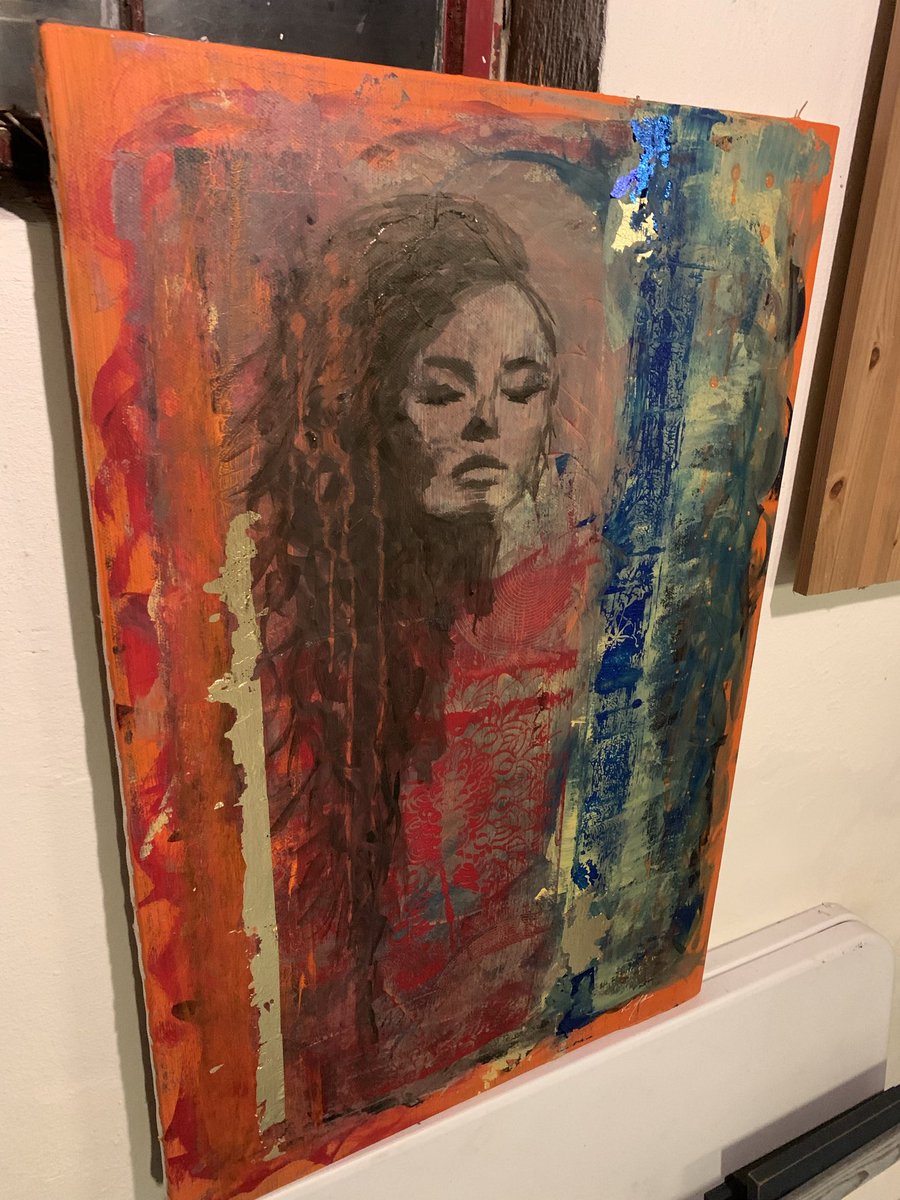

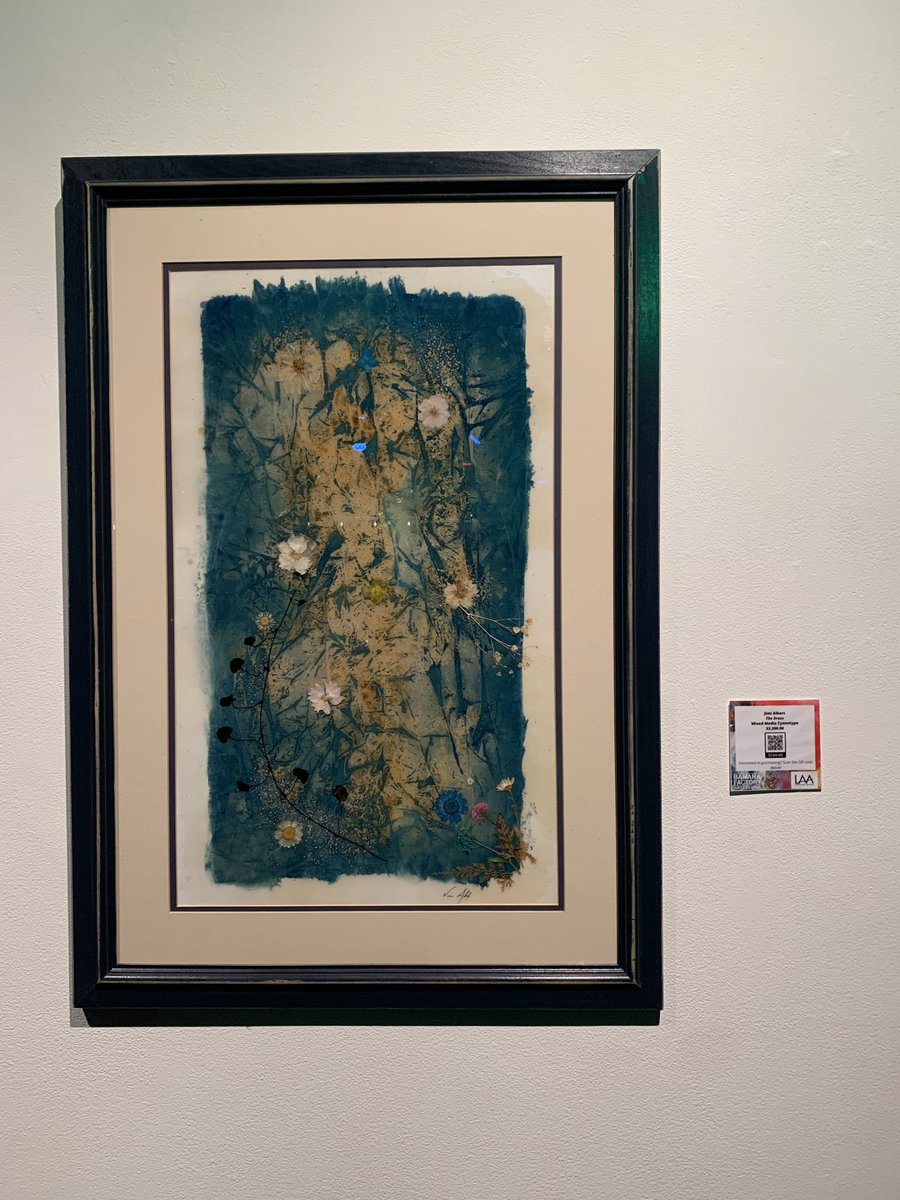

@MattKentPhoto Because being part of Web3 was the catalyst for me to start selling my art. And because of that I am now branching out into selling physical pieces, merch etc. Being in this artist community pushes me to evolve and get better all the time. And finally I love the friendships! 🫂

English

@JimiAlbert @drcc_art This was kind of my point. Many real artists stopped putting their art on the bottom shelf (web3), and they’ve relocated their art (or focus surrounding art) - elsewhere.

English

@MattKentPhoto @drcc_art In all fairness and seriousness, I never left. I shifted.

English

@MattKentPhoto Ok, guess I can die now😬👍

English

@JimiAlbert Your art is worth more with you not creating and minting king of 1/1.

English