Shiv

@TensorTunesAI

Agentic AI | Learning ML and DL in public | Sharing resources & daily notes

Are you up for a challenge? openai.com/parameter-golf

I'm very bullish on the role of AI qualitative interviewers, but all the results from this exercise should carry a big asterisk about what the specific sample is and what it says about AI in general. Who are these 81k users around the world who are responding to this call? Is this telling us something about how AI is generally perceived, or about what 81k Claude users think about AI? We already know that Claude users are likely to be quite different from the average "user" on the consumer side, let alone how this selection varies across countries, occupations, and continents. Survey research scholars have written entire textbooks about sample selection, and the good folks at organizations like the @uscensusbureau fret night and day about collecting representative data - but I saw very little discussion of this topic, and no disclaimers in this report, except for the paragraph below in the appendix.

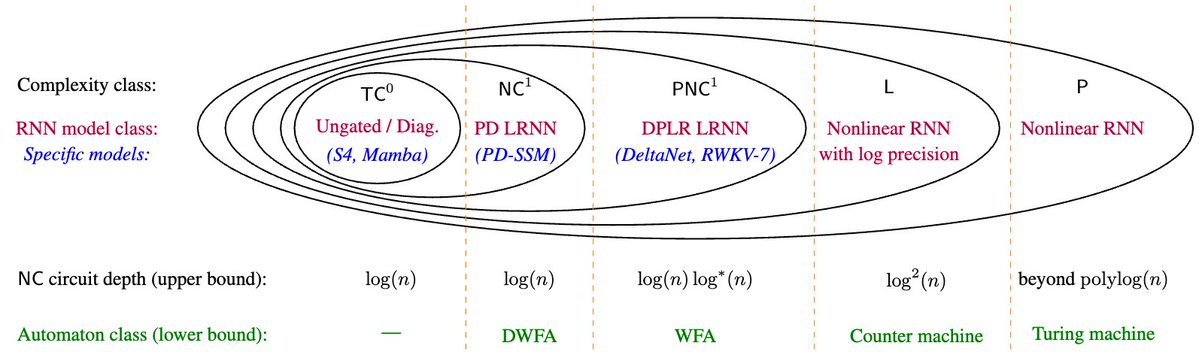

[1/8] New paper with Hongjian Jiang, @YanhongLi2062, Anthony Lin, @Ashish_S_AI: 📜Why Are Linear RNNs More Parallelizable? We identify expressivity differences between linear/nonlinear RNNs and, conversely, barriers to parallelizing nonlinear RNNs 🧵👇

ran some quick weekend experiments on @SarvamAI's 105B model on a subset of the IndicMMLU-Pro dataset. Sarvam's model is really good at reasoning efficiency: it uses ~2.5x fewer tokens to reach ~same accuracy