The Humanoid Hub

6.9K posts

@TheHumanoidHub

Humanoid Robots: Tech, Business, and Social Dynamics. Click the “𝕊𝕦𝕓𝕤𝕔𝕣𝕚𝕓𝕖” button on the profile to support. Run by @dev_and_

How Disney Research brought the animated character Olaf to life, achieving an accurate, stylized gait alongside robust balance, low noise, and thermal safety. Sets new standard for animated-to-physical robotic characters.

Not the flashiest demos, but what’s under the hood represents a foundational shift for general-purpose robotics. World models are the next-gen foundation of Physical AI, not the VLM backbones found in typical VLAs.

DreamZero is a 14B-parameter World Action Model (WAM) by NVIDIA that treats robotics as a joint video-and-action prediction task. Unlike traditional Vision-Language-Action (VLA) models that map images directly to motor commands, DreamZero leverages a pretrained video diffusion backbone to predict future world states and actions simultaneously.

- achieves 2× better zero-shot generalization to unseen tasks and environments compared to state-of-the-art VLAs.
- learns effectively from heterogeneous, non-repetitive data (500 hours), breaking the need for thousands of repeated demonstrations.
- adapts to new robot embodiments with just 30 minutes of play data.
- enables 7Hz closed-loop control via system optimizations and "DreamZero-Flash," making high-capacity diffusion models viable for real-time use.
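For readers who want the idea in code: the post describes a model that predicts future world states and actions together and runs in a 7Hz closed loop. The toy sketch below only illustrates that control pattern. It is not NVIDIA's code or API. `toy_world_action_model`, `Prediction`, and `closed_loop` are hypothetical names, the "frames" are plain lists of floats, and the dynamics are made up for illustration.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple

CONTROL_HZ = 7                     # closed-loop rate quoted in the post
STEP_BUDGET_S = 1.0 / CONTROL_HZ   # roughly 0.143 s available per control step


@dataclass
class Prediction:
    future_frames: List[List[float]]  # stand-in for predicted video frames
    actions: List[List[float]]        # stand-in for motor commands


def toy_world_action_model(frame: List[float], horizon: int = 4,
                           action_dim: int = 2, seed: int = 0) -> Prediction:
    """Hypothetical stand-in for a world action model.

    Returns future frames AND actions from one call, mirroring the
    'joint video-and-action prediction' framing. Dynamics are invented.
    """
    rng = random.Random(seed)
    frames, actions = [], []
    current = list(frame)
    for _ in range(horizon):
        action = [rng.uniform(-1.0, 1.0) for _ in range(action_dim)]
        shift = sum(action) / action_dim          # toy effect of the action
        current = [p + shift for p in current]    # toy "next frame"
        frames.append(current)
        actions.append(action)
    return Prediction(future_frames=frames, actions=actions)


def closed_loop(initial_frame: List[float],
                steps: int = 3) -> Tuple[List[List[float]], List[float]]:
    """Re-plan every step: predict a horizon, execute only the first action."""
    frame = list(initial_frame)
    executed = []
    for t in range(steps):
        pred = toy_world_action_model(frame, seed=t)
        executed.append(pred.actions[0])
        # Assumption: a real system would read a new camera frame here;
        # the toy simply reuses the model's first predicted frame.
        frame = pred.future_frames[0]
    return executed, frame


if __name__ == "__main__":
    actions, final_frame = closed_loop([0.0, 0.0, 0.0])
    print(f"per-step budget: {STEP_BUDGET_S:.3f} s")
    print(f"executed {len(actions)} actions")
```

The point of the pattern is that each prediction has to fit inside the per-step budget for the loop to stay closed, which is why the post singles out the 7Hz figure.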

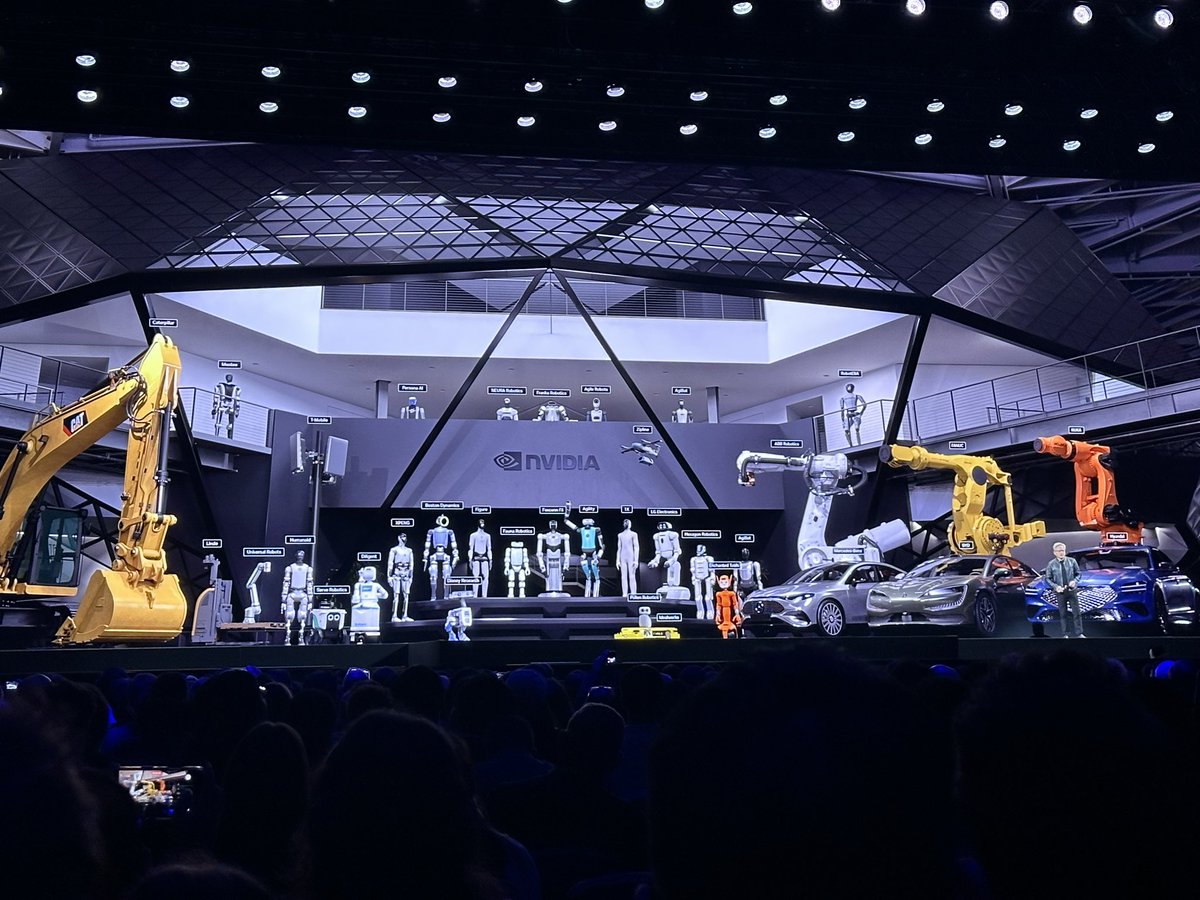

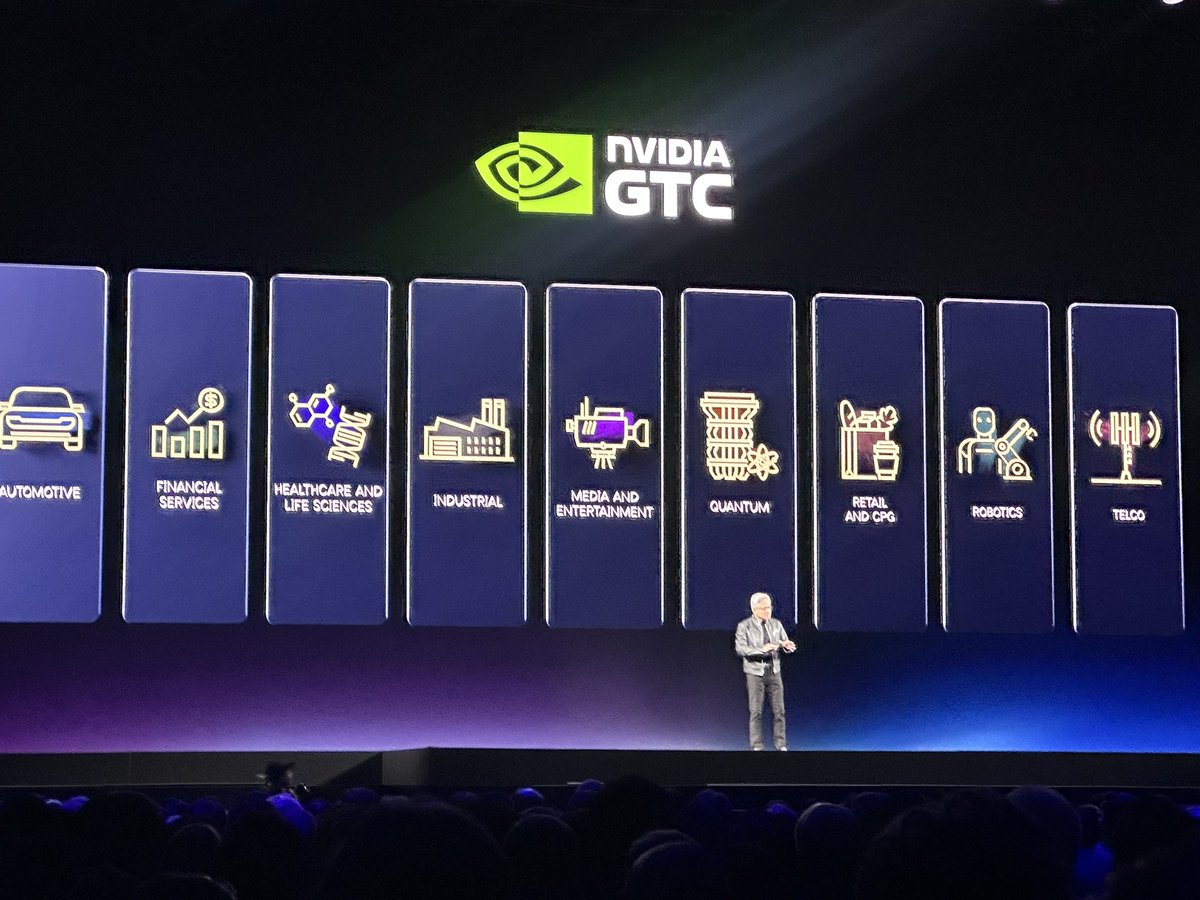

Nvidia targets data center revenue of $1+ trillion for 2025-2027. That’s already quite ridiculous, with the AI physical world only in its zeroth innings. $NVDA

I’ll be at GTC this week, here with my friend Devang (@TheHumanoidHub), looking forward to seeing great sessions on robotics. Waiting for Jensen right now. One session I’m especially curious about today: ‘From Concept to Production: Humanoid Robotics at Scale’. nvidia.com/gtc/session-ca… With @pathak2206 (Skild AI), @chelseabfinn (Stanford / Physical Intelligence), Pras Velagapudi (Agility Robotics), and Amit Goel from @Nvidia. Humanoid robotics needs to move from research to production. Let’s see what the people actually building it have to say…