Pinned Tweet

Vectorize

764 posts

@Vectorizeio

Makers of Hindsight. Agent memory that lets your agents learn over time. https://t.co/9U8TmRzNHf

Boulder, CO · Joined December 2023

257 Following · 603 Followers

What is Semantic Agent Memory in AI Agents?

Semantic agent memory refers to how AI agents store, organize, and recall information so they can behave more intelligently over time. This image shows three common approaches.

Vector memory is the classic RAG-style setup: raw data is extracted, broken into chunks, converted into embeddings, and stored in a vector database. The agent then retrieves semantically similar chunks when needed.

Structured memory uses a relational database instead of embeddings. Information is stored in clean, well-defined tables (such as users, tasks, and events), allowing the agent to perform precise lookups, filtering, and reasoning based on explicit fields.

Graph memory goes a step further by extracting entities and their relationships, such as “Alice → works_at → Google”, and storing them in a graph database. This enables richer reasoning across connected facts.

Each approach has its strengths: vectors for fuzzy recall, structured databases for precision, and graphs for relationship-aware reasoning. Together, they represent how modern agents build long-term understanding.
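To make the three approaches concrete, here is a minimal standard-library Python sketch of each. It is an illustration under loud assumptions, not Hindsight's API: the bag-of-words `embed` function is a toy stand-in for a real embedding model, a plain list stands in for a vector database, the `tasks` table schema is hypothetical, and a dictionary of edges stands in for a graph database.

```python
import math
import sqlite3
from collections import defaultdict


# --- Vector memory: fuzzy recall by similarity ---------------------------
def embed(text: str) -> dict[str, float]:
    """Toy bag-of-words vector; a stand-in for a real embedding model."""
    counts: dict[str, float] = defaultdict(float)
    for word in text.lower().split():
        counts[word.strip(".,!?")] += 1.0
    return dict(counts)


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(value * b.get(key, 0.0) for key, value in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorMemory:
    def __init__(self) -> None:
        self.chunks: list[tuple[str, dict[str, float]]] = []

    def add(self, chunk: str) -> None:
        self.chunks.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 1) -> list[str]:
        """Return the k stored chunks most similar to the query."""
        q = embed(query)
        ranked = sorted(self.chunks, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


# --- Structured memory: precise lookups on explicit fields ---------------
class StructuredMemory:
    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")
        self.db.execute(
            "CREATE TABLE tasks (id INTEGER PRIMARY KEY, owner TEXT, title TEXT, status TEXT)"
        )

    def add_task(self, owner: str, title: str, status: str) -> None:
        self.db.execute(
            "INSERT INTO tasks (owner, title, status) VALUES (?, ?, ?)", (owner, title, status)
        )

    def open_tasks(self, owner: str) -> list[str]:
        """Exact filtering on columns; no similarity scoring involved."""
        rows = self.db.execute(
            "SELECT title FROM tasks WHERE owner = ? AND status = 'open' ORDER BY id", (owner,)
        )
        return [title for (title,) in rows]


# --- Graph memory: relationship-aware reasoning ---------------------------
class GraphMemory:
    def __init__(self) -> None:
        # subject -> list of (relation, object) edges
        self.edges: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.edges[subject].append((relation, obj))

    def follow(self, start: str, relations: list[str]) -> list[str]:
        """Walk a chain of relations, one hop per relation name."""
        frontier = [start]
        for relation in relations:
            frontier = [o for node in frontier for r, o in self.edges.get(node, []) if r == relation]
        return frontier
```

For example, after adding `("Alice", "works_at", "Google")` and `("Google", "headquartered_in", "Mountain View")`, calling `follow("Alice", ["works_at", "headquartered_in"])` chains two stored facts, which is the kind of connected reasoning a single similarity lookup does not perform on its own.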

Learn more about agentic memory with Hindsight: hindsight.vectorize.io

GIF

Do you know how AI agents learn?

Usually, AI agents learn by turning everyday interactions into long-term understanding. Let's go a little deeper. As agents operate, they talk to users, attempt tasks, succeed or fail, and receive feedback. All these experiences flow into a memory layer, such as a Hindsight memory bank, that stores rich agent memories beyond simple chat history.

Using this stored context, agents perform reflection: they revisit past messages, detect patterns, understand user preferences, and analyze why certain tasks failed. This reflection helps them build stronger mental models, which represent their evolving understanding of users, goals, and responsibilities. These mental models improve over time as agents accumulate new experiences, allowing them to plan better, adapt faster, and avoid repeating mistakes.
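The record-then-reflect loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how Hindsight implements reflection: the `Experience` schema, the `"prefers:"` feedback convention, and the failure-count heuristic are all hypothetical, and the simple string rules stand in for the LLM-driven analysis a real memory layer would more plausibly use.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Experience:
    """One thing the agent went through (hypothetical schema)."""
    kind: str                      # "message", "task", or "feedback"
    content: str
    outcome: Optional[str] = None  # "success" or "failure" for tasks


@dataclass
class MentalModel:
    """The agent's current understanding, derived from its experiences."""
    preferences: list[str] = field(default_factory=list)
    failure_counts: Counter = field(default_factory=Counter)

    def repeated_failures(self, threshold: int = 2) -> list[str]:
        """Tasks that failed often enough to warrant a different approach."""
        return [task for task, n in self.failure_counts.items() if n >= threshold]


class MemoryBank:
    def __init__(self) -> None:
        self.experiences: list[Experience] = []  # richer than a raw chat transcript
        self.model = MentalModel()

    def record(self, kind: str, content: str, outcome: Optional[str] = None) -> None:
        self.experiences.append(Experience(kind, content, outcome))

    def reflect(self) -> MentalModel:
        """Revisit every stored experience and rebuild the mental model."""
        model = MentalModel()
        for exp in self.experiences:
            if exp.kind == "feedback" and exp.content.startswith("prefers:"):
                preference = exp.content.removeprefix("prefers:").strip()
                if preference not in model.preferences:
                    model.preferences.append(preference)
            elif exp.kind == "task" and exp.outcome == "failure":
                model.failure_counts[exp.content] += 1
        self.model = model
        return model
```

Rebuilding the model from the full log on every `reflect()` call keeps the sketch easy to follow; a system with long histories would more likely update the model incrementally or reflect over a recent window.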

Ultimately, a memory layer like Hindsight transforms raw interactions into structured knowledge, enabling agents to become more capable and personalized with every task they perform.

Why not give Hindsight a try?

Try Hindsight today: hindsight.vectorize.io/developer/api/…

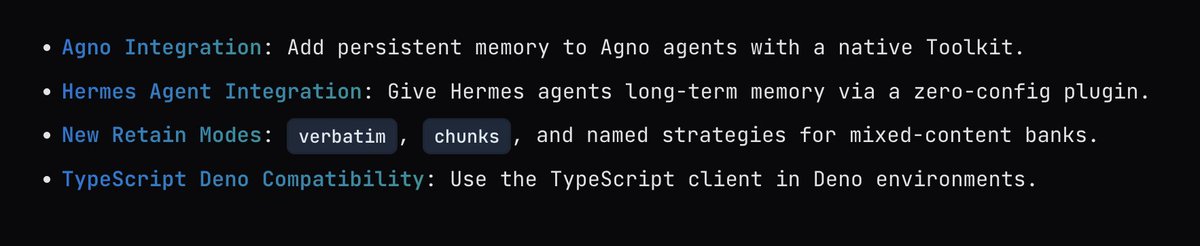

Read more about our @AgnoAgi and @NousResearch Hermes-Agent integrations and much more here: hindsight.vectorize.io/blog/2026/03/1…

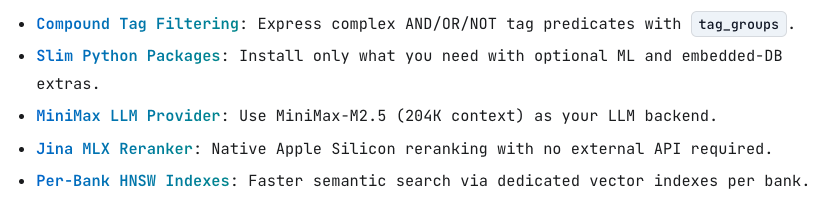

Read all the details here: hindsight.vectorize.io/blog/2026/03/1…

Hindsight: The Future of AI Agent Memory Beyond Vector Databases by @TechlatestNet towardsdev.com/hindsight-the-…

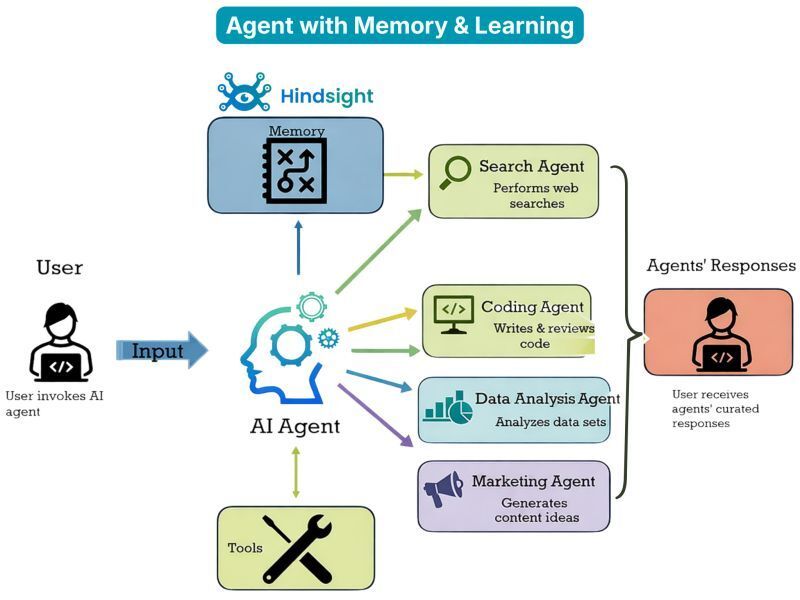

Modern AI agents are evolving beyond simple input-output systems, and Hindsight™ is leading that evolution.

When a user invokes an AI agent, it doesn't just process the request in isolation — it draws from a persistent memory layer powered by Hindsight™, the world's most advanced agent memory system. Unlike traditional RAG-based approaches, Hindsight™ enables agents to recall facts, remember previous interactions, and genuinely reflect on past experiences to improve over time — much like human memory.

Armed with this intelligence, the central AI agent seamlessly orchestrates specialized sub-agents: a Search Agent for web queries, a Coding Agent for writing and reviewing code, a Data Analysis Agent for interpreting datasets, and a Marketing Agent for generating creative content ideas. The agent also leverages integrated tools to execute tasks efficiently.
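The orchestration pattern above (recall memory, pick a sub-agent, act, then remember) can be sketched as follows. This is a hypothetical Python illustration, not Hindsight's or any framework's API: the sub-agents are placeholder functions named after the Search, Coding, Data Analysis, and Marketing agents in the post, the keyword routing table is an assumption made for determinism (many orchestrators let an LLM choose the sub-agent instead), and a plain dictionary stands in for the persistent memory layer.

```python
from typing import Callable

SubAgent = Callable[[str, list[str]], str]


def make_agent(name: str) -> SubAgent:
    """Build a placeholder sub-agent; a real one would call an LLM or tools."""
    def agent(task: str, context: list[str]) -> str:
        notes = "; ".join(context) if context else "none"
        return f"[{name}] {task} | memory: {notes}"
    return agent


search_agent = make_agent("search")
coding_agent = make_agent("coding")
data_agent = make_agent("data")
marketing_agent = make_agent("marketing")

# Hypothetical keyword routing table, checked in order.
ROUTES: list[tuple[tuple[str, ...], SubAgent]] = [
    (("search", "find", "look up"), search_agent),
    (("code", "bug", "function", "review"), coding_agent),
    (("dataset", "csv", "analyze", "chart"), data_agent),
    (("campaign", "slogan", "marketing"), marketing_agent),
]


class Orchestrator:
    def __init__(self, memory: dict[str, list[str]]) -> None:
        self.memory = memory  # user_id -> remembered facts; stands in for a memory layer

    def route(self, task: str) -> SubAgent:
        lowered = task.lower()
        for keywords, agent in ROUTES:
            if any(keyword in lowered for keyword in keywords):
                return agent
        return search_agent  # fallback when no keyword matches

    def handle(self, user_id: str, task: str) -> str:
        context = list(self.memory.get(user_id, []))  # recall before acting
        answer = self.route(task)(task, context)
        self.memory.setdefault(user_id, []).append(f"asked: {task}")  # remember afterwards
        return answer
```

Because `handle` writes each request back into memory, a second call for the same user already sees the first request in its context, which is a very small version of "smarter every time".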

The result? Curated, context-aware responses delivered back to the user — smarter every time, thanks to Hindsight™.

Learn more about Hindsight: github.com/vectorize-io/h…

Don't build AI agents and agentic applications without a memory layer.

Conversations reset every time, limiting long-term context, personalization, and reasoning.

AI agents forget everything between sessions. Every conversation starts from zero, with no context about who you are, what you've discussed, or what the assistant has learned. This isn't just an implementation detail; it fundamentally limits what AI agents can do. This is exactly where most AI agents fail to scale.
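A minimal Python sketch of the difference: a session that keeps only in-process chat history loses everything when it ends, while a session backed by a persistent store can load what earlier sessions learned. `PersistentMemory` and `Session` are hypothetical names, and the JSON file is an illustrative stand-in for a memory layer such as Hindsight, not its API; a real layer would add retrieval, ranking, and reflection on top.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


class PersistentMemory:
    """Hypothetical file-backed store standing in for a real memory layer."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def _read(self) -> dict[str, list[str]]:
        return json.loads(self.path.read_text()) if self.path.exists() else {}

    def load(self, user_id: str) -> list[str]:
        return self._read().get(user_id, [])

    def remember(self, user_id: str, fact: str) -> None:
        data = self._read()
        data.setdefault(user_id, []).append(fact)
        self.path.write_text(json.dumps(data))


class Session:
    def __init__(self, user_id: str, memory: Optional[PersistentMemory] = None) -> None:
        self.user_id = user_id
        self.memory = memory
        self.history: list[str] = []  # chat history: gone when the session ends
        # Without a memory layer, every session starts from zero.
        self.known = memory.load(user_id) if memory is not None else []

    def tell(self, fact: str) -> None:
        self.history.append(fact)
        if self.memory is not None:
            self.memory.remember(self.user_id, fact)


if __name__ == "__main__":
    store = PersistentMemory(Path(tempfile.mkdtemp()) / "memory.json")
    Session("alice", store).tell("Works in Boulder, prefers short answers.")
    print(Session("alice", store).known)  # ['Works in Boulder, prefers short answers.']
    print(Session("alice").known)         # []
```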

You need an agentic memory layer like Hindsight to overcome the context problems your AI agents face.

Try Hindsight: github.com/vectorize-io/h…
