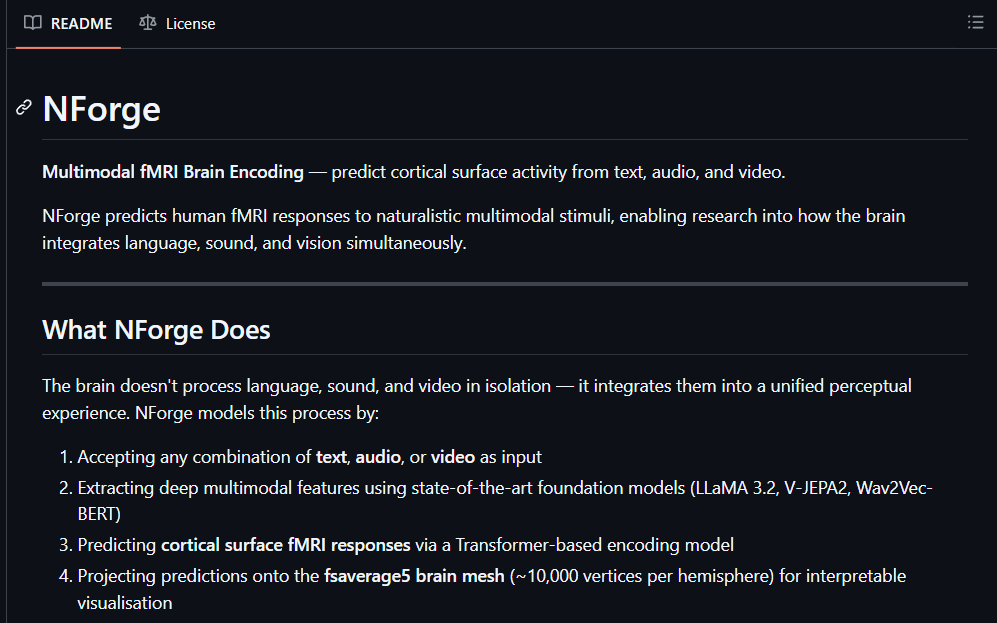

🚨Science nerds are going to lose their minds.

@Kaiwritescodes just open-sourced a framework that predicts how your brain responds to any text, audio, or video by simulating cortical fMRI activity, with a claimed 30% more accuracy than Meta's own model.

No fMRI scanner. No neuroscience PhD. No million-dollar lab.

It's called NForge.

Here's what this thing actually does:

→ Feed it any combination of text, audio, or video and it predicts cortical surface activity across ~20,484 brain vertices
→ Extracts deep features via LLaMA 3.2, V-JEPA2, and Wav2Vec-BERT simultaneously
→ Generates ROI attention maps showing exactly which brain regions fire hardest at which moments
→ Runs real-time streaming predictions from live feature streams, with no need to pre-load the full clip
→ Breaks down exactly how much text vs audio vs video drove each prediction, with per-vertex modality attribution scores
→ Adapts to entirely new subjects with just a few calibration scans, with no full retraining required

Here's the wildest part:

It's built on Meta's TRIBE v2 foundation but adds 6 major capabilities Meta never shipped: cross-subject generalization, streaming inference, modality attribution, torch.compile support, full test coverage, and a professional src/ package layout.

You literally point this at a movie clip and it tells you which parts of the human cortex light up, broken down by what your eyes, ears, and language centers each contributed.

That sentence shouldn't be real in 2026. But here we are.

100% Open Source. pip install nforge.

(Link in the comments)
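For anyone wondering what "per-vertex modality attribution" can look like in practice, here's a toy sketch. To be clear, this is not NForge's code or its API: the linear model, the `predict` and `modality_attribution` functions, and all the numbers are made up for illustration. It uses a simple ablation approach (zero out one modality, see how much each vertex's prediction moves), which is one common way to do attribution and may well differ from what the project actually does.

```python
from typing import Dict, List

# Hypothetical toy setup, NOT the NForge API.
Features = Dict[str, List[float]]        # modality name -> feature vector
Weights = Dict[str, List[List[float]]]   # modality name -> [n_vertices][n_features]


def predict(features: Features, weights: Weights) -> List[float]:
    """Toy linear 'encoder': each vertex sums weighted features over all modalities."""
    n_vertices = len(next(iter(weights.values())))
    out = [0.0] * n_vertices
    for name, w in weights.items():
        x = features[name]
        for v in range(n_vertices):
            out[v] += sum(wi * xi for wi, xi in zip(w[v], x))
    return out


def modality_attribution(features: Features, weights: Weights) -> Dict[str, List[float]]:
    """Ablation-based attribution: zero one modality at a time and measure
    how far each vertex's prediction moves, then normalize to shares per vertex."""
    full = predict(features, weights)
    drops: Dict[str, List[float]] = {}
    for name in features:
        ablated = dict(features)
        ablated[name] = [0.0] * len(features[name])
        without = predict(ablated, weights)
        drops[name] = [abs(f - w) for f, w in zip(full, without)]

    n_vertices = len(full)
    scores = {name: [0.0] * n_vertices for name in features}
    for v in range(n_vertices):
        total = sum(drops[name][v] for name in features)
        if total > 0:  # leave all-zero shares if no modality moved this vertex
            for name in features:
                scores[name][v] = drops[name][v] / total
    return scores


# Two made-up vertices and tiny made-up feature vectors.
weights = {
    "text": [[1.0, 0.0], [0.0, 0.0]],
    "audio": [[0.0], [2.0]],
    "video": [[1.0], [2.0]],
}
features = {"text": [3.0, 5.0], "audio": [1.0], "video": [1.0]}

scores = modality_attribution(features, weights)
for name, per_vertex in scores.items():
    print(name, per_vertex)
```

In this toy case the first vertex is driven mostly by text and the second is split evenly between audio and video. A real multimodal model is nonlinear, so ablation shares there are only an approximation, and gradient-based methods are a common alternative.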