Pinned Tweet

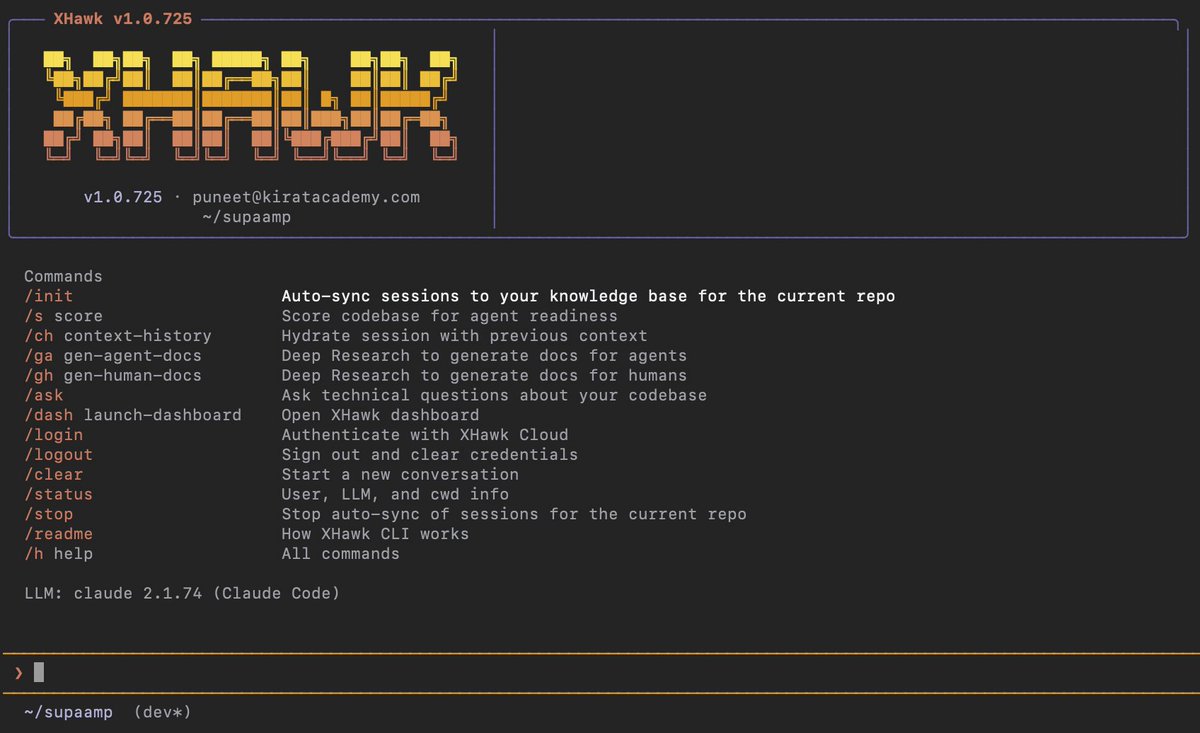

Your AI agents need a boss.

Batty supervises AI coding agents so you don't have to babysit them. Kanban in, tested code out. Terminal-native, tmux-powered, test-gated.

Works with Claude Code, Codex, and Aider.

Demo: youtube.com/watch?v=2wmBcU…

GitHub: github.com/battysh/batty
