Charmaine Cunningham retweeted

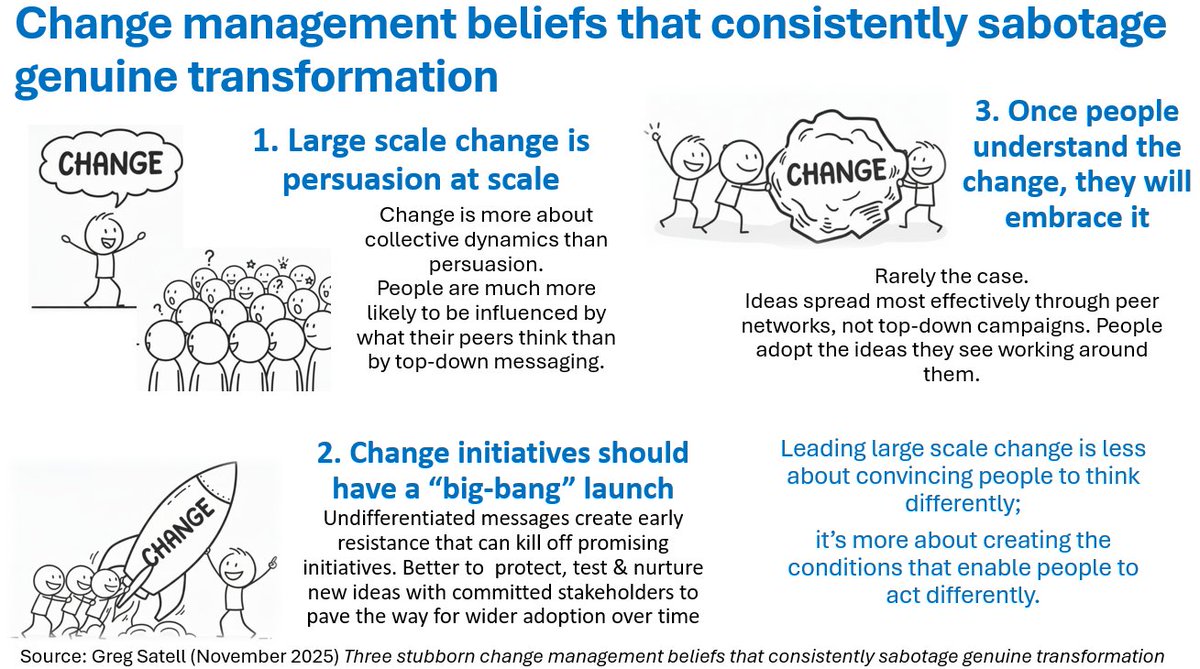

Peer influence is the single most powerful driver of change adoption.

It’s something those of us working in change practice have long known: the trusted colleague who says "this worked for us"; the visible shift in practice from someone others respect; the informal chat that carries more weight than the top-down directive. Peer influence is change experienced through relationships, not instruction. And guess what? New research from @Microsoft (Nancy Baym & colleagues) confirms that peer influence is also the most important enabling factor in AI rollout.

AI adoption is stalling. Uncertainty makes people choose caution over experimentation, so learning goes underground. When they see trusted colleagues use AI, adapt it to real work & share learning, they’re far more likely to regularly use it themselves. Without social proof, even the best infrastructure & training can’t drive progress.

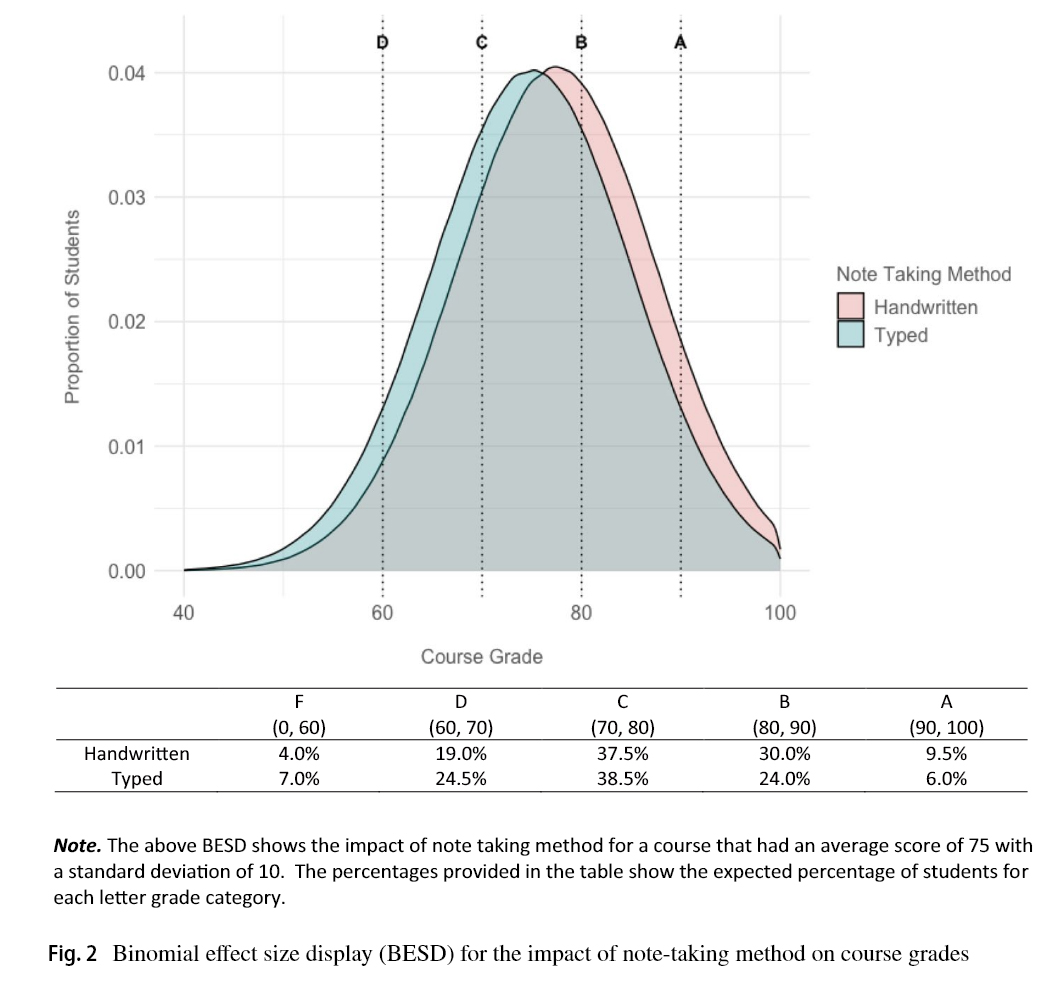

The research data shows the importance of peer influence in AI rollout. A one standard deviation increase in positive peer influence raises the likelihood of being a heavy AI user by 8.9 percentage points, & increases the probability of using AI agents by 10.4 percentage points. Leadership mandates have no direct effect on usage once peer influence is accounted for.

The conditions for change are clearer than ever. Culture, psychological safety & trusting relationships aren't "soft" enablers - they’re the powerful mechanisms through which change spreads. In their absence, even the best technology investments fail to scale.

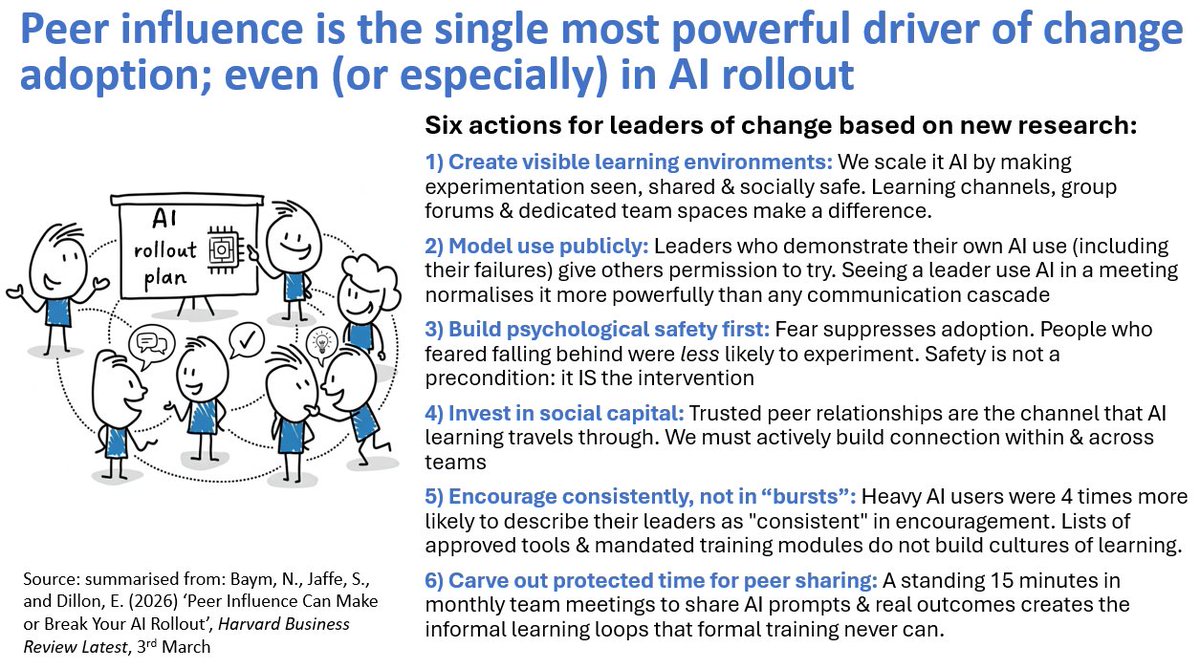

Six actions for leaders of change:

1. Create visible learning environments: We can’t scale AI by urging adoption. We scale it by making experimentation seen, shared & socially safe. Learning channels, group forums & dedicated team spaces can make a big difference.

2. Model use publicly: Leaders who demonstrate their own AI use (including their failures) give others permission to try. Seeing a leader use AI in a meeting normalises it more powerfully than any communication cascade.

3. Build psychological safety first: Fear suppresses adoption. People who feared falling behind were less likely to experiment. Safety is not a precondition: it IS the intervention.

4. Invest in social capital: Trusted peer relationships are the channels that AI learning travels through. We must actively build connection within & across teams.

5. Encourage consistently, not in “bursts”: Heavy AI users were 4 times more likely to describe their leaders as "consistent" in encouragement. Lists of approved tools & mandated training modules do not build cultures of learning.

6. Carve out protected time for peer sharing: A standing 15 minutes in monthly team meetings to share AI prompts & real outcomes creates the informal learning loops that formal training never can.

The research confirms what change practice has long taught us: people change through their relationships, not through policy. AI rollout is just the latest, most visible proof.

hbr.org/2026/03/peer-i…
