dev_nam


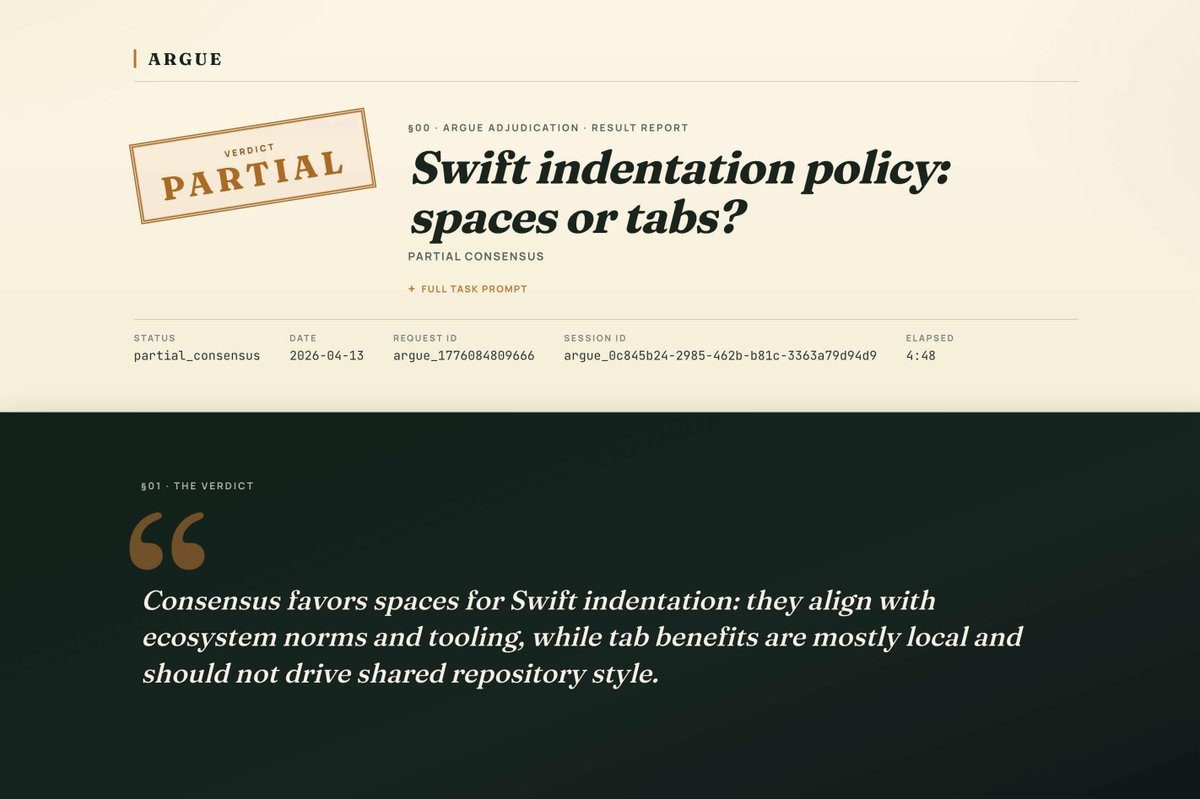

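Formally introducing the project I've been working on these past few days: argue.
"Listen to all sides and you will be enlightened; heed only one and you will stay in the dark."
argue is a tool that has multiple AI agents debate the same question. The agents you configure (any combination of Claude / Codex / Gemini / OpenCode and others) first give their opinions independently, then pick apart each other's arguments, merge positions, and vote, and finally hand you a conclusion with evidence, disagreements, and a confidence score. Lately I've been using it for most of my key technical design reviews, code reviews, and important decisions. It suits situations where you want more perspectives.
Two highlights in the just-released v0.3.0:
📰 argue view: after any debate finishes, you can open a nicely typeset report in the browser with one click. The entire result is packed into the URL fragment, so hosting is purely static with zero backend. Sharing the link is sharing the report, and anyone can view it.
🧩 Skill support: install argue directly as a skill in the agent you use every day, so the agent can invoke argue on its own when it needs multi-party deliberation, giving you a smooth workflow.
Open source under MIT, installable with a single npm command. Come try it out, and stars are welcome: github.com/onevcat/argue/…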

Built Coal (usecoal.xyz) on @base: payment rails for AI agents using x402 + USDC settlement. MCP server, price oracle, agent-discoverable stores. All live on Base mainnet. $150 bounty open for builders 👇 x.com/emmanuel_haank… @Lady_Light_Lsk @base @BasedSouthernAF #BuildWithCoal #0GHackathon #BuildOnBase #BaseAfrica #BuildOn0G @0G_labs @0g_CN @0g_Eco @HackQuest_